Simultaneous equations model

Simultaneous equations models are statistical models that take the form of a set of linear simultaneous equations. They are often used in econometrics.

Structural and reduced form

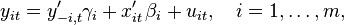

Suppose there are m regression equations of the form

$$ y_{it} = y_{-i,t}'\gamma_i + x_{it}'\beta_i + u_{it}, \quad i = 1, \ldots, m, $$

where i = 1, ..., m is the equation number, and t = 1, ..., T is the observation index. In these equations xit is the ki×1 vector of exogenous variables, yit is the dependent variable, y−i,t is the ni×1 vector of all other endogenous variables which enter the ith equation on the right-hand side, and uit are the error terms. The “−i” notation indicates that the vector y−i,t may contain any of the y’s except for yit (since it is already present on the left-hand side). The regression coefficients βi and γi are of dimensions ki×1 and ni×1, respectively. Vertically stacking the T observations corresponding to the ith equation, we can write each equation in vector form as

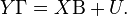

where yi and ui are T×1 vectors, Xi is a T×ki matrix of exogenous regressors, and Y−i is a T×ni matrix of endogenous regressors on the right-hand side of the ith equation. Finally, we can move all endogenous variables to the left-hand side and write the m equations jointly in vector form as

$$ Y\Gamma = X\mathrm{B} + U. $$

This representation is known as the structural form. In this equation Y = [y1 y2 ... ym] is the T×m matrix of dependent variables. Each of the matrices Y−i is in fact an ni-columned submatrix of this Y. The m×m matrix Γ, which describes the relation between the dependent variables, has a complicated structure. It has ones on the diagonal, and all other elements of each column i are either the components of the vector −γi or zeros, depending on which columns of Y were included in the matrix Y−i. The T×k matrix X contains all exogenous regressors from all equations, but without repetitions (that is, matrix X should be of full rank). Thus, each Xi is a ki-columned submatrix of X. Matrix Β has size k×m, and each of its columns consists of the components of vectors βi and zeros, depending on which of the regressors from X were included or excluded from Xi. Finally, U = [u1 u2 ... um] is a T×m matrix of the error terms.

Postmultiplying the structural equation by Γ −1, the system can be written in the reduced form as

$$ Y = X\mathrm{B}\Gamma^{-1} + U\Gamma^{-1} = X\Pi + V. $$

This is already a simple general linear model, and it can be estimated, for example, by ordinary least squares. Unfortunately, decomposing the estimated coefficient matrix Π̂ (the estimate of Π = ΒΓ −1) into the individual factors Β and Γ −1 is quite complicated, and therefore the reduced form is better suited to prediction than to inference.
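As a rough illustration of the structural and reduced forms, the following sketch simulates a hypothetical two-equation system and estimates the reduced form by ordinary least squares. The coefficient values, the sample size and the error covariance are assumptions chosen for the example, and NumPy is assumed to be available.

```python
import numpy as np

rng = np.random.default_rng(0)
T = 5000  # number of observations (assumed for the example)

# Hypothetical system with m = 2 equations and k = 2 exogenous regressors:
#   y1 =  0.5*y2 + 1.0*x1 + u1
#   y2 = -0.3*y1 + 2.0*x2 + u2
# Column i of Gamma has 1 on the diagonal and -gamma_i in the row of each
# endogenous regressor; column i of B holds beta_i padded with zeros.
Gamma = np.array([[1.0, 0.3],
                  [-0.5, 1.0]])
B = np.array([[1.0, 0.0],
              [0.0, 2.0]])

X = rng.normal(size=(T, 2))
U = rng.multivariate_normal([0.0, 0.0], [[1.0, 0.5], [0.5, 1.0]], size=T)

# Solve the structural form Y Gamma = X B + U for Y.
Gamma_inv = np.linalg.inv(Gamma)
Y = (X @ B + U) @ Gamma_inv

# Reduced form Y = X Pi + V, with Pi = B Gamma^{-1}.
Pi = B @ Gamma_inv

# Ordinary least squares on the reduced form, one column of Y per equation.
Pi_hat, *_ = np.linalg.lstsq(X, Y, rcond=None)

print("true Pi:\n", Pi)
print("OLS estimate of Pi:\n", Pi_hat)
```

In this simulation the OLS estimate should be close to Π, but it does not by itself reveal Β and Γ separately.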

Assumptions

Firstly, the rank of the matrix X of exogenous regressors must be equal to k, both in finite samples and in the limit as T → ∞ (this latter requirement means that in the limit the expression (1/T) X ′X should converge to a nondegenerate k×k matrix). Matrix Γ is also assumed to be non-degenerate.

Secondly, error terms are assumed to be serially independent and identically distributed. That is, if the tth row of matrix U is denoted by u(t), then the sequence of vectors {u(t)} should be iid, with zero mean and some covariance matrix Σ (which is unknown). In particular, this implies that E[U] = 0, and E[U′U] = T Σ.

Lastly, the identification conditions require that the number of unknowns in this system of equations not exceed the number of equations. More specifically, the order condition requires that for each equation ki + ni ≤ k, which can be phrased as “the number of excluded exogenous variables is greater than or equal to the number of included endogenous variables”. The rank condition of identifiability is that rank(Πi0) = ni, where Πi0 is a (k − ki)×ni matrix obtained from the reduced-form coefficient matrix Π = ΒΓ −1 by crossing out the columns that correspond to the excluded endogenous variables and the rows that correspond to the included exogenous variables.
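The two conditions can be checked mechanically. The sketch below is only an illustration: the function names `order_condition` and `rank_condition` are hypothetical, the example matrices are assumed values, and the rank check relies on NumPy's numerical rank.

```python
import numpy as np

def order_condition(k, k_i, n_i):
    """Classify equation i by the order condition k_i + n_i <= k.

    The order condition is necessary but not sufficient, so the labels
    'just identified' and 'overidentified' also presuppose the rank condition.
    """
    excluded_exogenous = k - k_i
    if excluded_exogenous < n_i:
        return "not identified"
    if excluded_exogenous == n_i:
        return "just identified"
    return "overidentified"

def rank_condition(Pi, excluded_exog_rows, included_endog_cols):
    """Check rank(Pi_i0) == n_i, where Pi_i0 keeps the rows of the excluded
    exogenous variables and the columns of the included endogenous regressors."""
    Pi_i0 = Pi[np.ix_(excluded_exog_rows, included_endog_cols)]
    return np.linalg.matrix_rank(Pi_i0) == len(included_endog_cols)

# An equation that includes all k = 3 exogenous variables and one endogenous regressor:
print(order_condition(k=3, k_i=3, n_i=1))  # not identified
# An equation that excludes one of the three exogenous variables:
print(order_condition(k=3, k_i=2, n_i=1))  # just identified

# Hypothetical two-equation system: equation 1 includes x1 (row 0) and has y2
# (column 1) on the right-hand side, so x2 (row 1) is its excluded regressor.
Gamma = np.array([[1.0, 0.3],
                  [-0.5, 1.0]])
B = np.array([[1.0, 0.0],
              [0.0, 2.0]])
Pi = B @ np.linalg.inv(Gamma)
print(rank_condition(Pi, excluded_exog_rows=[1], included_endog_cols=[1]))  # True
```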

Estimation

Two-stage least squares (2SLS)

The simplest and the most common[1] estimation method for the simultaneous equations model is the two-stage least squares method, developed independently by Theil (1953) and Basmann (1957). It is an equation-by-equation technique in which the endogenous regressors on the right-hand side of each equation are instrumented with the exogenous regressors X collected from all equations. The method is called “two-stage” because it conducts estimation in two steps:[2]

- Step 1: Regress Y−i on X and obtain the predicted values Ŷ−i;

- Step 2: Estimate γi, βi by the ordinary least squares regression of yi on Ŷ−i and Xi.

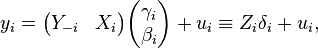

If the ith equation in the model is written as

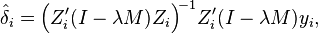

where Zi = [Y−i Xi] is a T×(ni + ki) matrix of both endogenous and exogenous regressors in the ith equation, and δi is an (ni + ki)-dimensional vector of regression coefficients, then the 2SLS estimator of δi will be given by[3]

$$ \hat\delta_i = (Z_i' P Z_i)^{-1} Z_i' P y_i, $$

where P = X (X ′X)−1X ′ is the projection matrix onto the linear space spanned by the exogenous regressors X.
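The two-step description and the closed-form expression can be compared numerically. The following is a minimal sketch on simulated data: the function names, the two-equation system and its coefficient values are assumptions made for the example, and NumPy is assumed to be available.

```python
import numpy as np

def tsls_two_stage(y_i, Y_minus_i, X_i, X):
    """2SLS for equation i, computed literally as two OLS regressions."""
    # Stage 1: regress the endogenous regressors on all exogenous regressors X.
    first_stage, *_ = np.linalg.lstsq(X, Y_minus_i, rcond=None)
    Y_hat = X @ first_stage
    # Stage 2: OLS of y_i on the fitted values and the included exogenous regressors.
    Z_hat = np.column_stack([Y_hat, X_i])
    delta, *_ = np.linalg.lstsq(Z_hat, y_i, rcond=None)
    return delta

def tsls_closed_form(y_i, Y_minus_i, X_i, X):
    """2SLS via (Z'PZ)^{-1} Z'Py, without forming the T-by-T matrix P."""
    Z = np.column_stack([Y_minus_i, X_i])
    PZ = X @ np.linalg.solve(X.T @ X, X.T @ Z)  # P Z
    return np.linalg.solve(PZ.T @ Z, PZ.T @ y_i)

# Simulated data from a hypothetical system:
#   y1 =  0.5*y2 + 1.0*x1 + u1
#   y2 = -0.3*y1 + 2.0*x2 + u2
rng = np.random.default_rng(0)
T = 5000
Gamma = np.array([[1.0, 0.3],
                  [-0.5, 1.0]])
B = np.array([[1.0, 0.0],
              [0.0, 2.0]])
X = rng.normal(size=(T, 2))
U = rng.multivariate_normal([0.0, 0.0], [[1.0, 0.5], [0.5, 1.0]], size=T)
Y = (X @ B + U) @ np.linalg.inv(Gamma)

# First equation: y1 on the endogenous regressor y2 and the exogenous regressor x1.
y1, Y_minus_1, X_1 = Y[:, 0], Y[:, [1]], X[:, [0]]
d_stage = tsls_two_stage(y1, Y_minus_1, X_1, X)
d_closed = tsls_closed_form(y1, Y_minus_1, X_1, X)
print(d_stage, d_closed)  # both estimate (gamma_1, beta_1) = (0.5, 1.0)
```

The two routes agree because Xi lies in the column space of X, so projecting Zi onto X leaves Xi unchanged and replaces Y−i with its fitted values.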

Indirect least squares

Indirect least squares is an approach in econometrics where the coefficients in a simultaneous equations model are estimated from the reduced form model using ordinary least squares.[4][5] For this, the structural system of equations is first transformed into the reduced form. Once the reduced-form coefficients have been estimated, the model is put back into the structural form.
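For a just-identified equation the mapping back to the structural form can be done by hand. The sketch below uses a hypothetical two-equation system with assumed coefficient values; it relies on the relation ΠΓ = Β, whose first column gives one linear equation per exogenous regressor.

```python
import numpy as np

# Hypothetical system:
#   y1 =  0.5*y2 + 1.0*x1 + u1   (x2 excluded)
#   y2 = -0.3*y1 + 2.0*x2 + u2   (x1 excluded)
rng = np.random.default_rng(0)
T = 5000
Gamma = np.array([[1.0, 0.3],
                  [-0.5, 1.0]])
B = np.array([[1.0, 0.0],
              [0.0, 2.0]])
X = rng.normal(size=(T, 2))
U = rng.multivariate_normal([0.0, 0.0], [[1.0, 0.5], [0.5, 1.0]], size=T)
Y = (X @ B + U) @ np.linalg.inv(Gamma)

# Step 1: estimate the reduced form Y = X Pi + V by OLS.
Pi_hat, *_ = np.linalg.lstsq(X, Y, rcond=None)

# Step 2: map back to the first structural equation using Pi Gamma = B.
# First column of that identity, written row by row:
#   row of x2 (excluded):  Pi[1, 0] - gamma12 * Pi[1, 1] = 0
#   row of x1 (included):  Pi[0, 0] - gamma12 * Pi[0, 1] = beta11
gamma12_hat = Pi_hat[1, 0] / Pi_hat[1, 1]
beta11_hat = Pi_hat[0, 0] - gamma12_hat * Pi_hat[0, 1]

print(gamma12_hat, beta11_hat)  # estimates of 0.5 and 1.0
```

When an equation is overidentified, the reduced form implies more equations than unknown structural coefficients, so this direct solve no longer gives a unique answer.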

Limited information maximum likelihood (LIML)

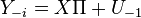

The “limited information” maximum likelihood method was suggested by Anderson & Rubin (1949). It is used when one is interested in estimating a single structural equation at a time (hence the name “limited information”), say the ith equation:

$$ y_i = Y_{-i}\gamma_i + X_i\beta_i + u_i \equiv Z_i\delta_i + u_i. $$

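The structural equations for the remaining endogenous variables Y−i are not specified, and they are given in their reduced form:

$$ Y_{-i} = X\Pi_{-i} + V_{-i}, $$

where Π−i and V−i denote the columns of the reduced-form coefficient and error matrices that correspond to Y−i.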

Notation in this context is different from that of the simple IV case. One has:

- Y−i: The endogenous variable(s).

- Xi: The exogenous variable(s).

- X: The instrument(s) (often denoted Z).

The explicit formula for the LIML is:[6]

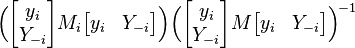

where M = I − X (X ′X)−1X ′, and λ is the smallest characteristic root of the matrix

$$ \big([y_i\ Y_{-i}]' M_i\, [y_i\ Y_{-i}]\big)\big([y_i\ Y_{-i}]' M\, [y_i\ Y_{-i}]\big)^{-1}, $$

where, in a similar way, Mi = I − Xi (Xi′Xi)−1Xi′.

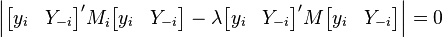

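In other words, λ is the smallest solution of the generalized eigenvalue problem (see Theil 1971, p. 503):

$$ \Big|\, [y_i\ Y_{-i}]' M_i\, [y_i\ Y_{-i}] - \lambda\, [y_i\ Y_{-i}]' M\, [y_i\ Y_{-i}] \,\Big| = 0. $$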
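A numerical sketch of these steps is given below. It is an illustration under assumptions rather than a reference implementation: the helper names `annihilate` and `liml` are hypothetical, the simulated system and its coefficients are made up for the example, and λ is obtained from NumPy's general eigenvalue routine applied to the 2×2 (in general (ni + 1)×(ni + 1)) matrix.

```python
import numpy as np

def annihilate(A, V):
    """Return M_A V = V - A (A'A)^{-1} A'V without forming the T-by-T matrix M_A."""
    return V - A @ np.linalg.solve(A.T @ A, A.T @ V)

def liml(y_i, Y_minus_i, X_i, X):
    """LIML for equation i: returns (lambda, delta_hat)."""
    W = np.column_stack([y_i, Y_minus_i])
    A = W.T @ annihilate(X_i, W)   # [y_i Y_-i]' M_i [y_i Y_-i]
    Bm = W.T @ annihilate(X, W)    # [y_i Y_-i]' M   [y_i Y_-i]
    # Roots of |A - lambda*Bm| = 0 are the eigenvalues of Bm^{-1} A.
    lam = np.min(np.linalg.eigvals(np.linalg.solve(Bm, A)).real)
    Z = np.column_stack([Y_minus_i, X_i])
    ZkM = Z - lam * annihilate(X, Z)   # (I - lambda*M) Z
    delta = np.linalg.solve(ZkM.T @ Z, ZkM.T @ y_i)
    return lam, delta

# Simulated data from a hypothetical system with k = 3 exogenous regressors:
#   y1 =  0.5*y2 + 1.0*x1 + u1            (x2 and x3 excluded: overidentified)
#   y2 = -0.3*y1 + 2.0*x2 + 1.0*x3 + u2
rng = np.random.default_rng(0)
T = 5000
Gamma = np.array([[1.0, 0.3],
                  [-0.5, 1.0]])
B = np.array([[1.0, 0.0],
              [0.0, 2.0],
              [0.0, 1.0]])
X = rng.normal(size=(T, 3))
U = rng.multivariate_normal([0.0, 0.0], [[1.0, 0.5], [0.5, 1.0]], size=T)
Y = (X @ B + U) @ np.linalg.inv(Gamma)

lam, delta = liml(Y[:, 0], Y[:, [1]], X[:, [0]], X)
print(lam)    # never below 1, since M_i - M is positive semidefinite
print(delta)  # estimates (gamma_1, beta_1) = (0.5, 1.0)
```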

K-class estimators

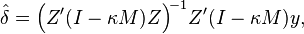

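The LIML is a special case of the K-class estimators:[7]

$$ \hat\delta = \big(Z'(I - \kappa M)Z\big)^{-1} Z'(I - \kappa M)\,y, $$

with M = I − X (X ′X)−1X ′ as above, and with Z, y and δ standing for Zi, yi and δi of the equation being estimated.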

Several estimators belong to this class:

- κ=0: OLS

- κ=1: 2SLS. Note indeed that in this case, I − κM = I − M = P, the usual projection matrix of the 2SLS

- κ=λ: LIML

- κ=λ − α/(n − K): Fuller (1977) estimator. Here K represents the number of instruments, n the sample size, and α a positive constant to specify. A value of α=1 will yield an estimator that is approximately unbiased.[8]
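The first two special cases can be verified numerically. The sketch below is illustrative only: `k_class` is a hypothetical helper name, and the simulated system with its coefficient values is an assumption made for the example.

```python
import numpy as np

def k_class(y_i, Y_minus_i, X_i, X, kappa):
    """K-class estimate (Z'(I - kappa*M)Z)^{-1} Z'(I - kappa*M)y for equation i."""
    Z = np.column_stack([Y_minus_i, X_i])
    MZ = Z - X @ np.linalg.solve(X.T @ X, X.T @ Z)  # M Z, without forming M
    W = Z - kappa * MZ                              # (I - kappa*M) Z
    return np.linalg.solve(W.T @ Z, W.T @ y_i)

# Simulated data from a hypothetical system with k = 3 exogenous regressors:
#   y1 =  0.5*y2 + 1.0*x1 + u1
#   y2 = -0.3*y1 + 2.0*x2 + 1.0*x3 + u2
rng = np.random.default_rng(0)
T = 2000
Gamma = np.array([[1.0, 0.3],
                  [-0.5, 1.0]])
B = np.array([[1.0, 0.0],
              [0.0, 2.0],
              [0.0, 1.0]])
X = rng.normal(size=(T, 3))
U = rng.multivariate_normal([0.0, 0.0], [[1.0, 0.5], [0.5, 1.0]], size=T)
Y = (X @ B + U) @ np.linalg.inv(Gamma)
y1, Y_minus_1, X_1 = Y[:, 0], Y[:, [1]], X[:, [0]]

# Reference estimates computed directly.
Z1 = np.column_stack([Y_minus_1, X_1])
ols, *_ = np.linalg.lstsq(Z1, y1, rcond=None)
PZ = X @ np.linalg.solve(X.T @ X, X.T @ Z1)
tsls = np.linalg.solve(PZ.T @ Z1, PZ.T @ y1)

k0 = k_class(y1, Y_minus_1, X_1, X, kappa=0.0)
k1 = k_class(y1, Y_minus_1, X_1, X, kappa=1.0)
print(k0, ols)   # kappa = 0 reproduces OLS
print(k1, tsls)  # kappa = 1 reproduces 2SLS
```

Passing κ = λ from the LIML eigenvalue problem, or κ = λ − α/(n − K), to the same function would give the LIML and Fuller estimates, respectively.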

Three-stage least squares (3SLS)

The three-stage least squares estimator was introduced by Zellner & Theil (1962). It combines two-stage least squares (2SLS) with seemingly unrelated regressions (SUR).

Using cross-equation restrictions to achieve identification

In simultaneous equations models, the most common method to achieve identification is to impose within-equation parameter restrictions.[9] Yet identification is also possible using cross-equation restrictions.

To illustrate how cross-equation restrictions can be used for identification, consider the following example from Wooldridge:[9]

y1 = γ12 y2 + δ11 z1 + δ12 z2 + δ13 z3 + u1

y2 = γ21 y1 + δ21 z1 + δ22 z2 + u2

where the z’s are uncorrelated with the u’s and the y’s are endogenous variables. Without further restrictions, the first equation is not identified because there is no excluded exogenous variable. The second equation is just identified if δ13≠0, which is assumed to be true for the rest of the discussion.

Now we impose the cross-equation restriction δ12=δ22. Since the second equation is identified, so is δ22, and we can therefore treat δ12 as known for the purpose of identification. Then, the first equation becomes:

y1 - δ12 z2 = γ12 y2 + δ11 z1 + δ13 z3 + u1

Then, we can use (z1,z2,z3) as instruments to estimate the coefficients in the above equation, since there is one endogenous variable (y2) on the right-hand side and one exogenous variable (z2) excluded from it. Therefore, cross-equation restrictions can achieve identification in place of within-equation restrictions.
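The argument can be tried out on simulated data. The sketch below is one possible two-step illustration, not the procedure from Wooldridge: the parameter values, the sample size and the helper `tsls` are assumptions, and the estimate of δ22 from the second equation is simply plugged into the first.

```python
import numpy as np

def tsls(y, Z, X):
    """2SLS estimate (Z'PZ)^{-1} Z'Py, with P the projection onto the columns of X."""
    PZ = X @ np.linalg.solve(X.T @ X, X.T @ Z)
    return np.linalg.solve(PZ.T @ Z, PZ.T @ y)

rng = np.random.default_rng(0)
T = 50_000

# Hypothetical parameter values satisfying delta12 == delta22.
g12, d11, d12, d13 = 0.5, 1.0, 0.8, 1.5
g21, d21, d22 = -0.3, 0.7, 0.8

# Structural form Y Gamma = Z B + U for the two equations of the example.
Gamma = np.array([[1.0, -g21],
                  [-g12, 1.0]])
B = np.array([[d11, d21],
              [d12, d22],
              [d13, 0.0]])
Zx = rng.normal(size=(T, 3))  # exogenous z1, z2, z3
U = rng.multivariate_normal([0.0, 0.0], [[1.0, 0.5], [0.5, 1.0]], size=T)
Y = (Zx @ B + U) @ np.linalg.inv(Gamma)
y1, y2 = Y[:, 0], Y[:, 1]
z1, z2, z3 = Zx.T

# Step 1: the second equation is identified because z3 is excluded from it.
g21_hat, d21_hat, d22_hat = tsls(y2, np.column_stack([y1, z1, z2]), Zx)

# Step 2: impose delta12 = delta22 and move the z2 term to the left-hand side,
# so that z2 becomes an excluded exogenous variable in the first equation.
y1_adj = y1 - d22_hat * z2
g12_hat, d11_hat, d13_hat = tsls(y1_adj, np.column_stack([y2, z1, z3]), Zx)

print(g12_hat, d11_hat, d13_hat)  # estimates of 0.5, 1.0 and 1.5
```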

See also

- General linear model

- Seemingly unrelated regressions

- Indirect least squares

- Reduced form

- Parameter identification problem

Notes

- ↑ Greene (2003, p. 398)

- ↑ Greene (2003, p. 399)

- ↑ Greene (2003, p. 399)

- ↑ Park, S-B. (1974) "On Indirect Least Squares Estimation of a Simultaneous Equation System", The Canadian Journal of Statistics / La Revue Canadienne de Statistique, 2 (1), 75–82 JSTOR 3314964

- ↑ Vajda, S.; Valko, P.; Godfrey, K.R. (1987). "Direct and indirect least squares methods in continuous-time parameter estimation". Automatica 23 (6): 707–718. doi:10.1016/0005-1098(87)90027-6.

- ↑ Amemiya (1985, p. 235)

- ↑ Davidson & MacKinnon (1993, p. 649)

- ↑ Davidson & MacKinnon (1993, p. 649)

- ↑ Wooldridge, J.M., Econometric Analysis of Cross Section and Panel Data, MIT Press, Cambridge, Mass.

References

- Amemiya, Takeshi (1985). Advanced econometrics. Cambridge, Massachusetts: Harvard University Press. ISBN 0-674-00560-0.

- Anderson, T.W.; Rubin, H. (1949). "Estimator of the parameters of a single equation in a complete system of stochastic equations". Annals of Mathematical Statistics 20 (1): 46–63. doi:10.1214/aoms/1177730090. JSTOR 2236803.

- Basmann, R.L. (1957). "A generalized classical method of linear estimation of coefficients in a structural equation". Econometrica 25 (1): 77–83. doi:10.2307/1907743. JSTOR 1907743.

- Davidson, Russell; MacKinnon, James G. (1993). Estimation and inference in econometrics. Oxford University Press. ISBN 978-0-19-506011-9.

- Fuller, Wayne (1977). "Some Properties of a Modification of the Limited Information Estimator". Econometrica 45 (4): 939–953. doi:10.2307/1912683.

- Greene, William H. (2002). Econometric analysis (5th ed.). Prentice Hall. ISBN 0-13-066189-9.

- Maddala, G. S. (2001). "Simultaneous Equations Models". Introduction to Econometrics (Third ed.). New York: Wiley. pp. 343–390. ISBN 0-471-49728-2.

- Theil, Henri (1971). Principles of Econometrics. New York: John Wiley.

- Zellner, Arnold; Theil, Henri (1962). "Three-stage least squares: simultaneous estimation of simultaneous equations". Econometrica 30 (1): 54–78. doi:10.2307/1911287. JSTOR 1911287.

External links

- About.com:economics Online dictionary of economics, entry for ILS

- Lecture on the Identification Problem in 2SLS, and Estimation on YouTube by Mark Thoma