Linear multistep method

Linear multistep methods are used for the numerical solution of ordinary differential equations. Conceptually, a numerical method starts from an initial point and then takes a short step forward in time to find the next solution point. The process continues with subsequent steps to map out the solution. Single-step methods (such as Euler's method) refer to only one previous point and its derivative to determine the current value. Methods such as Runge–Kutta take some intermediate steps (for example, a half-step) to obtain a higher order method, but then discard all previous information before taking a second step. Multistep methods attempt to gain efficiency by keeping and using the information from previous steps rather than discarding it. Consequently, multistep methods refer to several previous points and derivative values. In the case of linear multistep methods, a linear combination of the previous points and derivative values is used.

Definitions

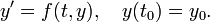

Numerical methods for ordinary differential equations approximate solutions to initial value problems of the form

The result is approximations for the value of  at discrete times

at discrete times  :

:

where  is the time step (sometimes referred to as

is the time step (sometimes referred to as  ) and

) and  is an integer.

is an integer.

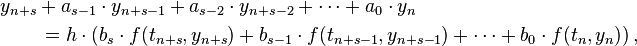

Multistep methods use information from the previous  steps to calculate the next value. In particular, a linear multistep method uses a linear combination of

steps to calculate the next value. In particular, a linear multistep method uses a linear combination of  and

and  to calculate the value of

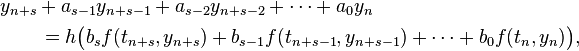

to calculate the value of  for the desired current step. Thus, a linear multistep method is a method of the form

for the desired current step. Thus, a linear multistep method is a method of the form

The coefficients  and

and  determine the method. The designer of the method chooses the coefficients, balancing the need to get a good approximation to the true solution against the desire to get a method that is easy to apply. Often, many coefficients are zero to simplify the method.

determine the method. The designer of the method chooses the coefficients, balancing the need to get a good approximation to the true solution against the desire to get a method that is easy to apply. Often, many coefficients are zero to simplify the method.

One can distinguish between explicit and implicit methods. If  , then the method is called "explicit", since the formula can directly compute

, then the method is called "explicit", since the formula can directly compute  . If

. If  then the method is called "implicit", since the value of

then the method is called "implicit", since the value of  depends on the value of

depends on the value of  , and the equation must be solved for

, and the equation must be solved for  . Iterative methods such as Newton's method are often used to solve the implicit formula.

. Iterative methods such as Newton's method are often used to solve the implicit formula.

Sometimes an explicit multistep method is used to "predict" the value of  . That value is then used in an implicit formula to "correct" the value. The result is a predictor–corrector method.

. That value is then used in an implicit formula to "correct" the value. The result is a predictor–corrector method.

Examples

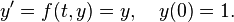

Consider for an example the problem

The exact solution is  .

.

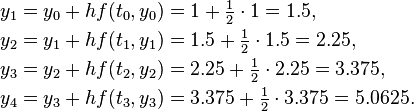

One-step Euler

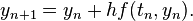

A simple numerical method is Euler's method:

Euler's method can be viewed as an explicit multistep method for the degenerate case of one step.

This method, applied with step size  on the problem

on the problem  , gives the following results:

, gives the following results:

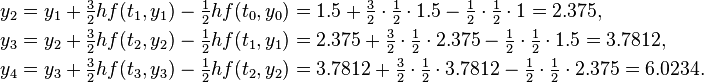

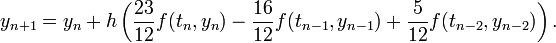

Two-step Adams–Bashforth

Euler's method is a one-step method. A simple multistep method is the two-step Adams–Bashforth method

This method needs two values,  and

and  , to compute the next value,

, to compute the next value,  . However, the initial value problem provides only one value,

. However, the initial value problem provides only one value,  . One possibility to resolve this issue is to use the

. One possibility to resolve this issue is to use the  computed by Euler's method as the second value. With this choice, the Adams–Bashforth method yields (rounded to four digits):

computed by Euler's method as the second value. With this choice, the Adams–Bashforth method yields (rounded to four digits):

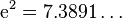

The exact solution at  is

is  , so the two-step Adams–Bashforth method is more accurate than Euler's method. This is always the case if the step size is small enough.

, so the two-step Adams–Bashforth method is more accurate than Euler's method. This is always the case if the step size is small enough.

Families of multistep methods

Three families of linear multistep methods are commonly used: Adams–Bashforth methods, Adams–Moulton methods, and the backward differentiation formulas (BDFs).

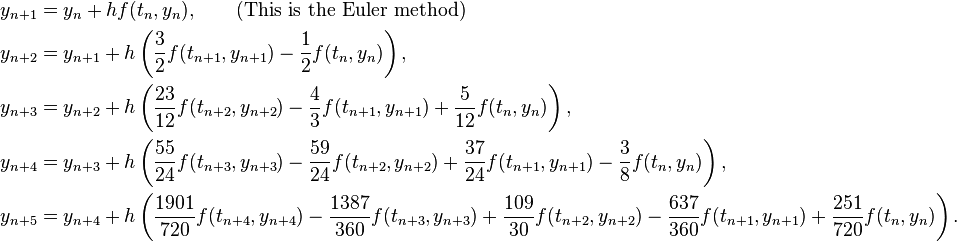

Adams–Bashforth methods

The Adams–Bashforth methods are explicit methods. The coefficients are  and

and  , while the

, while the  are chosen such that the methods has order s (this determines the methods uniquely).

are chosen such that the methods has order s (this determines the methods uniquely).

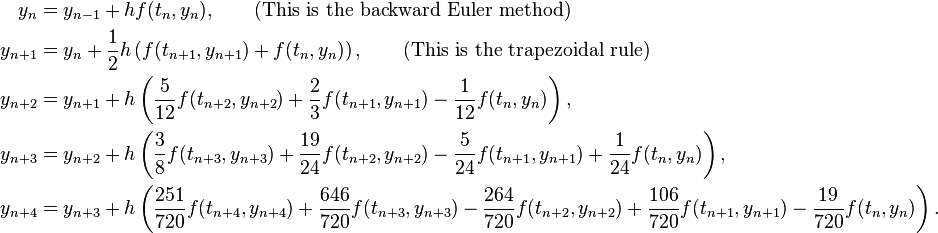

The Adams–Bashforth methods with s = 1, 2, 3, 4, 5 are (Hairer, Nørsett & Wanner 1993, §III.1; Butcher 2003, p. 103):

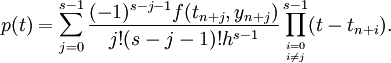

The coefficients  can be determined as follows. Use polynomial interpolation to find the polynomial p of degree

can be determined as follows. Use polynomial interpolation to find the polynomial p of degree  such that

such that

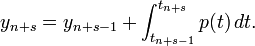

The Lagrange formula for polynomial interpolation yields

The polynomial p is locally a good approximation of the right-hand side of the differential equation  that is to be solved, so consider the equation

that is to be solved, so consider the equation  instead. This equation can be solved exactly; the solution is simply the integral of p. This suggests taking

instead. This equation can be solved exactly; the solution is simply the integral of p. This suggests taking

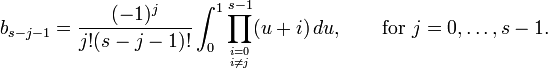

The Adams–Bashforth method arises when the formula for p is substituted. The coefficients  turn out to be given by

turn out to be given by

Replacing  by its interpolant p incurs an error of order hs, and it follows that the s-step Adams–Bashforth method has indeed order s (Iserles 1996, §2.1)

by its interpolant p incurs an error of order hs, and it follows that the s-step Adams–Bashforth method has indeed order s (Iserles 1996, §2.1)

The Adams–Bashforth methods were designed by John Couch Adams to solve a differential equation modelling capillary action due to Francis Bashforth. Bashforth (1883) published his theory and Adams' numerical method (Goldstine 1977).

Adams–Moulton methods

The Adams–Moulton methods are similar to the Adams–Bashforth methods in that they also have  and

and  . Again the b coefficients are chosen to obtain the highest order possible. However, the Adams–Moulton methods are implicit methods. By removing the restriction that

. Again the b coefficients are chosen to obtain the highest order possible. However, the Adams–Moulton methods are implicit methods. By removing the restriction that  , an s-step Adams–Moulton method can reach order

, an s-step Adams–Moulton method can reach order  , while an s-step Adams–Bashforth methods has only order s.

, while an s-step Adams–Bashforth methods has only order s.

The Adams–Moulton methods with s = 0, 1, 2, 3, 4 are (Hairer, Nørsett & Wanner 1993, §III.1; Quarteroni, Sacco & Saleri 2000):

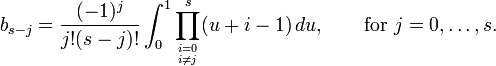

The derivation of the Adams–Moulton methods is similar to that of the Adams–Bashforth method; however, the interpolating polynomial uses not only the points  , as above, but also

, as above, but also  . The coefficients are given by

. The coefficients are given by

The Adams–Moulton methods are solely due to John Couch Adams, like the Adams–Bashforth methods. The name of Forest Ray Moulton became associated with these methods because he realized that they could be used in tandem with the Adams–Bashforth methods as a predictor-corrector pair (Moulton 1926); Milne (1926) had the same idea. Adams used Newton's method to solve the implicit equation (Hairer, Nørsett & Wanner 1993, §III.1).

Backward differentiation formulas (BDF)

- Main article: Backward differentiation formula

The BDF methods are implicit methods with  and the other coefficients chosen such that the method attains order s (the maximum possible). These methods are especially used for the solution of stiff differential equations.

and the other coefficients chosen such that the method attains order s (the maximum possible). These methods are especially used for the solution of stiff differential equations.

Analysis

The central concepts in the analysis of linear multistep methods, and indeed any numerical method for differential equations, are convergence, order, and stability.

Consistency and order

The first question is whether the method is consistent: is the difference equation

a good approximation of the differential equation  ? More precisely, a multistep method is consistent if the local truncation error goes to zero faster than the step size h as h goes to zero, where the local truncation error is defined to be the difference between the result

? More precisely, a multistep method is consistent if the local truncation error goes to zero faster than the step size h as h goes to zero, where the local truncation error is defined to be the difference between the result  of the method, assuming that all the previous values

of the method, assuming that all the previous values  are exact, and the exact solution of the equation at time

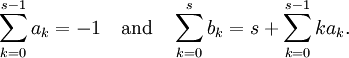

are exact, and the exact solution of the equation at time  . A computation using Taylor series shows out that a linear multistep method is consistent if and only if

. A computation using Taylor series shows out that a linear multistep method is consistent if and only if

All the methods mentioned above are consistent (Hairer, Nørsett & Wanner 1993, §III.2).

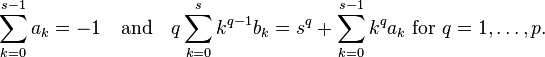

If the method is consistent, then the next question is how well the difference equation defining the numerical method approximates the differential equation. A multistep method is said to have order p if the local error is of order  as h goes to zero. This is equivalent to the following condition on the coefficients of the methods:

as h goes to zero. This is equivalent to the following condition on the coefficients of the methods:

The s-step Adams–Bashforth method has order s, while the s-step Adams–Moulton method has order  (Hairer, Nørsett & Wanner 1993, §III.2).

(Hairer, Nørsett & Wanner 1993, §III.2).

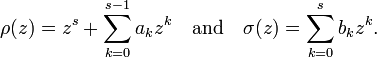

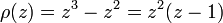

These conditions are often formulated using the characteristic polynomials

In terms of these polynomials, the above condition for the method to have order p becomes

In particular, the method is consistent if it has order one, which is the case if  and

and  .

.

Stability and convergence

The numerical solution of a one-step method depends on the initial condition  , but the numerical solution of an s-step method depend on all the s starting values,

, but the numerical solution of an s-step method depend on all the s starting values,  . It is thus of interest whether the numerical solution is stable with respect to perturbations in the starting values. A linear multistep method is zero-stable for a certain differential equation on a given time interval, if a perturbation in the starting values of size ε causes the numerical solution over that time interval to change by no more than Kε for some value of K which does not depend on the step size h. This is called "zero-stability" because it is enough to check the condition for the differential equation

. It is thus of interest whether the numerical solution is stable with respect to perturbations in the starting values. A linear multistep method is zero-stable for a certain differential equation on a given time interval, if a perturbation in the starting values of size ε causes the numerical solution over that time interval to change by no more than Kε for some value of K which does not depend on the step size h. This is called "zero-stability" because it is enough to check the condition for the differential equation  (Süli & Mayers 2003, p. 332).

(Süli & Mayers 2003, p. 332).

If the roots of the characteristic polynomial ρ all have modulus less than or equal to 1 and the roots of modulus 1 are of multiplicity 1, we say that the root condition is satisfied. A linear multistep method is zero-stable if and only if the root condition is satisfied (Süli & Mayers 2003, p. 335).

Now suppose that a consistent linear multistep method is applied to a sufficiently smooth differential equation and that the starting values  all converge to the initial value

all converge to the initial value  as

as  . Then, the numerical solution converges to the exact solution as

. Then, the numerical solution converges to the exact solution as  if and only if the method is zero-stable. This result is known as the Dahlquist equivalence theorem, named after Germund Dahlquist; this theorem is similar in spirit to the Lax equivalence theorem for finite difference methods. Furthermore, if the method has order p, then the global error (the difference between the numerical solution and the exact solution at a fixed time) is

if and only if the method is zero-stable. This result is known as the Dahlquist equivalence theorem, named after Germund Dahlquist; this theorem is similar in spirit to the Lax equivalence theorem for finite difference methods. Furthermore, if the method has order p, then the global error (the difference between the numerical solution and the exact solution at a fixed time) is  (Süli & Mayers 2003, p. 340).

(Süli & Mayers 2003, p. 340).

Furthermore, if the method is convergent, the method is said to be strongly stable if  is the only root of modulus 1. If it is convergent and all roots of modulus 1 are not repeated, but there is more than one such root, it is said to be relatively stable. Note that 1 must be a root for the method to be convergent; thus convergent methods are always one of these two.

is the only root of modulus 1. If it is convergent and all roots of modulus 1 are not repeated, but there is more than one such root, it is said to be relatively stable. Note that 1 must be a root for the method to be convergent; thus convergent methods are always one of these two.

To assess the performance of linear multistep methods on stiff equations, consider the linear test equation y' = λy. A multistep method applied to this differential equation with step size h yields a linear recurrence relation with characteristic polynomial

This polynomial is called the stability polynomial of the multistep method. If all of its roots have modulus less than one then the numerical solution of the multistep method will converge to zero and the multistep method is said to be absolutely stable for that value of hλ. The method is said to A-stable if it is absolutely stable for all hλ with negative real part. The region of absolute stability is the set of all hλ for which the multistep method is absolutely stable (Süli & Mayers 2003, pp. 347 & 348). For more details, see the section on stiff equations and multistep methods.

Example

Consider the Adams–Bashforth three-step method

The characteristic equation is thus

which has roots  , and the conditions above are satisfied. As

, and the conditions above are satisfied. As  is the only root of modulus 1, the method is strongly stable.

is the only root of modulus 1, the method is strongly stable.

First and second Dahlquist barriers

These two results were proved by Germund Dahlquist and represent an important bound for the order of convergence and for the A-stability of a linear multistep method. The first Dahlquist barrier was proved in Dahlquist (1956) and the second in Dahlquist (1963).

First Dahlquist barrier

A zero-stable and linear q-step multistep method cannot attain an order of convergence greater than q + 1 if q is odd and greater than q + 2 if q is even. If the method is also explicit, then it cannot attain an order greater than q (Hairer, Nørsett & Wanner 1993, Thm III.3.5).

Second Dahlquist barrier

There are no explicit A-stable and linear multistep methods. The implicit ones have order of convergence at most 2. The trapezoidal rule has the smallest error constant amongst the A-stable linear multistep methods of order 2.

See also

References

- Bashforth, Francis (1883), An Attempt to test the Theories of Capillary Action by comparing the theoretical and measured forms of drops of fluid. With an explanation of the method of integration employed in constructing the tables which give the theoretical forms of such drops, by J. C. Adams, Cambridge.

- Butcher, John C. (2003), Numerical Methods for Ordinary Differential Equations, John Wiley, ISBN 978-0-471-96758-3.

- Dahlquist, Germund (1956), "Convergence and stability in the numerical integration of ordinary differential equations", Mathematica Scandinavica 4: 33––53.

- Dahlquist, Germund (1963), "A special stability problem for linear multistep methods", BIT 3: 27–43, doi:10.1007/BF01963532, ISSN 0006-3835.

- Goldstine, Herman H. (1977), A History of Numerical Analysis from the 16th through the 19th Century, New York: Springer-Verlag, ISBN 978-0-387-90277-7.

- Hairer, Ernst; Nørsett, Syvert Paul; Wanner, Gerhard (1993), Solving ordinary differential equations I: Nonstiff problems (2nd ed.), Berlin: Springer Verlag, ISBN 978-3-540-56670-0.

- Hairer, Ernst; Wanner, Gerhard (1996), Solving ordinary differential equations II: Stiff and differential-algebraic problems (2nd ed.), Berlin, New York: Springer-Verlag, ISBN 978-3-540-60452-5.

- Iserles, Arieh (1996), A First Course in the Numerical Analysis of Differential Equations, Cambridge University Press, ISBN 978-0-521-55655-2.

- Milne, W. E. (1926), "Numerical integration of ordinary differential equations", American Mathematical Monthly (Mathematical Association of America) 33 (9): 455–460, doi:10.2307/2299609, JSTOR 2299609.

- Moulton, Forest R. (1926), New methods in exterior ballistics, University of Chicago Press.

- Quarteroni, Alfio; Sacco, Riccardo; Saleri, Fausto (2000), Matematica Numerica, Springer Verlag, ISBN 978-88-470-0077-3.

- Süli, Endre; Mayers, David (2003), An Introduction to Numerical Analysis, Cambridge University Press, ISBN 0-521-00794-1.

External links

- Weisstein, Eric W., "Adams Method", MathWorld.

- Adams-Bashforth-Moulton Method

- DotNumerics: Ordinary Differential Equations for C# and VB.NET Initial-value problem for nonstiff and stiff ordinary differential equations (explicit Runge-Kutta, implicit Runge-Kutta, Gear’s BDF and Adams-Moulton).

| ||||||||||||||