Kraft's inequality

In coding theory, Kraft's inequality, named after Leon Kraft, gives both a necessary and sufficient condition for the existence of a prefix code[1] for a given set of codeword lengths. Its applications to prefix codes and trees often find use in computer science and information theory.

More specifically, Kraft's inequality limits the lengths of codewords in a prefix code: if one takes an exponential of the length of each valid codeword, the resulting set of values must look like a probability mass function, that is, it must have total measure less than or equal to one. Kraft's inequality can be thought of in terms of a constrained budget to be spent on codewords, with shorter codewords being more expensive.

- If Kraft's inequality holds with strict inequality, the code has some redundancy.

- If Kraft's inequality holds with equality, the code in question is a complete code.

- If Kraft's inequality does not hold, the code is not uniquely decodable.

Kraft's inequality was published by Kraft (1949). However, Kraft's paper discusses only prefix codes, and attributes the analysis leading to the inequality to Raymond Redheffer. The inequality is sometimes also called the Kraft–McMillan theorem after the independent discovery of the result by McMillan (1956); McMillan proves the result for the general case of uniquely decodable codes, and attributes the version for prefix codes to a spoken observation in 1955 by Joseph Leo Doob.

Examples

Binary trees

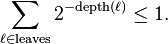

Any binary tree can be viewed as defining a prefix code for the leaves of the tree. Kraft's inequality states that

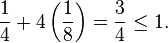

Here the sum is taken over the leaves of the tree, i.e. the nodes without any children. The depth is the distance to the root node. In the tree to the right, this sum is

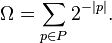

Chaitin's constant

In algorithmic information theory, Chaitin's constant is defined as

This is an infinite sum, which has one summand for every syntactically correct program that halts. |p| stands for the length of the bit string of p. The programs are required to be prefix-free in the sense that no summand has a prefix representing a syntactically valid program that halts. Hence the bit strings are prefix codes, and Kraft's inequality gives that  .

.

Formal statement

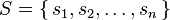

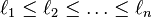

Let each source symbol from the alphabet

be encoded into a uniquely decodable code over an alphabet of size  with codeword lengths

with codeword lengths

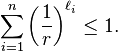

Then

Conversely, for a given set of natural numbers  satisfying the above inequality, there exists a uniquely decodable code over an alphabet of size

satisfying the above inequality, there exists a uniquely decodable code over an alphabet of size  with those codeword lengths.

with those codeword lengths.

A commonly occurring special case of a uniquely decodable code is a prefix code. Kraft's inequality therefore also holds for any prefix code.

Proof

Proof for prefix codes

Suppose that  . Let

. Let  be the full

be the full  -ary tree of depth

-ary tree of depth  . Every word of length

. Every word of length  over an

over an  -ary alphabet corresponds to a node in this tree at depth

-ary alphabet corresponds to a node in this tree at depth  . The

. The  th word in the prefix code corresponds to a node

th word in the prefix code corresponds to a node  ; let

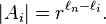

; let  be the set of all leaf nodes in the subtree of

be the set of all leaf nodes in the subtree of  rooted at

rooted at  . Clearly

. Clearly

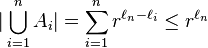

Since the code is a prefix code,

.

.

Thus, given that the total number of nodes at depth  is

is  ,

,

from which the result follows.

Conversely, given any ordered sequence of  natural numbers,

natural numbers,

satisfying the Kraft inequality, one can construct a prefix code with codeword lengths equal to  by pruning subtrees from a full

by pruning subtrees from a full  -ary tree of depth

-ary tree of depth  . First choose any node from the full tree at depth

. First choose any node from the full tree at depth  and remove all of its descendants. This removes

and remove all of its descendants. This removes  fraction of the nodes from the full tree from being considered for the rest of the remaining codewords. The next iteration removes

fraction of the nodes from the full tree from being considered for the rest of the remaining codewords. The next iteration removes  fraction of the full tree for total of

fraction of the full tree for total of  . After

. After  iterations,

iterations,

fraction of the full tree nodes are removed from consideration for any remaining codewords. But, by the assumption, this sum is less than 1 for all  . Thus prefix code with lengths

. Thus prefix code with lengths  can be constructed for all

can be constructed for all  source symbols.

source symbols.

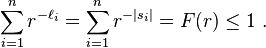

Probabilistic Proof for prefix codes

Generate a sequence of symbols from the r character alphabet, independently and uniformly at random. Define Ei to be the event that codeword i is a prefix of this sequence. Because we have a prefix code, these events are mutually exclusive. Therefore,

The proof of the converse half of the result is given above.

Proof of the general case

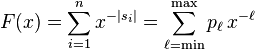

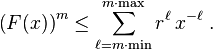

Consider the generating function in inverse of x for the code S

in which  —the coefficient in front of

—the coefficient in front of  —is the number of distinct codewords of length

—is the number of distinct codewords of length  . Here min is the length of the shortest codeword in S, and max is the length of the longest codeword in S.

. Here min is the length of the shortest codeword in S, and max is the length of the longest codeword in S.

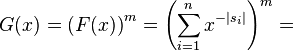

Consider all m-powers Sm, in the form of words  , where

, where  are indices between 1 and n. Note that, since S was assumed to uniquely decodable,

are indices between 1 and n. Note that, since S was assumed to uniquely decodable,

implies

implies  . Because of this property, one can compute the generating function

. Because of this property, one can compute the generating function  for

for  from the generating function

from the generating function  as

as

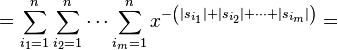

Here, similarly as before,  —the coefficient in front of

—the coefficient in front of  in

in  —is the number of words of length

—is the number of words of length  in

in  . Clearly,

. Clearly,  cannot exceed

cannot exceed  .

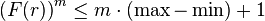

Hence for any positive x

.

Hence for any positive x

Substituting the value x = r we have

for any positive integer  . The left side of the inequality grows exponentially in

. The left side of the inequality grows exponentially in  and the right side only linearly. The only possibility for the inequality to be valid for all

and the right side only linearly. The only possibility for the inequality to be valid for all  is that

is that  .

Looking back on the definition of

.

Looking back on the definition of  we finally get the inequality.

we finally get the inequality.

Alternative construction for the converse

Given a sequence of  natural numbers,

natural numbers,

satisfying the Kraft inequality, we can construct a prefix code as follows. Define the ith codeword, Ci, to be the first li digits after the radix point (e.g. decimal point) in the base r representation of

Note that by Kraft's inequality, this sum is never more than 1. Hence the codewords capture the entire value of the sum. Therefore, for j > i, the first li digits of Cj form a larger number than Ci, so the code is prefix free.

See also Canonical Huffman code.

Notes

- ↑ Cover, Thomas M.; Thomas, Joy A. (2006), Elements of Information Theory (PDF) (2nd ed.), John Wiley & Sons, Inc, pp. 108–109, doi:10.1002/047174882X.ch5, ISBN 0-471-24195-4

References

- Kraft, Leon G. (1949), A device for quantizing, grouping, and coding amplitude modulated pulses, Cambridge, MA: MS Thesis, Electrical Engineering Department, Massachusetts Institute of Technology.

- McMillan, Brockway (1956), "Two inequalities implied by unique decipherability", IEEE Trans. Information Theory 2 (4): 115–116, doi:10.1109/TIT.1956.1056818.