Direct Rendering Manager

| Original author(s) | kernel.org & freedesktop.org |
|---|---|
| Developer(s) | kernel.org & freedesktop.org |
| Written in | C |
| Type | |
| License | |
| Website | dri |

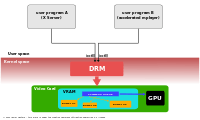

The Direct Rendering Manager (DRM) is a subsystem of the Linux kernel responsible for interfacing with the GPUs of modern video cards. DRM exposes an API that user space programs can use to send commands and data to the GPU and to perform operations such as configuring the display's mode setting. DRM was first developed as the kernel space component of the X Server's Direct Rendering Infrastructure,[1] but it has since been used by other graphics stack alternatives such as Wayland.

User space programs can use the DRM API to command the GPU to perform hardware-accelerated 3D rendering, video decoding, and GPGPU computing.

Overview

The Linux kernel already had an API called fbdev for managing the framebuffer of a graphics adapter,[2] but it could not meet the needs of modern 3D-accelerated, GPU-based video cards. These cards usually require setting up and managing a command queue in the card's memory (video RAM) to dispatch commands to the GPU, and they also require proper management of the buffers and free space of the video RAM itself.[3] Initially, user space programs (such as the X Server) managed these resources directly, but they usually acted as if they were the only ones with access to the card's resources. When two or more programs tried to control the same video card at the same time, each setting up its resources in its own way, the result was usually catastrophic.[3]

The Direct Rendering Manager was created to let multiple programs share the video card's resources cooperatively. DRM gets exclusive access to the video card and is responsible for initializing and maintaining the command queue, the VRAM and any other hardware resource. Programs that want to use the GPU send their requests to DRM, which acts as an arbitrator and takes care to avoid possible conflicts.

Over the years, the scope of DRM has expanded to cover more functionality previously handled by user space programs, such as framebuffer management and mode setting, memory sharing objects and memory synchronization.[4][5] Some of these expansions carry their own names, such as Graphics Execution Manager (GEM) or Kernel Mode-Setting (KMS), and that terminology is still used when referring specifically to the functionality they provide. They are nevertheless parts of the whole kernel DRM subsystem.

Software architecture

The Direct Rendering Manager resides in kernel space, so user space programs must use kernel system calls to request its services. However, DRM does not define its own system calls. Instead, it follows the Unix principle "everything is a file" and exposes the GPUs through the filesystem namespace, using device files under the /dev hierarchy. Each GPU detected by DRM is referred to as a DRM device, and a device file /dev/dri/cardX (where X is a sequential number) is created to interface with it.[6][7] User space programs that want to talk to the GPU must open this file and use ioctl calls to communicate with DRM. Different ioctls correspond to different functions of the DRM API.
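The device-file convention described above can be explored from user space without any DRM-specific library. The following Python sketch lists the /dev/dri/cardX nodes present on a system; the function name `list_drm_cards` is an illustrative choice, not part of any DRM API, and the sketch only enumerates the nodes without issuing any ioctl.

```python
import glob
import os
import re
import stat


def list_drm_cards(dri_dir="/dev/dri"):
    """Return sorted (index, path) pairs for every cardX character device."""
    cards = []
    for path in glob.glob(os.path.join(dri_dir, "card*")):
        match = re.fullmatch(r"card(\d+)", os.path.basename(path))
        if match is None:
            continue  # skip entries that are not named cardX
        try:
            mode = os.stat(path).st_mode
        except OSError:
            continue  # node vanished or cannot be inspected
        if stat.S_ISCHR(mode):  # DRM nodes are character devices
            cards.append((int(match.group(1)), path))
    return sorted(cards)


if __name__ == "__main__":
    for index, path in list_drm_cards():
        print(f"DRM device {index}: {path}")
```

On a machine without a DRM driver loaded, or without a /dev/dri directory, the function simply returns an empty list.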

A library called libdrm was created to make it easier for user space programs to interface with the DRM subsystem. This library is merely a wrapper that provides a C function for every ioctl of the DRM API, as well as constants, structures and other helper elements.[8] Using libdrm not only avoids exposing the kernel interface directly to user space programs, but also brings the usual advantages of reusing and sharing code between programs.
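The request numbers that such a wrapper hides follow the kernel's generic ioctl encoding. The following Python sketch reproduces that encoding for three DRM requests. It assumes the asm-generic field layout used on x86 and ARM (some architectures use different field widths) and a 64-bit ABI for the size of `struct drm_version`; the helper names `drm_iow` and `drm_iowr` are illustrative stand-ins for the C macros `DRM_IOW` and `DRM_IOWR`.

```python
# Field widths of the asm-generic ioctl encoding (assumed layout).
_IOC_NRBITS, _IOC_TYPEBITS, _IOC_SIZEBITS = 8, 8, 14
_IOC_NRSHIFT = 0
_IOC_TYPESHIFT = _IOC_NRSHIFT + _IOC_NRBITS      # 8
_IOC_SIZESHIFT = _IOC_TYPESHIFT + _IOC_TYPEBITS  # 16
_IOC_DIRSHIFT = _IOC_SIZESHIFT + _IOC_SIZEBITS   # 30
_IOC_WRITE, _IOC_READ = 1, 2

DRM_IOCTL_BASE = ord("d")  # every DRM ioctl uses the type character 'd'


def _ioc(direction, nr, size):
    """Pack direction, type, number and argument size into one request."""
    return (
        (direction << _IOC_DIRSHIFT)
        | (size << _IOC_SIZESHIFT)
        | (DRM_IOCTL_BASE << _IOC_TYPESHIFT)
        | (nr << _IOC_NRSHIFT)
    )


def drm_iow(nr, size):
    """Request whose argument is only read by the kernel."""
    return _ioc(_IOC_WRITE, nr, size)


def drm_iowr(nr, size):
    """Request whose argument is read and written back by the kernel."""
    return _ioc(_IOC_READ | _IOC_WRITE, nr, size)


# Argument sizes in bytes; 64 assumes struct drm_version on a 64-bit ABI.
DRM_IOCTL_VERSION = drm_iowr(0x00, 64)
DRM_IOCTL_GEM_CLOSE = drm_iow(0x09, 8)
DRM_IOCTL_GEM_FLINK = drm_iowr(0x0A, 8)

if __name__ == "__main__":
    print(hex(DRM_IOCTL_VERSION), hex(DRM_IOCTL_GEM_CLOSE), hex(DRM_IOCTL_GEM_FLINK))
```

A C program would normally never compute these values by hand; it would include the kernel's DRM header or call the corresponding libdrm function.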

DRM consists of two parts: a generic "DRM core" and a specific "DRM driver" for each type of supported hardware.[9] The DRM core provides the basic framework in which different DRM drivers can register, and also provides user space with a minimal set of ioctls with common, hardware-independent functionality. A DRM driver, on the other hand, implements the hardware-dependent part of the API, specific to the type of GPU it supports; it should provide the implementation of the remaining ioctls not covered by the DRM core, but it may also extend the API with additional ioctls offering extra functionality only available on such hardware.[6] When a specific DRM driver provides an enhanced API, user space libdrm is also extended by an extra library, libdrm-driver, that user space can use to interface with the additional ioctls.
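The division of labour between core and driver can be pictured as a dispatch table. The following Python sketch is a toy model only: the classes `ToyCore` and `ToyDriver` and their methods are hypothetical and do not mirror kernel data structures. The one detail taken from the kernel headers is the number range reserved for driver-specific ioctls, which starts at `DRM_COMMAND_BASE` (0x40) and ends before `DRM_COMMAND_END` (0xA0).

```python
DRM_COMMAND_BASE = 0x40  # first driver-specific ioctl number
DRM_COMMAND_END = 0xA0   # one past the last driver-specific ioctl number


class ToyDriver:
    """Hypothetical hardware-specific driver exposing one extra ioctl."""

    name = "toygpu"

    def __init__(self):
        # Driver ioctls are numbered relative to DRM_COMMAND_BASE.
        self.ioctls = {0x00: self.submit_commands}

    def submit_commands(self, arg):
        return f"{self.name}: queued {len(arg)} commands"


class ToyCore:
    """Hypothetical generic core: registers a driver and routes ioctls."""

    def __init__(self):
        self.driver = None
        self.core_ioctls = {0x00: self.version}

    def register(self, driver):
        self.driver = driver

    def version(self, arg):
        return self.driver.name if self.driver else "no driver"

    def ioctl(self, nr, arg=None):
        if DRM_COMMAND_BASE <= nr < DRM_COMMAND_END:
            # Hardware-dependent request: forward it to the driver.
            if self.driver is None:
                raise OSError("no driver registered")
            handler = self.driver.ioctls.get(nr - DRM_COMMAND_BASE)
        else:
            # Hardware-independent request: handled by the core itself.
            handler = self.core_ioctls.get(nr)
        if handler is None:
            raise OSError(f"unsupported ioctl 0x{nr:02x}")
        return handler(arg)


if __name__ == "__main__":
    core = ToyCore()
    core.register(ToyDriver())
    print(core.ioctl(0x00))
    print(core.ioctl(DRM_COMMAND_BASE, ["draw", "flush"]))
```

In this model, a driver-specific user space library would be the component that knows about request numbers at or above `DRM_COMMAND_BASE`.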

API

The DRM core exports several interfaces to user-space applications, generally intended to be used through corresponding libdrm wrapper functions. In addition, drivers export device-specific interfaces for use by user-space drivers & device-aware applications through ioctls and sysfs files. External interfaces include: memory mapping, context management, DMA operations, AGP management, vblank control, fence management, memory management, and output management.

Translation Table Maps

The Translation Table Maps memory manager was developed by Tungsten Graphics but later superseded by GEM.

Graphics Execution Manager

Due to the increasing size of video memory and the growing complexity of graphics APIs such as OpenGL, the strategy of reinitializing the graphics card state at each context switch became too expensive in terms of performance. Modern Linux desktops also needed an efficient way to share off-screen buffers with the compositing manager. This led to the development of new methods to manage graphics buffers inside the kernel. The Graphics Execution Manager (GEM) emerged as one of these methods.[5]

GEM provides an API with explicit memory management primitives.[5] Through GEM, a user space program can create, handle and destroy memory objects living in the GPU's video memory. These objects, called "GEM objects",[10] are persistent from the user space program's perspective, and don't need to be reloaded every time the program regains control of the GPU. When a user space program needs a chunk of video memory (to store a framebuffer, texture or any other data required by the GPU[11]), it requests the allocation from the DRM driver using the GEM API. The DRM driver keeps track of the used video memory and, if there is free memory available, satisfies the request and returns a "handle" that user space uses to refer to the allocated memory in subsequent operations.[5][10] The GEM API also provides operations to populate the buffer and to release it when it is no longer needed.

GEM also allows two or more user space processes using the same DRM device (hence the same DRM driver) to share a GEM object.[12] GEM handles are local 32-bit integers that are unique within a process but may be repeated in other processes, so they are not suitable for sharing. What is needed is a global namespace, and GEM provides one through global handles called GEM names. A GEM name is a unique 32-bit integer that refers to one, and only one, GEM object managed by the same DRM driver. GEM provides an operation, flink, to obtain a GEM name from a GEM handle. The process can then pass this GEM name (a 32-bit integer) to another process using any available IPC mechanism. The receiving process can use the GEM name to obtain a local GEM handle pointing to the original GEM object.
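The relationship between per-process handles and global names can be illustrated with a small in-memory model. The Python sketch below is not the kernel implementation: `ToyGemDevice`, `ToyGemClient` and their methods are hypothetical names, buffers are plain `bytearray` objects, and each client object stands in for one process that has opened the device.

```python
import itertools


class ToyGemDevice:
    """One DRM device: owns the global GEM name table."""

    def __init__(self):
        self.names = {}                      # global GEM name -> object
        self.next_name = itertools.count(1)

    def open(self):
        return ToyGemClient(self)


class ToyGemClient:
    """One process using the device: owns a private handle table."""

    def __init__(self, device):
        self.device = device
        self.handles = {}                    # local handle -> object
        self.next_handle = itertools.count(1)

    def _install(self, obj):
        handle = next(self.next_handle)
        self.handles[handle] = obj
        return handle

    def create(self, size):
        """Allocate a buffer and return a handle local to this client."""
        return self._install(bytearray(size))

    def flink(self, handle):
        """Publish the object under a global name and return that name."""
        obj = self.handles[handle]
        for name, candidate in self.device.names.items():
            if candidate is obj:
                return name                  # already named
        name = next(self.device.next_name)
        self.device.names[name] = obj
        return name

    def open_by_name(self, name):
        """Turn a global name into a new handle local to this client."""
        return self._install(self.device.names[name])

    def buffer(self, handle):
        return self.handles[handle]


if __name__ == "__main__":
    device = ToyGemDevice()
    producer, consumer = device.open(), device.open()
    handle = producer.create(16)
    name = producer.flink(handle)            # sent to the consumer over IPC
    producer.buffer(handle)[0] = 0x2A
    local = consumer.open_by_name(name)
    print(consumer.buffer(local)[0])
```

In the usage at the bottom, both clients end up with handle value 1 for the same object, which shows why a handle by itself is meaningless outside the process that owns it.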

Unfortunately, the use of GEM names to share buffers is not secure.[13][14][15] A malicious third-party process accessing the same DRM device could try to guess the GEM name of a buffer shared by two other processes, simply by probing 32-bit integers.[16] Once a GEM name is found, the buffer's contents can be accessed and modified, violating the confidentiality and integrity of the buffer's information. This drawback was later overcome by the introduction of DMA-BUF support into DRM.
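The name-guessing attack amounts to a linear scan over the name space. The following Python sketch models it against a plain dictionary standing in for a device's global name table; the function `guess_gem_names`, the example names 3 and 7 and the buffer contents are all hypothetical, and the probe range is limited to 16 values purely to keep the example small.

```python
def guess_gem_names(open_by_name, max_name):
    """Probe every integer in 1..max_name and collect the names that resolve."""
    found = {}
    for name in range(1, max_name + 1):
        try:
            found[name] = open_by_name(name)
        except KeyError:
            continue  # no object published under this name
    return found


# Global name table of a hypothetical device; two buffers have been shared.
shared = {3: bytearray(b"secret"), 7: bytearray(b"frame")}

# A third party needs no prior knowledge of the names, only device access.
leaked = guess_gem_names(shared.__getitem__, 16)

# The attacker receives the same object, so it can also overwrite the data.
leaked[3][:] = b"pwned!"

if __name__ == "__main__":
    print(sorted(leaked), bytes(shared[3]))
```

Because the lookup returns the shared object itself rather than a copy, the write through `leaked[3]` is visible to the original owners, which corresponds to the loss of integrity described above.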

Besides managing the video memory space, another important task for any video memory management system is handling memory synchronization between the GPU and the CPU. Current memory architectures are very complex and usually involve several levels of caches for the system memory and sometimes for the video memory too. Video memory managers should therefore also handle cache coherence to ensure that the data shared between CPU and GPU is consistent. As a result, the internals of video memory management often depend heavily on hardware details of the GPU and memory architecture, and are therefore driver-specific.[17]

GEM was initially developed by Intel engineers to provide a video memory manager for its i915 driver. The Intel GMA 9xx family are integrated GPUs with a Uniform Memory Architecture (UMA), where the GPU and CPU share the physical memory and there is no dedicated VRAM.[18] GEM defines "memory domains" for memory synchronization, and while these memory domains are GPU-independent,[5] they are specifically designed with a UMA memory architecture in mind.

AGP, PCIe and other graphics cards contain an IOMMU called the graphics address remapping table (GART), which can be used to map various pages of system memory into the GPU's address space. The result is that, at any time, an arbitrary (scattered) subset of the system's RAM pages is accessible to the GPU.[4]

Kernel Mode Setting

In order to work properly, a video card or graphics adapter must set a mode (a combination of screen resolution, color depth and refresh rate) that is within the range of values supported by both the card itself and the attached display screen. This operation is called mode-setting,[19] and it usually requires raw access to the graphics hardware, i.e. the ability to write to certain registers of the video card.[20][21] A mode-setting operation must be performed before the framebuffer can be used, and again whenever an application or the user requires the mode to change.
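The three components of a mode determine how much memory a framebuffer needs and how fast it must be scanned out. The following Python sketch works through that arithmetic for one mode. The `Mode` class and `is_supported` function are illustrative names; the calculation ignores row padding (stride alignment) and blanking intervals, so real hardware figures are somewhat higher.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Mode:
    width: int           # horizontal resolution in pixels
    height: int          # vertical resolution in pixels
    bits_per_pixel: int  # color depth
    refresh_hz: int      # refresh rate

    def framebuffer_bytes(self):
        """Memory needed for one frame, without any row padding."""
        return self.width * self.height * self.bits_per_pixel // 8

    def visible_pixels_per_second(self):
        """Pixels scanned out per second, ignoring blanking intervals."""
        return self.width * self.height * self.refresh_hz


def is_supported(mode, card_modes, display_modes):
    """A mode is usable only if both the card and the display accept it."""
    return mode in card_modes and mode in display_modes


if __name__ == "__main__":
    full_hd = Mode(1920, 1080, 32, 60)
    print(full_hd.framebuffer_bytes())          # 8294400 bytes
    print(full_hd.visible_pixels_per_second())  # 124416000 pixels per second
```

The `is_supported` check mirrors the requirement that the chosen mode lie within the capabilities of both the graphics adapter and the attached screen.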

In the early days, the user space programs that wanted to use the graphical framebuffer were also responsible for providing the mode-setting operations,[3] and therefore they needed to run with privileged access to the video hardware. In Unix-type operating systems, the X Server was the most prominent example, and its mode-setting implementation lived in the DDX driver for each specific type of video card.[22] This approach, later referred to as User space Mode-Setting or UMS,[23][24] poses several issues.[25][19] It not only breaks the isolation that operating systems should provide between programs and hardware, raising both stability and security concerns, but can also leave the graphics hardware in an inconsistent state if two or more user space programs try to do the mode-setting at the same time. To avoid these conflicts, the X Server became in practice the only user space program that performed mode-setting operations, initially only during the server's startup and later gaining the ability to do it on the fly. The remaining user space programs relied on the X Server to set the appropriate mode and to handle any other operation involving mode-setting.

However, this was not the only code doing mode-setting in a Linux system. During the boot process, the Linux kernel had to set a minimal text mode for the virtual console (based on the standard modes defined by VESA BIOS extensions).[26] The kernel's framebuffer driver also contained mode-setting code to configure framebuffer devices.[2] The XFree86 Server, and later the X.Org Server, handled the case when the user switched from the graphical environment to a text virtual console by saving its mode-setting state and restoring it when the user switched back to X.[27] This process caused an annoying flicker in the transition and could also fail, leading to a corrupted or unusable output display.[28]

With the advent of DRI, other programs, especially games, gained the ability to bypass the X Server entirely and talk directly to the graphics hardware[26] through the kernel DRM module. Several user space programs besides the X Server now needed to be able to handle mode-setting, all in a coordinated way to avoid conflicts. The first approach to solving the problem was provided by XRandR, a new extension to the X protocol that, among other features, let X clients (DRI or not) ask the X Server for mode-setting changes. The X Server was still in charge of the mode-setting operations, but it had to keep the current mode-setting state synchronized with the remaining direct rendering clients through DRI.

Finally, it was decided that the best approach was to move the mode-setting code to a single place inside the kernel, specifically to the existing DRM module.[25][29][30][31] Every process, including the X Server, would then be able to command the kernel to perform mode-setting operations, and the kernel would ensure that concurrent operations do not result in an inconsistent state. The new kernel API and code added to the DRM module to perform these mode-setting operations was called Kernel Mode-Setting (KMS).[19]

http://elinux.org/images/4/45/Atomic_kms_driver_pinchart.pdf

Render nodes

A render node is a character device that exposes a GPU's off-screen rendering and GPGPU capabilities to unprivileged programs, without exposing any display manipulation access. This is the first step in an effort to decouple the kernel's interfaces for GPUs and display controllers from the obsolete notion of a graphics card.[7] Unprivileged off-screen rendering is presumed by both the Wayland and Mir display protocols: only the compositor is entitled to send its output to a display, and rendering on behalf of client programs is outside the scope of these protocols.

Universal plane

Patches for universal plane were submitted by Intel's Matthew D. Roper in May 2014. The idea behind universal plane is to expose all types of hardware planes to user space via one consistent kernel-user space API.[32] Universal plane brings framebuffers (primary planes), overlays (secondary planes) and cursors (cursor planes) together under the same API. Type-specific ioctls are replaced by common ioctls shared by all plane types.[22]

Universal plane prepares the way for Atomic mode setting and nuclear pageflip.

Hardware support

The Linux DRM subsystem includes free and open source drivers to support hardware from the three main manufacturers of GPUs for desktop computers (AMD, NVIDIA and Intel), as well as from a growing number of mobile GPU and System on a chip (SoC) integrators. The quality of each driver varies widely, depending on the degree of cooperation by the manufacturer and other factors.

| Driver | Since kernel | Supported hardware | Status/Notes |
|---|---|---|---|
| radeon | 2.4.1 | AMD (formerly ATi) Radeon GPU series, including R100, R200, R300, R400, Radeon X1000, HD 2000, HD 4000, HD 5000 ("Evergreen"), HD 6000 ("Northern Islands"), HD 7000/HD 8000 ("Southern Islands") and Rx 200 series | |
| i915 | 2.6.9 | Intel GMA 830M, 845G, 852GM, 855GM, 865G, 915G, 945G, 965G, G35, G41, G43, G45 chipsets. Intel HD and Iris Graphics HD Graphics 2000/3000/2500/4000/4200/4400/4600/P4600/P4700/5000, Iris Graphics 5100, Iris Pro Graphics 5200 integrated GPUs. | |
| nouveau | 2.6.33[34] | NVIDIA Tesla, Fermi, Kepler, Maxwell based GeForce GPUs, Tegra K1 SoC | |
| exynos | 3.2 | Samsung ARM-based Exynos SoCs | |
| vmwgfx | 3.2 (from staging) | Virtual GPU for the VMware SVGA2 | virtual driver |
| gma500 | 3.3 (from staging) | Intel GMA 500 and other Imagination Technologies (PowerVR) based graphics GPUs | experimental 2D KMS-only driver |
| ast | 3.5 | ASpeed Technologies 2000 series | experimental |
| shmobile | 3.7 | Renesas SH Mobile | |
| tegra | 3.8 | Nvidia Tegra20, Tegra30 SoCs | |
| omapdrm | 3.9 | Texas Instruments OMAP5 SoCs | |
| msm | 3.12[35][36] | Qualcomm's Adreno A2xx/A3xx/A4xx GPU families (Snapdragon SOCs)[37] | |
| armada | 3.13[38] | Marvell Armada 510 SoCs | |
| bochs | 3.14 | Virtual VGA cards using the Bochs dispi vga interface (such as QEMU stdvga) | virtual driver |
| sti | 3.17 | STMicroelectronics SoC stiH41x series | |
| imx | 3.19[39][40] (from staging) | Freescale i.MX SoCs | |
| rockchip | 3.19[41] | Rockchip SoC-based GPUs | KMS-only |
| amdgpu[33] | 4.2[42][43] | AMD GCN 1.2 ("Volcanic Islands") microarchitecture GPUs, including Radeon R9 285 ("Tonga") and Radeon Rx 300 series ("Fiji"),[44] as well as "Carrizo" integrated APUs | |
| virtio | 4.2 | virtual GPU driver for QEMU based virtual machine managers (like KVM or Xen) | virtual driver |
| vc4 | 4.4[45][46] | Raspberry Pi's Broadcom BCM2835 and BCM2836 SoCs (VideoCore IV GPU) | KMS-only driver[47] |

A number of drivers for old, obsolete hardware are listed in the next table for historical purposes. Some of them remain in the kernel code, while others have already been removed.

| Driver | Since kernel | Supported hardware | Status/Notes |
|---|---|---|---|
| gamma | 2.3.18 | 3Dlabs GLINT GMX 2000 | Removed since 2.6.14[48] |
| ffb | 2.4 | Creator/Creator3D (used by Sun Microsystems Ultra workstations) | Removed since 2.6.21[49] |
| tdfx | 2.4 | 3dfx Banshee/Voodoo3+ | |
| mga | 2.4 | Matrox G200/G400/G450 | |
| r128 | 2.4 | ATI Rage 128 | |
| i810 | 2.4 | Intel i810 | |
| sis | 2.4.17 | SiS 300/630/540 | |
| i830 | 2.4.20 | Intel 830M/845G/852GM/855GM/865G | Removed since 2.6.39[50] (replaced by i915 driver) |
| via | 2.6.13[51] | VIA Unichrome / Unichrome Pro | |
| savage | 2.6.14[52] | S3 Graphics Savage 3D/MX/IX/4/SuperSavage/Pro/Twister | |

Development

The Direct Rendering Manager is developed within the Linux kernel, and its source code resides in the /drivers/gpu/drm directory of the Linux source code. The subsystem maintainer is Dave Airlie, with other maintainers taking care of specific drivers.[53] As usual in Linux kernel development, DRM submaintainers and contributors send their patches with new features and bug fixes to the main DRM maintainer, who integrates them into his own Linux repository. The DRM maintainer in turn submits all of the patches that are ready to be mainlined to Linus Torvalds whenever a new Linux version is going to be released. Torvalds, as top maintainer of the whole kernel, has the final say on whether a patch is suitable for inclusion in the kernel.

For historical reasons, the source code of the libdrm library is maintained under the umbrella of the Mesa project.[54]

History

In 1999, while developing DRI for XFree86, Precision Insight created the first version of DRM for the 3dfx video cards, as a Linux kernel patch included within the Mesa source code.[55] Later that year, the DRM code was mainlined in Linux kernel 2.3.18 under the /drivers/char/drm/ directory for character devices.[56] During the following years the number of supported video cards grew. When Linux 2.4.0 was released in January 2001 there was already support for 3Dlabs GLINT GMX 2000, Intel i810, Matrox G200/G400 and ATI Rage 128, in addition to 3dfx Voodoo3 cards,[57] and that list expanded during the 2.4.x series, with drivers for ATI Radeon cards, some SiS video cards and Intel 830M and subsequent integrated GPUs.

The split of DRM into two components, DRM core and DRM driver, known as the DRM core/personality split, was done during the second half of 2004[58] and merged into kernel version 2.6.11.[59] This split allowed multiple DRM drivers for multiple devices to work simultaneously, opening the way to multi-GPU support.

The increasing complexity of video memory management led to several approaches to solving this issue. The first attempt was the Translation Table Maps (TTM) memory manager, developed by Thomas Hellstrom (Tungsten Graphics) in collaboration with Eric Anholt (Intel) and Dave Airlie (Red Hat).[4] TTM was proposed for inclusion into mainline kernel 2.6.25 in November 2007,[4] and again in May 2008, but was dropped in favor of a new approach called Graphics Execution Manager (GEM).[60] GEM was first developed by Keith Packard and Eric Anholt from Intel as a simpler solution for memory management for their i915 driver.[5] Intel's GEM also provides execution flow control for the i915 and later GPUs, but no other driver has attempted to use the whole GEM API beyond the ioctls specific to memory management.

GEM was well received and merged into the Linux kernel version 2.6.28.[61]

KMS was finally merged into the Linux kernel version 2.6.29.[62][19]

Recent developments

Render nodes

In 2013, as part of GSoC, David Herrmann developed the multiple render nodes feature.[63] His code was added to the Linux kernel version 3.12 as an experimental feature[64][65][66][67][68] and enabled by default since Linux 3.17.[69]

Adoption

The Direct Rendering Manager kernel subsystem was initially developed to be used with the new Direct Rendering Infrastructure of the XFree86 4.0 display server, later inherited by its successor, the X.Org Server. Therefore, the main users of DRM were DRI clients that link to the hardware-accelerated OpenGL implementation that lives in the Mesa 3D library, as well as the X Server itself. Nowadays DRM is also used by several Wayland compositors, including the Weston reference compositor. kmscon is a virtual console implementation that runs in user space using DRM's KMS facilities.[70]

Version 358.09 (beta) of the proprietary Nvidia GeForce driver received support for the DRM mode-setting interface implemented as a new kernel blob called nvidia-modeset.ko. This new driver component works in conjunction with the nvidia.ko kernel module to program the display engine (i.e. display controller) of the GPU.[71]

See also

- Free and open-source graphics device driver

- Mesa 3D

- Graphics address remapping table (GART)

- Vblank & V-sync

References

- ↑ "Linux kernel/drivers/gpu/drm/README.drm". kernel.org. Retrieved 2014-02-26.

- 1 2 Uytterhoeven, Geert. "The Frame Buffer Device". Kernel.org. Retrieved 28 January 2015.

- 1 2 3 White, Thomas. "How DRI and DRM Work". Retrieved 22 July 2014.

- 1 2 3 4 Corbet, Jonathan (6 November 2007). "Memory management for graphics processors". LWN.net. Retrieved 23 July 2014.

- 1 2 3 4 5 6 Packard, Keith; Anholt, Eric (13 May 2008). "GEM - the Graphics Execution Manager". dri-devel mailing list. Retrieved 23 July 2014.

- 1 2 Kitching, Simon. "DRM and KMS kernel modules". Retrieved 23 July 2014.

- 1 2 Herrmann, David (1 September 2013). "Splitting DRM and KMS device nodes". Retrieved 23 July 2014.

- ↑ "libdrm README". Retrieved 23 July 2014.

- ↑ Airlie, Dave (4 September 2004). "New proposed DRM interface design". dri-devel (Mailing list).

- 1 2 Barnes, Jesse; Pinchart, Laurent; Vetter, Daniel. "Linux GPU Driver Developer's Guide - Memory management". Kernel.org. Retrieved 31 January 2015.

- ↑ Vetter, Daniel. "i915/GEM Crashcourse by Daniel Vetter". Intel Open Source Technology Center. Retrieved 31 January 2015.

GEM essentially deals with graphics buffer objects (which can contain textures, renderbuffers, shaders, or all kinds of other state objects and data used by the gpu)

- ↑ Vetter, Daniel (4 May 2011). "GEM Overview". Retrieved 13 February 2015.

- ↑ Perens, Martin; Ravier, Timothée. "DRI-next/DRM2: A walkthrough the Linux Graphics stack and its security" (PDF). Retrieved 13 February 2015.

- ↑ Packard, Keith (28 September 2012). "DRI-Next". Retrieved 13 February 2015.

GEM flink has lots of issues. The flink names are global, allowing anyone with access to the device to access the flink data contents.

- ↑ Herrmann, David. "DRM Security". The 2013 X.Org Developer's Conference (XDC2013) Proceedings. Retrieved 13 February 2015.

gem-flink doesn't provide any private namespaces to applications and servers. Instead, only one global namespace is provided per DRM node. Malicious authenticated applications can attack other clients via brute-force "name-guessing" of gem buffers

- ↑ Kerrisk, Michael (25 September 2012). "XDC2012: Graphics stack security". LWN.net. Retrieved 25 November 2015.

- ↑ "drm-memory man page". Retrieved 29 January 2015.

Many modern high-end GPUs come with their own memory managers. They even include several different caches that need to be synchronized during access. [...] . Therefore, memory management on GPUs is highly driver- and hardware-dependent.

- ↑ "Intel Graphics Media Accelerator Developer's Guide". Intel Corporation. Retrieved 24 November 2015.

- 1 2 3 4 "Linux 2.6.29 - Kernel Modesetting". Linux Kernel Newbies. Retrieved 19 November 2015.

- ↑ "VGA Hardware". OSDev.org. Retrieved 23 November 2015.

- ↑ Rathmann, B. (15 February 2008). "The state of Nouveau, part I". LWN.net. Retrieved 23 November 2015.

Graphics cards are programmed in numerous ways, but most initialization and mode setting is done via memory-mapped IO. This is just a set of registers accessible to the CPU via its standard memory address space. The registers in this address space are split up into ranges dealing with various features of the graphics card such as mode setup, output control, or clock configuration.

- 1 2 Paalanen, Pekka (5 June 2014). "From pre-history to beyond the global thermonuclear war". Retrieved 29 July 2014.

- ↑ "drm-kms manpage". Ubuntu manuals. Retrieved 19 November 2015.

- ↑ Corbet, Jonathan (13 January 2010). "The end of user-space mode setting?". LWN.net. Retrieved 20 November 2015.

- 1 2 "Mode Setting Design Discussion". X.Org Wiki. Retrieved 19 November 2015.

- 1 2 Corbet, Jonathan (20 July 2004). "Kernel Summit: Video Drivers". LWN.net. Retrieved 23 November 2015.

- ↑ "Fedora - Features/KernelModeSetting". Fedora Project. Retrieved 20 November 2015.

- ↑ Barnes, Jesse (17 May 2007). "[RFC] enhancing the kernel's graphics subsystem". linux-kernel (Mailing list).

- ↑ Corbet, Jonathan (22 January 2007). "LCA: Updates on the X Window System". LWN.net. Retrieved 23 November 2015.

Looking further ahead, the X developers would like to move video card mode setting into the kernel.

- ↑ Packard, Keith (16 September 2007). "kernel-mode-drivers". Retrieved 23 November 2015.

- ↑ "DrmModesetting - Enhancing kernel graphics". DRI Wiki. Retrieved 23 November 2015.

- ↑ Roper, Matt (7 March 2014). "[RFCv2 00/10] Universal plane support". dri-devel (Mailing list).

- 1 2 Deucher, Alex (20 April 2015). "Initial amdgpu driver release". dri-devel (Mailing list).

- ↑ Skeggs, Ben. "drm/nouveau: Add DRM driver for NVIDIA GPUs". Retrieved 27 January 2015.

- ↑ "Merge the MSM driver from Rob Clark". freedesktop.org. 2013-08-28. Retrieved 2014-06-25.

- ↑ Larabel, Michael. "Snapdragon DRM/KMS Driver Merged For Linux 3.12". Phoronix. Retrieved 26 January 2015.

- ↑ Edge, Jake. "An update on the freedreno graphics driver". LWN.net. Retrieved 23 April 2015.

- ↑ King, Russell (18 October 2013). "[GIT PULL] Armada DRM support". dri-devel (Mailing list).

- ↑ Corbet, Jonathan. "3.19 Merge window part 2". LWN.net. Retrieved 9 February 2015.

- ↑ Zabel, Philipp. "drm: imx: Move imx-drm driver out of staging". Retrieved 9 February 2015.

- ↑ Corbet, Jonathan. "3.19 Merge window part 2". LWN.net. Retrieved 9 February 2015.

- ↑ Larabel, Michael. "Linux 4.2 DRM Updates: Lots Of AMD Attention, No Nouveau Driver Changes". Phoronix. Retrieved 31 August 2015.

- ↑ Corbet, Jonathan. "4.2 Merge window part 2". LWN.net. Retrieved 31 August 2015.

- ↑ Deucher, Alex (3 August 2015). "[PATCH 00/11] Add Fiji Support". dri-devel (Mailing list).

- ↑ Corbet, Jonathan (11 November 2015). "4.4 Merge window, part 1". LWN.net. Retrieved 11 January 2016.

- ↑ Larabel, Michael. "A Look At The New Features Of The Linux 4.4 Kernel". Phoronix. Retrieved 11 January 2016.

- ↑ "drm/vc4: Add KMS support for Raspberry Pi.".

- ↑ Airlie, Dave. "drm: remove the gamma driver". Retrieved 27 January 2015.

- ↑ Miller, David S. "[DRM]: Delete sparc64 FFB driver code that never gets built". Retrieved 27 January 2015.

- ↑ Bergmann, Arnd. "drm: remove i830 driver". Retrieved 27 January 2015.

- ↑ Airlie, Dave. "drm: Add via unichrome support". Retrieved 27 January 2015.

- ↑ Airlie, Dave. "drm: add savage driver". Retrieved 27 January 2015.

- ↑ "List of maintainers of the linux kernel". Kernel.org. Retrieved 14 July 2014.

- ↑ "libdrm git repository". Retrieved 23 July 2014.

- ↑ "First DRI release of 3dfx driver.". Mesa 3D. Retrieved 15 July 2014.

- ↑ "Import 2.3.18pre1". The History of Linux in GIT Repository Format 1992-2010 (2010). Retrieved 15 July 2014.

- ↑ Torvalds, Linus. "Linux 2.4.0 source code". Kernel.org. Retrieved 29 July 2014.

- ↑ Airlie, Dave (30 December 2004). "[bk pull] drm core/personality split". linux-kernel (Mailing list).

- ↑ Torvalds, Linus (11 January 2005). "Linux 2.6.11-rc1". linux-kernel (Mailing list).

- ↑ Corbet, Jonathan (28 May 2008). "GEM v. TTM". LWN.net. Retrieved 10 February 2015.

- ↑ "Linux 2.6.28 - The GEM Memory Manager for GPU memory". Linux Kernel Newbies. Retrieved 23 July 2014.

- ↑ "DRM: add mode setting support".

- ↑ Herrmann, David. "DRM Render- and Modeset-Nodes". Retrieved 21 July 2014.

- ↑ Corbet, Jonathan. "3.12 merge window, part 2". LWN.net. Retrieved 21 July 2014.

- ↑ "drm: implement experimental render nodes".

- ↑ "drm/i915: Support render nodes".

- ↑ "drm/radeon: Support render nodes".

- ↑ "drm/nouveau: Support render nodes".

- ↑ Corbet, Jonathan. "3.17 merge window, part 2". LWN.net. Retrieved 7 October 2014.

- ↑ Herrmann, David (10 December 2012). "KMSCON Introduction". Retrieved 22 November 2015.

- ↑ "Linux, Solaris, and FreeBSD driver 358.09 (beta)".

External links

- DRM home page

- The Direct Rendering Manager: Kernel Support for the Direct Rendering Infrastructure

- Linux GPU Driver Developer's Guide (formerly Linux DRM Developer's Guide)

- David Herrmann on Splitting DRM and KMS device nodes

- Embedded Linux Conference 2013 - Anatomy of an Embedded KMS driver on YouTube
