Gesture recognition

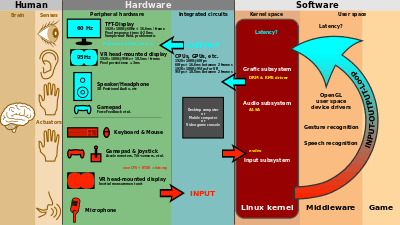

Gesture recognition is a topic in computer science and language technology with the goal of interpreting human gestures via mathematical algorithms. Gestures can originate from any bodily motion or state, but they commonly originate from the face or hands. Current focuses in the field include emotion recognition from the face and hand gesture recognition. Many approaches use cameras and computer vision algorithms to interpret sign language. However, the identification and recognition of posture, gait, proxemics, and human behaviors are also the subject of gesture recognition techniques.[1] Gesture recognition can be seen as a way for computers to begin to understand human body language, building a richer bridge between machines and humans than primitive text user interfaces or even graphical user interfaces (GUIs), which still limit the majority of input to keyboard and mouse.

Gesture recognition enables humans to communicate with machines (human-machine interaction, HMI) and interact naturally without any mechanical devices. Using gesture recognition, it is possible to point a finger at the computer screen so that the cursor moves accordingly. This could potentially make conventional input devices such as mice, keyboards, and even touch screens redundant.

Gesture recognition can be conducted with techniques from computer vision and image processing.

The literature includes ongoing work in the computer vision field on capturing gestures or more general human pose and movements by cameras connected to a computer.[2][3][4][5]

Gesture recognition and pen computing: Pen computing reduces the hardware impact of a system and also increases the range of physical-world objects usable for control beyond traditional digital objects like keyboards and mice. Such implements can enable a new range of hardware that does not require monitors. This idea may lead to the creation of holographic displays. The term gesture recognition has also been used more narrowly to refer to non-text-input handwriting symbols, such as inking on a graphics tablet, multi-touch gestures, and mouse gesture recognition, that is, computer interaction through the drawing of symbols with a pointing device cursor.[6][7][8] (See Pen computing.)

Gesture types

In computer interfaces, two types of gestures are distinguished:[9] online gestures, which can be regarded as direct manipulations such as scaling and rotating, and offline gestures, which are usually processed after the interaction is finished (e.g. a circle is drawn to activate a context menu).

- Offline gestures: Gestures that are processed after the user's interaction with the object. An example is the gesture to activate a menu.

- Online gestures: Direct manipulation gestures. They are used to scale or rotate a tangible object.
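The difference between the two types is mainly one of timing, which a minimal sketch can illustrate. The class names, the pinch-ratio input and the crude "closed stroke" rule below are assumptions made for illustration; they are not taken from the cited taxonomy.

```python
from dataclasses import dataclass, field
from typing import List, Tuple

Point = Tuple[float, float]


@dataclass
class OnlineScaleGesture:
    """Online gesture: the object is updated on every input sample."""
    scale: float = 1.0

    def on_sample(self, pinch_ratio: float) -> float:
        # Applied immediately, while the interaction is still running.
        self.scale *= pinch_ratio
        return self.scale


@dataclass
class OfflineStrokeGesture:
    """Offline gesture: samples are buffered and interpreted at the end."""
    points: List[Point] = field(default_factory=list)

    def on_sample(self, point: Point) -> None:
        self.points.append(point)  # no interpretation yet

    def on_finish(self) -> str:
        # Interpreted once, after the interaction is over.
        # Assumed rule: a stroke ending near its start counts as a circle.
        if len(self.points) < 4:
            return "none"
        (x0, y0), (x1, y1) = self.points[0], self.points[-1]
        closed = abs(x0 - x1) < 0.1 and abs(y0 - y1) < 0.1
        return "open_context_menu" if closed else "none"
```

In this sketch the online handler returns a new scale for each sample, whereas the offline handler produces a result only when `on_finish` is called.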

Input devices

The ability to track a person's movements and determine what gestures they may be performing can be achieved through various tools. Although a large amount of research has been done on image- and video-based gesture recognition, the tools and environments used vary between implementations.

- Wired gloves. These can provide input to the computer about the position and rotation of the hands using magnetic or inertial tracking devices. Furthermore, some gloves can detect finger bending with a high degree of accuracy (5-10 degrees), or even provide haptic feedback to the user, which is a simulation of the sense of touch. The first commercially available hand-tracking glove-type device was the DataGlove,[10] which could detect hand position, movement and finger bending. It uses fiber optic cables running down the back of the hand. Light pulses are created, and when the fingers are bent, light leaks through small cracks and the loss is registered, giving an approximation of the hand pose.

- Depth-aware cameras. Using specialized cameras such as structured light or time-of-flight cameras, one can generate a depth map of what is being seen through the camera at short range, and use this data to approximate a 3D representation of what is being seen. These can be effective for detecting hand gestures due to their short-range capabilities.[11]

- Stereo cameras. Using two cameras whose relations to one another are known, a 3D representation can be approximated from the output of the cameras. To get the cameras' relations, one can use a positioning reference such as a lexian-stripe or infrared emitters.[12] In combination with direct motion measurement (6D-Vision), gestures can be detected directly.

- Controller-based gestures. These controllers act as an extension of the body so that when gestures are performed, some of their motion can be conveniently captured by software. Mouse gestures are one such example, where the motion of the mouse is correlated to a symbol being drawn by a person's hand. The Wii Remote and the Myo armband are others; they can study changes in acceleration over time to represent gestures.[13][14][15] Devices such as the LG Electronics Magic Wand, the Loop and the Scoop use Hillcrest Labs' Freespace technology, which uses MEMS accelerometers, gyroscopes and other sensors to translate gestures into cursor movement. The software also compensates for human tremor and inadvertent movement.[16][17][18] AudioCubes are another example. The sensors of these smart light-emitting cubes can be used to sense hands and fingers as well as other objects nearby, and can be used to process data. Most applications are in music and sound synthesis,[19] but they can be applied to other fields.

- Single camera. A standard 2D camera can be used for gesture recognition where the resources or environment would not be convenient for other forms of image-based recognition. It was earlier thought that a single camera may not be as effective as stereo or depth-aware cameras, but some companies are challenging this view. Software-based gesture recognition technology using a standard 2D camera, which can robustly detect hand gestures and hand signs and track hands or fingertips at high accuracy, has already been embedded in Lenovo’s Yoga ultrabooks, Pantech’s Vega LTE smartphones, and Hisense’s Smart TV models, among other devices.

- Radar. See Project Soli, revealed at Google I/O 2015 (starting at 13:30 in the YouTube video "Google I/O 2015 – A little badass. Beautiful. Tech and human. Work and love. ATAP."), and the short introduction video "Welcome to Project Soli" (YouTube).
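For the stereo camera case in the list above, the way two views with a known relation yield depth can be sketched for the simplest textbook setup. The sketch assumes two identical, rectified pinhole cameras mounted side by side, where depth follows from similar triangles as Z = f · B / d. The function and parameter names are illustrative, and real systems also need calibration and pixel matching, which are not shown.

```python
def depth_from_disparity(focal_px: float, baseline_m: float,
                         disparity_px: float) -> float:
    """Depth in metres of a point seen by two rectified, parallel cameras.

    focal_px     -- focal length expressed in pixels (assumed equal for both)
    baseline_m   -- distance between the two camera centres, in metres
    disparity_px -- horizontal shift of the point between the two images
    """
    if disparity_px <= 0:
        # Zero disparity corresponds to a point at infinity; negative
        # values indicate a matching error.
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px
```

Because depth is inversely proportional to disparity, nearby objects such as a hand in front of the cameras produce large disparities and are resolved more finely than distant ones.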

Algorithms

Depending on the type of input data, a gesture can be interpreted in different ways. However, most techniques rely on key points represented in a 3D coordinate system. Based on the relative motion of these, the gesture can be detected with high accuracy, depending on the quality of the input and the algorithm’s approach.
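As a rough illustration of detecting a gesture from the relative motion of tracked 3D points, the following sketch flags a pinch when the distance between two fingertips shrinks markedly over a short track. The function name, the input layout and the 0.5 threshold are assumptions chosen for the example; `math.dist` requires Python 3.8 or later.

```python
import math
from typing import Sequence, Tuple

Point3D = Tuple[float, float, float]


def detect_pinch(thumb_track: Sequence[Point3D],
                 index_track: Sequence[Point3D],
                 close_ratio: float = 0.5) -> bool:
    """Return True if the thumb-index distance falls below close_ratio
    times its initial value between the first and last samples."""
    if not thumb_track or len(thumb_track) != len(index_track):
        raise ValueError("tracks must be non-empty and of equal length")
    start = math.dist(thumb_track[0], index_track[0])
    end = math.dist(thumb_track[-1], index_track[-1])
    if start == 0:
        return False  # fingers already touching; no relative motion to judge
    return end / start < close_ratio
```

Using a ratio rather than an absolute distance makes the check less sensitive to hand size and to the distance from the sensor, at the cost of ignoring what happens between the first and last samples.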

In order to interpret movements of the body, one has to classify them according to common properties and the message the movements may express. For example, in sign language each gesture represents a word or phrase. A taxonomy that seems well suited to human-computer interaction was proposed by Quek in "Toward a Vision-Based Hand Gesture Interface".[20] He presents several interactive gesture systems in order to capture the whole space of gestures: 1. Manipulative; 2. Semaphoric; 3. Conversational.

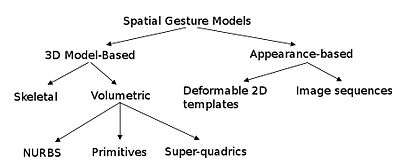

Some literature differentiates two approaches to gesture recognition: 3D model-based and appearance-based.[21] The former makes use of 3D information about key elements of the body parts in order to obtain several important parameters, like palm position or joint angles. Appearance-based systems, on the other hand, use images or videos for direct interpretation.

3D model-based algorithms

The 3D model approach can use volumetric or skeletal models, or even a combination of the two. Volumetric approaches have been heavily used in the computer animation industry and for computer vision purposes. The models are generally built from complicated 3D surfaces, like NURBS or polygon meshes.

The drawback of this method is that it is very computationally intensive, and systems for live analysis are still to be developed. For the moment, a more interesting approach is to map simple primitive objects to the person’s most important body parts (for example, cylinders for the arms and neck, a sphere for the head) and analyse the way these interact with each other. Furthermore, some abstract structures like super-quadrics and generalised cylinders may be even more suitable for approximating the body parts. The appeal of this approach is that the parameters for these objects are quite simple. To better model the relations between them, constraints and hierarchies between the objects are used.
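One way to picture such a primitive-based model is as a small tree of parts, each with a few shape parameters and a constraint relative to its parent. The sketch below is illustrative only: the `Primitive` class, the dimensions and the angle limits are assumed values, not figures from the literature.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class Primitive:
    """One body part approximated by a simple solid (illustrative names)."""
    name: str
    shape: str                      # "cylinder" or "sphere"
    radius: float                   # metres
    length: float = 0.0             # metres; unused for spheres
    # Constraint: allowed bend relative to the parent part, in degrees.
    angle_limits: Tuple[float, float] = (-180.0, 180.0)
    children: List["Primitive"] = field(default_factory=list)

    def add(self, child: "Primitive") -> "Primitive":
        """Attach a child part, forming the hierarchy, and return it."""
        self.children.append(child)
        return child

    def parameter_count(self) -> int:
        """Shape parameters in this subtree: 1 per sphere, 2 per cylinder."""
        own = 1 if self.shape == "sphere" else 2
        return own + sum(c.parameter_count() for c in self.children)

    def clamp(self, angle_deg: float) -> float:
        """Project a proposed bend angle back into the allowed range."""
        low, high = self.angle_limits
        return max(low, min(high, angle_deg))


def build_upper_body() -> Primitive:
    """Assemble a toy upper body; all dimensions are assumed values."""
    torso = Primitive("torso", "cylinder", radius=0.15, length=0.6)
    neck = torso.add(Primitive("neck", "cylinder", 0.05, 0.1, (-45.0, 45.0)))
    neck.add(Primitive("head", "sphere", 0.1))
    upper_arm = torso.add(Primitive("upper_arm", "cylinder", 0.05, 0.3))
    upper_arm.add(Primitive("forearm", "cylinder", 0.04, 0.25, (0.0, 150.0)))
    return torso
```

Even this five-part model needs only a handful of shape parameters, which is the simplicity the paragraph above refers to; the hierarchy and the angle limits are what keep an estimated pose anatomically plausible.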

Skeletal-based algorithms

Instead of intensively processing the 3D models and dealing with a lot of parameters, one can use a simplified version of joint angle parameters along with segment lengths. This is known as a skeletal representation of the body, where a virtual skeleton of the person is computed and parts of the body are mapped to certain segments. The analysis is done using the position and orientation of these segments and the relations between them (for example, the angle between the joints and the relative position or orientation).
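A joint angle of the kind mentioned above can be computed from three tracked 3D points using the dot product of the two adjoining segments. This is a minimal sketch; the function name and the shoulder-elbow-wrist example are assumptions for illustration.

```python
import math
from typing import Sequence


def joint_angle(a: Sequence[float], b: Sequence[float],
                c: Sequence[float]) -> float:
    """Angle in degrees at joint b between segments b->a and b->c."""
    v1 = [a[i] - b[i] for i in range(3)]
    v2 = [c[i] - b[i] for i in range(3)]
    dot = sum(x * y for x, y in zip(v1, v2))
    n1 = math.sqrt(sum(x * x for x in v1))
    n2 = math.sqrt(sum(x * x for x in v2))
    if n1 == 0 or n2 == 0:
        raise ValueError("segments must have non-zero length")
    # Clamp to guard against rounding pushing the cosine outside [-1, 1].
    cos_theta = max(-1.0, min(1.0, dot / (n1 * n2)))
    return math.degrees(math.acos(cos_theta))
```

For example, with a shoulder at (0, 0, 0), an elbow at (1, 0, 0) and a wrist at (1, 1, 0), the elbow angle is a right angle, while a wrist at (2, 0, 0) gives a fully extended arm.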

Advantages of using skeletal models:

- Algorithms are faster because only key parameters are analyzed.

- Pattern matching against a template database is possible.

- Using key points allows the detection program to focus on the significant parts of the body
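The template-matching advantage listed above can be sketched as a nearest-neighbour lookup over joint-angle vectors. The template names, the angle values and the 30-degree rejection threshold are hypothetical, and Euclidean distance is only one of several reasonable choices of metric.

```python
import math
from typing import Dict, Sequence


def match_pose(angles: Sequence[float],
               templates: Dict[str, Sequence[float]],
               max_distance: float = 30.0) -> str:
    """Return the name of the closest stored pose, or "unknown" if no
    template lies within max_distance (Euclidean, in degrees)."""
    best_name, best_dist = "unknown", float("inf")
    for name, reference in templates.items():
        dist = math.dist(angles, reference)
        if dist < best_dist:
            best_name, best_dist = name, dist
    return best_name if best_dist <= max_distance else "unknown"


# Hypothetical database: (elbow angle, wrist angle) in degrees.
POSE_TEMPLATES = {
    "arm_straight": (180.0, 180.0),
    "arm_bent": (90.0, 170.0),
}
```

Because each pose is reduced to a short vector of key parameters, the comparison cost grows with the number of templates rather than with image size.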

Appearance-based models

These models do not use a spatial representation of the body, because they derive the parameters directly from the images or videos using a template database. Some are based on deformable 2D templates of parts of the human body, particularly the hands. Deformable templates are sets of points on the outline of an object, used as interpolation nodes for approximating the object’s outline. One of the simplest interpolation functions is linear, which produces an average shape from point sets, point variability parameters and external deformators. These template-based models are mostly used for hand tracking, but could also be of use for simple gesture classification.
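The two ingredients named above, an average shape computed from point sets and a linear interpolation between outline nodes, can be sketched as follows. The sketch assumes that all example outlines have the same number of points, listed in corresponding order and already aligned in a common coordinate frame; variability parameters and deformations are not modelled.

```python
from typing import List, Sequence, Tuple

Point2D = Tuple[float, float]


def mean_shape(shapes: Sequence[Sequence[Point2D]]) -> List[Point2D]:
    """Average corresponding outline points over several example shapes."""
    n = len(shapes[0])
    if any(len(s) != n for s in shapes):
        raise ValueError("all shapes need the same number of points")
    k = len(shapes)
    return [(sum(s[i][0] for s in shapes) / k,
             sum(s[i][1] for s in shapes) / k) for i in range(n)]


def interpolate_outline(nodes: Sequence[Point2D],
                        samples_per_edge: int = 4) -> List[Point2D]:
    """Approximate a closed outline by linear interpolation between nodes."""
    outline = []
    for i, (x0, y0) in enumerate(nodes):
        x1, y1 = nodes[(i + 1) % len(nodes)]  # wrap around to close the shape
        for s in range(samples_per_edge):
            t = s / samples_per_edge
            outline.append((x0 + t * (x1 - x0), y0 + t * (y1 - y0)))
    return outline
```

The mean shape serves as the template, and the interpolated outline is what would be compared against edges found in an image.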

A second approach to gesture detection using appearance-based models uses image sequences as gesture templates. Parameters for this method are either the images themselves or certain features derived from them. Most of the time, only one (monoscopic) or two (stereoscopic) views are used.
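Comparing an observed sequence against a stored sequence template requires some tolerance for differences in speed. The sketch below uses dynamic time warping (DTW) over per-frame feature vectors for this purpose; DTW is a common choice for sequence comparison but is named here as an illustrative assumption, not as the method used in the cited literature.

```python
import math
from typing import Sequence


def dtw_distance(seq_a: Sequence[Sequence[float]],
                 seq_b: Sequence[Sequence[float]]) -> float:
    """Dynamic time warping cost between two sequences of feature vectors."""
    n, m = len(seq_a), len(seq_b)
    inf = float("inf")
    # cost[i][j] is the best alignment cost of the first i and j frames.
    cost = [[inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            d = math.dist(seq_a[i - 1], seq_b[j - 1])
            cost[i][j] = d + min(cost[i - 1][j],      # frame repeated in b
                                 cost[i][j - 1],      # frame repeated in a
                                 cost[i - 1][j - 1])  # frames matched
    return cost[n][m]
```

A gesture performed more slowly than the template, so that some frames repeat, still aligns at low cost, whereas a different motion accumulates per-frame distances along every possible alignment.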

Challenges

There are many challenges associated with the accuracy and usefulness of gesture recognition software. For image-based gesture recognition there are limitations on the equipment used and image noise. Images or video may not be under consistent lighting, or in the same location. Items in the background or distinct features of the users may make recognition more difficult.

The variety of implementations for image-based gesture recognition may also limit the viability of the technology for general use. For example, an algorithm calibrated for one camera may not work for a different camera. The amount of background noise also causes tracking and recognition difficulties, especially when occlusions (partial and full) occur. Furthermore, the distance from the camera, and the camera's resolution and quality, also cause variations in recognition accuracy.

In order to capture human gestures by visual sensors, robust computer vision methods are also required, for example for hand tracking and hand posture recognition[22][23][24][25][26][27][28][29][30] or for capturing movements of the head, facial expressions or gaze direction.

"Gorilla arm"

"Gorilla arm" was a side-effect of vertically oriented touch-screen or light-pen use. In periods of prolonged use, users' arms began to feel fatigue and/or discomfort. This effect contributed to the decline of touch-screen input despite initial popularity in the 1980s.[31][32]

In order to measure arm fatigue and the gorilla arm side effect, researchers developed a technique called Consumed Endurance.[33][34]

Market trend

The market is changing rapidly due to evolving technology, and more and more OEMs are moving towards adopting gesture recognition technology. According to a report published by Markets and Markets, the gesture recognition market is estimated to grow at a healthy CAGR from 2013 to 2018 and is expected to cross $15.02 billion by the end of these five years. Analysts forecast the global gesture recognition market to grow at a CAGR of 29.2 percent over the period 2013–2018.

By industry, consumer electronics applications currently contribute more than 99% of the global gesture recognition market. According to the published report, healthcare applications are expected to emerge as a significant market for gesture recognition technologies over the next five years. Automotive applications of gesture recognition are expected to be commercialized in 2015.[35]

References

- Matthias Rehm, Nikolaus Bee, Elisabeth André, Wave Like an Egyptian – Accelerometer Based Gesture Recognition for Culture Specific Interactions, British Computer Society, 2007

- Pavlovic, V., Sharma, R. & Huang, T. (1997), "Visual interpretation of hand gestures for human-computer interaction: A review", IEEE Trans. Pattern Analysis and Machine Intelligence, July 1997. Vol. 19(7), pp. 677-695.

- R. Cipolla and A. Pentland, Computer Vision for Human-Machine Interaction, Cambridge University Press, 1998, ISBN 978-0-521-62253-0

- Ying Wu and Thomas S. Huang, "Vision-Based Gesture Recognition: A Review", In: Gesture-Based Communication in Human-Computer Interaction, Volume 1739 of Springer Lecture Notes in Computer Science, pages 103-115, 1999, ISBN 978-3-540-66935-7, doi:10.1007/3-540-46616-9

- Alejandro Jaimes and Nicu Sebe, Multimodal human–computer interaction: A survey, Computer Vision and Image Understanding Volume 108, Issues 1-2, October–November 2007, Pages 116-134 Special Issue on Vision for Human-Computer Interaction, doi:10.1016/j.cviu.2006.10.019

- Dopertchouk, Oleg; "Recognition of Handwriting Gestures", gamedev.net, January 9, 2004

- Chen, Shijie; "Gesture Recognition Techniques in Handwriting Recognition Application", Frontiers in Handwriting Recognition p 142-147 November 2010

- Balaji, R; Deepu, V; Madhvanath, Sriganesh; Prabhakaran, Jayasree "Handwritten Gesture Recognition for Gesture Keyboard", Hewlett-Packard Laboratories

- Dietrich Kammer, Mandy Keck, Georg Freitag, Markus Wacker, Taxonomy and Overview of Multi-touch Frameworks: Architecture, Scope and Features

- Thomas G. Zimmerman, Jaron Lanier, Chuck Blanchard, Steve Bryson and Young Harvill. "A Hand Gesture Interface Device." http://portal.acm.org.

- Yang Liu, Yunde Jia, A Robust Hand Tracking and Gesture Recognition Method for Wearable Visual Interfaces and Its Applications, Proceedings of the Third International Conference on Image and Graphics (ICIG’04), 2004

- Kue-Bum Lee, Jung-Hyun Kim, Kwang-Seok Hong, An Implementation of Multi-Modal Game Interface Based on PDAs, Fifth International Conference on Software Engineering Research, Management and Applications, 2007

- Per Malmestig, Sofie Sundberg, SignWiiver – implementation of sign language technology

- Thomas Schlomer, Benjamin Poppinga, Niels Henze, Susanne Boll, Gesture Recognition with a Wii Controller, Proceedings of the 2nd international Conference on Tangible and Embedded interaction, 2008

- AiLive Inc., LiveMove White Paper, 2006

- Electronic Design September 8, 2011. William Wong. Natural User Interface Employs Sensor Integration.

- Cable & Satellite International September/October, 2011. Stephen Cousins. A view to a thrill.

- TechJournal South January 7, 2008. Hillcrest Labs rings up $25M D round.

- Percussa AudioCubes Blog October 4, 2012. Gestural Control in Sound Synthesis.

- Quek, F., "Toward a vision-based hand gesture interface" Proceedings of the Virtual Reality System Technology Conference, pp. 17-29, August 23–26, 1994, Singapore

- Vladimir I. Pavlovic, Rajeev Sharma, Thomas S. Huang, Visual Interpretation of Hand Gestures for Human-Computer Interaction; A Review, IEEE Transactions on Pattern Analysis and Machine Intelligence, 1997

- Ivan Laptev and Tony Lindeberg "Tracking of Multi-state Hand Models Using Particle Filtering and a Hierarchy of Multi-scale Image Features", Proceedings Scale-Space and Morphology in Computer Vision, Volume 2106 of Springer Lecture Notes in Computer Science, pages 63-74, Vancouver, BC, 1999. ISBN 978-3-540-42317-1, doi:10.1007/3-540-47778-0

- von Hardenberg, Christian; Bérard, François (2001). "Bare-hand human-computer interaction". Proceedings of the 2001 workshop on Perceptive user interfaces. ACM International Conference Proceeding Series. Orlando, Florida. pp. 1–8. CiteSeerX: 10.1.1.23.4541

- Lars Bretzner, Ivan Laptev, Tony Lindeberg "Hand gesture recognition using multi-scale colour features, hierarchical models and particle filtering", Proceedings of the Fifth IEEE International Conference on Automatic Face and Gesture Recognition, Washington, DC, USA, 21–21 May 2002, pages 423-428. ISBN 0-7695-1602-5, doi:10.1109/AFGR.2002.1004190

- Domitilla Del Vecchio, Richard M. Murray, Pietro Perona, "Decomposition of human motion into dynamics-based primitives with application to drawing tasks", Automatica Volume 39, Issue 12, December 2003, Pages 2085–2098, doi:10.1016/S0005-1098(03)00250-4.

- Thomas B. Moeslund and Lau Nørgaard, "A Brief Overview of Hand Gestures used in Wearable Human Computer Interfaces", Technical report: CVMT 03-02, ISSN 1601-3646, Laboratory of Computer Vision and Media Technology, Aalborg University, Denmark.

- M. Kolsch and M. Turk "Fast 2D Hand Tracking with Flocks of Features and Multi-Cue Integration", CVPRW '04. Proceedings Computer Vision and Pattern Recognition Workshop, May 27-June 2, 2004, doi:10.1109/CVPR.2004.71

- Xia Liu, K. Fujimura, "Hand gesture recognition using depth data", Proceedings of the Sixth IEEE International Conference on Automatic Face and Gesture Recognition, May 17–19, 2004, pages 529-534, ISBN 0-7695-2122-3, doi:10.1109/AFGR.2004.1301587.

- Stenger B, Thayananthan A, Torr PH, Cipolla R: "Model-based hand tracking using a hierarchical Bayesian filter", IEEE Transactions on Pattern Analysis and Machine Intelligence, 28(9):1372-84, Sep 2006.

- A Erol, G Bebis, M Nicolescu, RD Boyle, X Twombly, "Vision-based hand pose estimation: A review", Computer Vision and Image Understanding Volume 108, Issues 1-2, October–November 2007, Pages 52-73 Special Issue on Vision for Human-Computer Interaction, doi:10.1016/j.cviu.2006.10.012.

- Rupert Goodwins. "Windows 7? No arm in it". ZDNet.

- "gorilla arm". catb.org.

- Hincapié-Ramos, J.D., Guo, X., Moghadasian, P. and Irani, P. 2014. "Consumed Endurance: A Metric to Quantify Arm Fatigue of Mid-Air Interactions". In Proceedings of the 32nd annual ACM conference on Human factors in computing systems (CHI '14). ACM, New York, NY, USA, 1063–1072. DOI=10.1145/2556288.2557130

- Hincapié-Ramos, J.D., Guo, X., and Irani, P. 2014. "The Consumed Endurance Workbench: A Tool to Assess Arm Fatigue During Mid-Air Interactions". In Proceedings of the 2014 companion publication on Designing interactive systems (DIS Companion '14). ACM, New York, NY, USA, 109-112. DOI=10.1145/2598784.2602795

- http://www.hcltech.com/sites/default/files/gesture_recognition.pdf

External links

- Annotated bibliography of references to gesture and pen computing

- Notes on the History of Pen-based Computing (YouTube)

- The future, it is all a Gesture—Gesture interfaces and video gaming

- Ford's Gesturally Interactive Advert—Gestures used to interact with digital signage

- Kinetic Space—3D Gesture Recognition, Training & Analysis

- Look Ma No Trackpad—Gestures used to control Spotify & iTunes

- The Gesture Recognition Toolkit—An open-source toolkit for gesture recognition