Second law of thermodynamics

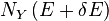

The second law of thermodynamics specifies the characteristic change in the entropy of a system undergoing a real process. The law accounts for the irreversibility of natural processes, and the asymmetry between future and past. For a system without exchange of matter with the surroundings, the change in system entropy exceeds the heat exchanged with the surroundings, divided by the temperature of the surroundings. In the idealized limiting case of a reversible process, the two quantities are equal, and the total entropy of system and surroundings remains unchanged. When heat exchange with the surroundings is prevented, the law states that in every real process the sum of the entropies of all participating bodies is increased.

While applicable to more general processes, the law is often analyzed for an event in which bodies initially in thermodynamic equilibrium are put into contact and allowed to come to a new equilibrium. This equilibration process involves the spread, dispersal, or dissipation[1] of matter or energy and results in an increase of entropy.

The second law is an empirical finding that has been accepted as an axiom of thermodynamic theory. Statistical thermodynamics, classical or quantum, explains the microscopic origin of the law.

The second law has been expressed in many ways. Its first formulation is credited to the French scientist Sadi Carnot in 1824 (see Timeline of thermodynamics). Carnot showed that there is an upper limit to the efficiency of conversion of heat to work in a cyclic heat engine operating between two given temperatures.

Introduction

Intuitive meaning of the law

The second law is about thermodynamic systems or bodies of matter and radiation, initially each in its own state of internal thermodynamic equilibrium, and separated from one another by walls that partly or wholly allow or prevent the passage of matter and energy between them.[2][3][4][5][6][7]

The law envisages that the walls are changed by some external agency, making them less restrictive or constraining and more permeable in various ways.[8][9][10][11] Thereby a process is defined, establishing new equilibrium states.

The process invariably spreads,[12][13][14][15] disperses, and dissipates[1][16] matter or energy, or both, amongst the bodies. Some energy, inside or outside the system, is degraded in its ability to do work.[17] This is quantitatively described by increase of entropy. It is the consequence of decrease of constraint by a wall. An increase of constraint by a wall has no effect on an established thermodynamic equilibrium.

For an example of the spreading of matter, one may consider a gas initially confined by an impermeable wall to one of two compartments of an isolated system. The wall is then removed. The gas spreads throughout both compartments.[11] The sum of the entropies of the two compartments increases. Reinsertion of the impermeable wall does not change the spread of the gas between the compartments. For an example of the spreading of energy, one may consider a wall impermeable to matter and energy initially separating two otherwise isolated bodies at different temperatures. A thermodynamic operation makes the wall become permeable only to heat, which then passes from the hotter to the colder body, until their temperatures become equal. The sum of the entropies of the two bodies increases. Restoration of the complete impermeability of the wall does not change the equality of the temperatures. The spreading is a change from heterogeneity towards homogeneity.
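The heat-spreading example can be made quantitative with a minimal sketch. It assumes, beyond what the text states, that the two bodies are identical with a constant heat capacity, so that the final temperature is the arithmetic mean; the function name is hypothetical.

```python
import math

def equilibration_entropy_change(t_hot, t_cold, heat_capacity=1.0):
    """Total entropy change (J/K) when two identical bodies equalize temperature.

    Assumptions for illustration: both bodies have the same constant heat
    capacity (J/K) and together form an isolated system, so the final
    temperature is the arithmetic mean of the initial temperatures (in K).
    """
    t_final = 0.5 * (t_hot + t_cold)
    # Entropy change of each body: integral of C dT / T along a reversible path.
    ds_hot = heat_capacity * math.log(t_final / t_hot)    # negative: body cools
    ds_cold = heat_capacity * math.log(t_final / t_cold)  # positive: body warms
    return ds_hot + ds_cold

# The sum of the two entropies increases, although one of them decreases.
print(equilibration_entropy_change(400.0, 300.0))
```

The gain of the colder body always outweighs the loss of the hotter one, and the sum vanishes only when the two initial temperatures are already equal.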

It is the unconstraining of the initial equilibrium that causes the increase of entropy and the change towards homogeneity.[9] The following reasoning offers intuitive understanding of this fact. One may imagine that the freshly unconstrained system, still relatively heterogeneous, immediately after the intervention that increased the wall permeability, in its transient condition, arose by spontaneous evolution from an unconstrained previous transient condition of the system. One can then ask, what is the probable such imagined previous condition. The answer is that, overwhelmingly probably, it is just the very same kind of homogeneous condition as that to which the relatively heterogeneous condition will overwhelmingly probably evolve. Obviously, this is possible only in the imagined absence of the constraint that was actually present until its removal. In this light, the reversibility of the dynamics of the evolution of the unconstrained system is evident, in accord with the ordinary laws of microscopic dynamics. It is the removal of the constraint that is effective in causing the change towards homogeneity, not some imagined or apparent "irreversibility" of the laws of spontaneous evolution.[18] This reasoning is of intuitive interest, but is essentially about microstates, and therefore does not belong to macroscopic equilibrium thermodynamics, which studiously ignores consideration of microstates, and non-equilibrium considerations of this kind. It does, however, forestall futile puzzling about some famous proposed "paradoxes", imagining of a "derivation" of an "arrow of time" from the second law,[19] and meaningless speculation about an imagined "low entropy state" of the early universe.[20]

Though it is more or less intuitive to imagine 'spreading', such loose intuition is, for many thermodynamic processes, too vague or imprecise to be usefully quantitatively informative, because competing possibilities of spreading can coexist, for example due to an increase of some constraint combined with decrease of another. The second law justifies the concept of entropy, which makes the notion of 'spreading' suitably precise, allowing quantitative predictions of just how spreading will occur in particular circumstances. It is characteristic of the physical quantity entropy that it refers to states of thermodynamic equilibrium.[21][22][23]

General significance of the law

The first law of thermodynamics provides the basic definition of thermodynamic energy, also called internal energy, associated with all thermodynamic systems, but unknown in classical mechanics, and states the rule of conservation of energy in nature.[24][25]

The concept of energy in the first law does not, however, account for the observation that natural processes have a preferred direction of progress. The first law is symmetrical with respect to the initial and final states of an evolving system. But the second law asserts that a natural process runs only in one sense, and is not reversible. For example, heat always flows spontaneously from hotter to colder bodies, and never the reverse, unless external work is performed on the system. The key concept for the explanation of this phenomenon through the second law of thermodynamics is the definition of a new physical quantity, the entropy.[26][27]

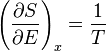

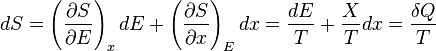

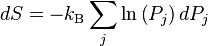

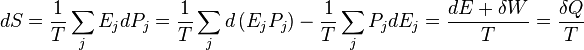

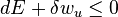

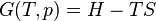

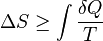

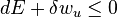

For mathematical analysis of processes, entropy is introduced as follows. In a fictive reversible process, an infinitesimal increment in the entropy (dS) of a closed system results from an infinitesimal transfer of heat (δQ) to the system, divided by the common temperature (T) of the system and the surroundings which supply the heat:[28]

$$\mathrm{d}S = \frac{\delta Q}{T}$$

For an actually possible infinitesimal process without exchange of matter with the surroundings, the second law requires that the increment in system entropy be greater than that:

$$\mathrm{d}S > \frac{\delta Q}{T_{surr}}$$

This is because a general process for this case may include work being done on the system by its surroundings, which must have frictional or viscous effects inside the system, and because heat transfer actually occurs only irreversibly, driven by a finite temperature difference.[29][30]

The zeroth law of thermodynamics in its usual short statement allows recognition that two bodies in a relation of thermal equilibrium have the same temperature, especially that a test body has the same temperature as a reference thermometric body.[31] For a body in thermal equilibrium with another, there are indefinitely many empirical temperature scales, in general respectively depending on the properties of a particular reference thermometric body. The second law allows a distinguished temperature scale, which defines an absolute, thermodynamic temperature, independent of the properties of any particular reference thermometric body.[32][33]

Various statements of the law

The second law of thermodynamics may be expressed in many specific ways,[34] the most prominent classical statements[35] being the statement by Rudolf Clausius (1854), the statement by Lord Kelvin (1851), and the statement in axiomatic thermodynamics by Constantin Carathéodory (1909). These statements cast the law in general physical terms citing the impossibility of certain processes. The Clausius and the Kelvin statements have been shown to be equivalent.[36]

Carnot's principle

The historical origin of the second law of thermodynamics was in Carnot's principle. It refers to a cycle of a Carnot engine, fictively operated in the limiting mode of extreme slowness known as quasi-static, so that the heat and work transfers are between subsystems that are always in their own internal states of thermodynamic equilibrium. The Carnot engine is an idealized device of special interest to engineers who are concerned with the efficiency of heat engines. Carnot's principle was recognized by Carnot at a time when the caloric theory of heat was seriously considered, before the recognition of the first law of thermodynamics, and before the mathematical expression of the concept of entropy. Interpreted in the light of the first law, it is physically equivalent to the second law of thermodynamics, and remains valid today. It states

The efficiency of a quasi-static or reversible Carnot cycle depends only on the temperatures of the two heat reservoirs, and is the same, whatever the working substance. A Carnot engine operated in this way is the most efficient possible heat engine using those two temperatures.[37][38][39][40][41][42][43]

Clausius statement

The German scientist Rudolf Clausius laid the foundation for the second law of thermodynamics in 1850 by examining the relation between heat transfer and work.[44] His formulation of the second law, which was published in German in 1854, is known as the Clausius statement:

Heat can never pass from a colder to a warmer body without some other change, connected therewith, occurring at the same time.[45]

The statement by Clausius uses the concept of 'passage of heat'. As is usual in thermodynamic discussions, this means 'net transfer of energy as heat', and does not refer to contributory transfers one way and the other.

Heat cannot spontaneously flow from cold regions to hot regions without external work being performed on the system, which is evident from ordinary experience of refrigeration, for example. In a refrigerator, heat flows from cold to hot, but only when forced by an external agent, the refrigeration system.

Kelvin statement

Lord Kelvin expressed the second law as

It is impossible, by means of inanimate material agency, to derive mechanical effect from any portion of matter by cooling it below the temperature of the coldest of the surrounding objects.[46]

Equivalence of the Clausius and the Kelvin statements

Suppose there is an engine violating the Kelvin statement: i.e., one that drains heat and converts it completely into work in a cyclic fashion without any other result. Now pair it with a reversed Carnot engine as shown by the figure. The net and sole effect of this newly created engine consisting of the two engines mentioned is transferring heat $\Delta Q = Q\left(\frac{1}{\eta} - 1\right)$ from the cooler reservoir to the hotter one, where $Q$ is the heat that the first engine drains from the hotter reservoir and $\eta$ is the efficiency of the Carnot engine. This violates the Clausius statement. Thus a violation of the Kelvin statement implies a violation of the Clausius statement, i.e. the Clausius statement implies the Kelvin statement. We can prove in a similar manner that the Kelvin statement implies the Clausius statement, and hence the two are equivalent.
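The bookkeeping behind this argument can be checked with a short sketch. It assumes that the hypothetical Kelvin-violating engine drains its heat from the hotter reservoir; the function and key names are illustrative only.

```python
def composite_engine_heat_flows(q_drained, eta_carnot):
    """Per-cycle heat bookkeeping for the composite device.

    Assumption for illustration: the hypothetical Kelvin-violating engine
    drains q_drained from the hotter reservoir and turns all of it into work,
    which then drives a reversed Carnot engine of efficiency eta_carnot.
    """
    if not 0.0 < eta_carnot < 1.0:
        raise ValueError("Carnot efficiency must lie strictly between 0 and 1")
    work = q_drained                 # all drained heat becomes work
    q_to_hot = work / eta_carnot     # reversed Carnot engine delivers W / eta to the hot side
    q_from_cold = q_to_hot - work    # first law applied to the reversed engine
    return {
        "net_work_output": work - work,             # the work is consumed internally
        "heat_taken_from_cold": q_from_cold,
        "net_heat_into_hot": q_to_hot - q_drained,
    }

flows = composite_engine_heat_flows(q_drained=100.0, eta_carnot=0.25)
print(flows)
```

No net work crosses the boundary of the composite device, and the heat taken from the cooler reservoir equals the net heat delivered to the hotter one, which is exactly the process the Clausius statement forbids.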

Planck's proposition

Planck offered the following proposition as derived directly from experience. This is sometimes regarded as his statement of the second law, but he regarded it as a starting point for the derivation of the second law.

Relation between Kelvin's statement and Planck's proposition

It is almost customary in textbooks to speak of the "Kelvin-Planck statement" of the law, as for example in the text by ter Haar and Wergeland.[49] One text gives a statement very like Planck's proposition, but attributes it to Kelvin without mention of Planck.[50] One monograph quotes Planck's proposition as the "Kelvin-Planck" formulation, the text naming Kelvin as its author, though it correctly cites Planck in its references.[51] The reader may compare the two statements quoted just above here.

Planck's statement

Planck stated the second law as follows.

Rather like Planck's statement is that of Uhlenbeck and Ford for irreversible phenomena.

- ... in an irreversible or spontaneous change from one equilibrium state to another (as for example the equalization of temperature of two bodies A and B, when brought in contact) the entropy always increases.[55]

Principle of Carathéodory

Constantin Carathéodory formulated thermodynamics on a purely mathematical axiomatic foundation. His statement of the second law is known as the Principle of Carathéodory, which may be formulated as follows:[56]

In every neighborhood of any state S of an adiabatically enclosed system there are states inaccessible from S.[57]

With this formulation, he described the concept of adiabatic accessibility for the first time and provided the foundation for a new subfield of classical thermodynamics, often called geometrical thermodynamics. It follows from Carathéodory's principle that quantity of energy quasi-statically transferred as heat is a holonomic process function, in other words, $\delta Q = T\,\mathrm{d}S$.[58]

Though it is almost customary in textbooks to say that Carathéodory's principle expresses the second law and to treat it as equivalent to the Clausius or to the Kelvin-Planck statements, such is not the case. To get all the content of the second law, Carathéodory's principle needs to be supplemented by Planck's principle, that isochoric work always increases the internal energy of a closed system that was initially in its own internal thermodynamic equilibrium.[59][60][61][62]

Planck's Principle

In 1926, Max Planck wrote an important paper on the basics of thermodynamics.[61][63] He indicated the principle

This formulation does not mention heat and does not mention temperature, nor even entropy, and does not necessarily implicitly rely on those concepts, but it implies the content of the second law. A closely related statement is that "Frictional pressure never does positive work."[64] Using a now-obsolete form of words, Planck himself wrote: "The production of heat by friction is irreversible."[65][66]

Not mentioning entropy, this principle of Planck is stated in physical terms. It is very closely related to the Kelvin statement given just above.[67] It is relevant that for a system at constant volume and mole numbers, the entropy is a monotonic function of the internal energy. Nevertheless, this principle of Planck is not actually Planck's preferred statement of the second law, which is quoted in an earlier sub-section of this section and relies on the concept of entropy.

A statement that in a sense is complementary to Planck's principle is made by Borgnakke and Sonntag. They do not offer it as a full statement of the second law:

- ... there is only one way in which the entropy of a [closed] system can be decreased, and that is to transfer heat from the system.[68]

Differing from Planck's just foregoing principle, this one is explicitly in terms of entropy change. Of course, removal of matter from a system can also decrease its entropy.

Statement for a system that has a known expression of its internal energy as a function of its extensive state variables

The second law has been shown to be equivalent to the internal energy U being a weakly convex function, when written as a function of extensive properties (mass, volume, entropy, ...).[69][70]

Corollaries

Perpetual motion of the second kind

Before the establishment of the Second Law, many people who were interested in inventing a perpetual motion machine had tried to circumvent the restrictions of the First Law of Thermodynamics by extracting the massive internal energy of the environment as the power of the machine. Such a machine is called a "perpetual motion machine of the second kind". The Second Law declares the impossibility of such machines.

Carnot theorem

Carnot's theorem (1824) is a principle that limits the maximum efficiency for any possible engine. The maximum efficiency depends solely on the temperatures of the hot and cold thermal reservoirs. Carnot's theorem states:

- All irreversible heat engines between two heat reservoirs are less efficient than a Carnot engine operating between the same reservoirs.

- All reversible heat engines between two heat reservoirs are exactly as efficient as a Carnot engine operating between the same reservoirs.
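As a minimal sketch of the bound in the theorem, the following uses the standard expression for the efficiency of a reversible engine, 1 − T_C/T_H, with temperatures on the thermodynamic (kelvin) scale. The function name is hypothetical.

```python
def carnot_efficiency(t_hot, t_cold):
    """Upper bound on heat-engine efficiency between two reservoirs (kelvin)."""
    if not (t_hot > t_cold > 0.0):
        raise ValueError("require t_hot > t_cold > 0 (thermodynamic temperatures)")
    return 1.0 - t_cold / t_hot

# The same 100 K temperature difference gives different bounds,
# because the bound is set by the ratio of the two temperatures.
print(carnot_efficiency(400.0, 300.0))
print(carnot_efficiency(1000.0, 900.0))
```

Any irreversible engine operating between the same two reservoirs must fall below the value returned here.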

In his ideal model, the heat of caloric converted into work could be reinstated by reversing the motion of the cycle, a concept subsequently known as thermodynamic reversibility. Carnot, however, further postulated that some caloric is lost, not being converted to mechanical work. Hence, no real heat engine could realise the Carnot cycle's reversibility and was condemned to be less efficient.

Though formulated in terms of caloric (see the obsolete caloric theory), rather than entropy, this was an early insight into the second law.

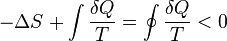

Clausius Inequality

The Clausius Theorem (1854) states that in a cyclic process

$$\oint \frac{\delta Q}{T} \leq 0.$$

The equality holds in the reversible case[71] and the '<' is in the irreversible case. The reversible case is used to introduce the state function entropy, because the change of any state function over a complete cycle is zero.
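For an engine that exchanges heat with only two reservoirs, the cyclic integral reduces to a sum of two terms. The sketch below evaluates it under that assumption, taking T to be the reservoir temperature at which each heat exchange occurs; the function name and the numbers are illustrative.

```python
def clausius_sum(q_hot_in, t_hot, t_cold, efficiency):
    """Cyclic sum of Q/T for an engine working between two reservoirs.

    q_hot_in is the heat absorbed per cycle at t_hot; the engine converts the
    fraction `efficiency` of it into work and rejects the rest at t_cold.
    """
    work = efficiency * q_hot_in
    q_cold_out = q_hot_in - work
    return q_hot_in / t_hot - q_cold_out / t_cold

carnot = 1.0 - 300.0 / 400.0                           # reversible limit, 0.25
print(clausius_sum(1000.0, 400.0, 300.0, carnot))      # reversible: zero
print(clausius_sum(1000.0, 400.0, 300.0, 0.20))        # irreversible: negative
```

An assumed efficiency above the reversible limit would make the sum positive, which is the case the theorem excludes.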

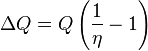

Thermodynamic temperature

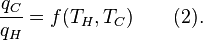

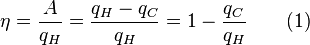

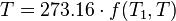

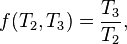

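For an arbitrary heat engine, the efficiency is:

$$\eta = \frac{A}{q_H} = \frac{q_H - q_C}{q_H} = 1 - \frac{q_C}{q_H}$$

where A is the work done per cycle, $q_H$ is the heat absorbed from the hot reservoir and $q_C$ is the heat rejected to the cold reservoir. Thus the efficiency depends only on $q_C/q_H$.

Carnot's theorem states that all reversible engines operating between the same heat reservoirs are equally efficient.

Thus, any reversible heat engine operating between temperatures T1 and T2 must have the same efficiency, that is to say, the efficiency is a function of the temperatures only:

$$\frac{q_2}{q_1} = f(T_1, T_2)$$

where $q_1$ and $q_2$ are the heats exchanged with the reservoirs at T1 and T2 (that is, $q_H$ and $q_C$ above).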

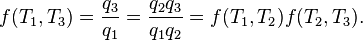

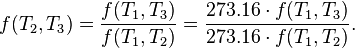

In addition, a reversible heat engine operating between temperatures T1 and T3 must have the same efficiency as one consisting of two cycles, one between T1 and another (intermediate) temperature T2, and the second between T2 and T3. Writing $q_i$ for the heat exchanged at temperature $T_i$, this can only be the case if

$$f(T_1, T_3) = \frac{q_3}{q_1} = \frac{q_2 q_3}{q_1 q_2} = f(T_1, T_2)\, f(T_2, T_3).$$

Now consider the case where $T_1$ is a fixed reference temperature: the temperature of the triple point of water. Then for any T2 and T3,

$$f(T_2, T_3) = \frac{f(T_1, T_3)}{f(T_1, T_2)} = \frac{273.16 \cdot f(T_1, T_3)}{273.16 \cdot f(T_1, T_2)}.$$

Therefore, if thermodynamic temperature is defined by

$$T = 273.16 \cdot f(T_1, T),$$

then the function f, viewed as a function of thermodynamic temperature, is simply

$$f(T_2, T_3) = \frac{T_3}{T_2},$$

and the reference temperature T1 will have the value 273.16. (Of course any reference temperature and any positive numerical value could be used—the choice here corresponds to the Kelvin scale.)

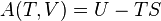

Entropy

According to the Clausius equality, for a reversible process

$$\oint \frac{\delta Q}{T} = 0.$$

That means the line integral $\int_L \frac{\delta Q}{T}$ is path independent.

So we can define a state function S called entropy, which satisfies

$$\mathrm{d}S = \frac{\delta Q}{T}.$$

With this we can only obtain the difference of entropy by integrating the above formula. To obtain the absolute value, we need the Third Law of Thermodynamics, which states that S=0 at absolute zero for perfect crystals.

For any irreversible process, since entropy is a state function, we can always connect the initial and terminal states with an imaginary reversible process and integrate along that path to calculate the difference in entropy.

Now reverse the reversible process and combine it with the said irreversible process. Applying the Clausius inequality to this loop,

$$-\Delta S + \int \frac{\delta Q}{T} = \oint \frac{\delta Q}{T} < 0.$$

Thus,

$$\Delta S \ge \int \frac{\delta Q}{T}$$

where the equality holds if the transformation is reversible.

Notice that if the process is an adiabatic process, then $\delta Q = 0$, so $\Delta S \ge 0$.
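A standard illustration of the adiabatic case is the free expansion of an ideal gas into a vacuum, sketched below. The choice of an ideal gas and the function name are assumptions made for illustration. No heat is exchanged, so the integral of δQ/T along the actual process is zero, while the entropy difference is evaluated along an imagined reversible isothermal path between the same end states.

```python
import math

R = 8.314462618  # molar gas constant, J/(mol K)

def free_expansion_entropy_change(n_moles, v_initial, v_final):
    """Entropy change (J/K) of an ideal gas between two volumes at equal temperature.

    Computed along a reversible isothermal path, where
    Q_rev = n R T ln(V_final / V_initial), so Delta S = Q_rev / T.
    """
    return n_moles * R * math.log(v_final / v_initial)

heat_integral_actual_process = 0.0  # adiabatic free expansion: delta Q = 0 throughout
delta_s = free_expansion_entropy_change(1.0, 1.0, 2.0)
print(delta_s)                                   # about 5.76 J/K for one mole doubling its volume
print(delta_s >= heat_integral_actual_process)   # the inequality for Delta S holds strictly
```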

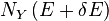

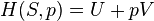

Energy, available useful work

An important and revealing idealized special case is to consider applying the Second Law to the scenario of an isolated system (called the total system or universe), made up of two parts: a sub-system of interest, and the sub-system's surroundings. These surroundings are imagined to be so large that they can be considered as an unlimited heat reservoir at temperature $T_R$ and pressure $P_R$, so that no matter how much heat is transferred to (or from) the sub-system, the temperature of the surroundings will remain $T_R$; and no matter how much the volume of the sub-system expands (or contracts), the pressure of the surroundings will remain $P_R$.

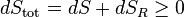

Whatever changes $\mathrm{d}S$ and $\mathrm{d}S_R$ occur in the entropies of the sub-system and the surroundings individually, according to the Second Law the entropy $S_{tot}$ of the isolated total system must not decrease:

$$\mathrm{d}S_{tot} = \mathrm{d}S + \mathrm{d}S_R \ge 0$$

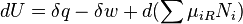

According to the First Law of Thermodynamics, the change $\mathrm{d}U$ in the internal energy of the sub-system is the sum of the heat $\delta q$ added to the sub-system, less any work $\delta w$ done by the sub-system, plus any net chemical energy entering the sub-system $\mathrm{d}\sum \mu_{iR} N_i$, so that:

$$\mathrm{d}U = \delta q - \delta w + \mathrm{d}\left(\sum \mu_{iR} N_i\right)$$

where μiR are the chemical potentials of chemical species in the external surroundings.

Now the heat leaving the reservoir and entering the sub-system is

$$\delta q = T_R(-\mathrm{d}S_R) \le T_R\,\mathrm{d}S$$

where we have first used the definition of entropy in classical thermodynamics (alternatively, in statistical thermodynamics, the relation between entropy change, temperature and absorbed heat can be derived); and then the Second Law inequality from above.

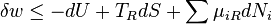

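It therefore follows that any net work $\delta w$ done by the sub-system must obey

$$\delta w \le -\mathrm{d}U + T_R\,\mathrm{d}S + \sum \mu_{iR}\,\mathrm{d}N_i$$

It is useful to separate the work $\delta w$ done by the subsystem into the useful work $\delta w_u$ that can be done by the sub-system, over and beyond the work $P_R\,\mathrm{d}V$ done merely by the sub-system expanding against the surrounding external pressure, giving the following relation for the useful work (exergy) that can be done:

$$\delta w_u \le -\mathrm{d}\left(U - T_R S + P_R V - \sum \mu_{iR} N_i\right)$$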

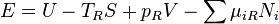

It is convenient to define the right-hand-side as the exact derivative of a thermodynamic potential, called the availability or exergy E of the subsystem,

$$E = U - T_R S + P_R V - \sum \mu_{iR} N_i$$

The Second Law therefore implies that for any process which can be considered as divided simply into a subsystem, and an unlimited temperature and pressure reservoir with which it is in contact,

$$\mathrm{d}E + \delta w_u \le 0$$

i.e. the change in the subsystem's exergy plus the useful work done by the subsystem (or, the change in the subsystem's exergy less any work, additional to that done by the pressure reservoir, done on the system) must be less than or equal to zero.

In sum, if a proper infinite-reservoir-like reference state is chosen as the system surroundings in the real world, then the Second Law predicts a decrease in E for an irreversible process and no change for a reversible process.

That is, the condition

$$\mathrm{d}S_{tot} \ge 0$$

is equivalent to

$$\mathrm{d}E + \delta w_u \le 0$$
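As a minimal worked sketch of the exergy bound, consider a body that cools to the reservoir temperature. The example assumes, purely for illustration, an incompressible body of constant heat capacity with no volume change and no exchange of matter, so that only the U and T_R S terms of the exergy change; the function name is hypothetical.

```python
import math

def exergy_relative_to_reservoir(temperature, t_reservoir, heat_capacity):
    """Exergy (J) of a body at `temperature` relative to a reservoir at t_reservoir.

    Assumptions for illustration: constant heat capacity (J/K), fixed volume,
    no exchange of matter. Only the U - T_R * S part of the exergy then changes.
    """
    delta_u = heat_capacity * (temperature - t_reservoir)
    delta_s = heat_capacity * math.log(temperature / t_reservoir)
    return delta_u - t_reservoir * delta_s

# A 1000 J/K body at 400 K in 300 K surroundings releases 100 kJ of heat on
# cooling, but only a small part of that can be extracted as useful work.
print(exergy_relative_to_reservoir(400.0, 300.0, 1000.0))
```

The returned value is the upper limit on useful work as the body comes to equilibrium with the reservoir; it is zero at the reservoir temperature and positive for a body colder than the reservoir as well.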

This expression together with the associated reference state permits a design engineer working at the macroscopic scale (above the thermodynamic limit) to utilize the Second Law without directly measuring or considering entropy change in a total isolated system. (Also, see process engineer). Those changes have already been considered by the assumption that the system under consideration can reach equilibrium with the reference state without altering the reference state. An efficiency for a process or collection of processes that compares it to the reversible ideal may also be found (See second law efficiency.)

This approach to the Second Law is widely utilized in engineering practice, environmental accounting, systems ecology, and other disciplines.

History

The first theory of the conversion of heat into mechanical work is due to Nicolas Léonard Sadi Carnot in 1824. He was the first to realize correctly that the efficiency of this conversion depends on the difference of temperature between an engine and its environment.

Recognizing the significance of James Prescott Joule's work on the conservation of energy, Rudolf Clausius was the first to formulate the second law during 1850, in this form: heat does not flow spontaneously from cold to hot bodies. While common knowledge now, this was contrary to the caloric theory of heat popular at the time, which considered heat as a fluid. From there he was able to infer the principle of Sadi Carnot and the definition of entropy (1865).

Established during the 19th century, the Kelvin-Planck statement of the Second Law says, "It is impossible for any device that operates on a cycle to receive heat from a single reservoir and produce a net amount of work." This was shown to be equivalent to the statement of Clausius.

The ergodic hypothesis is also important for the Boltzmann approach. It says that, over long periods of time, the time spent in some region of the phase space of microstates with the same energy is proportional to the volume of this region, i.e. that all accessible microstates are equally probable over a long period of time. Equivalently, it says that time average and average over the statistical ensemble are the same.

There is a traditional doctrine, starting with Clausius, that entropy can be understood in terms of molecular 'disorder' within a macroscopic system. This doctrine is obsolescent.[72][73][74]

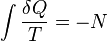

Account given by Clausius

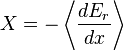

In 1856, the German physicist Rudolf Clausius stated what he called the "second fundamental theorem in the mechanical theory of heat" in the following form:[75]

$$\int \frac{\delta Q}{T} = -N$$

where Q is heat, T is temperature and N is the "equivalence-value" of all uncompensated transformations involved in a cyclical process. Later, in 1865, Clausius would come to define "equivalence-value" as entropy. On the heels of this definition, that same year, the most famous version of the second law was read in a presentation at the Philosophical Society of Zurich on April 24, at the end of which Clausius concludes:

The entropy of the universe tends to a maximum.

This statement is the best-known phrasing of the second law. Because of the looseness of its language, e.g. universe, as well as lack of specific conditions, e.g. open, closed, or isolated, many people take this simple statement to mean that the second law of thermodynamics applies virtually to every subject imaginable. This, of course, is not true; this statement is only a simplified version of a more extended and precise description.

In terms of time variation, the mathematical statement of the second law for an isolated system undergoing an arbitrary transformation is:

$$\frac{\mathrm{d}S}{\mathrm{d}t} \ge 0$$

where

- S is the entropy of the system and

- t is time.

The equality sign applies after equilibration. An alternative way of formulating the second law for isolated systems is:

$$\frac{\mathrm{d}S}{\mathrm{d}t} = \dot{S}_i \quad \text{with} \quad \dot{S}_i \ge 0$$

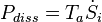

Here $\dot{S}_i$ is the sum of the rates of entropy production by all processes inside the system. The advantage of this formulation is that it shows the effect of the entropy production. The rate of entropy production is a very important concept since it determines (limits) the efficiency of thermal machines. Multiplied by the ambient temperature $T_a$ it gives the so-called dissipated energy $P_{diss} = T_a \dot{S}_i$.

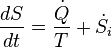

The expression of the second law for closed systems (so, allowing heat exchange and moving boundaries, but not exchange of matter) is:

$$\frac{\mathrm{d}S}{\mathrm{d}t} = \frac{\dot{Q}}{T} + \dot{S}_i \quad \text{with} \quad \dot{S}_i \ge 0$$

Here

- $\dot{Q}$ is the heat flow into the system

- $T$ is the temperature at the point where the heat enters the system.

The equality sign holds in the case that only reversible processes take place inside the system. If irreversible processes take place (which is the case in real systems in operation) the >-sign holds. If heat is supplied to the system at several places we have to take the algebraic sum of the corresponding terms.
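The closed-system balance can be illustrated with steady heat conduction through a body whose ends are held at two different temperatures. This is a minimal sketch with hypothetical function names; it assumes a steady state, so the entropy of the conducting body itself does not change, and the heat-flow terms at the two ends are summed algebraically.

```python
def entropy_production_rate(heat_flow, t_hot, t_cold):
    """Steady-state entropy production (W/K) for heat conducted from t_hot to t_cold.

    In a steady state dS/dt = 0, so the balance reads
    0 = heat_flow / t_hot - heat_flow / t_cold + S_i,
    where heat enters at t_hot and leaves at t_cold.
    """
    if not (t_hot >= t_cold > 0.0):
        raise ValueError("require t_hot >= t_cold > 0 (thermodynamic temperatures)")
    return heat_flow * (1.0 / t_cold - 1.0 / t_hot)

def dissipated_power(heat_flow, t_hot, t_cold, t_ambient):
    """Entropy production rate multiplied by the ambient temperature (W)."""
    return t_ambient * entropy_production_rate(heat_flow, t_hot, t_cold)

print(entropy_production_rate(100.0, 400.0, 300.0))   # W/K, non-negative
print(dissipated_power(100.0, 400.0, 300.0, 300.0))   # W
```

With the ambient temperature equal to the cold-end temperature, the dissipated power matches the work a reversible engine could have delivered from the same heat flow (25 W out of 100 W here).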

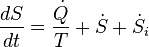

For open systems (also allowing exchange of matter):

$$\frac{\mathrm{d}S}{\mathrm{d}t} = \frac{\dot{Q}}{T} + \dot{S} + \dot{S}_i \quad \text{with} \quad \dot{S}_i \ge 0$$

Here $\dot{S}$ is the flow of entropy into the system associated with the flow of matter entering the system. It should not be confused with the time derivative of the entropy. If matter is supplied at several places we have to take the algebraic sum of these contributions.

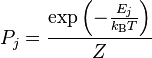

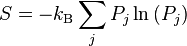

Statistical mechanics

Statistical mechanics gives an explanation for the second law by postulating that a material is composed of atoms and molecules which are in constant motion. A particular set of positions and velocities for each particle in the system is called a microstate of the system and because of the constant motion, the system is constantly changing its microstate. Statistical mechanics postulates that, in equilibrium, each microstate that the system might be in is equally likely to occur, and when this assumption is made, it leads directly to the conclusion that the second law must hold in a statistical sense. That is, the second law will hold on average, with a statistical variation on the order of 1/√N where N is the number of particles in the system. For everyday (macroscopic) situations, the probability that the second law will be violated is practically zero. However, for systems with a small number of particles, thermodynamic parameters, including the entropy, may show significant statistical deviations from that predicted by the second law. Classical thermodynamic theory does not deal with these statistical variations.
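The 1/√N scaling can be illustrated with a toy model that is not part of the text above: N independent particles, each equally likely to be found in the left or the right half of a container. The function names are hypothetical.

```python
import math

def relative_fluctuation(n_particles):
    """Relative standard deviation of the number of particles in the left half.

    Toy model (an assumption for illustration): each particle is in the left
    half independently with probability 1/2, giving a binomial distribution.
    """
    mean = n_particles / 2.0
    std = math.sqrt(n_particles) / 2.0   # sqrt(N * p * (1 - p)) with p = 1/2
    return std / mean                    # equals 1 / sqrt(N)

def log10_prob_all_in_left_half(n_particles):
    """log10 of the probability that every particle is found in the left half."""
    return -n_particles * math.log10(2.0)

for n in (10, 10**4, 10**22):
    print(n, relative_fluctuation(n), log10_prob_all_in_left_half(n))
```

For a handful of particles the fluctuations are a sizeable fraction of the mean, while for a macroscopic number they are far too small to observe, in line with the statement that violations are practically impossible in everyday situations.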

Derivation from statistical mechanics

Due to Loschmidt's paradox, derivations of the Second Law have to make an assumption regarding the past, namely that the system is uncorrelated at some time in the past; this allows for simple probabilistic treatment. This assumption is usually thought of as a boundary condition, and thus the Second Law is ultimately a consequence of the initial conditions somewhere in the past, probably at the beginning of the universe (the Big Bang), though other scenarios have also been suggested.[76][77][78]

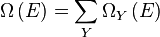

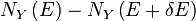

Given these assumptions, in statistical mechanics, the Second Law is not a postulate, rather it is a consequence of the fundamental postulate, also known as the equal prior probability postulate, so long as one is clear that simple probability arguments are applied only to the future, while for the past there are auxiliary sources of information which tell us that it was low entropy. The first part of the second law, which states that the entropy of a thermally isolated system can only increase, is a trivial consequence of the equal prior probability postulate, if we restrict the notion of the entropy to systems in thermal equilibrium. The entropy of an isolated system in thermal equilibrium containing an amount of energy of $E$ is:

$$S = k_B \ln\left[\Omega(E)\right]$$

where $\Omega(E)$ is the number of quantum states in a small interval between $E$ and $E + \delta E$. Here $\delta E$ is a macroscopically small energy interval that is kept fixed. Strictly speaking this means that the entropy depends on the choice of $\delta E$. However, in the thermodynamic limit (i.e. in the limit of infinitely large system size), the specific entropy (entropy per unit volume or per unit mass) does not depend on $\delta E$.

Suppose we have an isolated system whose macroscopic state is specified by a number of variables. These macroscopic variables can, e.g., refer to the total volume, the positions of pistons in the system, etc. Then $\Omega$ will depend on the values of these variables. If a variable is not fixed (e.g. we do not clamp a piston in a certain position), then because all the accessible states are equally likely in equilibrium, the free variable in equilibrium will be such that $\Omega$ is maximized, as that is the most probable situation in equilibrium.

If the variable was initially fixed to some value, then upon release and when the new equilibrium has been reached, the fact that the variable will adjust itself so that $\Omega$ is maximized implies that the entropy will have increased or it will have stayed the same (if the value at which the variable was fixed happened to be the equilibrium value).

Suppose we start from an equilibrium situation and we suddenly remove a constraint on a variable. Then right after we do this, there are a number $\Omega$ of accessible microstates, but equilibrium has not yet been reached, so the actual probabilities of the system being in some accessible state are not yet equal to the prior probability of $1/\Omega$. We have already seen that in the final equilibrium state, the entropy will have increased or have stayed the same relative to the previous equilibrium state. Boltzmann's H-theorem, however, goes further: it proves that the quantity H decreases monotonically as a function of time during the intermediate out-of-equilibrium state, so that the corresponding entropy, which is proportional to −H, increases.
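The constraint-removal argument can be made concrete with a standard textbook model that is not part of the article: two Einstein solids sharing a fixed number of energy quanta. In the sketch below, the oscillator counts, the number of quanta, and the function names are all illustrative assumptions, and entropies are expressed in units of $k_B$.

```python
from math import comb, log

def multiplicity(n_oscillators, quanta):
    """Number of ways to distribute `quanta` among `n_oscillators` (Einstein solid)."""
    return comb(quanta + n_oscillators - 1, quanta)

def entropy_constrained(n_a, n_b, q_a, q_total):
    """ln(Omega) when solid A is clamped at exactly q_a quanta."""
    return log(multiplicity(n_a, q_a) * multiplicity(n_b, q_total - q_a))

def entropy_released(n_a, n_b, q_total):
    """ln(Omega) once energy may flow freely between the two solids."""
    total = sum(
        multiplicity(n_a, q) * multiplicity(n_b, q_total - q)
        for q in range(q_total + 1)
    )
    return log(total)

def most_probable_split(n_a, n_b, q_total):
    """Value of q_a that maximizes the joint multiplicity."""
    return max(
        range(q_total + 1),
        key=lambda q: multiplicity(n_a, q) * multiplicity(n_b, q_total - q),
    )

n_a, n_b, q_total = 300, 200, 100
print("most probable q_a:", most_probable_split(n_a, n_b, q_total))
for q_a in (0, 30, 60, 90):
    gain = entropy_released(n_a, n_b, q_total) - entropy_constrained(n_a, n_b, q_a, q_total)
    print(f"clamped at q_a={q_a:>3}: entropy gain on release = {gain:.3f} k_B")
```

Whatever value the energy split is clamped at, releasing the constraint cannot reduce the number of accessible microstates, so the computed entropy gain is never negative, and it is smallest when the clamp already sits at the most probable split.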

Derivation of the entropy change for reversible processes

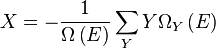

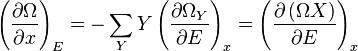

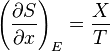

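The second part of the Second Law states that the entropy change of a system undergoing a reversible process is given by:

$$dS = \frac{\delta Q}{T}$$

where the temperature is defined as:

$$\frac{1}{k_B T} \equiv \beta \equiv \frac{d \ln\left[\Omega(E)\right]}{dE}$$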

See here for the justification for this definition. Suppose that the system has some external parameter, x, that can be changed. In general, the energy eigenstates of the system will depend on x. According to the adiabatic theorem of quantum mechanics, in the limit of an infinitely slow change of the system's Hamiltonian, the system will stay in the same energy eigenstate and thus change its energy according to the change in energy of the energy eigenstate it is in.

The generalized force, X, corresponding to the external variable x is defined such that $X\,dx$ is the work performed by the system if x is increased by an amount dx. E.g., if x is the volume, then X is the pressure. The generalized force for a system known to be in energy eigenstate $E_r$ is given by:

$$X = -\frac{dE_r}{dx}$$

Since the system can be in any energy eigenstate within an interval of $\delta E$, we define the generalized force for the system as the expectation value of the above expression:

$$X = -\left\langle \frac{dE_r}{dx} \right\rangle$$

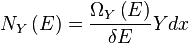

To evaluate the average, we partition the $\Omega(E)$ energy eigenstates by counting how many of them have a value for $\frac{dE_r}{dx}$ within a range between $Y$ and $Y + \delta Y$. Calling this number $\Omega_Y(E)$, we have:

$$\Omega(E) = \sum_Y \Omega_Y(E)$$

The average defining the generalized force can now be written:

$$X = -\frac{1}{\Omega(E)} \sum_Y Y\,\Omega_Y(E)$$

We can relate this to the derivative of the entropy with respect to x at constant energy E as follows. Suppose we change x to x + dx. Then $\Omega(E)$ will change because the energy eigenstates depend on x, causing energy eigenstates to move into or out of the range between $E$ and $E + \delta E$. Let's focus again on the energy eigenstates for which $\frac{dE_r}{dx}$ lies within the range between $Y$ and $Y + \delta Y$. Since these energy eigenstates increase in energy by $Y\,dx$, all such energy eigenstates that are in the interval ranging from $E - Y\,dx$ to $E$ move from below $E$ to above $E$. There are

$$N_Y(E) = \frac{\Omega_Y(E)}{\delta E}\, Y\,dx$$

such energy eigenstates. If $Y\,dx \leq \delta E$, all these energy eigenstates will move into the range between $E$ and $E + \delta E$ and contribute to an increase in $\Omega$. The number of energy eigenstates that move from below $E + \delta E$ to above $E + \delta E$ is, of course, given by $N_Y(E + \delta E)$. The difference

$$N_Y(E) - N_Y(E + \delta E)$$

is thus the net contribution to the increase in $\Omega$. Note that if $Y\,dx$ is larger than $\delta E$ there will be energy eigenstates that move from below $E$ to above $E + \delta E$. They are counted in both $N_Y(E)$ and $N_Y(E + \delta E)$, therefore the above expression is also valid in that case.

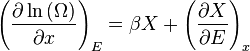

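Expressing the above expression as a derivative with respect to E and summing over Y yields the expression:

$$\left(\frac{\partial \Omega}{\partial x}\right)_E = -\sum_Y Y \left(\frac{\partial \Omega_Y}{\partial E}\right)_x = \left(\frac{\partial (\Omega X)}{\partial E}\right)_x$$

The logarithmic derivative of $\Omega$ with respect to x is thus given by:

$$\left(\frac{\partial \ln \Omega}{\partial x}\right)_E = \beta X + \left(\frac{\partial X}{\partial E}\right)_x$$

The first term is intensive, i.e. it does not scale with system size. In contrast, the last term scales as the inverse system size and will thus vanish in the thermodynamic limit. We have thus found that:

$$\left(\frac{\partial S}{\partial x}\right)_E = \frac{X}{T}$$

Combining this with

$$\left(\frac{\partial S}{\partial E}\right)_x = \frac{1}{T}$$

gives:

$$dS = \left(\frac{\partial S}{\partial E}\right)_x dE + \left(\frac{\partial S}{\partial x}\right)_E dx = \frac{dE}{T} + \frac{X}{T}\,dx = \frac{\delta Q}{T}$$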
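As a worked illustration of the two partial derivatives of the entropy, $(\partial S/\partial E)_x = 1/T$ and $(\partial S/\partial x)_E = X/T$, consider a monatomic ideal gas with $x = V$ and $X = P$. This example is not part of the derivation above; it assumes the standard large-$N$ result that the number of states scales as $\Omega \propto V^{N} E^{3N/2}$.

$$
\begin{aligned}
S &= k_B \ln \Omega = N k_B \ln V + \tfrac{3}{2} N k_B \ln E + \text{const}, \\
\left(\frac{\partial S}{\partial V}\right)_E &= \frac{N k_B}{V} = \frac{P}{T}
\quad\Longrightarrow\quad P V = N k_B T, \\
\left(\frac{\partial S}{\partial E}\right)_V &= \frac{3 N k_B}{2E} = \frac{1}{T}
\quad\Longrightarrow\quad E = \tfrac{3}{2} N k_B T .
\end{aligned}
$$

Under that assumption, the statistical definitions reproduce the ideal gas law and the equipartition energy, which is a useful consistency check on the signs and on the identification of X with the pressure.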

Derivation for systems described by the canonical ensemble

If a system is in thermal contact with a heat bath at some temperature T then, in equilibrium, the probability distribution over the energy eigenvalues is given by the canonical ensemble:

$$P_j = \frac{\exp\left(-\frac{E_j}{k_B T}\right)}{Z}$$

Here Z is a factor that normalizes the sum of all the probabilities to 1; this function is known as the partition function. We now consider an infinitesimal reversible change in the temperature and in the external parameters on which the energy levels depend. It follows from the general formula for the entropy:

$$S = -k_B \sum_j P_j \ln\left(P_j\right)$$

that

$$dS = -k_B \sum_j \ln\left(P_j\right) dP_j$$

Inserting the formula for $P_j$ for the canonical ensemble in here gives:

$$dS = \frac{1}{T} \sum_j E_j\, dP_j = \frac{1}{T} \sum_j d\left(E_j P_j\right) - \frac{1}{T} \sum_j P_j\, dE_j = \frac{dE + \delta W}{T} = \frac{\delta Q}{T}$$
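The canonical-ensemble result can be checked numerically for a small system. The sketch below is an illustration, not part of the article: it assumes a hypothetical four-level system with fixed energy levels (so no work is done and the heat absorbed equals the change in mean energy), works in units where $k_B = 1$, and compares the change in the Gibbs entropy with $\frac{1}{T}\sum_j E_j\,dP_j$ for a small temperature step.

```python
from math import exp, log

K_B = 1.0  # assumption: units in which Boltzmann's constant equals 1

def canonical_probabilities(energies, temperature):
    """Boltzmann weights exp(-E_j / (k_B T)) normalized by the partition function Z."""
    weights = [exp(-e / (K_B * temperature)) for e in energies]
    z = sum(weights)
    return [w / z for w in weights]

def gibbs_entropy(probs):
    """S = -k_B * sum_j P_j ln P_j."""
    return -K_B * sum(p * log(p) for p in probs if p > 0)

def entropy_change_check(energies, temperature, d_temperature=1e-6):
    """Return (dS computed directly, dS computed as heat / T) for a small step in T.

    The energy levels are held fixed, so no work is done and the heat
    absorbed is sum_j E_j dP_j.
    """
    p0 = canonical_probabilities(energies, temperature)
    p1 = canonical_probabilities(energies, temperature + d_temperature)
    ds_direct = gibbs_entropy(p1) - gibbs_entropy(p0)
    heat = sum(e * (b - a) for e, a, b in zip(energies, p0, p1))
    return ds_direct, heat / temperature

levels = [0.0, 1.0, 2.0, 5.0]  # hypothetical energy levels
ds_direct, ds_heat = entropy_change_check(levels, temperature=2.0)
print("dS from Gibbs formula:", ds_direct)
print("dS from heat / T:     ", ds_heat)
```

The two numbers should agree to leading order in the temperature step; the residual difference is second order in the step and shrinks as `d_temperature` is reduced.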

Gravitational systems

In systems that do not require the general theory of relativity for their description, bodies always have positive heat capacity, meaning that the temperature rises with energy. Therefore, when energy flows from a high-temperature object to a low-temperature object, the source temperature decreases while the sink temperature increases; hence temperature differences tend to diminish over time. This is not always the case for systems in which the gravitational force is important and the general theory of relativity is required. Such systems can spontaneously change towards an uneven spread of mass and energy. This applies to the universe on large scales, and consequently it may be difficult or impossible to apply the second law to it.[20] The thermodynamics of systems described by the general theory of relativity is otherwise beyond the scope of the present article.

Non-equilibrium states

The theory of classical or equilibrium thermodynamics is idealized. A main postulate or assumption, often not even explicitly stated, is the existence of systems in their own internal states of thermodynamic equilibrium. In general, a region of space containing a physical system at a given time, that may be found in nature, is not in thermodynamic equilibrium, read in the most stringent terms. In looser terms, nothing in the entire universe is or has ever been truly in exact thermodynamic equilibrium.[20][79]

For purposes of physical analysis, it is often convenient to make an assumption of thermodynamic equilibrium. Such an assumption may rely on trial and error for its justification. If the assumption is justified, it can often be very valuable and useful because it makes available the theory of thermodynamics. Elements of the equilibrium assumption are that a system is observed to be unchanging over an indefinitely long time, and that there are so many particles in a system that its particulate nature can be entirely ignored. Under such an equilibrium assumption, in general, there are no macroscopically detectable fluctuations. There is an exception, the case of critical states, which exhibit to the naked eye the phenomenon of critical opalescence. For laboratory studies of critical states, exceptionally long observation times are needed.

In all cases, the assumption of thermodynamic equilibrium, once made, implies as a consequence that no putative candidate "fluctuation" alters the entropy of the system.

It can easily happen that a physical system exhibits internal macroscopic changes that are fast enough to invalidate the assumption of the constancy of the entropy, or that a physical system has so few particles that its particulate nature is manifest in observable fluctuations. In either case the assumption of thermodynamic equilibrium is to be abandoned. There is no unqualified general definition of entropy for non-equilibrium states.[80]

There are intermediate cases, in which the assumption of local thermodynamic equilibrium is a very good approximation,[81][82][83][84] but strictly speaking it is still an approximation, not theoretically ideal. For non-equilibrium situations in general, it may be useful to consider statistical mechanical definitions of other quantities that may be conveniently called 'entropy', but they should not be confused or conflated with thermodynamic entropy properly defined for the second law. These other quantities indeed belong to statistical mechanics, not to thermodynamics, the primary realm of the second law.

The physics of macroscopically observable fluctuations is beyond the scope of this article.

Arrow of time

The second law of thermodynamics is a physical law that is not symmetric under reversal of the time direction.

The second law has been proposed to supply an explanation of the difference between moving forward and backwards in time, such as why the cause precedes the effect (the causal arrow of time).[85]

Irreversibility

Irreversibility in thermodynamic processes is a consequence of the asymmetric character of thermodynamic operations, and not of any internally irreversible microscopic properties of the bodies. Thermodynamic operations are macroscopic external interventions imposed on the participating bodies, not derived from their internal properties. There are reputed "paradoxes" that arise from failure to recognize this.

Loschmidt's paradox

Loschmidt's paradox, also known as the reversibility paradox, is the objection that it should not be possible to deduce an irreversible process from the time-symmetric dynamics that describe the microscopic evolution of a macroscopic system.

In the opinion of Schrödinger, "It is now quite obvious in what manner you have to reformulate the law of entropy—or for that matter, all other irreversible statements—so that they be capable of being derived from reversible models. You must not speak of one isolated system but at least of two, which you may for the moment consider isolated from the rest of the world, but not always from each other."[86] The two systems are isolated from each other by the wall, until it is removed by the thermodynamic operation, as envisaged by the law. The thermodynamic operation is externally imposed, not subject to the reversible microscopic dynamical laws that govern the constituents of the systems. It is the cause of the irreversibility. The statement of the law in this present article complies with Schrödinger's advice. The cause–effect relation is logically prior to the second law, not derived from it.

Poincaré recurrence theorem

The Poincaré recurrence theorem considers a theoretical microscopic description of an isolated physical system. This may be considered as a model of a thermodynamic system after a thermodynamic operation has removed an internal wall. The system will, after a sufficiently long time, return to a microscopically defined state very close to the initial one. The Poincaré recurrence time is the length of time elapsed until the return. It depends sensitively on the geometry of the wall that was removed by the thermodynamic operation, and for a typical thermodynamic system it is many times longer than the lifetime of the universe, so that for all practical purposes the recurrence cannot be observed. The recurrence theorem may be perceived as apparently contradicting the second law of thermodynamics. More obviously, however, it is simply a microscopic model of thermodynamic equilibrium in an isolated system formed by removal of a wall between two systems. One might wish, nevertheless, to imagine that one could wait for the Poincaré recurrence, and then re-insert the wall that was removed by the thermodynamic operation. It is then evident that the appearance of irreversibility is due to the utter unpredictability of the Poincaré recurrence given only that the initial state was one of thermodynamic equilibrium, as is the case in macroscopic thermodynamics. Even if one could wait for it, one has no practical possibility of picking the right instant at which to re-insert the wall.

Maxwell's demon

James Clerk Maxwell imagined one container divided into two parts, A and B. Both parts are filled with the same gas at equal temperatures and placed next to each other, separated by a wall. Observing the molecules on both sides, an imaginary demon guards a microscopic trapdoor in the wall. When a faster-than-average molecule from A flies towards the trapdoor, the demon opens it, and the molecule will fly from A to B. The average speed of the molecules in B will have increased while in A they will have slowed down on average. Since average molecular speed corresponds to temperature, the temperature decreases in A and increases in B, contrary to the second law of thermodynamics.

One response to this question was suggested in 1929 by Leó Szilárd and later by Léon Brillouin. Szilárd pointed out that a real-life Maxwell's demon would need to have some means of measuring molecular speed, and that the act of acquiring information would require an expenditure of energy.

Maxwell's demon repeatedly alters the permeability of the wall between A and B. It is therefore performing thermodynamic operations on a microscopic scale, not just observing ordinary spontaneous or natural macroscopic thermodynamic processes.
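The sorting step of the thought experiment can be sketched as a toy simulation. This is only an illustration under simplifying assumptions that are not in the article: speeds are one-dimensional and drawn from the same distribution on both sides, mean squared speed stands in for temperature, the demon acts once on every molecule in A, and the energy and entropy cost of the demon's own measurements is ignored entirely. The function names are hypothetical.

```python
import random

def mean_square(speeds):
    """Mean squared speed, used here as a stand-in for temperature."""
    return sum(v * v for v in speeds) / len(speeds)

def run_demon(n_per_side=5000, seed=1):
    """Return ((T_A, T_B) before sorting, (T_A, T_B) after sorting)."""
    rng = random.Random(seed)
    # Both sides start with speeds drawn from the same distribution,
    # i.e. at equal "temperature" in this toy model.
    side_a = [abs(rng.gauss(0.0, 1.0)) for _ in range(n_per_side)]
    side_b = [abs(rng.gauss(0.0, 1.0)) for _ in range(n_per_side)]
    before = (mean_square(side_a), mean_square(side_b))

    # The demon opens the trapdoor only for molecules in A that are
    # faster than the average speed in A.
    average_a = sum(side_a) / len(side_a)
    fast = [v for v in side_a if v > average_a]
    slow = [v for v in side_a if v <= average_a]

    after = (mean_square(slow), mean_square(side_b + fast))
    return before, after

before, after = run_demon()
print("A: mean square speed", before[0], "->", after[0])
print("B: mean square speed", before[1], "->", after[1])
```

In this sketch A cools and B warms without any work appearing in the bookkeeping, which is exactly the apparent conflict with the second law; the simulation does not model the demon itself, which is where the responses discussed in this section locate the missing cost.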

Quotations

The law that entropy always increases holds, I think, the supreme position among the laws of Nature. If someone points out to you that your pet theory of the universe is in disagreement with Maxwell's equations — then so much the worse for Maxwell's equations. If it is found to be contradicted by observation — well, these experimentalists do bungle things sometimes. But if your theory is found to be against the second law of thermodynamics I can give you no hope; there is nothing for it but to collapse in deepest humiliation.— Sir Arthur Stanley Eddington, The Nature of the Physical World (1927)

There have been nearly as many formulations of the second law as there have been discussions of it.— Philosopher / Physicist P.W. Bridgman, (1941)

Clausius is the author of the sibyllic utterance, "The energy of the universe is constant; the entropy of the universe tends to a maximum." The objectives of continuum thermomechanics stop far short of explaining the "universe", but within that theory we may easily derive an explicit statement in some ways reminiscent of Clausius, but referring only to a modest object: an isolated body of finite size.— Truesdell, C., Muncaster, R.G. (1980). Fundamentals of Maxwell's Kinetic Theory of a Simple Monatomic Gas, Treated as a Branch of Rational Mechanics, Academic Press, New York, ISBN 0-12-701350-4, p.17.

References

- 1 2 W. Thomson (1852).

- ↑ Adkins, C.J. (1968/1983), p. 4: "contain the system within walls of some special kind that allow or prevent various sorts of interaction between the system and its surroundings"; p. 5: "a section of wall through which one or more of the components may pass while others are contained; such a wall is called semipermeable"; p. 49: "Take the walls to be thermally insulating"; p. 144: "If isotropic radiation is trapped in a vessel with perfectly reflecting walls ..."

- ↑ Callen, H.B. (1960/1985), p. 16: "In general, a wall that constrains an extensive parameter of a system is said to be restrictive with respect to that parameter."

- ↑ Baierlein, R. (1999), p. 22: "no energy passes through any walls"; p. 118: "cubical cavity with perfectly reflecting metal walls".

- ↑ Pippard, A.B. (1957/1966), p. 106: "In this example we have considered the constraint to take the form of a mechanical barrier"; p. 108: "We shall consider the equilibrium between a liquid and its vapour, under two different constraints: first, with the vessel immersed in a constant-temperature bath, and open to a constant external pressure; and secondly, with the vessel closed and thermally isolated."

- ↑ Buchdahl, H.A. (1966), p. 72: "It should be noted that there need be no restriction on heat exchange within the system."

- ↑ Blundell, S.J., Blundell, K.M. (2006), p. 16: "we are applying a constraint to the system, either constraining the volume of the gas to be fixed, or constraining the pressure of the gas to be fixed"; p. 108: "The first constraint is that of keeping the volume constant."

- ↑ Guggenheim, E.A. (1949), p.454: "It is usually when a system is tampered with that changes take place."

- 1 2 Pippard, A.B. (1957/1966), p. 96: "In the first two examples the changes treated involved the transition from one equilibrium state to another, and were effected by altering the constraints imposed upon the systems, in the first by removal of an adiabatic wall and in the second by altering the volume to which the gas was confined"; "It is not possible to vary the constraints of an isolated system in such a way as to decrease the entropy"; p. 97: "It will be seen then that our second statement of the entropy law has much to recommend it in that it concentrates upon the essential feature of a thermodynamic change, the variation of the constraints to which a system is subjected"; "In the same way when two bodies at different temperatures are placed in thermal contact by removal of an adiabatic wall, it is the act of removing the wall and not the subsequent flow of heat which increases the entropy".

- ↑ Kittel, C., Kroemer, H. (1969/1980), p. 46: "the entropy of a closed system tends to remain constant or to increase when a constraint internal to the system is removed."

- 1 2 Uffink, J. (2003), p. 133: "A process is then conceived of as being triggered by the cancellation of one or more of these constraints (e.g. mixing or expansion of gases after the removal of a partition, loosening a previously fixed piston, etc.)."

- ↑ Bridgman, P.W. (1943), p. 153: "The entropy increase arising from this process, that is, exchange of radiation between bodies at different temperatures, is not to be sought in the initial act of absorption, which may be non-entropy-increasing, but is to be found after the initial absorption in the spreading out of the spectrum of the absorbed energy from the distribution characteristic of the higher temperature of its source to the distribution characteristic of the lower temperature of the sink."

- ↑ Guggenheim, E.A. (1949).

- ↑ Denbigh, K. (1954/1981), p. 75.

- ↑ Atkins, P.W., de Paula, J. (2006), p. 78: "The opposite change, the spreading of the object’s energy into the surroundings as thermal motion, is natural. It may seem very puzzling that the spreading out of energy and matter, the collapse into disorder, can lead to the formation of such ordered structures as crystals or proteins. Nevertheless, in due course, we shall see that dispersal of energy and matter accounts for change in all its forms."

- ↑ Callen, H.B. (1960/1985). P. 63: "In order for the change to be quasi-static, this compression must be dissipated throughout the entire volume of gas before the next appreciable compression occurs"; p. 69: "The excess work done in an irreversible process over that done in a reversible process, is called dissipative work"; p. 321: "But in any real case the motion of the piston will cause viscous damping within the systems and the kinetic energy of the piston will eventually be dissipated."

- ↑ Adkins, C.J. (1968/1983), p. 76.

- ↑ Phil Attard (2012). Non-equilibrium Thermodynamics and Statistical Mechanics: Foundations and Applications. Oxford University Press. p. 24. ISBN 019163977X.: "Physically, the result means that for every fluctuation from equilibrium, there is a reverse fluctuation back to equilibrium, where equilibrium means the most likely macrostate."

- ↑ Uffink, J. (2001).

- 1 2 3 Grandy, W.T. (Jr) (2008), p. 151.

- ↑ Tisza, L. (1966), p. 40: "This conforms to the notion that entropy, a state function, is associated with equilibrium states."

- ↑ Callen, H.B. (1960/1985), p. 27: "It must be stressed that we postulate the existence of the entropy only for equilibrium states and that our postulate makes no reference whatsoever to nonequilibrium states."

- ↑ Uffink, J. (2001), p. 306: "A common and preliminary description of the Second Law is that it guarantees that all physical systems in thermal equilibrium can be characterised by a quantity called entropy"; p. 308: "In thermodynamics, entropy is not defined for arbitrary states out of equilibrium."

- ↑ Planck, M. (1897/1903), pp. 40–41.

- ↑ Münster, A. (1970), pp. 8–9, 50–51.

- ↑ Planck, M. (1897/1903), pp. 79–107.

- ↑ Bailyn, M. (1994), Section 71, pp. 113–154.

- ↑ Bailyn, M. (1994), p. 120.

- ↑ Adkins, C.J. (1968/1983), p. 75.

- ↑ Münster, A. (1970), p. 45.

- ↑ J. S. Dugdale (1996). Entropy and its Physical Meaning. Taylor & Francis. p. 13. ISBN 0-7484-0569-0. "This law is the basis of temperature."

- ↑ Zemansky, M.W. (1968), pp. 207–209.

- ↑ Quinn, T.J. (1983), p. 8.

- ↑ "Concept and Statements of the Second Law". web.mit.edu. Retrieved 2010-10-07.

- ↑ Lieb & Yngvason (1999).

- ↑ Rao (2004), p. 213.

- ↑ Carnot, S. (1824/1986).

- ↑ Truesdell, C. (1980), Chapter 5.

- ↑ Adkins, C.J. (1968/1983), pp. 56–58.

- ↑ Münster, A. (1970), p. 11.

- ↑ Kondepudi, D., Prigogine, I. (1998), pp.67–75.

- ↑ Lebon, G., Jou, D., Casas-Vázquez, J. (2008), p. 10.

- ↑ Eu, B.C. (2002), pp. 32–35.

- ↑ Clausius (1850).

- ↑ Clausius (1854), p. 86.

- ↑ Thomson (1851).

- ↑ Planck, M. (1897/1903), p. 86.

- ↑ Roberts, J.K., Miller, A.R. (1928/1960), p. 319.

- ↑ ter Haar, D., Wergeland, H. (1966), p. 17.

- ↑ Pippard, A.B. (1957/1966), p. 30.

- ↑ Čápek, V., Sheehan, D.P. (2005), p. 3

- ↑ Planck, M. (1897/1903), p. 100.

- ↑ Planck, M. (1926), p. 463, translation by Uffink, J. (2003), p. 131.

- ↑ Roberts, J.K., Miller, A.R. (1928/1960), p. 382. This source is partly verbatim from Planck's statement, but does not cite Planck. This source calls the statement the principle of the increase of entropy.

- ↑ Uhlenbeck, G.E., Ford, G.W. (1963), p. 16.

- ↑ Carathéodory, C. (1909).

- ↑ Buchdahl, H.A. (1966), p. 68.

- ↑ Sychev, V. V. (1991). The Differential Equations of Thermodynamics. Taylor & Francis. ISBN 978-1560321217. Retrieved 2012-11-26.

- 1 2 Münster, A. (1970), p. 45.

- 1 2 Lieb & Yngvason (1999), p. 49.

- 1 2 Planck, M. (1926).

- ↑ Buchdahl, H.A. (1966), p. 69.

- ↑ Uffink, J. (2003), pp. 129–132.

- ↑ Truesdell, C., Muncaster, R.G. (1980). Fundamentals of Maxwell's Kinetic Theory of a Simple Monatomic Gas, Treated as a Branch of Rational Mechanics, Academic Press, New York, ISBN 0-12-701350-4, p. 15.

- ↑ Planck, M. (1897/1903), p. 81.

- ↑ Planck, M. (1926), p. 457, Wikipedia editor's translation.

- ↑ Lieb, E.H., Yngvason, J. (2003), p. 149.

- ↑ Borgnakke, C., Sonntag, R.E. (2009), p. 304.

- ↑ van Gool, W.; Bruggink, J.J.C. (Eds) (1985). Energy and time in the economic and physical sciences. North-Holland. pp. 41–56. ISBN 0444877487.

- ↑ Grubbström, Robert W. (2007). "An Attempt to Introduce Dynamics Into Generalised Exergy Considerations". Applied Energy 84: 701–718. doi:10.1016/j.apenergy.2007.01.003.

- ↑ Clausius theorem at Wolfram Research

- ↑ Denbigh, K.G., Denbigh, J.S. (1985). Entropy in Relation to Incomplete Knowledge, Cambridge University Press, Cambridge UK, ISBN 0-521-25677-1, pp. 43–44.

- ↑ Grandy, W.T., Jr (2008). Entropy and the Time Evolution of Macroscopic Systems, Oxford University Press, Oxford, ISBN 978-0-19-954617-6, pp. 55–58.

- ↑ Entropy Sites — A Guide Content selected by Frank L. Lambert

- ↑ Clausius (1867).

- ↑ Hawking, SW (1985). "Arrow of time in cosmology". Phys. Rev. D 32 (10): 2489–2495. Bibcode:1985PhRvD..32.2489H. doi:10.1103/PhysRevD.32.2489. Retrieved 2013-02-15.

- ↑ Greene, Brian (2004). The Fabric of the Cosmos. Alfred A. Knopf. p. 171. ISBN 0-375-41288-3.

- ↑ Lebowitz, Joel L. (September 1993). "Boltzmann's Entropy and Time's Arrow" (PDF). Physics Today 46 (9): 32–38. Bibcode:1993PhT....46i..32L. doi:10.1063/1.881363. Retrieved 2013-02-22.

- ↑ Callen, H.B. (1960/1985), p. 15.

- ↑ Lieb, E.H., Yngvason, J. (2003), p. 190.

- ↑ Gyarmati, I. (1967/1970), pp. 4-14.

- ↑ Glansdorff, P., Prigogine, I. (1971).

- ↑ Müller, I. (1985).

- ↑ Müller, I. (2003).

- ↑ Halliwell, J.J.; et al. (1994). Physical Origins of Time Asymmetry. Cambridge. ISBN 0-521-56837-4. chapter 6

- ↑ Schrödinger, E. (1950), p. 192.

Bibliography of citations

- Adkins, C.J. (1968/1983). Equilibrium Thermodynamics, (1st edition 1968), third edition 1983, Cambridge University Press, Cambridge UK, ISBN 0-521-25445-0.

- Atkins, P.W., de Paula, J. (2006). Atkins' Physical Chemistry, eighth edition, W.H. Freeman, New York, ISBN 978-0-7167-8759-4.

- Attard, P. (2012). Non-equilibrium Thermodynamics and Statistical Mechanics: Foundations and Applications, Oxford University Press, Oxford UK, ISBN 978-0-19-966276-0.

- Baierlein, R. (1999). Thermal Physics, Cambridge University Press, Cambridge UK, ISBN 0-521-59082-5.

- Bailyn, M. (1994). A Survey of Thermodynamics, American Institute of Physics, New York, ISBN 0-88318-797-3.

- Blundell, S.J., Blundell, K.M. (2006). Concepts in Thermal Physics, Oxford University Press, Oxford UK, ISBN 978-0-19-856769-1.

- Boltzmann, L. (1896/1964). Lectures on Gas Theory, translated by S.G. Brush, University of California Press, Berkeley.

- Borgnakke, C., Sonntag, R.E. (2009). Fundamentals of Thermodynamics, seventh edition, Wiley, ISBN 978-0-470-04192-5.

- Buchdahl, H.A. (1966). The Concepts of Classical Thermodynamics, Cambridge University Press, Cambridge UK.

- Bridgman, P.W. (1943). The Nature of Thermodynamics, Harvard University Press, Cambridge MA.

- Callen, H.B. (1960/1985). Thermodynamics and an Introduction to Thermostatistics, (1st edition 1960) 2nd edition 1985, Wiley, New York, ISBN 0-471-86256-8.

- Čápek, V., Sheehan, D.P. (2005). Challenges to the Second Law of Thermodynamics: Theory and Experiment, Springer, Dordrecht, ISBN 1-4020-3015-0.

- Carathéodory, C. (1909). "Untersuchungen über die Grundlagen der Thermodynamik". Mathematische Annalen 67: 355–386. doi:10.1007/bf01450409. Axiom II (p. 363): "In jeder beliebigen Umgebung eines willkürlich vorgeschriebenen Anfangszustandes gibt es Zustände, die durch adiabatische Zustandsänderungen nicht beliebig approximiert werden können." [In every arbitrarily close neighbourhood of a given initial state there exist states that cannot be approached arbitrarily closely by adiabatic changes of state.] A mostly reliable translation is to be found at Kestin, J. (1976). The Second Law of Thermodynamics, Dowden, Hutchinson & Ross, Stroudsburg PA.

- Carnot, S. (1824/1986). Reflections on the motive power of fire, Manchester University Press, Manchester UK, ISBN 0719017416.

- Chapman, S., Cowling, T.G. (1939/1970). The Mathematical Theory of Non-uniform gases. An Account of the Kinetic Theory of Viscosity, Thermal Conduction and Diffusion in Gases, third edition 1970, Cambridge University Press, London.

- Clausius, R. (1850). "Ueber Die Bewegende Kraft Der Wärme Und Die Gesetze, Welche Sich Daraus Für Die Wärmelehre Selbst Ableiten Lassen". Annalen der Physik 79: 368–397, 500–524. Bibcode:1850AnP...155..500C. doi:10.1002/andp.18501550403. Retrieved 26 June 2012. Translated into English: Clausius, R. (July 1851). "On the Moving Force of Heat, and the Laws regarding the Nature of Heat itself which are deducible therefrom". London, Edinburgh and Dublin Philosophical Magazine and Journal of Science. 4th 2 (VIII): 1–21; 102–119. Retrieved 26 June 2012.

- Clausius, R. (1854). "Über eine veränderte Form des zweiten Hauptsatzes der mechanischen Wärmetheorie" (PDF). Annalen der Physik (Poggendoff). xciii: 481–506. Bibcode:1854AnP...169..481C. doi:10.1002/andp.18541691202. Retrieved 24 March 2014. Translated into English: Clausius, R. (July 1856). "On a Modified Form of the Second Fundamental Theorem in the Mechanical Theory of Heat". London, Edinburgh and Dublin Philosophical Magazine and Journal of Science. 4th 2: 86. Retrieved 24 March 2014. Reprinted in: Clausius, R. (1867). The Mechanical Theory of Heat – with its Applications to the Steam Engine and to Physical Properties of Bodies. London: John van Voorst. Retrieved 19 June 2012.

- Denbigh, K. (1954/1981). The Principles of Chemical Equilibrium. With Applications in Chemistry and Chemical Engineering, fourth edition, Cambridge University Press, Cambridge UK, ISBN 0-521-23682-7.

- Eu, B.C. (2002). Generalized Thermodynamics. The Thermodynamics of Irreversible Processes and Generalized Hydrodynamics, Kluwer Academic Publishers, Dordrecht, ISBN 1-4020-0788-4.

- Gibbs, J.W. (1876/1878). On the equilibrium of heterogeneous substances, Trans. Conn. Acad., 3: 108-248, 343-524, reprinted in The Collected Works of J. Willard Gibbs, Ph.D, LL. D., edited by W.R. Longley, R.G. Van Name, Longmans, Green & Co., New York, 1928, volume 1, pp. 55–353.

- Griem, H.R. (2005). Principles of Plasma Spectroscopy (Cambridge Monographs on Plasma Physics), Cambridge University Press, New York ISBN 0-521-61941-6.

- Glansdorff, P., Prigogine, I. (1971). Thermodynamic Theory of Structure, Stability, and Fluctuations, Wiley-Interscience, London, 1971, ISBN 0-471-30280-5.

- Grandy, W.T., Jr (2008). Entropy and the Time Evolution of Macroscopic Systems. Oxford University Press. ISBN 978-0-19-954617-6.

- Greven, A., Keller, G., Warnecke (editors) (2003). Entropy, Princeton University Press, Princeton NJ, ISBN 0-691-11338-6.

- Guggenheim, E.A. (1949). 'Statistical basis of thermodynamics', Research, 2: 450–454.

- Guggenheim, E.A. (1967). Thermodynamics. An Advanced Treatment for Chemists and Physicists, fifth revised edition, North Holland, Amsterdam.

- Gyarmati, I. (1967/1970). Non-equilibrium Thermodynamics. Field Theory and Variational Principles, translated by E. Gyarmati and W.F. Heinz, Springer, New York.

- Kittel, C., Kroemer, H. (1969/1980). Thermal Physics, second edition, Freeman, San Francisco CA, ISBN 0-7167-1088-9.

- Kondepudi, D., Prigogine, I. (1998). Modern Thermodynamics: From Heat Engines to Dissipative Structures, John Wiley & Sons, Chichester, ISBN 0-471-97393-9.

- Lebon, G., Jou, D., Casas-Vázquez, J. (2008). Understanding Non-equilibrium Thermodynamics: Foundations, Applications, Frontiers, Springer-Verlag, Berlin, e-ISBN 978-3-540-74252-4.

- Lieb, E. H.; Yngvason, J. (1999). "The Physics and Mathematics of the Second Law of Thermodynamics" (PDF). Physics Reports 310: 1–96. arXiv:cond-mat/9708200. Bibcode:1999PhR...310....1L. doi:10.1016/S0370-1573(98)00082-9. Retrieved 24 March 2014.

- Lieb, E.H., Yngvason, J. (2003). The Entropy of Classical Thermodynamics, pp. 147–195, Chapter 8 of Entropy, Greven, A., Keller, G., Warnecke (editors) (2003).

- Maxwell, J.C. (1867). "On the dynamical theory of gases". Phil. Trans. Roy. Soc. London 157: 49–88.

- Müller, I. (1985). Thermodynamics, Pitman, London, ISBN 0-273-08577-8.

- Müller, I. (2003). Entropy in Nonequilibrium, pp. 79–109, Chapter 5 of Entropy, Greven, A., Keller, G., Warnecke (editors) (2003).

- Münster, A. (1970). Classical Thermodynamics, translated by E.S. Halberstadt, Wiley–Interscience, London, ISBN 0-471-62430-6.

- Pippard, A.B. (1957/1966). Elements of Classical Thermodynamics for Advanced Students of Physics, original publication 1957, reprint 1966, Cambridge University Press, Cambridge UK.

- Planck, M. (1897/1903). Treatise on Thermodynamics, translated by A. Ogg, Longmans Green, London, p. 100.

- Planck, M. (1914). The Theory of Heat Radiation, a translation by Masius, M. of the second German edition, P. Blakiston's Son & Co., Philadelphia.

- Planck, M. (1926). Über die Begründung des zweiten Hauptsatzes der Thermodynamik, Sitzungsberichte der Preussischen Akademie der Wissenschaften: Physikalisch-mathematische Klasse: 453–463.

- Quinn, T.J. (1983). Temperature, Academic Press, London, ISBN 0-12-569680-9.

- Rao, Y.V.C. (2004). An Introduction to Thermodynamics. Universities Press. p. 213. ISBN 978-81-7371-461-0.

- Roberts, J.K., Miller, A.R. (1928/1960). Heat and Thermodynamics, (first edition 1928), fifth edition, Blackie & Son Limited, Glasgow.

- Schrödinger, E. (1950). Irreversibility, Proc. Roy. Irish Acad., A53: 189–195.

- ter Haar, D., Wergeland, H. (1966). Elements of Thermodynamics, Addison-Wesley Publishing, Reading MA.

- Thomson, W. (1851). "On the Dynamical Theory of Heat, with numerical results deduced from Mr Joule's equivalent of a Thermal Unit, and M. Regnault's Observations on Steam". Transactions of the Royal Society of Edinburgh XX (part II): 261–268; 289–298. Also published in Thomson, W. (December 1852). "On the Dynamical Theory of Heat, with numerical results deduced from Mr Joule's equivalent of a Thermal Unit, and M. Regnault's Observations on Steam". Philos. Mag. 4 IV (22): 13. Retrieved 25 June 2012.

- Thomson, W. (1852). On the universal tendency in nature to the dissipation of mechanical energy, Philosophical Magazine, Ser. 4, p. 304.

- Tisza, L. (1966). Generalized Thermodynamics, M.I.T. Press, Cambridge MA.

- Truesdell, C. (1980). The Tragicomical History of Thermodynamics 1822–1854, Springer, New York, ISBN 0-387-90403-4.

- Uffink, J. (2001). Bluff your way in the second law of thermodynamics, Stud. Hist. Phil. Mod. Phys., 32(3): 305–394.

- Uffink, J. (2003). Irreversibility and the Second Law of Thermodynamics, Chapter 7 of Entropy, Greven, A., Keller, G., Warnecke (editors) (2003), Princeton University Press, Princeton NJ, ISBN 0-691-11338-6.

- Uhlenbeck, G.E., Ford, G.W. (1963). Lectures in Statistical Mechanics, American Mathematical Society, Providence RI.

- Zemansky, M.W. (1968). Heat and Thermodynamics. An Intermediate Textbook, fifth edition, McGraw-Hill Book Company, New York.

Further reading

- Goldstein, Martin, and Inge F., 1993. The Refrigerator and the Universe. Harvard Univ. Press. Chpts. 4–9 contain an introduction to the Second Law, one a bit less technical than this entry. ISBN 978-0-674-75324-2

- Leff, Harvey S., and Rex, Andrew F. (eds.) 2003. Maxwell's Demon 2: Entropy, classical and quantum information, computing. Bristol UK; Philadelphia PA: Institute of Physics. ISBN 978-0-585-49237-7

- Halliwell, J.J. (1994). Physical Origins of Time Asymmetry. Cambridge. ISBN 0-521-56837-4. (Technical.)

- Carnot, Sadi; Thurston, Robert Henry (editor and translator) (1890). Reflections on the Motive Power of Heat and on Machines Fitted to Develop That Power. New York: J. Wiley & Sons. (full text of 1897 ed.)

- Stephen Jay Kline (1999). The Low-Down on Entropy and Interpretive Thermodynamics, La Cañada, CA: DCW Industries. ISBN 1928729010.

- Kostic, M. (2011). "Revisiting The Second Law of Energy Degradation and Entropy Generation: From Sadi Carnot's Ingenious Reasoning to Holistic Generalization". AIP Conf. Proc. 1411: 327–350. Bibcode:2011AIPC.1411..327K. doi:10.1063/1.3665247. ISBN 978-0-7354-0985-9.

External links

- Stanford Encyclopedia of Philosophy: "Philosophy of Statistical Mechanics" – by Lawrence Sklar.

- Second law of thermodynamics in the MIT Course Unified Thermodynamics and Propulsion from Prof. Z. S. Spakovszky

- E.T. Jaynes, 1988, "The evolution of Carnot's principle," in G. J. Erickson and C. R. Smith (eds.) Maximum-Entropy and Bayesian Methods in Science and Engineering, Vol 1: p. 267.

- Caratheodory, C., "Examination of the foundations of thermodynamics," trans. by D. H. Delphenich

![S = k_{\mathrm B} \ln\left[\Omega\left(E\right)\right]\,](../I/m/c9a4155c99dc196648017bba2b85e0c1.png)

![\frac{1}{k_{\mathrm B} T}\equiv\beta\equiv\frac{d\ln\left[\Omega\left(E\right)\right]}{dE}](../I/m/9aa130c8295d811f02f994634ee80ca4.png)