Renewal theory

Renewal theory is the branch of probability theory that generalizes Poisson processes for arbitrary holding times. Applications include calculating the best strategy for replacing worn-out machinery in a factory and comparing the long-term benefits of different insurance policies.

Renewal processes

Introduction

A renewal process is a generalization of the Poisson process. In essence, the Poisson process is a continuous-time Markov process on the positive integers (usually starting at zero) which has independent identically distributed holding times at each integer $i$ (exponentially distributed) before advancing (with probability 1) to the next integer, $i+1$. In the same informal spirit, we may define a renewal process to be the same thing, except that the holding times take on a more general distribution. (Note however that the independence and identical distribution (IID) property of the holding times is retained.)

Formal definition

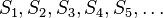

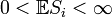

Let $S_1, S_2, S_3, \ldots$ be a sequence of positive independent identically distributed random variables such that

$$0 < \mathbb{E}[S_i] < \infty.$$

We refer to the random variable $S_i$ as the "$i$th" holding time. $\mathbb{E}[S_i]$ is the expectation of $S_i$.

Define for each n > 0:

$$J_n = \sum_{i=1}^{n} S_i,$$

each $J_n$ referred to as the "$n$th" jump time and the intervals $[J_n, J_{n+1}]$ being called renewal intervals.

Then the random variable $(X_t)_{t \geq 0}$ given by

$$X_t = \sum_{n=1}^{\infty} \mathbb{I}_{\{J_n \leq t\}}$$

(where $\mathbb{I}$ is the indicator function) represents the number of jumps that have occurred by time t, and is called a renewal process.
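The definition can be made concrete with a minimal Python sketch. The function names `jump_times` and `renewal_count` and the sample holding times are illustrative assumptions, not part of the definition: jump times are cumulative sums of the holding times, and the renewal count at time t is the number of jump times not exceeding t.

```python
from bisect import bisect_right
from itertools import accumulate


def jump_times(holding_times):
    """Return J_1, J_2, ... as cumulative sums of the holding times."""
    return list(accumulate(holding_times))


def renewal_count(jumps, t):
    """Return X_t, the number of jump times J_n with J_n <= t."""
    # jumps is sorted because holding times are positive
    return bisect_right(jumps, t)


holding = [0.5, 1.5, 1.0, 2.0]   # illustrative values for S_1..S_4
jumps = jump_times(holding)      # [0.5, 2.0, 3.0, 5.0]
counts = [renewal_count(jumps, t) for t in (0.0, 0.5, 2.5, 5.0)]
print(counts)  # [0, 1, 2, 4]
```

A binary search is used because the jump times are increasing, so each query costs logarithmic time once the path has been generated.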

Interpretation

If one considers events occurring at random times, one may choose to think of the holding times $S_i$ as the random time elapsed between two successive events. For example, if the renewal process is modelling the breakdown of different machines, then the holding times represent the time between one machine breaking down and the next one breaking down.

Renewal-reward processes

Let $W_1, W_2, \ldots$ be a sequence of IID random variables (rewards) satisfying

$$\mathbb{E}|W_i| < \infty.$$

Then the random variable

$$Y_t = \sum_{i=1}^{X_t} W_i$$

is called a renewal-reward process. Note that unlike the $S_i$, each $W_i$ may take negative values as well as positive values.

The random variable $Y_t$ depends on two sequences: the holding times $S_1, S_2, \ldots$ and the rewards $W_1, W_2, \ldots$ These two sequences need not be independent. In particular, $W_i$ may be a function of $S_i$.
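As a rough sketch of how a renewal-reward process accumulates, the following Python snippet sums the rewards attached to every jump that has occurred by time t. The function name `reward_process` and the sample values are assumptions chosen for illustration only.

```python
from bisect import bisect_right
from itertools import accumulate


def reward_process(holding_times, rewards, t):
    """Return Y_t = W_1 + ... + W_{X_t} for one realised path."""
    jumps = list(accumulate(holding_times))   # jump times J_1, J_2, ...
    x_t = bisect_right(jumps, t)              # number of jumps by time t
    return sum(rewards[:x_t])


holding = [0.5, 1.5, 1.0, 2.0]       # illustrative holding times
rewards = [-3.0, 10.0, -1.0, 4.0]    # illustrative rewards, may be negative
print(reward_process(holding, rewards, 2.5))  # 7.0
```

Only the first two jumps (at times 0.5 and 2.0) have occurred by time 2.5, so the total is -3.0 + 10.0.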

Interpretation

In the context of the above interpretation of the holding times as the time between successive malfunctions of a machine, the "rewards" $W_1, W_2, \ldots$ (which in this case happen to be negative) may be viewed as the successive repair costs incurred as a result of the successive malfunctions.

An alternative analogy is that we have a magic goose which lays eggs at intervals (holding times) distributed as $S_i$. Sometimes it lays golden eggs of random weight, and sometimes it lays toxic eggs (also of random weight) which require responsible (and costly) disposal. The "rewards" $W_i$ are the successive (random) financial losses/gains resulting from successive eggs (i = 1,2,3,...) and $Y_t$ records the total financial "reward" at time t.

Properties of renewal processes and renewal-reward processes

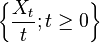

We define the renewal function as the expected value of the number of jumps observed up to some time $t$:

$$m(t) = \mathbb{E}[X_t].$$

The elementary renewal theorem

The renewal function satisfies

$$\lim_{t \to \infty} \frac{1}{t} m(t) = \frac{1}{\mathbb{E}[S_1]}.$$
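As an illustrative check of the statement that $m(t)/t$ tends to $1/\mathbb{E}[S_1]$, consider the special case of a Poisson process. The rate symbol $\lambda$ is introduced here as an assumption. The holding times are then exponential and the number of jumps by time $t$ is Poisson distributed, so the limit holds with equality for every $t > 0$:

$$S_i \sim \mathrm{Exp}(\lambda), \qquad \mathbb{E}[S_1] = \frac{1}{\lambda}, \qquad m(t) = \mathbb{E}[X_t] = \lambda t, \qquad \frac{m(t)}{t} = \lambda = \frac{1}{\mathbb{E}[S_1]}.$$

For general holding time distributions the equality only holds in the limit.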

Proof

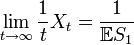

The strong law of large numbers for renewal processes (proved below) tells us that

$$\lim_{t \to \infty} \frac{X_t}{t} = \frac{1}{\mathbb{E}[S_1]}.$$

To prove the elementary renewal theorem, it is sufficient to show that $\left\{ \frac{X_t}{t} ; t \geq 0 \right\}$ is uniformly integrable.

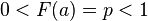

To do this, consider some truncated renewal process where the holding times are defined by $\overline{S_n} = a \mathbb{I}\{S_n > a\}$ where $a$ is a point such that $0 < F(a) = p < 1$, which exists for all non-deterministic renewal processes. This new renewal process $\overline{X_t}$ is an upper bound on $X_t$ and its renewals can only occur on the lattice $\{na ; n \in \mathbb{N}\}$. Furthermore, the number of renewals at each time is geometric with parameter $p$. So we have

$$\begin{aligned}
\overline{X_t} &\leq \sum_{i=1}^{[at]} \mathrm{Geometric}(p) \\
\mathbb{E}\left[\,\overline{X_t}^2\,\right] &\leq C_1 t + C_2 t^2 \\
P\left(\frac{X_t}{t} > x\right) &\leq \frac{E\left[X_t^2\right]}{t^2x^2} \leq \frac{E\left[\overline{X_t}^2\right]}{t^2x^2} \leq \frac{C}{x^2}.
\end{aligned}$$

The elementary renewal theorem for renewal reward processes

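We define the reward function:

$$g(t) = \mathbb{E}[Y_t].$$

The reward function satisfies

$$\lim_{t \to \infty} \frac{1}{t} g(t) = \frac{\mathbb{E}[W_1]}{\mathbb{E}[S_1]}.$$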

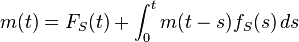

The renewal equation

The renewal function satisfies

$$m(t) = F_S(t) + \int_0^t m(t-s) f_S(s)\, ds$$

where $F_S$ is the cumulative distribution function of $S_1$ and $f_S$ is the corresponding probability density function.

Proof of the renewal equation

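We may iterate the expectation about the first holding time:

$$m(t) = \mathbb{E}[X_t] = \mathbb{E}[\mathbb{E}(X_t \mid S_1)].$$

But by the Markov property

$$\mathbb{E}(X_t \mid S_1=s) = \mathbb{I}_{\{t \geq s\}} \left( 1 + \mathbb{E}[X_{t-s}] \right).$$

So

$$\begin{aligned}
m(t) &= \mathbb{E}[X_t] \\
&= \mathbb{E}[\mathbb{E}(X_t \mid S_1)] \\
&= \int_0^\infty \mathbb{E}(X_t \mid S_1=s) f_S(s)\, ds \\
&= \int_0^\infty \mathbb{I}_{\{t \geq s\}} \left( 1 + \mathbb{E}[X_{t-s}] \right) f_S(s)\, ds \\
&= \int_0^t \left( 1 + m(t-s) \right) f_S(s)\, ds \\
&= F_S(t) + \int_0^t m(t-s) f_S(s)\, ds,
\end{aligned}$$

as required.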
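The renewal equation, $m(t) = F_S(t) + \int_0^t m(t-s) f_S(s)\,ds$, can also be solved numerically. The following Python sketch is one simple way to do so, not a definitive method: it replaces the integral by a sum over a uniform grid, using increments of the distribution function in place of the density. The function name `renewal_function`, the grid size and the choice of exponential holding times (for which $m(t)$ equals the rate times $t$) are all assumptions made for illustration.

```python
import math


def renewal_function(cdf, t_max, steps):
    """Approximate m(t) on a uniform grid by discretising the renewal equation.

    Holding times are effectively rounded up to the grid, so the result
    slightly underestimates m(t); the error shrinks as `steps` grows.
    """
    h = t_max / steps
    F = [cdf(k * h) for k in range(steps + 1)]
    m = [0.0] * (steps + 1)
    for k in range(1, steps + 1):
        # m(t_k) = F(t_k) + sum_j m(t_k - t_j) * (F(t_j) - F(t_{j-1}))
        conv = sum(m[k - j] * (F[j] - F[j - 1]) for j in range(1, k + 1))
        m[k] = F[k] + conv
    return m


rate = 2.0  # assumed exponential holding times, so m(t) = rate * t exactly
m = renewal_function(lambda s: 1.0 - math.exp(-rate * s), t_max=5.0, steps=500)
print(m[-1])  # close to rate * 5 = 10
```

Each grid value depends only on earlier grid values, so the scheme is explicit. Its cost grows quadratically with the number of steps, which is acceptable for a sketch of this size.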

Asymptotic properties

$(X_t)_{t \geq 0}$ and $(Y_t)_{t \geq 0}$ satisfy

$$\lim_{t \to \infty} \frac{1}{t} X_t = \frac{1}{\mathbb{E}[S_1]}$$

(strong law of large numbers for renewal processes)

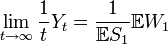

$$\lim_{t \to \infty} \frac{1}{t} Y_t = \frac{\mathbb{E}[W_1]}{\mathbb{E}[S_1]}$$

(strong law of large numbers for renewal-reward processes)

almost surely.
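The two limits, $X_t/t \to 1/\mathbb{E}[S_1]$ and $Y_t/t \to \mathbb{E}[W_1]/\mathbb{E}[S_1]$, can be illustrated by simulating a single long path. The following Python sketch makes several assumptions for the sake of a concrete example: holding times uniform on (0, 2), so that the mean holding time is 1, and rewards equal to the square of the holding time, so that the mean reward is 4/3. The function name `simulate_rates` is likewise hypothetical.

```python
import random


def simulate_rates(t_end, seed=0):
    """Simulate one path up to t_end and return (X_t / t, Y_t / t)."""
    rng = random.Random(seed)
    clock, jumps, total_reward = 0.0, 0, 0.0
    while True:
        s = rng.uniform(0.0, 2.0)   # holding time S_i, E[S_i] = 1
        if clock + s > t_end:
            break                   # next jump would fall after t_end
        clock += s
        jumps += 1
        total_reward += s * s       # reward W_i = S_i**2, E[W_i] = 4/3
    return jumps / t_end, total_reward / t_end


x_rate, y_rate = simulate_rates(20_000.0)
print(x_rate, y_rate)  # expected to be near 1 and 4/3 respectively
```

The reward here is deliberately a function of the holding time, which the definition of a renewal-reward process allows.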

Proof

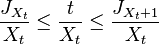

First consider $(X_t)_{t \geq 0}$. By definition we have:

$$J_{X_t} \leq t \leq J_{X_t+1}$$

for all $t \geq 0$, and so

$$\frac{J_{X_t}}{X_t} \leq \frac{t}{X_t} \leq \frac{J_{X_t+1}}{X_t}$$

for all t ≥ 0.

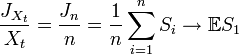

Now since $0 < \mathbb{E}[S_i] < \infty$ we have:

$$X_t \to \infty$$

as $t \to \infty$ almost surely (with probability 1). Hence:

$$\frac{J_{X_t}}{X_t} = \frac{1}{X_t} \sum_{i=1}^{X_t} S_i \to \mathbb{E}[S_1]$$

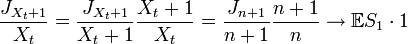

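almost surely (using the strong law of large numbers); similarly:

$$\frac{J_{X_t+1}}{X_t} = \frac{J_{X_t+1}}{X_t+1} \cdot \frac{X_t+1}{X_t} \to \mathbb{E}[S_1] \cdot 1$$

almost surely.

Thus (since $t/X_t$ is sandwiched between the two terms)

$$\frac{1}{t} X_t \to \frac{1}{\mathbb{E}[S_1]}$$

almost surely.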

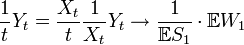

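Next consider $(Y_t)_{t \geq 0}$. We have

$$\frac{1}{t} Y_t = \frac{X_t}{t} \cdot \frac{1}{X_t} Y_t \to \frac{1}{\mathbb{E}[S_1]} \cdot \mathbb{E}[W_1]$$

almost surely (using the first result and using the law of large numbers on $Y_t$).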

The inspection paradox

A curious feature of renewal processes is that if we wait some predetermined time t and then observe how large the renewal interval containing t is, we should expect it to be typically larger than a renewal interval of average size.

Mathematically the inspection paradox states: for any t > 0 the renewal interval containing t is stochastically larger than the first renewal interval. That is, for all x > 0 and for all t > 0:

$$\mathbb{P}(S_{X_t+1} > x) \geq \mathbb{P}(S_1 > x) = 1 - F_S(x)$$

where $F_S$ is the cumulative distribution function of the IID holding times $S_i$.

Proof of the inspection paradox

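Observe that the last jump-time before t is $J_{X_t}$; and that the renewal interval containing t is $S_{X_t+1}$. Then

$$\begin{aligned}
\mathbb{P}(S_{X_t+1}>x) &= \int_0^\infty \mathbb{P}(S_{X_t+1}>x \mid J_{X_t} = s) f_S(s) \, ds \\
&= \int_0^\infty \mathbb{P}(S_{X_t+1}>x \mid S_{X_t+1}>t-s) f_S(s)\, ds \\
&= \int_0^\infty \frac{\mathbb{P}(S_{X_t+1}>x \, , \, S_{X_t+1}>t-s)}{\mathbb{P}(S_{X_t+1}>t-s)} f_S(s) \, ds \\
&= \int_0^\infty \frac{ 1-F(\max \{ x,t-s \}) }{1-F(t-s)} f_S(s) \, ds \\
&= \int_0^\infty \min \left\{\frac{ 1-F(x) }{1-F(t-s)},\frac{ 1-F(t-s) }{1-F(t-s)}\right\} f_S(s) \, ds \\
&= \int_0^\infty \min \left\{\frac{ 1-F(x) }{1-F(t-s)},1\right\} f_S(s) \, ds \\
&\geq 1-F(x) \\
&= \mathbb{P}(S_1>x)
\end{aligned}$$

as required.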
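The inspection paradox is easy to observe in simulation. The following Python sketch assumes exponential holding times with mean 1 and an inspection time of 10; these values and the function name `covering_interval` are illustrative choices only. It repeatedly generates a path, records the length of the renewal interval that contains the inspection time, and averages the results.

```python
import random


def covering_interval(t, rng):
    """Return the length of the renewal interval that contains time t."""
    clock = 0.0
    while True:
        s = rng.expovariate(1.0)    # holding time with mean 1 (assumed)
        if clock + s > t:
            return s                # this interval straddles t
        clock += s


rng = random.Random(1)
samples = [covering_interval(10.0, rng) for _ in range(20_000)]
mean_length = sum(samples) / len(samples)
print(mean_length)  # noticeably larger than the mean holding time of 1
```

For exponential holding times the covering interval is, for large inspection times, roughly twice as long on average as a typical interval, because long intervals are more likely to contain any given time point.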

Superposition

The superposition of independent renewal processes is not generally a renewal process, but it can be described within a larger class of processes called the Markov-renewal processes.[1] However, the cumulative distribution function of the first inter-event time in the superposition of $K$ processes is given by[2]

$$R(t) = 1 - \sum_{k=1}^{K} \frac{\alpha_k}{\sum_{l=1}^{K} \alpha_l} \left(1 - R_k(t)\right) \prod_{j=1, j \neq k}^{K} \alpha_j \int_t^\infty \left(1 - R_j(u)\right) du$$

where $R_k(t)$ and $\alpha_k > 0$ are the CDF of the inter-event times and the arrival rate of process $k$.[3]

Example applications

Example 1: use of the strong law of large numbers

Eric the entrepreneur has n machines, each having an operational lifetime uniformly distributed between zero and two years. Eric may let each machine run until it fails with replacement cost €2600; alternatively he may replace a machine at any time while it is still functional at a cost of €200.

What is his optimal replacement policy?

Solution

We may model the lifetime of the n machines as n independent concurrent renewal-reward processes, so it is sufficient to consider the case n=1. Denote this process by $(Y_t)_{t \geq 0}$. The successive lifetimes S of the replacement machines are independent and identically distributed, so the optimal policy is the same for all replacement machines in the process.

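If Eric decides at the start of a machine's life to replace it at time 0 < t < 2 but the machine happens to fail before that time then the lifetime S of the machine is uniformly distributed on [0, t] and thus has expectation 0.5t. So the overall expected lifetime of the machine is:

$$\begin{aligned}
\mathbb{E}S &= \mathbb{E}[S \mid \text{fails before } t] \cdot \mathbb{P}[\text{fails before } t] + \mathbb{E}[S \mid \text{does not fail before } t] \cdot \mathbb{P}[\text{does not fail before } t] \\
&= \frac{t}{2}\left(0.5t\right) + \frac{2-t}{2}\left( t \right)
\end{aligned}$$

and the expected cost W per machine is:

$$\begin{aligned}
\mathbb{E}W &= \mathbb{P}[\text{fails before } t] \cdot 2600 + \mathbb{P}[\text{does not fail before } t] \cdot 200 \\
&= \frac{t}{2} \cdot 2600 + \frac{2-t}{2} \cdot 200 = 1200t + 200.
\end{aligned}$$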

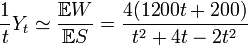

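So by the strong law of large numbers, his long-term average cost per unit time is:

$$\frac{\mathbb{E}W}{\mathbb{E}S} = \frac{1200t + 200}{t - t^2/4} = \frac{4(1200t + 200)}{4t - t^2}.$$

Then differentiating with respect to t:

$$\frac{d}{dt} \frac{4(1200t + 200)}{4t - t^2} = 4\, \frac{(4t - t^2)(1200) - (4 - 2t)(1200t + 200)}{(4t - t^2)^2}.$$

This implies that the turning points satisfy:

$$0 = (4t - t^2)(1200) - (4 - 2t)(1200t + 200) = 1200t^2 + 400t - 800,$$

and thus

$$0 = 3t^2 + t - 2 = (3t - 2)(t + 1).$$

We take the only solution t in [0, 2]: t = 2/3. This is indeed a minimum (and not a maximum) since the cost per unit time tends to infinity as t tends to zero, meaning that the cost is decreasing as t increases, until the point 2/3 where it starts to increase.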
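The optimum can be cross-checked numerically. The following Python sketch evaluates the long-run cost per year, expected cost per machine divided by expected lifetime, for a grid of candidate replacement ages and picks the cheapest. The function name `cost_rate` and the grid resolution are assumptions made for illustration; the costs and the uniform lifetime come from the example.

```python
def cost_rate(t):
    """Long-run cost per year when machines are replaced at age t (0 < t <= 2)."""
    p_fail = t / 2.0                                     # P(lifetime < t), lifetime ~ U(0, 2)
    mean_life = p_fail * (t / 2.0) + (1 - p_fail) * t    # expected lifetime E[S]
    mean_cost = p_fail * 2600.0 + (1 - p_fail) * 200.0   # expected cost E[W]
    return mean_cost / mean_life


# Coarse grid search over candidate replacement ages in (0, 2].
grid = [k / 1000.0 for k in range(1, 2001)]
best_t = min(grid, key=cost_rate)
print(best_t, cost_rate(best_t))
```

Under these assumptions the grid minimum lands next to t = 2/3, where the cost rate is about €1800 per year, compared with €2600 per year if machines are only replaced on failure (t = 2).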

See also

- Campbell's theorem (probability)

- Compound Poisson process

- Continuous-time Markov process

- Little's lemma

- Palm–Khintchine theorem

- Poisson process

- Queueing theory

- Ruin theory

- Semi-Markov process

References

- [1] Çinlar, Erhan (1969). "Markov Renewal Theory". Advances in Applied Probability (Applied Probability Trust) 1 (2): 123–187. JSTOR 1426216.

- [2] Lawrence, A. J. (1973). "Dependency of Intervals Between Events in Superposition Processes". Journal of the Royal Statistical Society. Series B (Methodological) 35 (2): 306–315. JSTOR 2984914. Retrieved Nov 15, 2012. Formula 4.1.

- [3] Choungmo Fofack, Nicaise; Nain, Philippe; Neglia, Giovanni; Towsley, Don. "Analysis of TTL-based Cache Networks". Proceedings of 6th International Conference on Performance Evaluation Methodologies and Tools. Retrieved Nov 15, 2012.

- Cox, David (1970). Renewal Theory. London: Methuen & Co. p. 142. ISBN 0-412-20570-X.

- Doob, J. L. (1948). "Renewal Theory From the Point of View of the Theory of Probability". Transactions of the American Mathematical Society 63 (3): 422–438. JSTOR 1990567.

- Smith, Walter L. (1958). "Renewal Theory and Its Ramifications". Journal of the Royal Statistical Society, Series B 20 (2): 243–302. JSTOR 2983891.

$$0 < \mathbb{E}[S_i] < \infty.$$

$$[J_n,J_{n+1}]$$

$$m(t) = \mathbb{E}[X_t].$$

$$\lim_{t \to \infty} \frac{1}{t}m(t) = 1/\mathbb{E}[S_1].$$

$$\lim_{t \to \infty} \frac {X_t}{t} = \frac{1}{\mathbb{E}[S_1]}.$$

$$\begin{aligned}
\overline{X_t} &\leq \sum_{i=1}^{[at]} \mathrm{Geometric}(p) \\
\mathbb{E}\left[\,\overline{X_t}^2\,\right] &\leq C_1 t + C_2 t^2 \\
P\left(\frac{X_t}{t} > x\right) &\leq \frac{E\left[X_t^2\right]}{t^2x^2} \leq \frac{E\left[\overline{X_t}^2\right]}{t^2x^2} \leq \frac{C}{x^2}.
\end{aligned}$$

$$g(t) = \mathbb{E}[Y_t].$$

$$\lim_{t \to \infty} \frac{1}{t}g(t) = \frac{\mathbb{E}[W_1]}{\mathbb{E}[S_1]}.$$

$$m(t) = \mathbb{E}[X_t] = \mathbb{E}[\mathbb{E}(X_t \mid S_1)].$$

$$\mathbb{E}(X_t \mid S_1=s) = \mathbb{I}_{\{t \geq s\}} \left( 1 + \mathbb{E}[X_{t-s}] \right).$$

$$\begin{aligned}
m(t) &= \mathbb{E}[X_t] \\
&= \mathbb{E}[\mathbb{E}(X_t \mid S_1)] \\
&= \int_0^\infty \mathbb{E}(X_t \mid S_1=s) f_S(s)\, ds \\
&= \int_0^\infty \mathbb{I}_{\{t \geq s\}} \left( 1 + \mathbb{E}[X_{t-s}] \right) f_S(s)\, ds \\
&= \int_0^t \left( 1 + m(t-s) \right) f_S(s)\, ds \\
&= F_S(t) + \int_0^t m(t-s) f_S(s)\, ds,
\end{aligned}$$

$$\begin{aligned}
\mathbb{P}(S_{X_t+1}>x) &= \int_0^\infty \mathbb{P}(S_{X_t+1}>x \mid J_{X_t} = s) f_S(s) \, ds \\
&= \int_0^\infty \mathbb{P}(S_{X_t+1}>x \mid S_{X_t+1}>t-s) f_S(s)\, ds \\
&= \int_0^\infty \frac{\mathbb{P}(S_{X_t+1}>x \, , \, S_{X_t+1}>t-s)}{\mathbb{P}(S_{X_t+1}>t-s)} f_S(s) \, ds \\
&= \int_0^\infty \frac{ 1-F(\max \{ x,t-s \}) }{1-F(t-s)} f_S(s) \, ds \\
&= \int_0^\infty \min \left\{\frac{ 1-F(x) }{1-F(t-s)},\frac{ 1-F(t-s) }{1-F(t-s)}\right\} f_S(s) \, ds \\
&= \int_0^\infty \min \left\{\frac{ 1-F(x) }{1-F(t-s)},1\right\} f_S(s) \, ds \\
&\geq 1-F(x) \\
&= \mathbb{P}(S_1>x)
\end{aligned}$$

$$\begin{aligned}
\mathbb{E}S &= \mathbb{E}[S \mid \text{fails before } t] \cdot \mathbb{P}[\text{fails before } t] + \mathbb{E}[S \mid \text{does not fail before } t] \cdot \mathbb{P}[\text{does not fail before } t] \\
&= \frac{t}{2}\left(0.5t\right) + \frac{2-t}{2}\left( t \right)
\end{aligned}$$