Phasor

In physics and engineering, a phasor (a portmanteau of phase vector[1][2]) is a complex number representing a sinusoidal function whose amplitude (A), angular frequency (ω), and initial phase (θ) are time-invariant. It is a special case of a more general concept called analytic representation.[3] Phasors separate the dependencies on A, ω, and θ into three independent factors. This is particularly useful because the frequency factor (which includes the time-dependence of the sinusoid) is often common to all the components of a linear combination of sinusoids. In those situations, phasors allow this common factor to be factored out, leaving just the A and θ information. A phasor may also be called a complex amplitude[4][5] and, in older texts, a sinor[6] or even a complexor.[6]

The origin of the term phasor rightly suggests that a (diagrammatic) calculus similar to the one used for vectors is possible for phasors as well.[6] An important additional feature of the phasor transform is that differentiation and integration of sinusoidal signals (having constant amplitude, period and phase) correspond to simple algebraic operations on the phasors. The phasor transform thus allows the AC steady state of RLC circuits to be analyzed by solving simple algebraic equations (albeit with complex coefficients) in the phasor domain instead of solving differential equations (with real coefficients) in the time domain.[7][8] The originator of the phasor transform was Charles Proteus Steinmetz, working at General Electric in the late 19th century.[9][10]

Glossing over some mathematical details, the phasor transform can also be seen as a particular case of the Laplace transform, which additionally can be used to (simultaneously) derive the transient response of an RLC circuit.[10][8] However, the Laplace transform is mathematically more difficult to apply and the effort may be unjustified if only steady state analysis is required.[10]

Definition

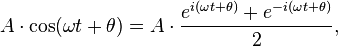

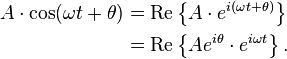

Euler's formula indicates that sinusoids can be represented mathematically as the sum of two complex-valued functions:

$$A\cos(\omega t + \theta) = A \cdot \frac{e^{i(\omega t + \theta)} + e^{-i(\omega t + \theta)}}{2},$$

or as the real part of one of the functions:

$$A\cos(\omega t + \theta) = \operatorname{Re}\{A e^{i(\omega t + \theta)}\} = \operatorname{Re}\{A e^{i\theta} \cdot e^{i\omega t}\}.$$

The term phasor can refer to either $A e^{i\theta} e^{i\omega t}$ or just the complex constant, $A e^{i\theta}$. In the latter case, it is understood to be a shorthand notation, encoding the amplitude and phase of an underlying sinusoid.

An even more compact shorthand is angle notation: $A \angle \theta$. See also vector notation.

When $A e^{i\theta} e^{i\omega t}$ is drawn as a rotating vector in the complex plane, the phase constant $\theta$ represents the angle that the vector forms with the real axis at t = 0.
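As a minimal sketch of this definition, the following Python snippet builds the static phasor A·e^{iθ} and recovers the sinusoid as the real part of the phasor times e^{iωt}. The function names and the numeric values are illustrative assumptions, not part of the article.

```python
import cmath
import math


def to_phasor(amplitude, phase):
    """Return the static phasor A*e^{i*theta} for the signal A*cos(omega*t + theta)."""
    return cmath.rect(amplitude, phase)


def sinusoid_from_phasor(phasor, omega, t):
    """Recover the real signal Re{phasor * e^{i*omega*t}} at time t."""
    return (phasor * cmath.exp(1j * omega * t)).real


# Illustrative values (assumed): amplitude 2, phase 30 degrees, 50 Hz.
A, theta, omega = 2.0, math.pi / 6, 2 * math.pi * 50.0
P = to_phasor(A, theta)

t = 0.003
direct = A * math.cos(omega * t + theta)          # evaluate the cosine directly
via_phasor = sinusoid_from_phasor(P, omega, t)    # evaluate through the phasor
```

Both evaluations agree to floating-point precision, and `abs(P)` and `cmath.phase(P)` return the amplitude and phase that angle notation writes as A∠θ.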

Phasor arithmetic

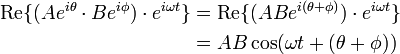

Multiplication by a constant (scalar)

Multiplication of the phasor $A e^{i\theta} e^{i\omega t}$ by a complex constant, $B e^{i\phi}$, produces another phasor. That means its only effect is to change the amplitude and phase of the underlying sinusoid:

$$\operatorname{Re}\{(A e^{i\theta} \cdot B e^{i\phi}) \cdot e^{i\omega t}\} = \operatorname{Re}\{(AB e^{i(\theta + \phi)}) \cdot e^{i\omega t}\} = AB \cos(\omega t + (\theta + \phi))$$

In electronics, $B e^{i\phi}$ would represent an impedance, which is independent of time. In particular, it is not the shorthand notation for another phasor. Multiplying a phasor current by an impedance produces a phasor voltage. But the product of two phasors (or squaring a phasor) would represent the product of two sinusoids, which is a non-linear operation that produces new frequency components. Phasor notation can only represent systems with one frequency, such as a linear system stimulated by a sinusoid.
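The scaling rule can be illustrated with a short Python sketch in which a current phasor is multiplied by an impedance to give a voltage phasor. The helper name and the component values are assumptions chosen for illustration.

```python
import cmath
import math


def scale_phasor(phasor, constant):
    """Multiply a phasor by a time-independent complex constant (e.g. an impedance)."""
    return phasor * constant


# Illustrative values (assumed): current 0.5 A at 20 degrees, impedance 100 ohm at 30 degrees.
I = cmath.rect(0.5, math.radians(20.0))
Z = cmath.rect(100.0, math.radians(30.0))
V = scale_phasor(I, Z)

# Amplitudes multiply and phases add.
V_amplitude = abs(V)
V_phase_deg = math.degrees(cmath.phase(V))

# Time-domain cross-check at an arbitrary instant.
omega, t = 2 * math.pi * 60.0, 0.002
from_phasor = (V * cmath.exp(1j * omega * t)).real
from_formula = 0.5 * 100.0 * math.cos(omega * t + math.radians(20.0 + 30.0))
```

Here the product has amplitude A·B and phase θ + φ, which is exactly what the real-part expression predicts for the underlying sinusoid.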

Differentiation and integration

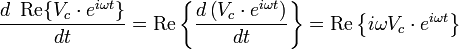

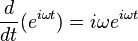

The time derivative or integral of a phasor produces another phasor.[lower-alpha 2] For example:

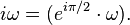

Therefore, in phasor representation, the time derivative of a sinusoid becomes just multiplication by the constant,

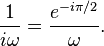

Similarly, integrating a phasor corresponds to multiplication by  The time-dependent factor,

The time-dependent factor,  , is unaffected.

, is unaffected.
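A small numerical check can make this concrete. The sketch below, with assumed helper names and illustrative values, compares a central finite-difference derivative of the sinusoid with the signal obtained by multiplying its phasor by iω.

```python
import cmath


def derivative_phasor(phasor, omega):
    """Time differentiation in the phasor domain: multiply by i*omega."""
    return 1j * omega * phasor


def integral_phasor(phasor, omega):
    """Time integration in the phasor domain: divide by i*omega."""
    return phasor / (1j * omega)


def signal(phasor, omega, t):
    """Real signal Re{phasor * e^{i*omega*t}}."""
    return (phasor * cmath.exp(1j * omega * t)).real


# Illustrative values (assumed).
A, theta, omega = 1.5, 0.4, 10.0
P = cmath.rect(A, theta)

t, h = 0.37, 1e-6
numeric = (signal(P, omega, t + h) - signal(P, omega, t - h)) / (2 * h)
from_phasor = signal(derivative_phasor(P, omega), omega, t)

# Integrating the differentiated phasor returns the original phasor.
round_trip = integral_phasor(derivative_phasor(P, omega), omega)
```

The two derivative values agree up to the finite-difference error, and the round trip shows that the two operations are inverses in the phasor domain.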

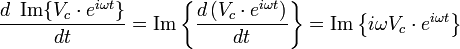

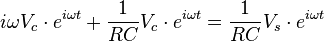

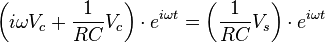

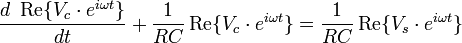

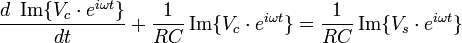

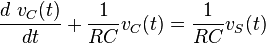

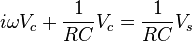

When we solve a linear differential equation with phasor arithmetic, we are merely factoring  out of all terms of the equation, and reinserting it into the answer. For example, consider the following differential equation for the voltage across the capacitor in an RC circuit:

out of all terms of the equation, and reinserting it into the answer. For example, consider the following differential equation for the voltage across the capacitor in an RC circuit:

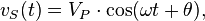

When the voltage source in this circuit is sinusoidal:

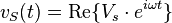

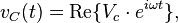

we may substitute:

where phasor  and phasor

and phasor  is the unknown quantity to be determined.

is the unknown quantity to be determined.

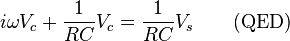

In the phasor shorthand notation, the differential equation reduces to[lower-alpha 3]:

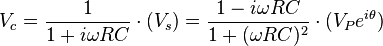

Solving for the phasor capacitor voltage gives:

As we have seen, the factor multiplying  represents differences of the amplitude and phase of

represents differences of the amplitude and phase of  relative to

relative to  and

and

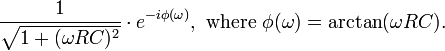

In polar coordinate form, it is:

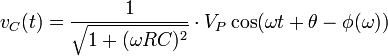

Therefore:
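The RC example can be sketched numerically, assuming the standard steady-state result V_c = V_s / (1 + iωRC) for the capacitor phasor. The component values and names below are illustrative assumptions; the snippet checks that the phasor satisfies the algebraic equation and matches the polar form with gain 1/√(1 + (ωRC)²) and phase lag arctan(ωRC).

```python
import cmath
import math


def capacitor_phasor(V_s, omega, R, C):
    """Steady-state capacitor voltage phasor of a series RC circuit."""
    return V_s / (1 + 1j * omega * R * C)


# Illustrative values (assumed): 1 kOhm, 1 uF, omega chosen so that omega*R*C = 1.
R, C = 1e3, 1e-6
omega = 1000.0
V_P, theta = 5.0, 0.0

V_s = cmath.rect(V_P, theta)
V_c = capacitor_phasor(V_s, omega, R, C)

# Residual of the phasor-domain equation i*omega*V_c + V_c/(RC) = V_s/(RC).
residual = 1j * omega * V_c + V_c / (R * C) - V_s / (R * C)

# Polar form: amplitude ratio and phase lag relative to the source.
gain = 1 / math.sqrt(1 + (omega * R * C) ** 2)
phi = math.atan(omega * R * C)
```

With ωRC = 1 the capacitor voltage has amplitude V_P/√2 and lags the source by 45°, the familiar corner-frequency behaviour of a first-order low-pass filter.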

Addition

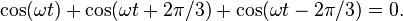

The sum of multiple phasors produces another phasor. That is because the sum of sinusoids with the same frequency is also a sinusoid with that frequency:

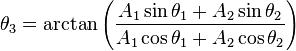

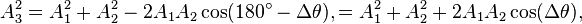

where:

or, via the law of cosines on the complex plane (or the trigonometric identity for angle differences):

where  .

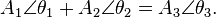

A key point is that A3 and θ3 do not depend on ω or t, which is what makes phasor notation possible. The time and frequency dependence can be suppressed and re-inserted into the outcome as long as the only operations used in between are ones that produce another phasor. In angle notation, the operation shown above is written:

.

A key point is that A3 and θ3 do not depend on ω or t, which is what makes phasor notation possible. The time and frequency dependence can be suppressed and re-inserted into the outcome as long as the only operations used in between are ones that produce another phasor. In angle notation, the operation shown above is written:
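Phasor addition can be sketched in a few lines of Python: convert each A∠θ to rectangular form, add, and convert back. The function name and the 3-4-5 example values are assumptions for illustration; the snippet also cross-checks the law-of-cosines amplitude and the time-domain sum.

```python
import cmath
import math


def add_phasors(A1, theta1, A2, theta2):
    """Return (A3, theta3) such that A1∠theta1 + A2∠theta2 = A3∠theta3."""
    total = cmath.rect(A1, theta1) + cmath.rect(A2, theta2)
    return abs(total), cmath.phase(total)


# Illustrative values (assumed): two sinusoids in quadrature.
A1, th1 = 3.0, 0.0
A2, th2 = 4.0, math.pi / 2
A3, th3 = add_phasors(A1, th1, A2, th2)

# Amplitude from the law of cosines, with delta = theta1 - theta2.
A3_cosine_law = math.sqrt(A1**2 + A2**2 + 2 * A1 * A2 * math.cos(th1 - th2))

# Time-domain cross-check at an arbitrary instant and frequency.
omega, t = 7.0, 0.123
lhs = A1 * math.cos(omega * t + th1) + A2 * math.cos(omega * t + th2)
rhs = A3 * math.cos(omega * t + th3)
```

Note that neither `A3` nor `th3` depends on `omega` or `t`; those only appear in the final cross-check.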

Another way to view addition is that two vectors with coordinates [A1 cos(ωt + θ1), A1 sin(ωt + θ1)] and [A2 cos(ωt + θ2), A2 sin(ωt + θ2)] are added vectorially to produce a resultant vector with coordinates [A3 cos(ωt + θ3), A3 sin(ωt + θ3)]. (see animation)

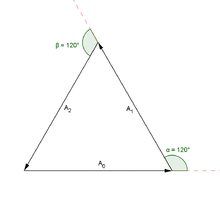

In physics, this sort of addition occurs when sinusoids interfere with each other, constructively or destructively. The static vector concept provides useful insight into questions like this: "What phase difference would be required between three identical sinusoids for perfect cancellation?" In this case, simply imagine taking three vectors of equal length and placing them head to tail such that the last head matches up with the first tail. The shape that satisfies these conditions is an equilateral triangle, so the angle from each phasor to the next is 120° (2π/3 radians), or one third of a wavelength, λ/3. So the phase difference between each wave must also be 120°, as is the case in three-phase power.

In other words, what this shows is:

$$\cos(\omega t) + \cos(\omega t + 2\pi/3) + \cos(\omega t - 2\pi/3) = 0.$$

In the example of three waves, the phase difference between the first and the last wave was 240 degrees, while for two waves destructive interference happens at 180 degrees. In the limit of many waves, the phasors must form a circle for destructive interference, so that the first phasor is nearly parallel with the last. This means that for many sources, destructive interference happens when the first and last wave differ by 360 degrees, a full wavelength λ. This is why in single-slit diffraction, the minima occur when light from the far edge travels a full wavelength further than the light from the near edge.
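The cancellation argument can be checked with a short Python sketch that places n equal phasors at evenly spaced angles around a full turn and sums them. The function names are hypothetical; the second helper reports the phase difference between the first and last phasor, which approaches 360° as n grows.

```python
import cmath
import math


def sum_of_equal_phasors(n, amplitude=1.0):
    """Sum n equal-amplitude phasors whose phases step by 2*pi/n."""
    step = 2 * math.pi / n
    return sum(cmath.rect(amplitude, k * step) for k in range(n))


def first_to_last_degrees(n):
    """Phase difference, in degrees, between the first and the last of the n phasors."""
    return 360.0 * (n - 1) / n


# Residual magnitude of the sum for two, three, and many waves.
residuals = {n: abs(sum_of_equal_phasors(n)) for n in (2, 3, 100)}
```

For n = 2 and n = 3 the first-to-last difference is 180° and 240°, matching the cases discussed above, and for n = 100 it is already 356.4°; in every case the sum vanishes to rounding error.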

Phasor diagrams

Electrical engineers, electronics engineers, electronic engineering technicians and aircraft engineers all use phasor diagrams to visualize complex constants and variables (phasors). Like vectors, arrows drawn on graph paper or computer displays represent phasors. Cartesian and polar representations each have advantages, with the Cartesian coordinates showing the real and imaginary parts of the phasor and the polar coordinates showing its magnitude and phase.

Applications

Circuit laws

With phasors, the techniques for solving DC circuits can be applied to solve AC circuits. A list of the basic laws is given below.

- Ohm's law for resistors: a resistor has no time delays and therefore does not change the phase of a signal, so V = IR remains valid.

- Ohm's law for resistors, inductors, and capacitors: V = IZ, where Z is the complex impedance.

- In an AC circuit, real power (P) represents the average power delivered to the circuit, and reactive power (Q) indicates power flowing back and forth. We can also define the complex power S = P + jQ and the apparent power, which is the magnitude of S. The power law for an AC circuit expressed in phasors is then S = VI* (where I* is the complex conjugate of I, and V and I are given as RMS phasors).

- Kirchhoff's circuit laws work with phasors in complex form.

Given this we can apply the techniques of analysis of resistive circuits with phasors to analyze single frequency AC circuits containing resistors, capacitors, and inductors. Multiple frequency linear AC circuits and AC circuits with different waveforms can be analyzed to find voltages and currents by transforming all waveforms to sine wave components with magnitude and phase then analyzing each frequency separately, as allowed by the superposition theorem.
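The laws listed above can be combined in a small worked sketch for a series RLC circuit. The component values and names are illustrative assumptions, the phasors are taken as RMS values, and the standard impedances R, iωL and 1/(iωC) are used for the three elements.

```python
import cmath


def series_rlc_impedance(R, L, C, omega):
    """Complex impedance of a series RLC branch: R + i*omega*L + 1/(i*omega*C)."""
    return R + 1j * omega * L + 1 / (1j * omega * C)


def complex_power(V, I):
    """Complex power S = V * conj(I) for RMS phasors V and I."""
    return V * I.conjugate()


# Illustrative values (assumed): 30 ohm, 50 mH, 100 uF at 1000 rad/s.
R, L, C, omega = 30.0, 0.05, 1e-4, 1000.0
V = complex(100.0, 0.0)                  # 100 V RMS source at 0 degrees

Z = series_rlc_impedance(R, L, C, omega)  # about 30 + 40i ohm
I = V / Z                                 # Ohm's law with complex impedance
S = complex_power(V, I)
P, Q = S.real, S.imag                     # real and reactive power

# Kirchhoff's voltage law in phasor form: element voltages sum to the source.
V_R = I * R
V_L = I * (1j * omega * L)
V_C = I / (1j * omega * C)
kvl_residual = V - (V_R + V_L + V_C)
```

With these values the current magnitude is 2 A, so P equals |I|²R = 120 W and Q equals |I|² times the net reactance of 40 Ω, or 160 var.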

Power engineering

In the analysis of three-phase AC power systems, a set of phasors is usually defined as the three complex cube roots of unity, graphically represented as unit magnitudes at angles of 0, 120 and 240 degrees. By treating polyphase AC circuit quantities as phasors, balanced circuits can be simplified and unbalanced circuits can be treated as an algebraic combination of symmetrical circuits. This approach greatly simplifies the work required in electrical calculations of voltage drop, power flow, and short-circuit currents. In the context of power systems analysis, the phase angle is often given in degrees, and the magnitude as an RMS value rather than the peak amplitude of the sinusoid.
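One common way to carry out the decomposition of an unbalanced set into symmetrical sets is the method of symmetrical components, which the article does not name explicitly. The sketch below assumes that method, uses the cube root of unity a = e^{i2π/3}, and applies it to a balanced positive-sequence set; all names and values are illustrative.

```python
import cmath
import math

# Cube root of unity at 120 degrees; 1, a, a*a are the three unit phasors.
a = cmath.exp(2j * math.pi / 3)


def symmetrical_components(Va, Vb, Vc):
    """Return the (zero, positive, negative) sequence phasors of a three-phase set."""
    V0 = (Va + Vb + Vc) / 3
    V1 = (Va + a * Vb + a * a * Vc) / 3
    V2 = (Va + a * a * Vb + a * Vc) / 3
    return V0, V1, V2


# Balanced positive-sequence set (assumed 230 V RMS): b lags a by 120 degrees, c by 240.
V = complex(230.0, 0.0)
Va, Vb, Vc = V, a * a * V, a * V
V0, V1, V2 = symmetrical_components(Va, Vb, Vc)

# The three cube roots of unity sum to zero.
roots_sum = 1 + a + a * a
```

For a balanced set only the positive-sequence component is non-zero, which is why balanced circuits reduce to a single-phase calculation; an unbalanced set would yield non-zero `V0` or `V2` as well.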

The technique of synchrophasors uses digital instruments to measure the phasors representing transmission system voltages at widespread points in a transmission network. Small changes in the phasors are sensitive indicators of power flow and system stability.

See also

- In-phase and quadrature components

- Analytic signal

- Complex envelope

- Phase factor, a phasor of unit magnitude

Footnotes

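- ↑ i is the imaginary unit ($i^2 = -1$). In electrical engineering texts, the imaginary unit is often symbolized by j. The frequency of the wave, in Hz, is given by $\omega / 2\pi$.

- ↑ This results from $\frac{d}{dt}(e^{i\omega t}) = i\omega e^{i\omega t}$, which means that the complex exponential is the eigenfunction of the derivative operation.

- ↑ Proof: The differential equation with the substituted signals is

$$\frac{d\operatorname{Re}\{V_c e^{i\omega t}\}}{dt} + \frac{1}{RC}\operatorname{Re}\{V_c e^{i\omega t}\} = \frac{1}{RC}\operatorname{Re}\{V_s e^{i\omega t}\} \qquad \text{(Eq.1)}$$

Since this must hold for all t, specifically for $t - \frac{\pi}{2\omega}$, it follows that:

$$\frac{d\operatorname{Im}\{V_c e^{i\omega t}\}}{dt} + \frac{1}{RC}\operatorname{Im}\{V_c e^{i\omega t}\} = \frac{1}{RC}\operatorname{Im}\{V_s e^{i\omega t}\} \qquad \text{(Eq.2)}$$

Because $V_c$ and $V_s$ are constants, the time derivative commutes with taking the real or imaginary part. Multiplying Eq.2 by i and adding both equations gives:

$$i\omega V_c e^{i\omega t} + \frac{1}{RC} V_c e^{i\omega t} = \frac{1}{RC} V_s e^{i\omega t},$$

and dividing by $e^{i\omega t}$ leaves $i\omega V_c + \frac{1}{RC} V_c = \frac{1}{RC} V_s$.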

References

- ↑ Huw Fox; William Bolton (2002). Mathematics for Engineers and Technologists. Butterworth-Heinemann. p. 30. ISBN 978-0-08-051119-1.

- ↑ Clay Rawlins (2000). Basic AC Circuits (2nd ed.). Newnes. p. 124. ISBN 978-0-08-049398-5.

- ↑ Ron Bracewell (1965). The Fourier Transform and Its Applications. McGraw-Hill. p. 269.

- ↑ K. S. Suresh Kumar (2008). Electric Circuits and Networks. Pearson Education India. p. 272. ISBN 978-81-317-1390-7.

- ↑ Keqian Zhang; Dejie Li (2007). Electromagnetic Theory for Microwaves and Optoelectronics (2nd ed.). Springer Science & Business Media. p. 13. ISBN 978-3-540-74296-8.

- ↑ 6.0 6.1 6.2 J. Hindmarsh (1984). Electrical Machines & their Applications (4th ed.). Elsevier. p. 58. ISBN 978-1-4832-9492-6.

- ↑ William J. Eccles (2011). Pragmatic Electrical Engineering: Fundamentals. Morgan & Claypool Publishers. p. 51. ISBN 978-1-60845-668-0.

- ↑ 8.0 8.1 Richard C. Dorf; James A. Svoboda (2010). Introduction to Electric Circuits (8th ed.). John Wiley & Sons. p. 661. ISBN 978-0-470-52157-1.

- ↑ Allan H. Robbins; Wilhelm Miller (2012). Circuit Analysis: Theory and Practice (5th ed.). Cengage Learning. p. 536. ISBN 1-285-40192-1.

- ↑ 10.0 10.1 10.2 Won Y. Yang; Seung C. Lee (2008). Circuit Systems with MATLAB and PSpice. John Wiley & Sons. pp. 256–261. ISBN 978-0-470-82240-1.

Further reading

- Douglas C. Giancoli (1989). Physics for Scientists and Engineers. Prentice Hall. ISBN 0-13-666322-2.

- Richard C. Dorf; Ronald J. Tallarida (1993). Pocket Book of Electrical Engineering Formulas (1st ed.). Boca Raton, FL: CRC Press. pp. 152–155. ISBN 0849344735.

