nat (unit)

The natural unit of information (symbol nat),[1] sometimes also nit or nepit, is a unit of information or entropy based on natural logarithms and powers of e, rather than on the powers of 2 and base-2 logarithms that define the bit. The nat is the natural unit for information entropy. Physical systems of natural units that normalize the Boltzmann constant to 1 are effectively measuring thermodynamic entropy in nats.
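As a rough illustration of the last point, a thermodynamic entropy S given in joules per kelvin becomes a dimensionless number of nats once it is divided by the Boltzmann constant k_B (exactly 1.380649 × 10⁻²³ J/K in the 2019 SI). In units where k_B = 1, that quotient is simply the value reported. The Python sketch below assumes this convention, and its function names are hypothetical. It also uses the Boltzmann formula S = k_B ln W, which for W equally likely microstates gives S / k_B = ln W nats.

```python
import math

# Boltzmann constant in joules per kelvin (exact by definition in the 2019 SI).
BOLTZMANN_J_PER_K = 1.380649e-23


def thermodynamic_entropy_to_nats(entropy_j_per_k: float) -> float:
    """Express a thermodynamic entropy S, given in J/K, in nats as S / k_B."""
    return entropy_j_per_k / BOLTZMANN_J_PER_K


def boltzmann_entropy_nats(microstates: int) -> float:
    """Entropy of W equally likely microstates, S / k_B = ln W, in nats."""
    return math.log(microstates)


if __name__ == "__main__":
    # An entropy of exactly k_B J/K corresponds to one nat.
    print(thermodynamic_entropy_to_nats(BOLTZMANN_J_PER_K))  # 1.0
    # Two equally likely microstates: ln 2, about 0.693 nats.
    print(boltzmann_entropy_nats(2))
```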

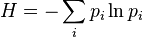

When the Shannon entropy is written using a natural logarithm,

$$\mathrm{H} = -\sum_i p_i \ln p_i,$$

it is implicitly giving a number measured in nats.
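A minimal Python sketch of Shannon entropy computed with the natural logarithm, H = -Σ p_i ln p_i, assuming a discrete distribution given as a list of probabilities that sum to 1. `entropy_nats` is a hypothetical helper name, not a library function. Because `math.log` with one argument is the natural logarithm, the result comes out in nats.

```python
import math
from typing import Iterable


def entropy_nats(probabilities: Iterable[float]) -> float:
    """Shannon entropy H = -sum_i p_i ln p_i, measured in nats.

    Terms with p_i == 0 are skipped, matching the limit p ln p -> 0.
    """
    return sum(-p * math.log(p) for p in probabilities if p > 0)


if __name__ == "__main__":
    # A fair coin: H = ln 2, about 0.693 nats (equivalently 1 Sh).
    print(entropy_nats([0.5, 0.5]))
    # A uniform distribution over 4 outcomes: H = ln 4, about 1.386 nats.
    print(entropy_nats([0.25] * 4))
```

Swapping `math.log(p)` for `math.log2(p)` would give the same entropy in shannons instead.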

One nat is equal to 1/(ln 2) shannons ≈ 1.44 Sh or, equivalently, 1/(ln 10) hartleys ≈ 0.434 Hart.[2] The factors 1.44 and 0.434 arise from the relationships

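$$(\ln 2)\log_2 x = \ln x \quad\text{and}\quad (\ln 10)\log_{10} x = \ln x,$$

so that, setting $x = e$,

$$1\ \text{nat} = \log_2 e\ \text{Sh} = \frac{1}{\ln 2}\ \text{Sh} \approx 1.443\ \text{Sh}, \qquad 1\ \text{nat} = \log_{10} e\ \text{Hart} = \frac{1}{\ln 10}\ \text{Hart} \approx 0.4343\ \text{Hart}.$$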
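Since (ln b)·log_b x = ln x for any base b, converting a quantity in nats to base-b units amounts to multiplying by 1/ln b. A short Python sketch of that conversion follows; the constant and function names are illustrative, not taken from any standard library.

```python
import math

# Conversion factors from (ln b) * log_b(x) = ln(x), evaluated at x = e.
SHANNONS_PER_NAT = 1 / math.log(2)    # log2(e), about 1.443
HARTLEYS_PER_NAT = 1 / math.log(10)   # log10(e), about 0.4343


def nats_to_shannons(nats: float) -> float:
    """Convert an amount of information from nats to shannons (bits)."""
    return nats * SHANNONS_PER_NAT


def nats_to_hartleys(nats: float) -> float:
    """Convert an amount of information from nats to hartleys."""
    return nats * HARTLEYS_PER_NAT


def shannons_to_nats(shannons: float) -> float:
    """Inverse conversion: one shannon equals ln 2 nats."""
    return shannons * math.log(2)


if __name__ == "__main__":
    print(f"1 nat = {nats_to_shannons(1):.4f} Sh")    # 1 nat = 1.4427 Sh
    print(f"1 nat = {nats_to_hartleys(1):.4f} Hart")  # 1 nat = 0.4343 Hart
```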

One nat is the information content of an event if the probability of that event occurring is 1/e.
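The information content (self-information) of an event with probability p is -ln p nats, so an event with p = 1/e carries -ln(1/e) = 1 nat. A minimal Python check follows; `self_information` is a hypothetical helper, and the `base` parameter is an illustrative choice for switching units.

```python
import math


def self_information(p: float, base: float = math.e) -> float:
    """Information content -log_base(p) of an event with probability p.

    base=e gives nats, base=2 gives shannons, base=10 gives hartleys.
    """
    if not 0 < p <= 1:
        raise ValueError("p must lie in (0, 1]")
    return -math.log(p, base)


if __name__ == "__main__":
    p = 1 / math.e
    print(self_information(p))          # 1 nat, up to floating-point rounding
    print(self_information(p, base=2))  # about 1.443 Sh
```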

History

Alan Turing used the natural ban (Hodges 1983, Alan Turing: The Enigma). Boulton and Wallace (1970) used the term nit in conjunction with minimum message length; the minimum description length community subsequently changed it to nat to avoid confusion with the nit used as a unit of luminance (Comley and Dowe, 2005, sec. 11.4.1, p. 271).

References

- Comley, J. W. & Dowe, D. L. (2005). "Minimum Message Length, MDL and Generalised Bayesian Networks with Asymmetric Languages". In Grünwald, P.; Myung, I. J. & Pitt, M. A. (eds.). Advances in Minimum Description Length: Theory and Applications. Cambridge: MIT Press. ISBN 0-262-07262-9.

- Reza, Fazlollah M. (1994). An Introduction to Information Theory. New York: Dover. ISBN 0-486-68210-2.

- [1] IEC 80000-13:2008.

- [2] "IEC 80000-13:2008". International Electrotechnical Commission. Retrieved 21 July 2013.