Inverse function theorem

In mathematics, specifically differential calculus, the inverse function theorem gives sufficient conditions for a function to be invertible in a neighborhood of a point in its domain. The theorem also gives a formula for the derivative of the inverse function. In multivariable calculus, this theorem can be generalized to any continuously differentiable, vector-valued function whose Jacobian determinant is nonzero at a point in its domain. In this case, the theorem gives a formula for the Jacobian matrix of the inverse. There are also versions of the inverse function theorem for complex holomorphic functions, for differentiable maps between manifolds, for differentiable functions between Banach spaces, and so forth.

Statement of the theorem

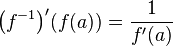

For functions of a single variable, the theorem states that if $f$ is a continuously differentiable function with nonzero derivative at the point $a$, then $f$ is invertible in a neighborhood of $a$, the inverse is continuously differentiable, and

$$\bigl(f^{-1}\bigr)'(f(a)) = \frac{1}{f'(a)}.$$
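The single-variable derivative formula, $(f^{-1})'(f(a)) = 1/f'(a)$, can be checked numerically. The sketch below is only an illustration: the function `f(x) = x**3 + x`, the point `a = 1`, and the bisection-based `f_inverse` are assumptions chosen for this example and do not come from the article.

```python
import math

def f(x):
    # Hypothetical example function; f'(x) = 3x^2 + 1 is never zero.
    return x**3 + x

def f_prime(x):
    return 3 * x**2 + 1

def f_inverse(y, lo=-10.0, hi=10.0, tol=1e-13):
    # Bisection works here because f is strictly increasing on [lo, hi].
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if f(mid) < y:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)

a = 1.0
b = f(a)  # the point at which the inverse is differentiated
h = 1e-5

# Central-difference estimate of the derivative of the inverse at b.
numeric = (f_inverse(b + h) - f_inverse(b - h)) / (2 * h)

# Value predicted by the theorem.
predicted = 1.0 / f_prime(a)

print(numeric, predicted)
```

The two printed values should agree to several decimal places; the remaining gap comes from the finite-difference step and the bisection tolerance.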

For functions of more than one variable, the theorem states that if the total derivative of a continuously differentiable function $F$ defined from an open set of $\mathbb{R}^n$ into $\mathbb{R}^n$ is invertible at a point $p$ (i.e., the Jacobian determinant of $F$ at $p$ is non-zero), then $F$ is an invertible function near $p$. That is, an inverse function to $F$ exists in some neighborhood of $F(p)$. Moreover, the inverse function $F^{-1}$ is also continuously differentiable. In the infinite-dimensional case it is required that the Fréchet derivative have a bounded inverse at $p$.

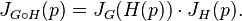

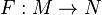

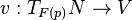

Finally, the theorem says that

$$J_{F^{-1}}(F(p)) = [J_F(p)]^{-1},$$

where $[\cdot]^{-1}$ denotes matrix inverse and $J_G(q)$ is the Jacobian matrix of the function $G$ at the point $q$.

This formula can also be derived from the chain rule. The chain rule states that for functions $G$ and $H$ which have total derivatives at $H(p)$ and $p$ respectively,

$$J_{G \circ H}(p) = J_G(H(p)) \cdot J_H(p).$$

Letting $G$ be $F^{-1}$ and $H$ be $F$, $G \circ H$ is the identity function, whose Jacobian matrix is also the identity. In this special case, the formula above can be solved for $J_{F^{-1}}(F(p))$.

Note that the chain rule assumes the existence of the total derivative of the inside function $H$, while the inverse function theorem proves that $F^{-1}$ has a total derivative at $F(p)$.

The existence of an inverse function to

.

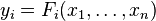

The existence of an inverse function to  is equivalent to saying that the system of

is equivalent to saying that the system of  equations

equations  can be solved for

can be solved for  in terms of

in terms of  if we restrict

if we restrict  and

and  to small enough neighborhoods of

to small enough neighborhoods of  and

and  , respectively.

, respectively.

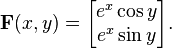

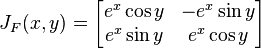

Example

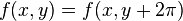

Consider the vector-valued function $F$ from $\mathbb{R}^2$ to $\mathbb{R}^2$ defined by

$$F(x, y) = \begin{bmatrix} e^x \cos y \\ e^x \sin y \end{bmatrix}.$$

Then the Jacobian matrix is

$$J_F(x, y) = \begin{bmatrix} e^x \cos y & -e^x \sin y \\ e^x \sin y & e^x \cos y \end{bmatrix}$$

and the determinant is

$$\det J_F(x, y) = e^{2x} \cos^2 y + e^{2x} \sin^2 y = e^{2x}.$$

The determinant $e^{2x}$ is nonzero everywhere. By the theorem, for every point $p$ in $\mathbb{R}^2$, there exists a neighborhood about $p$ over which $F$ is invertible. Note that this is different from saying $F$ is invertible over its entire image. In this example, $F$ is not invertible because it is not injective (because $F(x, y) = F(x, y + 2\pi)$).
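The Jacobian identity $J_{F^{-1}}(F(p)) = [J_F(p)]^{-1}$ can be checked numerically for a map of this kind. The sketch below assumes the map $F(x, y) = (e^x \cos y, e^x \sin y)$; the helper names, the sample point `p`, and the explicit local inverse branch `local_inverse` (valid only for $-\pi < y < \pi$) are illustrative assumptions, not part of the article.

```python
import math

def F(x, y):
    return (math.exp(x) * math.cos(y), math.exp(x) * math.sin(y))

def jacobian_F(x, y):
    ex = math.exp(x)
    return [[ex * math.cos(y), -ex * math.sin(y)],
            [ex * math.sin(y),  ex * math.cos(y)]]

def local_inverse(u, v):
    # One branch of the inverse, valid only where -pi < y < pi.
    return (0.5 * math.log(u * u + v * v), math.atan2(v, u))

def numeric_jacobian(G, u, v, h=1e-6):
    # Central differences; row i holds the partial derivatives of G_i.
    gu_p, gu_m = G(u + h, v), G(u - h, v)
    gv_p, gv_m = G(u, v + h), G(u, v - h)
    return [[(gu_p[i] - gu_m[i]) / (2 * h),
             (gv_p[i] - gv_m[i]) / (2 * h)] for i in range(2)]

def matmul2(A, B):
    return [[sum(A[i][k] * B[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]

p = (0.3, 0.7)   # arbitrary sample point
q = F(*p)

# If the theorem's formula holds, this product is close to the identity.
product = matmul2(numeric_jacobian(local_inverse, *q), jacobian_F(*p))
print(product)

# Global injectivity fails: shifting y by 2*pi gives the same image point.
print(F(*p), F(p[0], p[1] + 2 * math.pi))
```

The first print should show a matrix close to the 2×2 identity, and the second should show two nearly identical points, which is the failure of global invertibility described above.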

Notes on methods of proof

As an important result, the inverse function theorem has been given numerous proofs. The proof most commonly seen in textbooks relies on the contraction mapping principle, also known as the Banach fixed point theorem. (This theorem can also be used as the key step in the proof of existence and uniqueness of solutions to ordinary differential equations.) Since this theorem applies in infinite-dimensional (Banach space) settings, it is the tool used in proving the infinite-dimensional version of the inverse function theorem (see "Generalizations", below). An alternate proof (which works only in finite dimensions) instead uses as the key tool the extreme value theorem for functions on a compact set.[1] Yet another proof uses Newton's method, which has the advantage of providing an effective version of the theorem. That is, given specific bounds on the derivative of the function, an estimate of the size of the neighborhood on which the function is invertible can be obtained.[2]
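The Newton's-method viewpoint can be illustrated by computing a local inverse numerically: starting from $p$, iterate $x_{k+1} = x_k - J_F(x_k)^{-1}\,(F(x_k) - y)$ for a target $y$ near $F(p)$. The sketch below is not the proof itself. The map $F(x, y) = (e^x \cos y, e^x \sin y)$, the starting point, the target, and all function names are assumptions made for this example.

```python
import math

def F(x, y):
    # Illustrative map; its Jacobian determinant e^(2x) is never zero.
    return (math.exp(x) * math.cos(y), math.exp(x) * math.sin(y))

def jacobian_F(x, y):
    ex = math.exp(x)
    return [[ex * math.cos(y), -ex * math.sin(y)],
            [ex * math.sin(y),  ex * math.cos(y)]]

def solve2(J, r):
    # Solve the 2x2 linear system J d = r by Cramer's rule.
    det = J[0][0] * J[1][1] - J[0][1] * J[1][0]
    return ((r[0] * J[1][1] - J[0][1] * r[1]) / det,
            (J[0][0] * r[1] - J[1][0] * r[0]) / det)

def newton_inverse(target, start, steps=20, tol=1e-14):
    # Newton iteration for F(x, y) = target, started at `start`.
    x, y = start
    for _ in range(steps):
        fx, fy = F(x, y)
        r = (fx - target[0], fy - target[1])
        if math.hypot(r[0], r[1]) < tol:
            break
        dx, dy = solve2(jacobian_F(x, y), r)
        x, y = x - dx, y - dy
    return (x, y)

p = (0.3, 0.7)
target = F(0.35, 0.65)            # a point close to F(p)
approx = newton_inverse(target, p)
print(approx)                     # expected to be close to (0.35, 0.65)
```

The iteration only converges when the target is close enough to $F(p)$. Quantifying "close enough" from bounds on the derivative is what the effective version of the theorem provides.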

Generalizations

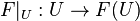

Manifolds

The inverse function theorem can be generalized to differentiable maps between differentiable manifolds. In this context the theorem states that for a differentiable map $F : M \to N$, if the derivative of $F$,

$$(dF)_p : T_p M \to T_{F(p)} N,$$

is a linear isomorphism at a point $p$ in $M$, then there exists an open neighborhood $U$ of $p$ such that

$$F|_U : U \to F(U)$$

is a diffeomorphism. Note that this implies that $M$ and $N$ must have the same dimension at $p$.

If the derivative of $F$ is an isomorphism at all points $p$ in $M$, then the map $F$ is a local diffeomorphism.

Banach spaces

The inverse function theorem can also be generalized to differentiable maps between Banach spaces. Let $X$ and $Y$ be Banach spaces and $U$ an open neighbourhood of the origin in $X$. Let $F : U \to Y$ be continuously differentiable and assume that the derivative $(dF)_0 : X \to Y$ of $F$ at 0 is a bounded linear isomorphism of $X$ onto $Y$. Then there exists an open neighbourhood $V$ of $F(0)$ in $Y$ and a continuously differentiable map $G : V \to X$ such that $F(G(y)) = y$ for all $y$ in $V$. Moreover, $G(y)$ is the only sufficiently small solution $x$ of the equation $F(x) = y$.

Banach manifolds

These two directions of generalization can be combined in the inverse function theorem for Banach manifolds.[3]

Constant rank theorem

The inverse function theorem (and the implicit function theorem) can be seen as a special case of the constant rank theorem, which states that a smooth map with constant rank near a point can be put in a particular normal form near that point.[4] Specifically, if $F : M \to N$ has constant rank near a point $p \in M$, then there are open neighborhoods $U$ of $p$ and $V$ of $F(p)$ and there are diffeomorphisms $u : T_p M \to U$ and $v : T_{F(p)} N \to V$ such that $F(U) \subseteq V$ and such that the derivative $dF_p : T_p M \to T_{F(p)} N$ is equal to $v^{-1} \circ F \circ u$. That is, $F$ "looks like" its derivative near $p$. Semicontinuity of the rank function implies that the set of points near which the derivative has constant rank is an open dense subset of the domain of the map. So the constant rank theorem applies "generically" across the domain.

When the derivative of $F$ is injective (resp. surjective) at a point $p$, it is also injective (resp. surjective) in a neighborhood of $p$, and hence the rank of $F$ is constant on that neighborhood, so the constant rank theorem applies.

Holomorphic functions

If the Jacobian (in this context the matrix formed by the complex derivatives) of a holomorphic function $F$, defined from an open set $U$ of $\mathbb{C}^n$ into $\mathbb{C}^n$, is invertible at a point $p$, then $F$ is an invertible function near $p$. This follows immediately from the theorem above. One can also show that this inverse is again a holomorphic function.[5]

Notes

1. Michael Spivak, Calculus on Manifolds.

2. John H. Hubbard and Barbara Burke Hubbard, Vector Analysis, Linear Algebra, and Differential Forms: A Unified Approach, Matrix Editions, 2001.

3. Serge Lang, Differential and Riemannian Manifolds, Springer, 1995, ISBN 0-387-94338-2.

4. William M. Boothby, An Introduction to Differentiable Manifolds and Riemannian Geometry, Revised Second Edition, Academic Press, 2002, ISBN 0-12-116051-3.

5. K. Fritzsche and H. Grauert, From Holomorphic Functions to Complex Manifolds, Springer-Verlag, 2002, p. 33.


