Idempotent matrix

In algebra, an idempotent matrix is a matrix which, when multiplied by itself, yields itself.[1][2] That is, the matrix M is idempotent if and only if MM = M. For this product MM to be defined, M must necessarily be a square matrix. Viewed this way, idempotent matrices are idempotent elements of matrix rings.
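The definition translates directly into a small check. The following is a minimal sketch in plain Python, assuming matrices are stored as lists of rows; the helper names `matmul` and `is_idempotent` and the sample matrices are illustrative choices, not part of any library or of the cited sources.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def is_idempotent(m, tol=0.0):
    """Return True if m is square and m*m equals m entry-wise (within tol)."""
    n = len(m)
    if any(len(row) != n for row in m):
        return False  # the product MM is only defined for square matrices
    mm = matmul(m, m)
    return all(abs(mm[i][j] - m[i][j]) <= tol for i in range(n) for j in range(n))


print(is_idempotent([[1, 0], [0, 1]]))    # True: the identity matrix
print(is_idempotent([[3, -6], [1, -2]]))  # True: a non-trivial example
print(is_idempotent([[2, 0], [0, 2]]))    # False: squaring gives 4 on the diagonal
```

For floating-point entries a small positive `tol` is usually needed, since exact equality rarely survives rounding.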

Example

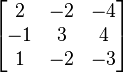

Examples of a  and a

and a  idempotent matrix are

idempotent matrix are  and

and  , respectively.

, respectively.

Real 2 × 2 case

If a matrix  is idempotent, then

is idempotent, then

-

-

implies

implies  so

so  or

or  .

. -

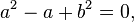

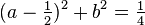

If b = c, the matrix  will be idempotent provided

will be idempotent provided  so a satisfies the quadratic equation

so a satisfies the quadratic equation

or

or

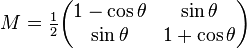

which is a circle with center (1/2, 0) and radius 1/2. In terms of an angle θ,

is idempotent.

is idempotent.

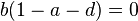

However, $b = c$ is not a necessary condition: any matrix $\begin{bmatrix} a & b \\ c & 1 - a \end{bmatrix}$ with $a^2 + bc = a$ is idempotent.
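Both 2 × 2 families can be checked numerically. The sketch below assumes the symmetric family is M(θ) = ½[[1 − cos θ, sin θ], [sin θ, 1 + cos θ]] and the general trace-one family is [[a, b], [c, 1 − a]] with a² + bc = a; the function names and the tolerance are illustrative assumptions.

```python
import math


def square_2x2(m):
    """Return M*M for a 2x2 matrix given as [[a, b], [c, d]]."""
    (a, b), (c, d) = m
    return [[a * a + b * c, a * b + b * d],
            [c * a + d * c, c * b + d * d]]


def close(m1, m2, tol=1e-12):
    """Entry-wise comparison with an absolute tolerance."""
    return all(abs(x - y) <= tol for r1, r2 in zip(m1, m2) for x, y in zip(r1, r2))


def symmetric_family(theta):
    """The b = c case: a point on the circle (a - 1/2)^2 + b^2 = 1/4."""
    c, s = math.cos(theta), math.sin(theta)
    return [[(1 - c) / 2, s / 2], [s / 2, (1 + c) / 2]]


def trace_one_family(a, b):
    """[[a, b], [c, 1 - a]] with c solved from a^2 + b*c = a (needs b != 0)."""
    c = (a - a * a) / b
    return [[a, b], [c, 1 - a]]


for theta in (0.0, 0.7, math.pi / 2, 2.5):
    m = symmetric_family(theta)
    assert close(square_2x2(m), m)

m = trace_one_family(3, -6)  # not symmetric: b = -6, c = 1
assert close(square_2x2(m), m)
```

The second family shows that symmetry is not required: only the trace condition d = 1 − a and the constraint a² + bc = a matter.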

Properties

With the exception of the identity matrix, an idempotent matrix is singular; that is, its number of independent rows (and columns) is less than its number of rows (and columns). To see this, suppose that $MM = M$ and that $M$ has full rank (is non-singular); pre-multiplying both sides by $M^{-1}$ gives $M = M^{-1}MM = M^{-1}M = I$.

When an idempotent matrix is subtracted from the identity matrix, the result is also idempotent. This holds since $[I - M][I - M] = I - M - M + M^2 = I - M - M + M = I - M$.
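These two properties can be illustrated on a concrete matrix. The sketch below uses the illustrative matrix [[3, −6], [1, −2]] (an assumption chosen for this example) and checks that it is singular and that I − M is again idempotent; it also checks that M(I − M) = 0, which follows from M − M² = 0.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def complement(m):
    """Return I - M for a square matrix M."""
    n = len(m)
    return [[(1 if i == j else 0) - m[i][j] for j in range(n)] for i in range(n)]


def det_2x2(m):
    """Determinant of a 2x2 matrix."""
    (a, b), (c, d) = m
    return a * d - b * c


M = [[3, -6], [1, -2]]        # idempotent and not the identity
C = complement(M)             # I - M

assert matmul(M, M) == M      # M is idempotent
assert det_2x2(M) == 0        # hence singular
assert matmul(C, C) == C      # I - M is idempotent as well
assert matmul(M, C) == [[0, 0], [0, 0]]  # M(I - M) = M - M^2 = 0
```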

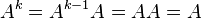

A matrix A is idempotent if and only if for any natural number n,  . The 'if' direction trivially follows by taking n=2. The `only if' part can be shown using proof by induction. Clearly we have the result for n=1, as

. The 'if' direction trivially follows by taking n=2. The `only if' part can be shown using proof by induction. Clearly we have the result for n=1, as  . Suppose that

. Suppose that  . Then,

. Then,  , as required. Hence by the principle of induction, the result follows.

, as required. Hence by the principle of induction, the result follows.
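The power property is easy to observe by repeated multiplication. In this sketch the matrices `A` and `B` and the helper `power` are illustrative assumptions: `A` is idempotent, while `B` is included for contrast.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def power(a, n):
    """Compute A^n for n >= 1 by repeated multiplication."""
    result = a
    for _ in range(n - 1):
        result = matmul(result, a)
    return result


A = [[3, -6], [1, -2]]  # idempotent
B = [[1, 1], [0, 1]]    # not idempotent

assert all(power(A, n) == A for n in range(1, 8))  # every power equals A
assert power(B, 2) == [[1, 2], [0, 1]]             # B^2 differs from B
```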

An idempotent matrix is always diagonalizable and its eigenvalues are either 0 or 1.[3] The trace of an idempotent matrix (the sum of the elements on its main diagonal) equals the rank of the matrix and thus is always an integer: the trace is the sum of the eigenvalues, and for a diagonalizable matrix with eigenvalues 0 and 1 this sum counts the eigenvalues equal to 1, which is the rank. This provides an easy way of computing the rank, or alternatively an easy way of determining the trace of a matrix whose elements are not specifically known. The latter is helpful in econometrics, for example, in establishing the degree of bias in using a sample variance as an estimate of a population variance.
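The equality of trace and rank can be checked with exact arithmetic. The sketch below computes the rank by Gaussian elimination over `fractions.Fraction`; the function names and the sample matrices are assumptions made for illustration.

```python
from fractions import Fraction


def rank(m):
    """Rank via forward elimination with exact rational arithmetic."""
    rows = [[Fraction(x) for x in row] for row in m]
    ncols = len(rows[0]) if rows else 0
    r = 0  # number of pivots found so far
    for col in range(ncols):
        pivot = next((i for i in range(r, len(rows)) if rows[i][col] != 0), None)
        if pivot is None:
            continue  # no pivot in this column
        rows[r], rows[pivot] = rows[pivot], rows[r]
        for i in range(r + 1, len(rows)):
            factor = rows[i][col] / rows[r][col]
            rows[i] = [x - factor * y for x, y in zip(rows[i], rows[r])]
        r += 1
    return r


def trace(m):
    """Sum of the main-diagonal entries."""
    return sum(m[i][i] for i in range(len(m)))


samples = [
    [[1, 0], [0, 1]],
    [[3, -6], [1, -2]],
    [[2, -2, -4], [-1, 3, 4], [1, -2, -3]],
]
for m in samples:
    assert trace(m) == rank(m)
```

Note that the equality is specific to idempotent matrices; for a general matrix the trace need not even be an integer.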

Applications

Idempotent matrices arise frequently in regression analysis and econometrics. For example, in ordinary least squares, the regression problem is to choose a vector  of coefficient estimates so as to minimize the sum of squared residuals (mispredictions) ei: in matrix form,

of coefficient estimates so as to minimize the sum of squared residuals (mispredictions) ei: in matrix form,

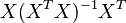

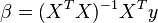

where y is a vector of dependent variable observations, and X is a matrix each of whose columns is a column of observations on one of the independent variables. The resulting estimator is

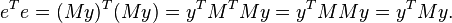

where superscript T indicates a transpose, and the vector of residuals is[2]

$$e = y - X\hat{\beta} = y - X(X^T X)^{-1} X^T y = [I - X(X^T X)^{-1} X^T]y = My.$$

Here both $M$ and $X(X^T X)^{-1} X^T$ (the latter being known as the hat matrix) are idempotent and symmetric matrices, a fact which allows simplification when the sum of squared residuals is computed:

$$e^T e = (My)^T (My) = y^T M^T M y = y^T M M y = y^T M y.$$

The idempotency of $M$ plays a role in other calculations as well, such as in determining the variance of the estimator $\hat{\beta}$.

An idempotent linear operator P is a projection operator on the range space R(P) along its null space N(P). P is an orthogonal projection operator if and only if it is idempotent and symmetric.
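The regression example can be worked through on a tiny data set. This is a minimal sketch, assuming a simple regression with an intercept column and one regressor, the hat matrix H = X(XᵀX)⁻¹Xᵀ and M = I − H; the data values and helper names are invented for illustration, and exact fractions are used so that equality checks are not disturbed by rounding.

```python
from fractions import Fraction


def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def transpose(a):
    return [list(col) for col in zip(*a)]


def inverse_2x2(m):
    """Closed-form inverse of a non-singular 2x2 matrix."""
    (a, b), (c, d) = m
    det = a * d - b * c
    return [[d / det, -b / det], [-c / det, a / det]]


# Hypothetical data: intercept column plus one regressor.
X = [[Fraction(1), Fraction(x)] for x in (0, 1, 2, 3)]
y = [[Fraction(v)] for v in (1, 3, 2, 5)]

Xt = transpose(X)
H = matmul(matmul(X, inverse_2x2(matmul(Xt, X))), Xt)  # hat matrix
n = len(X)
M = [[(1 if i == j else 0) - H[i][j] for j in range(n)] for i in range(n)]

e = matmul(M, y)                                        # residuals e = My
ssr_direct = sum(row[0] ** 2 for row in e)              # e^T e
ssr_quadratic = matmul(matmul(transpose(y), M), y)[0][0]  # y^T M y

assert matmul(H, H) == H and H == transpose(H)  # idempotent and symmetric
assert matmul(M, M) == M and M == transpose(M)
assert ssr_direct == ssr_quadratic
```

Because H is both idempotent and symmetric, it is an orthogonal projection onto the column space of X, and its trace equals the number of columns of X (here 2).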

References

- [1] Chiang, Alpha C. (1984). Fundamental Methods of Mathematical Economics (3rd ed.). New York: McGraw–Hill. p. 80. ISBN 0070108137.

- [2] Greene, William H. (2003). Econometric Analysis (5th ed.). Upper Saddle River, NJ: Prentice–Hall. pp. 808–809. ISBN 0130661899.

- [3] Horn, Roger A.; Johnson, Charles R. (1990). Matrix Analysis. Cambridge University Press. p. 148. ISBN 0521386322.
