Dyadics

In multilinear algebra, a dyadic or dyadic tensor is a second order tensor written in a notation that juxtaposes pairs of vectors, together with rules for manipulating such expressions that are analogous to the rules of matrix algebra. The notation and terminology are relatively obsolete today. Their uses in physics include continuum mechanics and electromagnetism.

Dyadic notation was first established by Josiah Willard Gibbs in 1884.

In this article, upper-case bold variables denote dyadics (including dyads) whereas lower-case bold variables denote vectors. An alternative notation uses respectively double and single over- or underbars.

Definitions and terminology

Dyadic, outer, and tensor products

A dyad is a tensor of order two and rank one, and is the result of the dyadic product of two vectors (complex vectors in general), whereas a dyadic is a general tensor of order two.

There are several equivalent terms and notations for this product:

- the dyadic product of two vectors a and b is denoted by the juxtaposition ab,

- the outer product of two column vectors a and b is denoted and defined as a ⊗ b or abᵀ, where the superscript T means transpose,

- the tensor product of two vectors a and b is denoted a ⊗ b.

In the dyadic context they all have the same definition and meaning, and are used synonymously, although the tensor product is an instance of the more general and abstract use of the term.

Three-dimensional Euclidean space

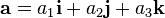

To illustrate the equivalent usage, consider three-dimensional Euclidean space, letting:

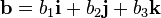

be two vectors where i, j, k (also denoted e1, e2, e3) are the standard basis vectors in this vector space (see also Cartesian coordinates). Then the dyadic product of a and b can be represented as a sum:

or by extension from row and column vectors, a 3×3 matrix (also the result of the outer product or tensor product of a and b):

A dyad is a component of the dyadic (a monomial of the sum or equivalently entry of the matrix) - the juxtaposition of a pair of basis vectors scalar multiplied by a number.

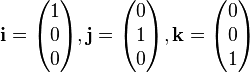

Just as the standard basis (and unit) vectors i, j, k, have the representations:

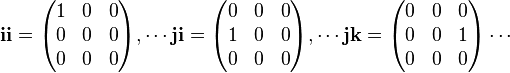

(which can be transposed), the standard basis (and unit) dyads have the representation:

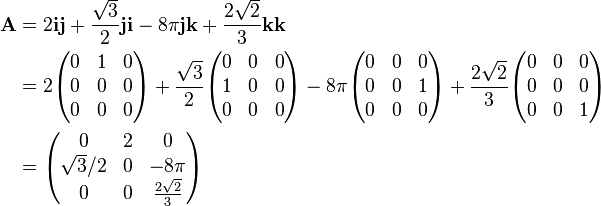

For a simple numerical example in the standard basis:
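A minimal sketch of such a computation follows. The vectors a = (1, 2, 3) and b = (4, 5, 6) and the helper name `dyadic_product` are illustrative choices, not taken from the original article; the (i, j) entry of the result is simply aᵢbⱼ.

```python
def dyadic_product(a, b):
    """Return the matrix of the dyad ab: entry (i, j) is a[i] * b[j]."""
    return [[ai * bj for bj in b] for ai in a]

a = [1, 2, 3]  # components of a in the standard basis i, j, k (assumed values)
b = [4, 5, 6]  # components of b in the standard basis (assumed values)

ab = dyadic_product(a, b)
for row in ab:
    print(row)
# [4, 5, 6]
# [8, 10, 12]
# [12, 15, 18]
```

Every row is a multiple of b, which is what it means for a dyad to have rank one.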

N-dimensional Euclidean space

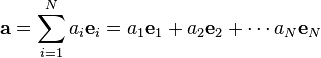

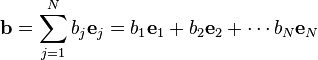

If the Euclidean space is N-dimensional, and

where ei and ej are the standard basis vectors in N-dimensions (the index i on ei selects a specific vector, not a component of the vector as in ai), then in algebraic form their dyadic product is:

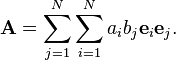

This is known as the nonion form of the dyadic. Their outer/tensor product in matrix form is:

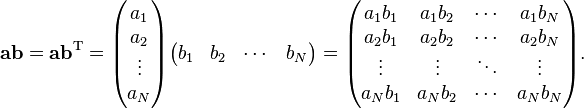

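A dyadic polynomial A, otherwise known as a dyadic, is formed from multiple vectors aᵢ and bᵢ:

$$\mathbf{A} = \sum_i \mathbf{a}_i \mathbf{b}_i = \mathbf{a}_1\mathbf{b}_1 + \mathbf{a}_2\mathbf{b}_2 + \mathbf{a}_3\mathbf{b}_3 + \ldots$$

A dyadic which cannot be reduced to a sum of fewer than N dyads is said to be complete. In this case, the forming vectors are non-coplanar; see Chen (1983).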

Classification

The following table classifies dyadics:

| | Determinant | Adjugate | Matrix and its rank |
|---|---|---|---|
| Zero | = 0 | = 0 | = 0; rank 0: all zeroes |
| Linear | = 0 | = 0 | ≠ 0; rank 1: at least one non-zero element and all 2 × 2 subdeterminants zero (single dyadic) |
| Planar | = 0 | ≠ 0 (single dyadic) | ≠ 0; rank 2: at least one non-zero 2 × 2 subdeterminant |
| Complete | ≠ 0 | ≠ 0 | ≠ 0; rank 3: non-zero determinant |
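The classification can be sketched in code by testing the determinant, then the 2 × 2 subdeterminants, then the entries themselves. The function names and the tolerance `tol` below are assumptions made for illustration; the dyadic is assumed to be given as a 3 × 3 matrix in the standard basis.

```python
def det3(m):
    """Determinant of a 3x3 matrix by cofactor expansion along the first row."""
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))

def minors2(m):
    """All nine 2x2 subdeterminants (the adjugate entries, up to sign and order)."""
    out = []
    for r in range(3):
        for c in range(3):
            rows = [i for i in range(3) if i != r]
            cols = [j for j in range(3) if j != c]
            out.append(m[rows[0]][cols[0]] * m[rows[1]][cols[1]]
                       - m[rows[0]][cols[1]] * m[rows[1]][cols[0]])
    return out

def classify(m, tol=1e-12):
    """Return 'zero', 'linear', 'planar' or 'complete' for a 3x3 dyadic matrix."""
    if abs(det3(m)) > tol:
        return "complete"   # rank 3
    if any(abs(x) > tol for x in minors2(m)):
        return "planar"     # rank 2
    if any(abs(x) > tol for row in m for x in row):
        return "linear"     # rank 1: a single dyad
    return "zero"           # rank 0

print(classify([[1, 0, 0], [0, 1, 0], [0, 0, 1]]))         # complete
print(classify([[1, 0, 0], [0, 1, 0], [0, 0, 0]]))         # planar
print(classify([[4, 5, 6], [8, 10, 12], [12, 15, 18]]))    # linear
print(classify([[0, 0, 0], [0, 0, 0], [0, 0, 0]]))         # zero
```

With floating-point input the tolerance matters; exact integer or rational entries avoid that question.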

Identities

The following identities are a direct consequence of the definition of the tensor product:[1]

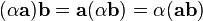

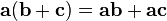

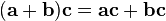

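- Compatible with scalar multiplication:

$$(\alpha\mathbf{a})\mathbf{b} = \mathbf{a}(\alpha\mathbf{b}) = \alpha(\mathbf{ab})$$

for any scalar α.

- Distributive over vector addition:

$$\mathbf{a}(\mathbf{b} + \mathbf{c}) = \mathbf{ab} + \mathbf{ac}, \qquad (\mathbf{a} + \mathbf{b})\mathbf{c} = \mathbf{ac} + \mathbf{bc}$$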
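As a quick numerical spot check of compatibility with scalar multiplication, (αa)b = a(αb) = α(ab), and of distributivity over vector addition, the sketch below uses integer vectors so that the comparisons are exact. The helper names and the sample values are assumptions chosen for illustration.

```python
def dyad(a, b):
    """Matrix of the dyad ab."""
    return [[ai * bj for bj in b] for ai in a]

def madd(m, n):
    """Entrywise sum of two matrices."""
    return [[x + y for x, y in zip(r, s)] for r, s in zip(m, n)]

def mscale(alpha, m):
    """Scalar multiple of a matrix."""
    return [[alpha * x for x in row] for row in m]

def vadd(u, v):
    return [x + y for x, y in zip(u, v)]

def vscale(alpha, v):
    return [alpha * x for x in v]

a, b, c, alpha = [1, 2, 3], [4, 5, 6], [7, 8, 10], 3

# (alpha a) b = a (alpha b) = alpha (ab)
scalar_ok = (dyad(vscale(alpha, a), b)
             == dyad(a, vscale(alpha, b))
             == mscale(alpha, dyad(a, b)))

# a (b + c) = ab + ac   and   (a + b) c = ac + bc
right_ok = dyad(a, vadd(b, c)) == madd(dyad(a, b), dyad(a, c))
left_ok = dyad(vadd(a, b), c) == madd(dyad(a, c), dyad(b, c))

print(scalar_ok, right_ok, left_ok)  # True True True
```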

Dyadic algebra

Product of dyadic and vector

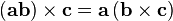

There are four operations defined on a vector and dyadic, constructed from the products defined on vectors.

Left Right Dot product

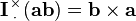

Cross product
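The four vector-dyad operations can be sketched for a single dyad ab and a vector c as follows. The dot products return vectors and the cross products return dyads; the function names are hypothetical, and the last two lines compare the dot products with ordinary matrix-vector multiplication.

```python
def dot(u, v):
    return sum(x * y for x, y in zip(u, v))

def cross(u, v):
    return [u[1] * v[2] - u[2] * v[1],
            u[2] * v[0] - u[0] * v[2],
            u[0] * v[1] - u[1] * v[0]]

def dyad(a, b):
    return [[ai * bj for bj in b] for ai in a]

def left_dot(c, a, b):
    """c . (ab) = (c . a) b, a vector."""
    return [dot(c, a) * x for x in b]

def right_dot(a, b, c):
    """(ab) . c = a (b . c), a vector."""
    return [x * dot(b, c) for x in a]

def left_cross(c, a, b):
    """c x (ab) = (c x a) b, a dyad."""
    return dyad(cross(c, a), b)

def right_cross(a, b, c):
    """(ab) x c = a (b x c), a dyad."""
    return dyad(a, cross(b, c))

a, b, c = [1, 2, 3], [4, 5, 6], [7, 8, 9]
M = dyad(a, b)

# In matrix terms, the right dot product is M c and the left one is c^T M.
print(right_dot(a, b, c) == [dot(row, c) for row in M])          # True
print(left_dot(c, a, b) == [dot(c, [M[i][j] for i in range(3)])
                            for j in range(3)])                   # True
```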

Product of dyadic and dyadic

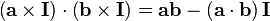

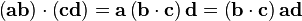

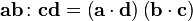

There are five operations between one dyadic and another. Let a, b, c, d be vectors. Then:

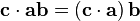

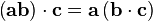

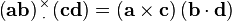

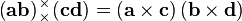

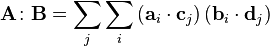

Dot Cross Dot Dot product

Double dot product

or

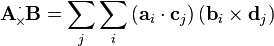

Dot–cross product

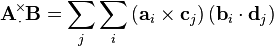

Cross Cross–dot product

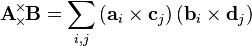

Double cross product

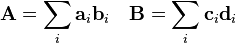

Letting

be two general dyadics, we have:

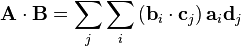

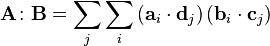

Dot Cross Dot Dot product

Double dot product

or

Dot–cross product

Cross Cross–dot product

Double cross product
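The products of two general dyadics can be sketched by storing each dyadic as a list of vector pairs (aᵢ, bᵢ) and summing over all pairings, which is just bilinearity applied to the single-dyad definitions. This representation and the function names are assumptions made for illustration. The final check compares the dot product with ordinary matrix multiplication.

```python
def dot(u, v):
    return sum(x * y for x, y in zip(u, v))

def cross(u, v):
    return [u[1] * v[2] - u[2] * v[1],
            u[2] * v[0] - u[0] * v[2],
            u[0] * v[1] - u[1] * v[0]]

def dyad(a, b):
    return [[ai * bj for bj in b] for ai in a]

def madd(m, n):
    return [[x + y for x, y in zip(r, s)] for r, s in zip(m, n)]

def mscale(s, m):
    return [[s * x for x in row] for row in m]

ZERO_M = [[0, 0, 0] for _ in range(3)]

def to_matrix(A):
    """Sum the dyads a_i b_i of a dyadic into one 3x3 matrix."""
    m = ZERO_M
    for a, b in A:
        m = madd(m, dyad(a, b))
    return m

def dot_product(A, B):
    """A . B = sum_ij (b_i . c_j) a_i d_j, a dyadic."""
    m = ZERO_M
    for a, b in A:
        for c, d in B:
            m = madd(m, mscale(dot(b, c), dyad(a, d)))
    return m

def dot_cross(A, B):
    """sum_ij (a_i . c_j) (b_i x d_j), a vector."""
    v = [0, 0, 0]
    for a, b in A:
        for c, d in B:
            v = [x + dot(a, c) * y for x, y in zip(v, cross(b, d))]
    return v

def cross_dot(A, B):
    """sum_ij (a_i x c_j) (b_i . d_j), a vector."""
    v = [0, 0, 0]
    for a, b in A:
        for c, d in B:
            v = [x + y * dot(b, d) for x, y in zip(v, cross(a, c))]
    return v

def double_cross(A, B):
    """sum_ij (a_i x c_j)(b_i x d_j), a dyadic."""
    m = ZERO_M
    for a, b in A:
        for c, d in B:
            m = madd(m, dyad(cross(a, c), cross(b, d)))
    return m

def matmul(m, n):
    return [[sum(m[i][k] * n[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]

A = [([1, 0, 2], [0, 1, 1]), ([2, 1, 0], [1, 0, 3])]
B = [([1, 1, 0], [0, 2, 1]), ([0, 1, 1], [1, 0, 0])]
print(dot_product(A, B) == matmul(to_matrix(A), to_matrix(B)))  # True
```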

Double-dot product

There are two ways to define the double-dot product, so one must be careful when deciding which convention to use. As there are no analogous matrix operations for the remaining dyadic products, no ambiguities in their definitions appear.

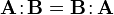

The double-dot product is commutative due to commutativity of the normal dot-product:

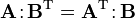
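In matrix components, the convention (ab):(cd) = (a·c)(b·d) corresponds to summing A_ij B_ij, while the convention (a·d)(b·c) corresponds to summing A_ij B_ji. The sketch below, with hypothetical function names and sample values, shows that the two conventions generally give different numbers but that each is commutative.

```python
def dyad(a, b):
    return [[ai * bj for bj in b] for ai in a]

def double_dot(A, B):
    """Convention (ab):(cd) = (a.c)(b.d), i.e. sum over i, j of A_ij * B_ij."""
    return sum(A[i][j] * B[i][j] for i in range(3) for j in range(3))

def double_dot_transposed(A, B):
    """Convention (ab):(cd) = (a.d)(b.c), i.e. sum over i, j of A_ij * B_ji."""
    return sum(A[i][j] * B[j][i] for i in range(3) for j in range(3))

a, b = [1, 2, 3], [4, 5, 6]
c, d = [1, 0, 1], [0, 1, 1]
AB, CD = dyad(a, b), dyad(c, d)

print(double_dot(AB, CD))             # (a.c)(b.d) = 4 * 11 = 44
print(double_dot_transposed(AB, CD))  # (a.d)(b.c) = 5 * 10 = 50

# Both conventions are commutative.
print(double_dot(AB, CD) == double_dot(CD, AB))                        # True
print(double_dot_transposed(AB, CD) == double_dot_transposed(CD, AB))  # True
```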

There is a special double-dot product with a transpose:

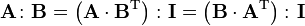

Another identity is:

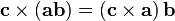

Double-cross product

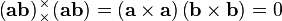

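We can see that, for any dyad formed from two vectors a and b, its double-cross product with itself is zero, because each vector is crossed with itself:

$$(\mathbf{ab})\,{}^{\times}_{\times}\,(\mathbf{ab}) = (\mathbf{a}\times\mathbf{a})(\mathbf{b}\times\mathbf{b}) = 0$$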

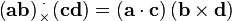

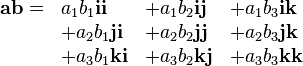

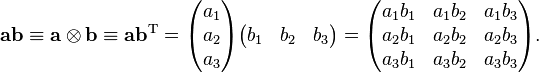

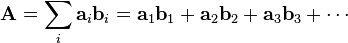

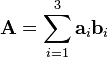

However, by definition, a dyadic double-cross product on itself will generally be non-zero. For example, a dyadic A composed of six different vectors

has a non-zero self-double-cross product of

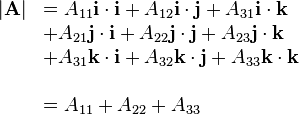

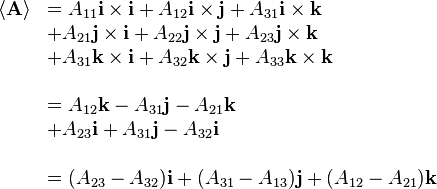
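The self-double-cross product can be checked numerically by summing (aᵢ × aⱼ)(bᵢ × bⱼ) over all pairs and comparing with twice the three cyclic terms; the diagonal terms vanish and each off-diagonal pair appears twice. The six integer vectors below are arbitrary illustrative values, and the function names are hypothetical.

```python
def cross(u, v):
    return [u[1] * v[2] - u[2] * v[1],
            u[2] * v[0] - u[0] * v[2],
            u[0] * v[1] - u[1] * v[0]]

def dyad(a, b):
    return [[ai * bj for bj in b] for ai in a]

def madd(m, n):
    return [[x + y for x, y in zip(r, s)] for r, s in zip(m, n)]

ZERO_M = [[0, 0, 0] for _ in range(3)]

def self_double_cross(pairs):
    """Sum over all i, j of (a_i x a_j)(b_i x b_j)."""
    total = ZERO_M
    for a_i, b_i in pairs:
        for a_j, b_j in pairs:
            total = madd(total, dyad(cross(a_i, a_j), cross(b_i, b_j)))
    return total

def cyclic_form(pairs):
    """Twice the sum of the three cyclic terms, for a dyadic of three dyads."""
    (a1, b1), (a2, b2), (a3, b3) = pairs
    s = dyad(cross(a1, a2), cross(b1, b2))
    s = madd(s, dyad(cross(a2, a3), cross(b2, b3)))
    s = madd(s, dyad(cross(a3, a1), cross(b3, b1)))
    return [[2 * x for x in row] for row in s]

A = [([1, 2, 0], [0, 1, 1]),
     ([0, 1, 3], [2, 0, 1]),
     ([1, 0, 1], [1, 1, 0])]

print(self_double_cross(A) == cyclic_form(A))    # True
print(self_double_cross(A) != ZERO_M)            # True: non-zero in general
print(self_double_cross([A[0]]) == ZERO_M)       # True: a single dyad gives zero
```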

Tensor contraction

The spur or expansion factor arises from the formal expansion of the dyadic in a coordinate basis by replacing each juxtaposition by a dot product of vectors:

in index notation this is the contraction of indices on the dyadic:

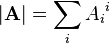

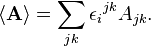

In three dimensions only, the rotation factor arises by replacing every juxtaposition by a cross product

In index notation this is the contraction of A with the Levi-Civita tensor
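Both contractions are easy to compute from the matrix of a dyadic. The sketch below uses hypothetical function names and checks the results on a single dyad ab, for which the spur reduces to a·b and the rotation factor to a × b.

```python
def dyad(a, b):
    return [[ai * bj for bj in b] for ai in a]

def spur(A):
    """Replace each juxtaposition by a dot product: A11 + A22 + A33."""
    return A[0][0] + A[1][1] + A[2][2]

def rotation_factor(A):
    """Replace each juxtaposition by a cross product (three dimensions only)."""
    return [A[1][2] - A[2][1],
            A[2][0] - A[0][2],
            A[0][1] - A[1][0]]

a, b = [1, 2, 3], [4, 5, 6]
ab = dyad(a, b)

print(spur(ab))             # 32, equal to a . b
print(rotation_factor(ab))  # [-3, 6, -3], equal to a x b
```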

Special dyadics

Unit dyadic

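There exists a unit dyadic, denoted by I, such that, for any vector a,

$$\mathbf{I}\cdot\mathbf{a} = \mathbf{a}\cdot\mathbf{I} = \mathbf{a}$$

Given a basis of 3 vectors a, b and c, with reciprocal basis $\hat{\mathbf{a}}, \hat{\mathbf{b}}, \hat{\mathbf{c}}$, the unit dyadic is expressed by

$$\mathbf{I} = \mathbf{a}\hat{\mathbf{a}} + \mathbf{b}\hat{\mathbf{b}} + \mathbf{c}\hat{\mathbf{c}}$$

In the standard basis,

$$\mathbf{I} = \mathbf{ii} + \mathbf{jj} + \mathbf{kk}$$

The corresponding matrix is

$$\mathbf{I} = \begin{pmatrix} 1 & 0 & 0 \\ 0 & 1 & 0 \\ 0 & 0 & 1 \end{pmatrix}$$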

This can be put on more careful foundations (explaining what the logical content of "juxtaposing notation" could possibly mean) using the language of tensor products. If V is a finite-dimensional vector space, a dyadic tensor on V is an elementary tensor in the tensor product of V with its dual space.

The tensor product of V and its dual space is isomorphic to the space of linear maps from V to V: a dyadic tensor vf is simply the linear map sending any w in V to f(w)v. When V is Euclidean n-space, we can use the inner product to identify the dual space with V itself, making a dyadic tensor an elementary tensor product of two vectors in Euclidean space.

In this sense, the unit dyadic ij is the function from 3-space to itself sending a₁i + a₂j + a₃k to a₂i, and jj sends this sum to a₂j. This makes precise the sense in which ii + jj + kk is the identity: it sends a₁i + a₂j + a₃k to itself because its effect is to sum each unit vector in the standard basis scaled by the coefficient of the vector in that basis.
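The linear-map reading of a dyad, and the construction of the unit dyadic from a basis and its reciprocal basis, can be sketched as follows. The reciprocal basis is computed with the standard cross-product formula (b × c divided by the scalar triple product, and cyclic permutations), which is an assumption added here rather than something stated in the text; the basis vectors and function names are illustrative. Exact rational arithmetic is used so that the comparison with the identity matrix is exact.

```python
from fractions import Fraction

def dot(u, v):
    return sum(x * y for x, y in zip(u, v))

def cross(u, v):
    return [u[1] * v[2] - u[2] * v[1],
            u[2] * v[0] - u[0] * v[2],
            u[0] * v[1] - u[1] * v[0]]

def dyad(a, b):
    return [[ai * bj for bj in b] for ai in a]

def madd(m, n):
    return [[x + y for x, y in zip(r, s)] for r, s in zip(m, n)]

def apply(m, w):
    """Apply a dyadic, given as a matrix, to the vector w."""
    return [dot(row, w) for row in m]

def reciprocal_basis(a, b, c):
    """Reciprocal vectors of an integer basis; requires a . (b x c) != 0."""
    vol = dot(a, cross(b, c))
    return ([Fraction(x, vol) for x in cross(b, c)],
            [Fraction(x, vol) for x in cross(c, a)],
            [Fraction(x, vol) for x in cross(a, b)])

def unit_dyadic(a, b, c):
    """Sum of each basis vector juxtaposed with its reciprocal vector."""
    ra, rb, rc = reciprocal_basis(a, b, c)
    return madd(madd(dyad(a, ra), dyad(b, rb)), dyad(c, rc))

i, j = [1, 0, 0], [0, 1, 0]
w = [5, 7, 9]
print(apply(dyad(i, j), w))  # [7, 0, 0]: ij sends w to (second coefficient) * i

I = unit_dyadic([1, 1, 0], [0, 1, 1], [1, 0, 1])
print(I == [[1, 0, 0], [0, 1, 0], [0, 0, 1]])  # True
```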

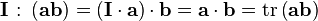

- Properties of unit dyadics

where "tr" denotes the trace.

Rotation dyadic

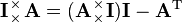

For any vector a in two dimensions, the left-cross product with the identity dyad I:

is a 90 degree anticlockwise rotation dyadic around a. Alternatively the dyadic tensor

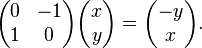

- J = ji − ij =

is a 90° anticlockwise rotation operator in 2d. It can be left-dotted with a vector to produce the rotation:

or in matrix notation

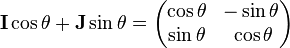

A general 2d rotation dyadic for θ angle anti-clockwise is

where I and J are as above.
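A short sketch of the 2d rotation dyadic, built as I cos θ + J sin θ from the matrices of I and J = ji − ij, is given below. The function names are hypothetical, and floating-point results are only approximate.

```python
import math

I = [[1, 0], [0, 1]]
J = [[0, -1], [1, 0]]  # matrix of ji - ij

def rotation_dyadic(theta):
    """Matrix of I cos(theta) + J sin(theta), an anticlockwise rotation."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c * I[r][k] + s * J[r][k] for k in range(2)] for r in range(2)]

def apply(m, v):
    """Left-dot the dyadic (as a matrix) with the vector v."""
    return [sum(m[r][k] * v[k] for k in range(2)) for r in range(2)]

print(apply(J, [3, 4]))  # [-4, 3]: (x, y) goes to (-y, x)

x, y = apply(rotation_dyadic(math.pi / 2), [1.0, 0.0])
print(abs(x) < 1e-12, abs(y - 1.0) < 1e-12)  # True True: i is rotated onto j
```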

Related terms

Some authors generalize from the term dyadic to related terms triadic, tetradic and polyadic.[2]

See also

- Kronecker product

- Polyadic algebra

- Unit vector

- Multivector

- Differential form

- Quaternions

- Field (mathematics)

References

- P. Mitiguy (2009). "Vectors and dyadics". Stanford, USA. Chapter 2

- Spiegel, M.R.; Lipschutz, S.; Spellman, D. (2009). Vector analysis, Schaum's outlines. McGraw Hill. ISBN 978-0-07-161545-7.

- A.J.M. Spencer (1992). Continuum Mechanics. Dover Publications. ISBN 0-486-43594-6.

- Morse, Philip M.; Feshbach, Herman (1953), "§1.6: Dyadics and other vector operators", Methods of theoretical physics, Volume 1, New York: McGraw-Hill, pp. 54–92, ISBN 978-0-07-043316-8, MR 0059774.

- Ismo V. Lindell (1996). Methods for Electromagnetic Field Analysis. Wiley-Blackwell. ISBN 978-0-7803-6039-6.

- Hollis C. Chen (1983). Theory of Electromagnetic Wave - A Coordinate-free approach. McGraw Hill. ISBN 978-0-07-010688-8.

External links

- Advanced Field Theory, I.V. Lindell

- Vector and Dyadic Analysis

- Introductory Tensor Analysis

- Nasa.gov, Foundations of Tensor Analysis for students of Physics and Engineering with an Introduction to the Theory of Relativity, J.C. Kolecki

- Nasa.gov, An introduction to Tensors for students of Physics and Engineering, J.C. Kolecki
