Coupon collector's problem

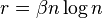

In probability theory, the coupon collector's problem describes "collect all coupons and win" contests. It asks the following question: suppose that there is an urn of n different coupons, from which coupons are drawn with replacement, each equally likely. What is the probability that more than t sample trials are needed to collect all n coupons? An alternative statement is: given n coupons, how many coupons do you expect to need to draw with replacement before having drawn each coupon at least once? The mathematical analysis of the problem reveals that the expected number of trials needed grows as $\Theta(n \log n)$.[1] For example, when n = 50 it takes about 225[2] trials on average to collect all 50 coupons.

Understanding the problem

The key to solving the problem is understanding that it takes very little time to collect the first few coupons, but a long time to collect the last few. In fact, for 50 coupons, it takes on average 50 trials to collect the very last coupon after the other 49 coupons have been collected. This is why the expected time to collect all coupons is much longer than 50. The idea is to split the total time into 50 intervals, one for each newly collected coupon, whose expected lengths can each be calculated.
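The slow final stretch can be seen empirically with a short simulation. The following is a minimal sketch, not part of the original analysis; the function names `trials_to_collect_all` and `average_trials`, the number of runs, and the seed are illustrative assumptions.

```python
import random


def trials_to_collect_all(n, rng):
    """Draw uniformly with replacement until all n coupon types have been seen.

    Returns the number of draws that were needed.
    """
    seen = set()
    draws = 0
    while len(seen) < n:
        seen.add(rng.randrange(n))  # each coupon type is equally likely
        draws += 1
    return draws


def average_trials(n, runs=20000, seed=0):
    """Estimate the expected number of draws by averaging over many runs."""
    rng = random.Random(seed)  # fixed seed so the estimate is reproducible
    return sum(trials_to_collect_all(n, rng) for _ in range(runs)) / runs


if __name__ == "__main__":
    # For n = 50 the average should land near the 225 trials quoted above.
    print(average_trials(50))
```

Because the estimate is a sample mean, it fluctuates from seed to seed; more runs narrow the spread.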

Answer

The following table gives the expected number of tries to get sets of 1 to 100 coupons. The exact expectation is E(T) = n $H_n$; the totals listed are rounded up to the next whole number of tries.

| Number of coupons, n | Expected tries per coupon, $H_n$ | Expected total tries, $\lceil n H_n \rceil$ | Number of coupons, n | Expected tries per coupon, $H_n$ | Expected total tries, $\lceil n H_n \rceil$ |
|---|---|---|---|---|---|
| 1 | 1 | 1 | 51 | 4.51881 | 231 |
| 2 | 1.5 | 3 | 52 | 4.53804 | 236 |
| 3 | 1.83333 | 6 | 53 | 4.55691 | 242 |
| 4 | 2.08333 | 9 | 54 | 4.57543 | 248 |
| 5 | 2.28333 | 12 | 55 | 4.59361 | 253 |
| 6 | 2.45 | 15 | 56 | 4.61147 | 259 |
| 7 | 2.59286 | 19 | 57 | 4.62901 | 264 |
| 8 | 2.71786 | 22 | 58 | 4.64625 | 270 |
| 9 | 2.82897 | 26 | 59 | 4.66320 | 276 |
| 10 | 2.92897 | 30 | 60 | 4.67987 | 281 |
| 11 | 3.01988 | 34 | 61 | 4.69626 | 287 |
| 12 | 3.10321 | 38 | 62 | 4.71239 | 293 |
| 13 | 3.18013 | 42 | 63 | 4.72827 | 298 |
| 14 | 3.25156 | 46 | 64 | 4.74389 | 304 |
| 15 | 3.31823 | 50 | 65 | 4.75928 | 310 |
| 16 | 3.38073 | 55 | 66 | 4.77443 | 316 |
| 17 | 3.43955 | 59 | 67 | 4.78935 | 321 |
| 18 | 3.49511 | 63 | 68 | 4.80406 | 327 |
| 19 | 3.54774 | 68 | 69 | 4.81855 | 333 |
| 20 | 3.59774 | 72 | 70 | 4.83284 | 339 |
| 21 | 3.64536 | 77 | 71 | 4.84692 | 345 |
| 22 | 3.69081 | 82 | 72 | 4.86081 | 350 |
| 23 | 3.73429 | 86 | 73 | 4.87451 | 356 |
| 24 | 3.77596 | 91 | 74 | 4.88802 | 362 |
| 25 | 3.81596 | 96 | 75 | 4.90136 | 368 |
| 26 | 3.85442 | 101 | 76 | 4.91451 | 374 |
| 27 | 3.89146 | 106 | 77 | 4.92750 | 380 |
| 28 | 3.92717 | 110 | 78 | 4.94032 | 386 |
| 29 | 3.96165 | 115 | 79 | 4.95298 | 392 |
| 30 | 3.99499 | 120 | 80 | 4.96548 | 398 |
| 31 | 4.02725 | 125 | 81 | 4.97782 | 404 |
| 32 | 4.05850 | 130 | 82 | 4.99002 | 410 |
| 33 | 4.08880 | 135 | 83 | 5.00207 | 416 |
| 34 | 4.11821 | 141 | 84 | 5.01397 | 422 |
| 35 | 4.14678 | 146 | 85 | 5.02574 | 428 |
| 36 | 4.17456 | 151 | 86 | 5.03737 | 434 |
| 37 | 4.20159 | 156 | 87 | 5.04886 | 440 |
| 38 | 4.22790 | 161 | 88 | 5.06022 | 446 |
| 39 | 4.25354 | 166 | 89 | 5.07146 | 452 |
| 40 | 4.27854 | 172 | 90 | 5.08257 | 458 |
| 41 | 4.30293 | 177 | 91 | 5.09356 | 464 |
| 42 | 4.32674 | 182 | 92 | 5.10443 | 470 |
| 43 | 4.35000 | 188 | 93 | 5.11518 | 476 |
| 44 | 4.37273 | 193 | 94 | 5.12582 | 482 |
| 45 | 4.39495 | 198 | 95 | 5.13635 | 488 |
| 46 | 4.41669 | 204 | 96 | 5.14676 | 495 |
| 47 | 4.43796 | 209 | 97 | 5.15707 | 501 |
| 48 | 4.45880 | 215 | 98 | 5.16728 | 507 |
| 49 | 4.47921 | 220 | 99 | 5.17738 | 513 |
| 50 | 4.49921 | 225 | 100 | 5.18738 | 519 |
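The table entries can be recomputed with exact rational arithmetic. The sketch below assumes only the standard library; the helper names `harmonic` and `table_row` are illustrative and do not come from the article.

```python
from fractions import Fraction
from math import ceil


def harmonic(n):
    """Return the n-th harmonic number 1 + 1/2 + ... + 1/n as an exact fraction."""
    return sum(Fraction(1, k) for k in range(1, n + 1))


def table_row(n):
    """Return (n, H_n rounded to 5 decimals, n * H_n rounded up)."""
    h = harmonic(n)
    # ceil() on a Fraction is exact, so no floating-point rounding affects the total.
    return n, round(float(h), 5), ceil(n * h)


if __name__ == "__main__":
    for n in (1, 10, 50, 100):
        print(table_row(n))
```

Using `Fraction` keeps the rounding-up step exact, which matters when n times the harmonic number falls very close to an integer.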

Solution

Calculating the expectation

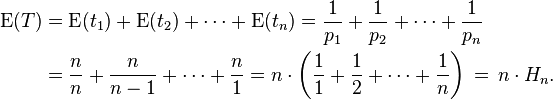

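Let T be the time to collect all n coupons, and let $t_i$ be the time to collect the i-th coupon after i − 1 coupons have been collected. Think of T and $t_i$ as random variables. Observe that the probability of collecting a new coupon given i − 1 coupons is $p_i = (n - (i - 1))/n$. Therefore, $t_i$ has a geometric distribution with expectation $1/p_i$. By the linearity of expectations we have:

$$
\begin{aligned}
\operatorname{E}(T) &= \operatorname{E}(t_1) + \operatorname{E}(t_2) + \cdots + \operatorname{E}(t_n) = \frac{1}{p_1} + \frac{1}{p_2} + \cdots + \frac{1}{p_n} \\
&= \frac{n}{n} + \frac{n}{n-1} + \cdots + \frac{n}{1} = n \cdot \left(\frac{1}{1} + \frac{1}{2} + \cdots + \frac{1}{n}\right) = n \cdot H_n.
\end{aligned}
$$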

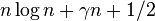

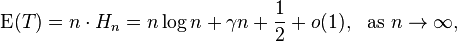
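As a small worked instance of the linearity argument, take n = 4 (an illustrative choice). The probabilities of drawing a new coupon are $p_1 = 4/4$, $p_2 = 3/4$, $p_3 = 2/4$ and $p_4 = 1/4$, and summing the expected waiting times $1/p_i$ gives:

$$
\operatorname{E}(T) = \frac{4}{4} + \frac{4}{3} + \frac{4}{2} + \frac{4}{1} = \frac{25}{3} \approx 8.33,
$$

which equals 4 times the harmonic number 25/12 ≈ 2.08333 and rounds up to the 9 tries listed for n = 4 in the table.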

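Here $H_n$ is the n-th harmonic number. Using the asymptotics of the harmonic numbers, we obtain:

$$
\operatorname{E}(T) = n \cdot H_n = n \log n + \gamma n + \frac{1}{2} + o(1), \quad \text{as } n \to \infty,
$$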

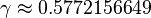

where $\gamma \approx 0.5772156649$ is the Euler–Mascheroni constant.
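A quick numerical check shows how close the asymptotic expression n log n + γn + 1/2 is to the exact value n times the n-th harmonic number. This is a sketch for illustration; the function names and the hard-coded constant `EULER_GAMMA` are assumptions introduced here.

```python
from math import log

# Euler–Mascheroni constant, hard-coded because the math module does not provide it.
EULER_GAMMA = 0.5772156649015329


def exact_expectation(n):
    """Exact expected number of trials: n * (1 + 1/2 + ... + 1/n)."""
    return n * sum(1.0 / k for k in range(1, n + 1))


def asymptotic_expectation(n):
    """Asymptotic approximation n log n + gamma * n + 1/2 (natural logarithm)."""
    return n * log(n) + EULER_GAMMA * n + 0.5


if __name__ == "__main__":
    for n in (10, 50, 1000):
        print(n, exact_expectation(n), asymptotic_expectation(n))
```

For n = 50 the two values agree to within about 0.002, consistent with the figures given in the notes.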

Now one can use the Markov inequality to bound the desired probability:

$$
P\left[T \geq c \, n H_n\right] \leq \frac{1}{c}.
$$

Calculating the variance

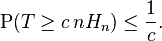

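Using the independence of the random variables $t_i$, we obtain:

$$
\begin{aligned}
\operatorname{Var}(T) &= \operatorname{Var}(t_1) + \operatorname{Var}(t_2) + \cdots + \operatorname{Var}(t_n) = \frac{1 - p_1}{p_1^2} + \frac{1 - p_2}{p_2^2} + \cdots + \frac{1 - p_n}{p_n^2} \\
&< \frac{n^2}{n^2} + \frac{n^2}{(n-1)^2} + \cdots + \frac{n^2}{1^2} < \frac{\pi^2}{6} n^2.
\end{aligned}
$$

Here $\pi^2/6 = \zeta(2)$ is a value of the Riemann zeta function (see Basel problem).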

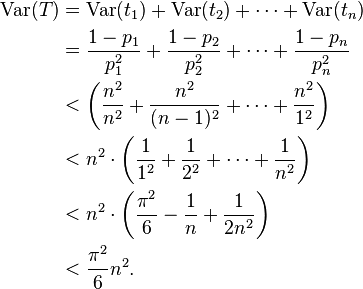

Now one can use the Chebyshev inequality to bound the desired probability:

$$
P\left[\,|T - n H_n| \geq c \, n\,\right] \leq \frac{\pi^2}{6 c^2}.
$$
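The variance argument can be checked numerically. The sketch below sums the variances (1 − p)/p² of the geometric waiting times, with p = (n − (i − 1))/n as defined earlier, and compares the result with the bound π²n²/6; it also evaluates the Chebyshev bound π²/(6c²) that follows from dividing that variance bound by (cn)². All function names are illustrative assumptions.

```python
from math import pi


def exact_variance(n):
    """Sum of Var(t_i) = (1 - p_i) / p_i**2 over i = 1..n, with p_i = (n - i + 1) / n."""
    total = 0.0
    for i in range(1, n + 1):
        p = (n - i + 1) / n
        total += (1 - p) / p ** 2
    return total


def variance_bound(n):
    """Upper bound (pi^2 / 6) * n^2 on the variance."""
    return pi ** 2 / 6 * n ** 2


def chebyshev_bound(c):
    """Bound on P[|T - n H_n| >= c n] obtained as variance_bound(n) / (c n)^2."""
    return pi ** 2 / (6 * c ** 2)


if __name__ == "__main__":
    print(exact_variance(50), variance_bound(50), chebyshev_bound(2))
```

Note that the Chebyshev bound is only informative when it is below 1, that is, for c larger than roughly 1.29.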

Tail estimates

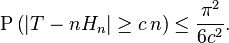

A different upper bound can be derived from the following observation. Let $Z_i^r$ denote the event that the i-th coupon was not picked in the first r trials. Then:

$$
P\left[Z_i^r\right] = \left(1 - \frac{1}{n}\right)^r \le e^{-r/n}.
$$

Thus, for $r = \beta n \log n$, we have $P\left[Z_i^r\right] \le e^{(-\beta n \log n)/n} = n^{-\beta}$. Applying the union bound over the n coupons gives:

$$
P\left[T > \beta n \log n\right] = P\left[\bigcup_i Z_i^{\beta n \log n}\right] \le n \cdot P\left[Z_1^{\beta n \log n}\right] \le n^{-\beta + 1}.
$$
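To see how tight the union bound is, it can be compared with the exact tail probability. The sketch below uses the standard inclusion-exclusion formula for the probability that at least one coupon is still missing after t draws, which is not derived in the text above; the function names are illustrative. The number of trials is rounded up to an integer so that the bound n^(1 − β) still applies.

```python
from fractions import Fraction
from math import ceil, comb, log


def tail_probability(n, t):
    """Exact P[T > t]: probability that some coupon is missing after t draws.

    Inclusion-exclusion over the k coupons assumed missing:
    sum over k of (-1)^(k+1) * C(n, k) * (1 - k/n)^t.
    """
    total = Fraction(0)
    for k in range(1, n + 1):
        term = comb(n, k) * Fraction(n - k, n) ** t
        total += term if k % 2 == 1 else -term
    return float(total)


def union_bound(n, beta):
    """Upper bound n^(1 - beta) on P[T > beta * n * log n]."""
    return n ** (1 - beta)


def compare(n, beta):
    """Return (t, exact tail at t, union bound) with t = ceil(beta * n * log n)."""
    t = ceil(beta * n * log(n))
    return t, tail_probability(n, t), union_bound(n, beta)


if __name__ == "__main__":
    print(compare(50, 2))
```

Exact fractions avoid the cancellation errors that the alternating sum would otherwise suffer in floating point for small t.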

Extensions and generalizations

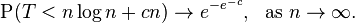

- Paul Erdős and Alfréd Rényi proved the limit theorem for the distribution of T. This result is a further extension of the previous bounds:

  $$
  P\left[T < n \log n + cn\right] \to e^{-e^{-c}}, \quad \text{as } n \to \infty.
  $$

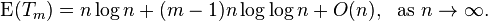

- Donald J. Newman and Lawrence Shepp found a generalization of the coupon collector's problem in which m copies of each coupon need to be collected. Let $T_m$ be the first time m copies of each coupon are collected. They showed that the expectation in this case satisfies:

  $$
  \operatorname{E}(T_m) = n \log n + (m - 1)\, n \log\log n + O(n), \quad \text{as } n \to \infty.
  $$

  Here m is fixed. When m = 1 we get the earlier formula for the expectation.

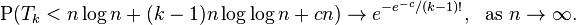

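- A common generalization, also due to Erdős and Rényi:

  $$
  P\left[T_m < n \log n + (m - 1)\, n \log\log n + cn\right] \to e^{-e^{-c}/(m-1)!}, \quad \text{as } n \to \infty.
  $$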

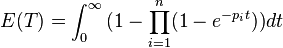

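- In the general case of a nonuniform probability distribution, according to Philippe Flajolet,

  $$
  \operatorname{E}(T) = \int_0^\infty \left(1 - \prod_{i=1}^{n} \left(1 - e^{-p_i t}\right)\right) dt,
  $$

  where $p_i$ here denotes the probability of drawing coupon i.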
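For a small number of coupons with unequal probabilities, the expected collection time can be evaluated directly. The sketch below uses a known inclusion-exclusion identity for the nonuniform case (the sum over nonempty subsets S of (−1)^(|S|+1) divided by the total probability of S), stated here as an assumption rather than taken from the text; the function name `expected_trials_nonuniform` is illustrative. The running time grows as 2^n, so it is only practical for small n.

```python
from itertools import combinations


def expected_trials_nonuniform(probs):
    """Expected draws to see every coupon when coupon i has probability probs[i].

    Uses inclusion-exclusion over nonempty subsets S of coupons:
    E(T) = sum over S of (-1)^(|S| + 1) / (sum of probs in S).
    """
    n = len(probs)
    total = 0.0
    for size in range(1, n + 1):
        sign = 1.0 if size % 2 == 1 else -1.0
        for subset in combinations(probs, size):
            total += sign / sum(subset)
    return total


if __name__ == "__main__":
    # Uniform case with 3 coupons reproduces 3 * H_3 = 5.5.
    print(expected_trials_nonuniform([1 / 3, 1 / 3, 1 / 3]))
    # A rare coupon dominates the waiting time.
    print(expected_trials_nonuniform([0.9, 0.1]))
```

With equal probabilities the result reduces to n times the n-th harmonic number, which gives a convenient sanity check.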

See also

- Watterson estimator

- Birthday problem - This is the problem of how many coupons must be drawn before seeing a duplicate.

Notes

- ↑ Here and throughout this article, "log" refers to the natural logarithm rather than a logarithm to some other base. The use of Θ here invokes big O notation.

- ↑ E(50) = 50(1 + 1/2 + 1/3 + ... + 1/50) = 224.9603, the expected number of trials to collect all 50 coupons. The approximation $n \log n + \gamma n + 1/2$ for this expected number gives in this case $50 \log 50 + 50\gamma + 1/2 \approx 195.6011 + 28.8608 + 0.5 \approx 224.9619$.

References

- Blom, Gunnar; Holst, Lars; Sandell, Dennis (1994), "7.5 Coupon collecting I, 7.6 Coupon collecting II, and 15.4 Coupon collecting III", Problems and Snapshots from the World of Probability, New York: Springer-Verlag, pp. 85–87, 191, ISBN 0-387-94161-4, MR 1265713.

- Dawkins, Brian (1991), "Siobhan's problem: the coupon collector revisited", The American Statistician 45 (1): 76–82, JSTOR 2685247.

- Erdős, Paul; Rényi, Alfréd (1961), "On a classical problem of probability theory", Magyar Tudományos Akadémia Matematikai Kutató Intézetének Közleményei 6: 215–220, MR 0150807.

- Newman, Donald J.; Shepp, Lawrence (1960), "The double dixie cup problem", American Mathematical Monthly 67: 58–61, doi:10.2307/2308930, MR 0120672.

- Flajolet, Philippe; Gardy, Danièle; Thimonier, Loÿs (1992), "Birthday paradox, coupon collectors, caching algorithms and self-organizing search", Discrete Applied Mathematics 39 (3): 207–229, doi:10.1016/0166-218X(92)90177-C, MR 1189469.

- Isaac, Richard (1995), "8.4 The coupon collector's problem solved", The Pleasures of Probability, Undergraduate Texts in Mathematics, New York: Springer-Verlag, pp. 80–82, ISBN 0-387-94415-X, MR 1329545.

- Motwani, Rajeev; Raghavan, Prabhakar (1995), "3.6. The Coupon Collector's Problem", Randomized algorithms, Cambridge: Cambridge University Press, pp. 57–63, MR 1344451.

External links

- "Coupon Collector Problem" by Ed Pegg, Jr., the Wolfram Demonstrations Project. Mathematica package.

- Coupon Collector Problem, a simple Java applet.

- How Many Singles, Doubles, Triples, Etc., Should The Coupon Collector Expect?, a short note by Doron Zeilberger.
