Correlation ratio

In statistics, the correlation ratio is a measure of the relationship between the statistical dispersion within individual categories and the dispersion across the whole population or sample. The measure is defined as the ratio of two standard deviations representing these types of variation. The context here is the same as that of the intraclass correlation coefficient, whose value is the square of the correlation ratio.

Definition

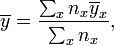

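Suppose each observation is $y_{xi}$, where $x$ indicates the category that the observation is in and $i$ is the label of the particular observation. Let $n_x$ be the number of observations in category $x$ and

$$\overline{y}_x = \frac{\sum_i y_{xi}}{n_x} \quad\text{and}\quad \overline{y} = \frac{\sum_x n_x \overline{y}_x}{\sum_x n_x},$$

where $\overline{y}_x$ is the mean of category $x$ and $\overline{y}$ is the mean of the whole population.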

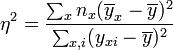

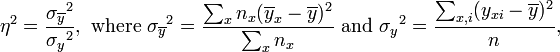

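The correlation ratio $\eta$ (eta) is defined to satisfy

$$\eta^2 = \frac{\sum_x n_x (\overline{y}_x - \overline{y})^2}{\sum_{x,i} (y_{xi} - \overline{y})^2},$$

which can be written as

$$\eta^2 = \frac{\sigma_{\overline{y}}^2}{\sigma_y^2}, \quad\text{where}\quad \sigma_{\overline{y}}^2 = \frac{\sum_x n_x (\overline{y}_x - \overline{y})^2}{\sum_x n_x} \quad\text{and}\quad \sigma_y^2 = \frac{\sum_{x,i} (y_{xi} - \overline{y})^2}{\sum_x n_x},$$

i.e. the weighted variance of the category means divided by the variance of all samples.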
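The definition can be illustrated with a minimal Python sketch. The function name `correlation_ratio`, the dictionary layout and the sample values are assumptions made for illustration only; the code assumes every category is non-empty and that not all observations are equal.

```python
from math import sqrt


def correlation_ratio(groups):
    """Return eta for a mapping {category: list of observations}.

    Hypothetical helper: assumes non-empty categories and at least
    two distinct observed values overall.
    """
    n = sum(len(ys) for ys in groups.values())
    grand_mean = sum(sum(ys) for ys in groups.values()) / n
    # Numerator: sum over categories of n_x * (category mean - overall mean)^2
    between = sum(
        len(ys) * (sum(ys) / len(ys) - grand_mean) ** 2
        for ys in groups.values()
    )
    # Denominator: sum over all observations of (y_xi - overall mean)^2
    total = sum((y - grand_mean) ** 2 for ys in groups.values() for y in ys)
    return sqrt(between / total)


# Made-up data: two categories with three observations each
sample = {"a": [1.0, 2.0, 3.0], "b": [4.0, 5.0, 6.0]}
eta = correlation_ratio(sample)
print(eta ** 2)  # equals 13.5 / 17.5 up to rounding error
```

Dividing numerator and denominator by the total number of observations gives the ratio of variances, so the two forms of the definition yield the same value.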

It is worth noting that if the relationship between values of $x$ and values of $\overline{y}_x$ is linear (which is certainly true when there are only two possibilities for $x$), this will give the same result as the square of Pearson's correlation coefficient; otherwise the correlation ratio will be larger in magnitude. It can therefore be used for judging non-linear relationships.
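The comparison with Pearson's coefficient can be checked numerically. The following sketch uses made-up data and hypothetical helper names (`pearson_r_squared`, `eta_squared`); the categories are coded as numbers so that Pearson's coefficient is defined.

```python
def pearson_r_squared(xs, ys):
    """Square of Pearson's correlation coefficient between xs and ys."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy ** 2 / (sxx * syy)


def eta_squared(xs, ys):
    """Squared correlation ratio of ys grouped by the category codes xs."""
    my = sum(ys) / len(ys)
    groups = {}
    for x, y in zip(xs, ys):
        groups.setdefault(x, []).append(y)
    between = sum(len(g) * (sum(g) / len(g) - my) ** 2 for g in groups.values())
    total = sum((y - my) ** 2 for y in ys)
    return between / total


# Two categories: the category means necessarily lie on a straight line
xs2 = [0, 0, 0, 1, 1, 1]
ys2 = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]

# Three categories whose means rise and then fall (non-linear)
xs3 = [0, 0, 1, 1, 2, 2]
ys3 = [1.0, 2.0, 5.0, 6.0, 1.0, 2.0]

print(eta_squared(xs2, ys2), pearson_r_squared(xs2, ys2))  # agree
print(eta_squared(xs3, ys3), pearson_r_squared(xs3, ys3))  # eta^2 is larger
```

In the second data set Pearson's coefficient is essentially zero, while the correlation ratio stays close to 1 because the category means differ strongly.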

Range

The correlation ratio $\eta$ takes values between 0 and 1. The limit $\eta = 0$ represents the special case of no dispersion among the means of the different categories, while $\eta = 1$ refers to no dispersion within the respective categories. Note further that $\eta$ is undefined when all data points of the complete population take the same value.
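The two limits and the undefined case can be reproduced with small made-up data sets. In this sketch the helper `eta` is hypothetical, and returning `None` for the undefined case is an arbitrary choice made for illustration.

```python
from math import sqrt


def eta(groups):
    """Correlation ratio for a list of non-empty lists; None if undefined."""
    values = [y for g in groups for y in g]
    mean = sum(values) / len(values)
    total = sum((y - mean) ** 2 for y in values)
    if total == 0:
        return None  # all observations equal: the ratio would be 0/0
    between = sum(len(g) * (sum(g) / len(g) - mean) ** 2 for g in groups)
    return sqrt(between / total)


same_means = eta([[1.0, 3.0], [0.0, 4.0]])  # both category means are 2
no_within = eta([[5.0, 5.0], [9.0, 9.0]])   # no spread inside any category
constant = eta([[7.0, 7.0], [7.0, 7.0]])    # every observation identical

print(same_means, no_within, constant)
```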

Example

Suppose there is a distribution of test scores in three topics (categories):

- Algebra: 45, 70, 29, 15 and 21 (5 scores)

- Geometry: 40, 20, 30 and 42 (4 scores)

- Statistics: 65, 95, 80, 70, 85 and 73 (6 scores).

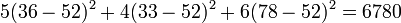

Then the subject averages are 36, 33 and 78, with an overall average of 52.

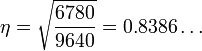

The sums of squares of the differences from the subject averages are 1952 for Algebra, 308 for Geometry and 600 for Statistics, adding to 2860. The overall sum of squares of the differences from the overall average is 9640. The difference of 6780 between these is also the weighted sum of the squares of the differences between the subject averages and the overall average:

$$5(36 - 52)^2 + 4(33 - 52)^2 + 6(78 - 52)^2 = 6780.$$

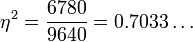

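This gives

$$\eta^2 = \frac{6780}{9640} \approx 0.7033,$$

suggesting that most of the overall dispersion is a result of differences between topics, rather than within topics. Taking the square root gives

$$\eta = \sqrt{\frac{6780}{9640}} \approx 0.8386.$$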

Observe that for $\eta = 1$ the overall sample dispersion is purely due to dispersion among the categories and not at all due to dispersion within the individual categories. To see this quickly, imagine that all Algebra, Geometry and Statistics scores are the same within each topic, e.g. five scores of 36, four scores of 33 and six scores of 78.

The limit $\eta = 0$ refers to the case in which dispersion among the categories contributes nothing to the overall dispersion. The trivial requirement for this extreme is that all category means are the same.
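The figures in this example can be checked with a short Python script. The variable names are chosen for illustration; the scores are those listed above.

```python
from math import sqrt

scores = {
    "Algebra": [45, 70, 29, 15, 21],
    "Geometry": [40, 20, 30, 42],
    "Statistics": [65, 95, 80, 70, 85, 73],
}

n = sum(len(v) for v in scores.values())
overall_mean = sum(sum(v) for v in scores.values()) / n
means = {k: sum(v) / len(v) for k, v in scores.items()}

# Sum of squared differences from each subject's own average
within = sum((y - means[k]) ** 2 for k, v in scores.items() for y in v)
# Weighted sum of squared differences between subject and overall averages
between = sum(len(v) * (means[k] - overall_mean) ** 2 for k, v in scores.items())
# Sum of squared differences from the overall average
total = sum((y - overall_mean) ** 2 for v in scores.values() for y in v)

eta_squared = between / total
eta = sqrt(eta_squared)

print(means, overall_mean)      # subject averages 36, 33, 78; overall 52
print(within, between, total)   # 2860.0 6780.0 9640.0
print(round(eta_squared, 4), round(eta, 4))  # 0.7033 0.8386
```

The script also confirms that the within-topic and between-topic sums of squares add up to the overall sum of squares.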

Pearson v. Fisher

The correlation ratio was introduced by Karl Pearson as part of analysis of variance. Ronald Fisher commented:

As a descriptive statistic the utility of the correlation ratio is extremely limited. It will be noticed that the number of degrees of freedom in the numerator of $\eta^2$ depends on the number of the arrays[1]

to which Egon Pearson (Karl's son) responded by saying

Again, a long-established method such as the use of the correlation ratio [§45 The "Correlation Ratio" η] is passed over in a few words without adequate description, which is perhaps hardly fair to the student who is given no opportunity of judging its scope for himself.[2]

References

- [1] Fisher R.A. (1926) Statistical Methods for Research Workers, ISBN 0-05-002170-2.

- [2] Pearson E.S. (1926) "Review of Statistical Methods for Research Workers (R. A. Fisher)", Science Progress, 20, 733-734.