Scalability

In electronics (including hardware, communication and software), scalability is the ability of a system, network, or process to handle a growing amount of work in a capable manner, or its ability to be enlarged to accommodate that growth.[1] For example, it can refer to the capability of a system to increase total throughput under an increased load when resources (typically hardware) are added. An analogous meaning is implied when the word is used in a commercial context, where scalability of a company implies that the underlying business model offers the potential for economic growth within the company.

Scalability, as a property of systems, is generally difficult to define,[2] and in any particular case it is necessary to define the specific requirements for scalability on those dimensions that are deemed important. It is a highly significant issue in electronics systems, databases, routers, and networking. A system whose performance improves after adding hardware, proportionally to the capacity added, is said to be a scalable system. An algorithm, design, networking protocol, program, or other system is said to scale if it remains suitably efficient and practical when applied to large problems (e.g. a large input data set or a large number of participating nodes in the case of a distributed system). If the design fails when the quantity increases, it does not scale.

Scalability is desirable in technology as well as business settings. The base concept is consistent: the ability of a business or technology to accept increased volume without impacting the contribution margin (= revenue - variable costs). For example, a given piece of equipment may have capacity for 1 to 1,000 users; beyond 1,000 users, additional equipment is needed or performance will decline (variable costs will increase and reduce the contribution margin).

Measures

Scalability can be measured in various dimensions, such as:

- Administrative scalability: The ability for an increasing number of organizations to easily share a single distributed system.

- Functional scalability: The ability to enhance the system by adding new functionality at minimal effort.

- Geographic scalability: The ability to maintain performance, usefulness, or usability regardless of expansion from concentration in a local area to a more distributed geographic pattern.

- Load scalability: The ability for a distributed system to easily expand and contract its resource pool to accommodate heavier or lighter loads. Alternatively, the ease with which a system or component can be modified, added, or removed, to accommodate changing load.

Examples

- A routing protocol is considered scalable with respect to network size, if the size of the necessary routing table on each node grows as O(log N), where N is the number of nodes in the network.

- A scalable online transaction processing system or database management system is one that can be upgraded to process more transactions by adding new processors, devices and storage, and which can be upgraded easily and transparently without shutting it down.

- Some early peer-to-peer (P2P) implementations of Gnutella had scaling issues. Each node flooded its queries to all peers, so the demand on each peer increased in proportion to the total number of peers, quickly overrunning the peers' limited capacity. Other P2P systems like BitTorrent scale well because the demand on each peer is independent of the total number of peers. There is no centralized bottleneck, so the system may expand indefinitely without the addition of supporting resources (other than the peers themselves).

- The distributed nature of the Domain Name System allows it to work efficiently even when all hosts on the worldwide Internet are served, so it is said to "scale well".
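The growth rates mentioned in the routing-protocol and Gnutella examples can be compared with a toy calculation. The sketch below is illustrative only: the function names, the fixed query rate per peer, and the choice of base-2 logarithm with a constant factor of 1 are assumptions made for this example, not properties of any real protocol.

```python
def flooding_messages_per_peer(num_peers: int, queries_per_peer: float) -> float:
    """Toy model of query flooding: every query issued by every other
    peer reaches this peer, so the load grows linearly with network size."""
    if num_peers < 1:
        raise ValueError("num_peers must be at least 1")
    return (num_peers - 1) * queries_per_peer


def routing_table_entries(num_nodes: int) -> int:
    """Toy model of an O(log N) routing table: ceil(log2(N)) entries.
    (n - 1).bit_length() equals ceil(log2(n)) for every integer n >= 1."""
    if num_nodes < 1:
        raise ValueError("num_nodes must be at least 1")
    return (num_nodes - 1).bit_length()


if __name__ == "__main__":
    # Assumed rate: each peer issues 2 queries per second.
    for n in (10, 1_000, 100_000, 10_000_000):
        load = flooding_messages_per_peer(n, 2.0)
        table = routing_table_entries(n)
        print(f"N={n:>10,}  flooding load/peer={load:>14,.0f} msg/s  "
              f"routing table={table} entries")
```

In this model, growing the network by a factor of one million multiplies the per-peer flooding load by roughly the same factor, while the logarithmic routing table gains only about twenty entries.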

Scale horizontally vs. vertically

Methods of adding more resources for a particular application fall into two broad categories:[3]

Scale horizontally (scale out)

To scale horizontally (or scale out) means to add more nodes to a system, such as adding a new computer to a distributed software application. An example might be scaling out from one Web server system to three.

As computer prices drop and performance continues to increase, low-cost "commodity" systems can be used for high performance computing applications such as seismic analysis and biotechnology workloads that could in the past only be handled by supercomputers. Hundreds of small computers may be configured in a cluster to obtain aggregate computing power that often exceeds that of a single traditional RISC-processor-based scientific computer. This model has further been fueled by the availability of high performance interconnects such as Myrinet and InfiniBand technologies. It has also led to demand for features such as remote maintenance and batch processing management previously not available for "commodity" systems.

The scale-out model has created an increased demand for shared data storage with very high I/O performance, especially where processing of large amounts of data is required, such as in seismic analysis. This has fueled the development of new storage technologies such as object storage devices.

Scale vertically (scale up)

To scale vertically (or scale up) means to add resources to a single node in a system, typically involving the addition of CPUs or memory to a single computer. Such vertical scaling of existing systems also enables them to use virtualization technology more effectively, as it provides more resources for the hosted set of operating system and application modules to share.

Taking advantage of such resources can also be called "scaling up", such as expanding the number of Apache daemon processes currently running.

Tradeoffs

There are tradeoffs between the two models. A larger number of computers means increased management complexity, as well as a more complex programming model and issues such as throughput and latency between nodes; also, some applications do not lend themselves to a distributed computing model. In the past, the price difference between the two models has favored "scale out" computing for those applications that fit its paradigm, but recent advances in virtualization technology have blurred that advantage, since deploying a new virtual system over a hypervisor (where possible) is almost always less expensive than actually buying and installing a real one. Configuring an existing idle system has always been less expensive than buying, installing, and configuring a new one, regardless of the model.

Database scalability

A number of different approaches enable databases to grow to very large size while supporting an ever-increasing rate of transactions per second. Not to be discounted, of course, is the rapid pace of hardware advances in both the speed and capacity of mass storage devices, as well as similar advances in CPU and networking speed. Beyond that, a variety of architectures are employed in the implementation of very large-scale databases.

One technique supported by most of the major database management system (DBMS) products is the partitioning of large tables, based on ranges of values in a key field. In this manner, the database can be scaled out across a cluster of separate database servers. Also, with the advent of 64-bit microprocessors, multi-core CPUs, and large SMP multiprocessors, DBMS vendors have been at the forefront of supporting multi-threaded implementations that substantially scale up transaction processing capacity.
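The range-based partitioning described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not how any particular DBMS implements it: the class name `RangePartitioner`, the split keys, and the server names `db0` to `db2` are all hypothetical.

```python
from bisect import bisect_right


class RangePartitioner:
    """Maps a key to a server based on sorted split points.

    With split points [1000, 2000] and three servers:
      key < 1000          -> servers[0]
      1000 <= key < 2000  -> servers[1]
      key >= 2000         -> servers[2]
    """

    def __init__(self, split_points, servers):
        if list(split_points) != sorted(split_points):
            raise ValueError("split_points must be sorted")
        if len(servers) != len(split_points) + 1:
            raise ValueError("need exactly one more server than split points")
        self.split_points = list(split_points)
        self.servers = list(servers)

    def server_for(self, key):
        # bisect_right counts how many split points are <= key,
        # which is the index of the partition that holds the key.
        return self.servers[bisect_right(self.split_points, key)]


if __name__ == "__main__":
    # Hypothetical cluster of three database servers.
    partitioner = RangePartitioner([1000, 2000], ["db0", "db1", "db2"])
    for customer_id in (42, 1000, 1999, 2500):
        print(customer_id, "->", partitioner.server_for(customer_id))
```

Because the lookup is a binary search over the split points, routing a key stays cheap even when the table is divided into many ranges.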

Network-attached storage (NAS) and Storage area networks (SANs) coupled with fast local area networks and Fibre Channel technology enable still larger, more loosely coupled configurations of databases and distributed computing power. The widely supported X/Open XA standard employs a global transaction monitor to coordinate distributed transactions among semi-autonomous XA-compliant database resources. Oracle RAC uses a different model to achieve scalability, based on a "shared-everything" architecture that relies upon high-speed connections between servers.

While DBMS vendors debate the relative merits of their favored designs, some companies and researchers question the inherent limitations of relational database management systems. GigaSpaces, for example, contends that an entirely different model of distributed data access and transaction processing, named Space based architecture, is required to achieve the highest performance and scalability.[4] On the other hand, Base One makes the case for extreme scalability without departing from mainstream database technology.[5] In either case, there appears to be no limit in sight to database scalability.

Design for scalability

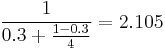

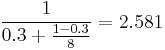

It is often advised to focus system design on hardware scalability rather than on capacity. It is typically cheaper to add a new node to a system in order to achieve improved performance than to partake in performance tuning to improve the capacity that each node can handle. But this approach can have diminishing returns (as discussed in performance engineering). For example: suppose 70% of a program can be sped up if parallelized and run on multiple CPUs instead of one. If $\alpha$ is the fraction of a calculation that is sequential, and $1-\alpha$ is the fraction that can be parallelized, the maximum speedup that can be achieved by using $P$ processors is given according to Amdahl's Law: $\frac{1}{\alpha + \frac{1-\alpha}{P}}$. Substituting the value for this example, using 4 processors we get $\frac{1}{0.3 + \frac{1-0.3}{4}} \approx 2.105$. If we double the compute power to 8 processors we get $\frac{1}{0.3 + \frac{1-0.3}{8}} \approx 2.581$. Doubling the processing power has only improved the speedup by roughly one-fifth. If the whole problem was parallelizable, we would, of course, expect the speed up to double also. Therefore, throwing in more hardware is not necessarily the optimal approach.
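The diminishing returns in this example can be checked numerically. The following is a minimal Python sketch, assuming Amdahl's Law in its usual form, speedup = 1 / (s + (1 - s) / P), where s is the sequential fraction (0.3 here, since 70% of the program is parallelizable) and P is the number of processors. The function name `amdahl_speedup` is an illustrative choice, not part of any library.

```python
def amdahl_speedup(sequential_fraction: float, processors: int) -> float:
    """Maximum speedup under Amdahl's Law: 1 / (s + (1 - s) / P)."""
    if not 0.0 <= sequential_fraction <= 1.0:
        raise ValueError("sequential_fraction must be between 0 and 1")
    if processors < 1:
        raise ValueError("processors must be at least 1")
    parallel_fraction = 1.0 - sequential_fraction
    return 1.0 / (sequential_fraction + parallel_fraction / processors)


if __name__ == "__main__":
    s = 0.3  # 70% of the program can be parallelized
    for p in (1, 2, 4, 8, 16, 1024):
        print(f"P={p:>4}  speedup={amdahl_speedup(s, p):.3f}")
    # Relative gain from doubling 4 processors to 8
    gain = amdahl_speedup(s, 8) / amdahl_speedup(s, 4) - 1.0
    print(f"gain from 4 -> 8 processors: {gain:.1%}")
```

However many processors are added, the speedup in this model can never exceed 1 / s, which is about 3.33 for a 30% sequential fraction.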

Weak versus strong scaling

In the context of high performance computing there are two common notions of scalability. The first is strong scaling, which is defined as how the solution time varies with the number of processors for a fixed total problem size.[6] The second is weak scaling, which is defined as how the solution time varies with the number of processors for a fixed problem size per processor.
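Both notions are often summarized as an efficiency figure computed from measured run times. The sketch below uses commonly cited definitions that are not taken from this article's sources: strong-scaling efficiency as t1 / (N * tN) and weak-scaling efficiency as t1 / tN, where t1 is the run time on one processor and tN the run time on N processors. The function names and the timings in the usage section are hypothetical.

```python
def strong_scaling_efficiency(t1: float, tn: float, n: int) -> float:
    """Fixed total problem size: ideal run time on n processors is t1 / n,
    so efficiency is t1 / (n * tn). A value of 1.0 means perfect scaling."""
    if t1 <= 0 or tn <= 0 or n < 1:
        raise ValueError("times must be positive and n at least 1")
    return t1 / (n * tn)


def weak_scaling_efficiency(t1: float, tn: float) -> float:
    """Fixed problem size per processor: ideal run time stays at t1 as
    processors (and total work) grow, so efficiency is t1 / tn."""
    if t1 <= 0 or tn <= 0:
        raise ValueError("times must be positive")
    return t1 / tn


if __name__ == "__main__":
    # Hypothetical timings in seconds, for illustration only.
    print(f"strong: {strong_scaling_efficiency(100.0, 30.0, 4):.2f}")
    print(f"weak:   {weak_scaling_efficiency(100.0, 125.0):.2f}")
```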

See also

- Amdahl's law

- Asymptotic complexity

- Gustafson's law

- List of system quality attributes

- Load balancing (computing)

- Lock (computer science)

- NoSQL

- Parallelism in computing

- Performance engineering

- Scalable Video Coding (SVC)

- Similitude

- Space-based architecture (SBA)

References

- [1] André B. Bondi, 'Characteristics of scalability and their impact on performance', Proceedings of the 2nd international workshop on Software and performance, Ottawa, Ontario, Canada, 2000, ISBN 1-58113-195-X, pages 195-203.

- [2] See for instance, Mark D. Hill, 'What is scalability?' in ACM SIGARCH Computer Architecture News, December 1990, Volume 18 Issue 4, pages 18-21 (ISSN 0163-5964), and Leticia Duboc, David S. Rosenblum, Tony Wicks, 'Doctoral symposium: presentations: A framework for modelling and analysis of software systems scalability' in Proceeding of the 28th international conference on Software engineering ICSE '06, May 2006, ISBN 1-59593-375-1, pages 949-952.

- [3] Michael, M.; J.E. Moreira, D. Shiloach, R.W. Wisniewski (March 26, 2007). "Scale-up x Scale-out: A Case Study using Nutch/Lucene". Parallel and Distributed Processing Symposium, 2007. IPDPS 2007. IEEE International. http://ieeexplore.ieee.org/xpl/freeabs_all.jsp?arnumber=4228359. Retrieved 2008-01-10.

- [4] GigaSpaces. "Space-Based Architecture and The End of Tier-based Computing", 2006. Retrieved on May 23, 2007.

- [5] Base One. "Database Scalability - Dispelling myths about the limits of database-centric architecture", 2007. Retrieved on May 23, 2007.

- [6] The Weak Scaling of DL_POLY 3

External links

- Architecture of a Highly Scalable NIO-Based Server - an article about writing a scalable server in Java (java.net).

- Links to diverse learning resources - page curated by the memcached project.

- Scalable Definition - by The Linux Information Project (LINFO)