Lukaszyk–Karmowski metric

In mathematics, the Lukaszyk–Karmowski metric is a function defining a distance between two random variables or two random vectors.[1][2] Strictly speaking, this function is not a metric, because it does not satisfy the identity of indiscernibles condition: for two identical arguments its value is, in general, greater than zero.


Continuous random variables

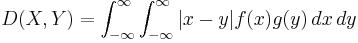

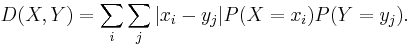

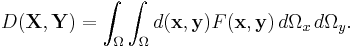

The Lukaszyk–Karmowski metric D between two continuous independent random variables X and Y is defined as:

$$D(X, Y) = \int_{-\infty}^{\infty}\int_{-\infty}^{\infty} |x - y|\, f(x)\, g(y)\, dx\, dy$$

where f(x) and g(y) are the probability density functions of X and Y respectively.
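The double integral can be approximated numerically. The following is a minimal sketch, assuming both densities vanish outside a common finite interval; the helper names `lk_metric` and `uniform01` are hypothetical and chosen only for this illustration.

```python
def lk_metric(f, g, lo, hi, n=200):
    """Midpoint-rule estimate of the double integral of |x - y| f(x) g(y)
    over the square [lo, hi] x [lo, hi]."""
    h = (hi - lo) / n
    pts = [lo + (i + 0.5) * h for i in range(n)]
    fx = [f(x) for x in pts]
    gy = [g(y) for y in pts]
    total = 0.0
    for x, fxi in zip(pts, fx):
        for y, gyj in zip(pts, gy):
            total += abs(x - y) * fxi * gyj
    return total * h * h


def uniform01(t):
    """Density of the uniform distribution on [0, 1]."""
    return 1.0 if 0.0 <= t <= 1.0 else 0.0


# Distance of a uniform variable to an independent copy of itself.
d_xx = lk_metric(uniform01, uniform01, 0.0, 1.0)
print(d_xx)
```

For this particular case the exact value is 1/3, so the printed estimate should lie close to it. The result is strictly positive even though both arguments have the same distribution.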

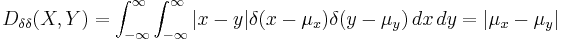

One may easily show that such metrics above do not satisfy the identity of indiscernibles condition required to be satisfied by the metric of the metric space. In fact they satisfy this condition if and only if both arguments X, Y are certain events described by Dirac delta density probability distribution functions. In such a case:

the Lukaszyk-Karmowski metric simply transforms into the metric between expected values  ,

,  of the variables X and Y and obviously:

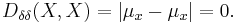

of the variables X and Y and obviously:

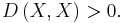

In all other cases, however:

$$D(X, X) > 0$$

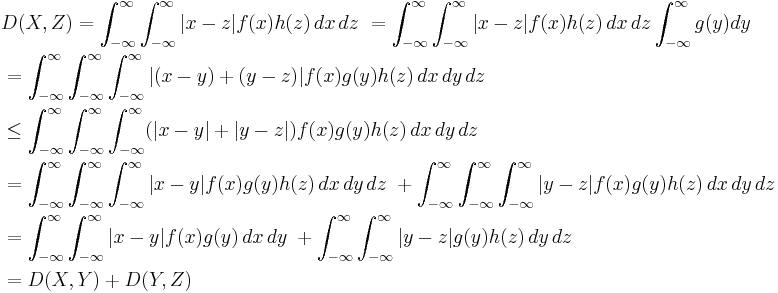

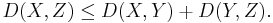

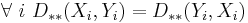

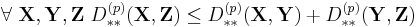

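The Lukaszyk–Karmowski metric satisfies the remaining non-negativity and symmetry conditions of a metric directly from its definition (symmetry of the modulus), as well as the subadditivity (triangle inequality) condition. For a third random variable Z with probability density function h(z), independent of X and Y:

$$
\begin{aligned}
\int\!\!\int |x - y|\, f(x)\, g(y)\, dx\, dy
&= \int\!\!\int\!\!\int |x - y|\, f(x)\, g(y)\, h(z)\, dx\, dy\, dz \\
&\le \int\!\!\int\!\!\int \left(|x - z| + |z - y|\right) f(x)\, g(y)\, h(z)\, dx\, dy\, dz \\
&= \int\!\!\int |x - z|\, f(x)\, h(z)\, dx\, dz + \int\!\!\int |z - y|\, g(y)\, h(z)\, dy\, dz
\end{aligned}
$$

Thus

$$D(X, Y) \le D(X, Z) + D(Z, Y)$$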

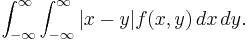

In the case where X and Y are dependent on each other, having a joint probability density function f(x, y), the L–K metric has the following form:

$$D(X, Y) = \int_{-\infty}^{\infty}\int_{-\infty}^{\infty} |x - y|\, f(x, y)\, dx\, dy$$

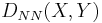

Example: two continuous random variables with normal distributions (NN)

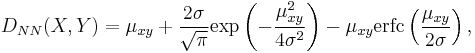

If both random variables X and Y have normal distributions with the same standard deviation σ, and if moreover X and Y are independent, then D(X, Y) is given by

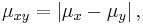

where

where erfc(x) is the complementary error function and where the subscripts NN indicate the type of the L-K metric.

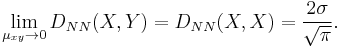

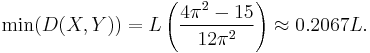

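In this case, the lowest possible value of the function D_NN(X, Y), attained when the means coincide (μx = μy), is given by

$$D_{NN}(X, Y)\big|_{\mu_x = \mu_y} = \frac{2\sigma}{\sqrt{\pi}}$$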
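The normal–normal case can be evaluated directly with the standard library. The sketch below assumes the closed form stated in its docstring, which is the mean absolute difference of two independent normal variables with common standard deviation σ; the function name `d_nn` is hypothetical.

```python
import math


def d_nn(mu_x, mu_y, sigma):
    """D_NN = mu + (2*sigma/sqrt(pi)) * exp(-mu^2 / (4*sigma^2)) - mu * erfc(mu / (2*sigma)),
    with mu = |mu_x - mu_y| and sigma > 0."""
    mu = abs(mu_x - mu_y)
    return (mu
            + 2.0 * sigma / math.sqrt(math.pi) * math.exp(-mu * mu / (4.0 * sigma * sigma))
            - mu * math.erfc(mu / (2.0 * sigma)))


sigma = 1.0
floor = 2.0 * sigma / math.sqrt(math.pi)  # value at coinciding means
for gap in (0.0, 0.5, 1.0, 2.0, 5.0):
    print(gap, d_nn(0.0, gap, sigma))
```

For coinciding means the function returns 2σ/√π, and for means far apart relative to σ it approaches the plain distance |μx − μy|.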

Example: two continuous random variables with uniform distributions (RR)

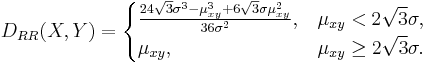

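When both random variables X and Y are independent and have uniform distributions (R) with the same standard deviation σ, D(X, Y) is given by

$$
D_{RR}(X, Y) =
\begin{cases}
\dfrac{24\sqrt{3}\,\sigma^3 - \mu_{xy}^3 + 6\sqrt{3}\,\sigma\,\mu_{xy}^2}{36\,\sigma^2}, & \mu_{xy} < 2\sqrt{3}\,\sigma, \\[2ex]
\mu_{xy}, & \mu_{xy} \ge 2\sqrt{3}\,\sigma,
\end{cases}
\qquad \mu_{xy} = |\mu_x - \mu_y|
$$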

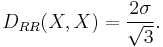

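The minimal value of this kind of L–K metric, attained when the means coincide, is

$$D_{RR}(X, Y)\big|_{\mu_x = \mu_y} = \frac{2\sigma}{\sqrt{3}}$$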
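A short sketch of the uniform–uniform case follows. It assumes two independent uniform variables with the same standard deviation σ, so that each density has support of width 2√3σ; the function name `d_rr` is hypothetical.

```python
import math


def d_rr(mu_x, mu_y, sigma):
    """Mean absolute difference of two independent uniform variables
    with means mu_x, mu_y and common standard deviation sigma > 0."""
    mu = abs(mu_x - mu_y)
    width = 2.0 * math.sqrt(3.0) * sigma  # support width of each density
    if mu >= width:
        # The supports no longer overlap, so the modulus can be dropped.
        return mu
    root3 = math.sqrt(3.0)
    return (24.0 * root3 * sigma ** 3 - mu ** 3 + 6.0 * root3 * sigma * mu ** 2) / (36.0 * sigma ** 2)


print(d_rr(0.0, 0.0, 1.0), 2.0 / math.sqrt(3.0))
```

The two printed numbers should agree, and the two branches join continuously at |μx − μy| = 2√3σ.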

Discrete random variables

If the random variables X and Y are independent and characterized by discrete probability distributions, the Lukaszyk–Karmowski metric D is defined as:

$$D(X, Y) = \sum_{i}\sum_{j} |x_i - y_j|\, P(X = x_i)\, P(Y = y_j)$$

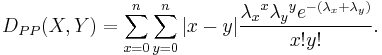

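For example, for two independent Poisson-distributed random variables X and Y with means λx and λy, the equation above becomes:

$$D_{PP}(X, Y) = \sum_{x=0}^{\infty}\sum_{y=0}^{\infty} |x - y|\, \frac{\lambda_x^{\,x}\, \lambda_y^{\,y}\, e^{-(\lambda_x + \lambda_y)}}{x!\; y!}$$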
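The discrete form lends itself to a direct summation. The sketch below treats two independent Poisson variables and truncates both sums at a cut-off `n_max`, which is an assumption of this example (adequate for small means); the names `poisson_pmf` and `d_pp` are hypothetical.

```python
import math


def poisson_pmf(k, lam):
    """Probability that a Poisson variable with mean lam equals k."""
    return math.exp(-lam) * lam ** k / math.factorial(k)


def d_pp(lam_x, lam_y, n_max=60):
    """Truncated double sum of |i - j| P(X = i) P(Y = j) for i, j = 0..n_max."""
    px = [poisson_pmf(k, lam_x) for k in range(n_max + 1)]
    py = [poisson_pmf(k, lam_y) for k in range(n_max + 1)]
    return sum(abs(i - j) * px[i] * py[j]
               for i in range(n_max + 1)
               for j in range(n_max + 1))


print(d_pp(2.0, 2.0))  # positive although both distributions are identical
print(d_pp(1.0, 3.0))  # at least the gap between the means
```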

Random vectors

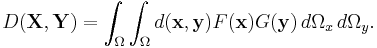

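The Lukaszyk–Karmowski metric of random variables may be easily extended into a metric D(X, Y) of independent random vectors X, Y by substituting |x − y| with any metric operator d(x, y):

$$D(X, Y) = \int_{\Omega}\int_{\Omega} d(x, y)\, F(x)\, G(y)\, d\Omega_x\, d\Omega_y$$

where F(x) and G(y) are the probability density functions of the vectors X and Y.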

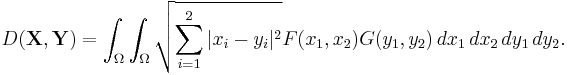

For example, substituting d(x, y) with the Euclidean metric and assuming two-dimensional random vectors X, Y would yield:

$$D(X, Y) = \int\!\!\int\!\!\int\!\!\int \sqrt{(x_1 - y_1)^2 + (x_2 - y_2)^2}\; F(x_1, x_2)\, G(y_1, y_2)\, dx_1\, dx_2\, dy_1\, dy_2$$

This form of the L–K metric is also greater than zero when the same vector is measured against itself (with the exception of vectors whose coefficients all have Dirac delta distributions) and satisfies the non-negativity and symmetry conditions of a metric. The proofs are analogous to the ones provided for the L–K metric of random variables discussed above.

If the random vectors X and Y are dependent on each other, sharing a common joint probability density function F(x, y), the L–K metric has the form:

$$D(X, Y) = \int_{\Omega}\int_{\Omega} d(x, y)\, F(x, y)\, d\Omega_x\, d\Omega_y$$
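For independent random vectors, the integral with a Euclidean d(x, y) can be estimated by sampling. The following Monte Carlo sketch assumes two-dimensional vectors with independent normal components; the function names, sample size, and seed are hypothetical choices for illustration.

```python
import math
import random


def lk_metric_2d(sample_x, sample_y, n=20000, seed=0):
    """Monte Carlo estimate of the mean Euclidean distance between
    independent draws of two 2-D random vectors."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n):
        x1, x2 = sample_x(rng)
        y1, y2 = sample_y(rng)
        total += math.hypot(x1 - y1, x2 - y2)
    return total / n


def gaussian_at(m1, m2, sigma):
    """Sampler for a 2-D vector with independent normal components."""
    def draw(rng):
        return rng.gauss(m1, sigma), rng.gauss(m2, sigma)
    return draw


same = lk_metric_2d(gaussian_at(0.0, 0.0, 1.0), gaussian_at(0.0, 0.0, 1.0))
print(same, math.sqrt(math.pi))
```

For two identically distributed isotropic normal vectors the difference has a Rayleigh-distributed length with mean σ√π, so the estimate stays well above zero, in line with the text above.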

Random vectors – the Euclidean form

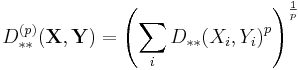

If the random vectors X and Y are not only mutually independent but also all components of each vector are mutually independent, the Lukaszyk–Karmowski metric for random vectors is defined as:

$$D_p(X, Y) = \left(\sum_i D(X_i, Y_i)^p\right)^{1/p}$$

where D(X_i, Y_i) is a particular form of the L–K metric of random variables, chosen according to the distributions of the particular coefficients X_i and Y_i of the vectors X, Y, and p ≥ 1 (p = 2 gives the Euclidean form).

Such a form of L–K metric also shares the common properties of all L–K metrics.

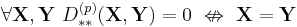

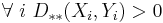

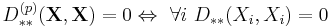

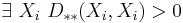

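- It does not satisfy the identity of indiscernibles condition:

$$D_p(X, X) > 0$$

- since:

$$D_p(X, X) = \left(\sum_i D(X_i, X_i)^p\right)^{1/p}$$

- but from the properties of the L–K metric for random variables it follows that:

$$D(X_i, X_i) > 0$$

- It is non-negative and symmetric, since the particular coefficients are also non-negative and symmetric:

$$D_p(X, Y) = \left(\sum_i D(X_i, Y_i)^p\right)^{1/p} = \left(\sum_i D(Y_i, X_i)^p\right)^{1/p} = D_p(Y, X) \ge 0$$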

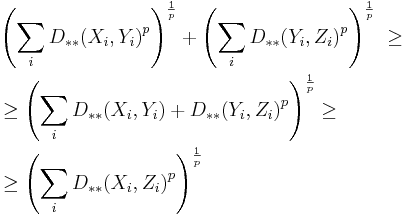

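- It satisfies the triangle inequality:

$$D_p(X, Y) \le D_p(X, Z) + D_p(Z, Y)$$

- since (cf. Minkowski inequality):

$$\left(\sum_i D(X_i, Y_i)^p\right)^{1/p} \le \left(\sum_i \left(D(X_i, Z_i) + D(Z_i, Y_i)\right)^p\right)^{1/p} \le \left(\sum_i D(X_i, Z_i)^p\right)^{1/p} + \left(\sum_i D(Z_i, Y_i)^p\right)^{1/p}$$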
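The component-wise form and its properties can be checked on a small example. The sketch below assumes that every coefficient is normally distributed, that matching coefficients share a standard deviation, and that the per-coefficient distance is the normal–normal closed form given in the docstring; the names `d_nn` and `d_p` are hypothetical.

```python
import math


def d_nn(mu_x, mu_y, sigma):
    """Normal-normal L-K metric for a common standard deviation sigma > 0:
    mu + (2*sigma/sqrt(pi)) * exp(-mu^2 / (4*sigma^2)) - mu * erfc(mu / (2*sigma))."""
    mu = abs(mu_x - mu_y)
    return (mu
            + 2.0 * sigma / math.sqrt(math.pi) * math.exp(-mu * mu / (4.0 * sigma * sigma))
            - mu * math.erfc(mu / (2.0 * sigma)))


def d_p(means_x, means_y, sigmas, p=2.0):
    """Combine per-coefficient distances with a p-norm (p = 2: Euclidean form)."""
    return sum(d_nn(mx, my, s) ** p
               for mx, my, s in zip(means_x, means_y, sigmas)) ** (1.0 / p)


sigmas = (1.0, 0.5)
X, Y, Z = (0.0, 0.0), (3.0, 4.0), (1.0, 1.0)
print(d_p(X, X, sigmas))                                     # greater than zero
print(d_p(X, Y, sigmas), d_p(Y, X, sigmas))                  # symmetric
print(d_p(X, Y, sigmas) <= d_p(X, Z, sigmas) + d_p(Z, Y, sigmas))
```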

Physical interpretation

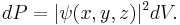

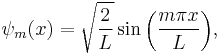

The Lukaszyk–Karmowski metric may be considered as a distance between quantum-mechanical particles described by wavefunctions ψ, where the probability dP that a given particle is present in a given volume of space dV amounts to:

$$dP = |\psi(x, y, z)|^2\, dV$$

A quantum particle in a box

For example, the wavefunction of a quantum particle (X) in a box of length L has the form:

$$\psi_m(x) = \sqrt{\frac{2}{L}}\, \sin\left(\frac{m \pi x}{L}\right)$$

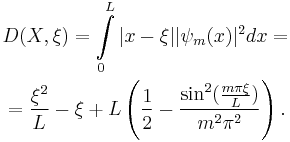

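In this case the L–K metric between this particle and any point ξ of the box amounts to:

$$D(X, \xi) = \int_0^L |x - \xi|\, |\psi_m(x)|^2\, dx = \frac{\xi^2}{L} - \xi + L\left(\frac{1}{2} - \frac{\sin^2\left(\frac{m \pi \xi}{L}\right)}{m^2 \pi^2}\right)$$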

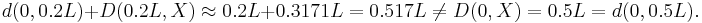

From the properties of the L–K metric it follows that the sum of the distance between the edge of the box (ξ = 0 or ξ = L) and any given point and the L–K metric between this point and the particle X is greater than the L–K metric between the edge of the box and the particle. E.g. for a quantum particle X at energy level m = 2 and point ξ = 0.2 (lengths in units of L):

$$d(0, 0.2) + D(0.2, X) \approx 0.2 + 0.3171 > D(0, X) = 0.5$$

Obviously the L–K metric between the particle and the edge of the box (D(0, X) or D(L, X)) amounts to 0.5L and is independent of the particle's energy level.
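The single-particle distances can be reproduced numerically. The sketch below assumes the position density (2/L) sin²(mπx/L) of a particle in a box and compares the closed form stated in the docstring with a plain midpoint-rule integration; the function names are hypothetical.

```python
import math


def d_point(xi, m, L=1.0):
    """Closed form: xi^2/L - xi + L * (1/2 - sin^2(m*pi*xi/L) / (m*pi)^2)."""
    return xi * xi / L - xi + L * (0.5 - math.sin(m * math.pi * xi / L) ** 2 / (m * math.pi) ** 2)


def d_point_numeric(xi, m, L=1.0, n=20000):
    """Midpoint-rule integral of |x - xi| * (2/L) * sin^2(m*pi*x/L) over [0, L]."""
    h = L / n
    total = 0.0
    for i in range(n):
        x = (i + 0.5) * h
        total += abs(x - xi) * (2.0 / L) * math.sin(m * math.pi * x / L) ** 2
    return total * h


print(d_point(0.2, 2), d_point_numeric(0.2, 2))  # both close to 0.3171 for L = 1
print([d_point(0.0, m) for m in (1, 2, 3)])      # 0.5 at the edge for every level
```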

Two quantum particles in a box

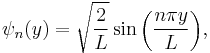

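The distance between two particles bouncing in a one-dimensional box of length L, having time-independent wavefunctions:

$$\psi_m(x) = \sqrt{\frac{2}{L}}\, \sin\left(\frac{m \pi x}{L}\right), \qquad \psi_n(y) = \sqrt{\frac{2}{L}}\, \sin\left(\frac{n \pi y}{L}\right)$$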

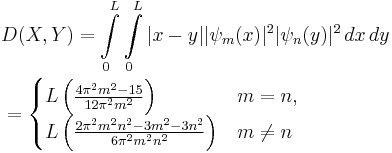

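may be defined in terms of the Lukaszyk–Karmowski metric of independent random variables as:

$$
D(X, Y) = \int_0^L\!\!\int_0^L |x - y|\, |\psi_m(x)|^2\, |\psi_n(y)|^2\, dx\, dy =
\begin{cases}
L\, \dfrac{4\pi^2 m^2 n^2 - 6 n^2 - 6 m^2}{12\, \pi^2 m^2 n^2}, & m \ne n, \\[2ex]
L\, \dfrac{4\pi^2 m^2 - 15}{12\, \pi^2 m^2}, & m = n
\end{cases}
$$

The distance between particles X and Y is minimal for m = 1 and n = 1, that is, for the minimum energy levels of these particles, and amounts to:

$$\min D(X, Y) = L\, \frac{4\pi^2 - 15}{12\pi^2} \approx 0.2067\, L$$

In accordance with the properties of this function, the minimum distance is nonzero. For higher energy levels m, n it approaches L/3.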
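The behaviour across energy levels can be tabulated with a few lines of code. The sketch below assumes the closed form obtained by integrating |x − y| against the two position densities (2/L) sin²(mπx/L) and (2/L) sin²(nπy/L); the function name `d_particles` is hypothetical.

```python
import math


def d_particles(m, n, L=1.0):
    """L-K distance between two independent particles in a box at levels m and n."""
    pi2 = math.pi ** 2
    if m == n:
        return L * (4.0 * pi2 * m * m - 15.0) / (12.0 * pi2 * m * m)
    return L * (4.0 * pi2 * m * m * n * n - 6.0 * n * n - 6.0 * m * m) / (12.0 * pi2 * m * m * n * n)


lowest = d_particles(1, 1)
print(lowest)               # about 0.2067 for L = 1
print(d_particles(50, 60))  # close to 1/3 for high levels
```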

Popular explanation

Suppose that we have to measure the distance between point µx and point µy, which are collinear with some point 0. Suppose further that we assign this task to two independent and large groups of surveyors equipped with tape measures, where each surveyor of the first group measures the distance between 0 and µx and each surveyor of the second group measures the distance between 0 and µy.

Under these assumptions we may consider the two sets of received observations xi, yj as random variables X and Y having normal distributions of the same variance σ² and centered on the "factual locations" of the points µx, µy.

Calculating the arithmetic mean of |xi − yj| over all pairs, we should then obtain the value of the L–K metric DNN(X, Y). Its characteristic curvilinearity arises from the symmetry of the modulus and the overlapping of the distributions f(x), g(y) when their means approach each other.
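The surveyors' experiment can be simulated. The sketch below is an illustration under assumed values: the group size, the seed, the point locations 10 and 11, and σ = 1 are all hypothetical, and the closed form in `d_nn` is the normal–normal expression given in its docstring.

```python
import math
import random


def d_nn(mu_x, mu_y, sigma):
    """mu + (2*sigma/sqrt(pi)) * exp(-mu^2 / (4*sigma^2)) - mu * erfc(mu / (2*sigma)),
    with mu = |mu_x - mu_y|."""
    mu = abs(mu_x - mu_y)
    return (mu
            + 2.0 * sigma / math.sqrt(math.pi) * math.exp(-mu * mu / (4.0 * sigma * sigma))
            - mu * math.erfc(mu / (2.0 * sigma)))


def surveyors_mean_gap(mu_x, mu_y, sigma, n=500, seed=1):
    """Each group of n surveyors reports noisy readings; return the mean of
    |x_i - y_j| over all pairs together with the raw readings."""
    rng = random.Random(seed)
    xs = [rng.gauss(mu_x, sigma) for _ in range(n)]
    ys = [rng.gauss(mu_y, sigma) for _ in range(n)]
    pair_mean = sum(abs(x - y) for x in xs for y in ys) / (n * n)
    return pair_mean, xs, ys


pair_mean, xs, ys = surveyors_mean_gap(10.0, 11.0, 1.0)
print(pair_mean, d_nn(10.0, 11.0, 1.0))
```

The simulated pairwise mean should land near the closed-form value and above the true gap of 1 between the points, which illustrates the curvilinearity described above.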

An interesting experiment whose results coincide with the properties of the L–K metric was performed in 1967 by Robert Moyer and Thomas Landauer, who measured the precise time an adult took to decide which of two Arabic digits was the larger. When the two digits were numerically distant, such as 2 and 9, subjects responded quickly and accurately. But their response time slowed by more than 100 milliseconds when the digits were closer, such as 5 and 6, and subjects then erred as often as once in every ten trials. The distance effect was present both among highly intelligent persons and among those who were trained to escape it.[3]

Practical applications

A Lukaszyk–Karmowski metric may be used instead of a metric operator (commonly the Euclidean distance) in various numerical methods, and in particular in approximation algorithms such as radial basis function networks,[4][5] inverse distance weighting or Kohonen self-organizing maps.

This approach is physically based, allowing the real uncertainty in the location of the sample points to be considered.[6][7]
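As an illustration of such a substitution, the sketch below replaces the distance in one-dimensional inverse distance weighting with the normal–normal L–K metric. It is a hypothetical example, not taken from the cited works: the function names, the power parameter, and the assumption that the query and sample locations share one standard deviation σ are all choices made here.

```python
import math


def d_nn(mu_x, mu_y, sigma):
    """mu + (2*sigma/sqrt(pi)) * exp(-mu^2 / (4*sigma^2)) - mu * erfc(mu / (2*sigma)),
    with mu = |mu_x - mu_y| and sigma > 0."""
    mu = abs(mu_x - mu_y)
    return (mu
            + 2.0 * sigma / math.sqrt(math.pi) * math.exp(-mu * mu / (4.0 * sigma * sigma))
            - mu * math.erfc(mu / (2.0 * sigma)))


def idw_lk(query, sample_xs, sample_values, sigma, power=2.0):
    """Inverse distance weighting in 1-D with weights 1 / D_NN^power.
    sigma must be positive, otherwise a coincident sample gives zero distance."""
    weights = [1.0 / d_nn(query, x, sigma) ** power for x in sample_xs]
    return sum(w * v for w, v in zip(weights, sample_values)) / sum(weights)


estimate = idw_lk(0.0, [0.0, 1.0, 2.0], [0.0, 10.0, 20.0], sigma=0.1)
print(estimate)
```

Because the L–K distance never reaches zero for σ > 0, the weights stay finite at the sample locations, and the interpolated value at a sample point is pulled slightly toward its neighbours instead of reproducing the sample exactly.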


References

- ^ Metryka Pomiarowa, przykłady zastosowań aproksymacyjnych w mechanice doświadczalnej (Measurement metric, examples of approximation applications in experimental mechanics), PhD thesis, Szymon Łukaszyk (author), Wojciech Karmowski (supervisor), Tadeusz Kościuszko Cracow University of Technology, submitted December 31, 2001, completed March 31, 2004

- ^ A new concept of probability metric and its applications in approximation of scattered data sets, Łukaszyk Szymon, Computational Mechanics Volume 33, Number 4, 299–304, Springer-Verlag 2003 doi: 10.1007/s00466-003-0532-2

- ^ The Number Sense: How the Mind Creates Mathematics, Stanislas Dehaene, Oxford University Press US, 1999, ISBN 0195132408, p. 73–75

- ^ Taher Zaki, Driss Mammass, Abdellatif Ennaji, Fathallah Nouboud (2010) Classification of Arabic Documents by a Model of Fuzzy Proximity with a Radial Basis Function, International Journal of Future Generation Communication and Networking, 3 (4), p. 34

- ^ Florian Hogewind, Peter Bissolli (2010) Operational maps of monthly mean temperature for WMO-Region VI (Europe and Middle East), IDŐJÁRÁS, Quarterly Journal of the Hungarian Meteorological Service, Vol. 115, No. 1-2, January-June 2011, pp. 31-49, p. 41

- ^ Gang Meng, Jane Law, Mary E. Thompson (2010) "Small-scale health-related indicator acquisition using secondary data spatial interpolation", International Journal of Health Geographics, 9:50 doi:10.1186/1476-072X-9-50

- ^ Gang Meng (2010) Social and Spatial Determinants of Adverse Birth Outcome Inequalities in Socially Advanced Societies, Thesis (Doctor of Philosophy in Planning), University of Waterloo, Canada