Linear probability model

In statistics, a linear probability model is a special case of a binomial regression model. Here the dependent variable for each observation takes the value 0 or 1. The probability of observing a 0 or 1 in any one case is treated as depending on one or more explanatory variables. For the "linear probability model", this relationship is a particularly simple one, and allows the model to be fitted by linear regression.

The model

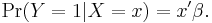

The model assumes that, for a binary outcome (Bernoulli trial), Y, and its associated vector of explanatory variables, X,[1]

$$\Pr(Y=1 \mid X=x) = x'\beta.$$

For this model,

$$E[Y \mid X] = \Pr(Y=1 \mid X) = x'\beta,$$

and hence the vector of parameters β can be estimated using least squares. This method of fitting is inefficient,[1] because the conditional variance of a Bernoulli outcome, var(Y|X=x) = x'β(1 − x'β), varies between observations. The fit can be improved[1] by adopting an iterative scheme based on weighted least squares, in which the model from the previous iteration is used to supply estimates of the conditional variances var(Y|X=x). This approach can be related to fitting the model by maximum likelihood.[1]
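The fitting procedure described above can be sketched in Python with NumPy. This is a minimal illustration rather than a reference implementation: the function names `fit_lpm_ols` and `fit_lpm_wls`, the simulated data, the number of iterations and the clipping threshold `eps` are all assumptions made for the example. The clipping step in particular is not part of the description above; it is added only so that the estimated variances stay positive when a fitted probability falls outside (0, 1).

```python
import numpy as np

def fit_lpm_ols(X, y):
    """Ordinary least squares estimate of beta in Pr(Y=1|X=x) = x'beta."""
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    return beta

def fit_lpm_wls(X, y, n_iter=10, eps=1e-3):
    """Iterative weighted least squares, starting from the OLS fit.

    n_iter and eps are illustrative choices, not prescribed values.
    """
    beta = fit_lpm_ols(X, y)
    for _ in range(n_iter):
        # Fitted probabilities from the previous iteration.
        # Clipping is an assumption of this sketch: it keeps p(1 - p) > 0.
        p = np.clip(X @ beta, eps, 1.0 - eps)
        # Estimated conditional variance of a Bernoulli outcome is p(1 - p),
        # so each observation is weighted by its inverse.
        w = 1.0 / (p * (1.0 - p))
        sw = np.sqrt(w)
        # Weighted least squares via OLS on rows rescaled by sqrt(weight).
        beta, *_ = np.linalg.lstsq(X * sw[:, None], y * sw, rcond=None)
    return beta

# Simulated data (hypothetical): intercept plus one regressor on [0, 1].
rng = np.random.default_rng(0)
n = 5000
x = rng.uniform(0.0, 1.0, n)
X = np.column_stack([np.ones(n), x])
true_beta = np.array([0.2, 0.6])          # probabilities stay in [0.2, 0.8]
y = (rng.uniform(size=n) < X @ true_beta).astype(float)

beta_ols = fit_lpm_ols(X, y)
beta_wls = fit_lpm_wls(X, y)
print("OLS:", beta_ols)
print("WLS:", beta_wls)
```

Both estimates should land near the coefficients used to simulate the data. The weighted fit differs from the ordinary one only through the weights 1 / (p(1 − p)), which give less influence to observations whose outcome is more variable.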

A drawback of this model for the parameter of the Bernoulli distribution is that, unless restrictions are placed on β, the estimated coefficients can imply probabilities outside the unit interval [0, 1], because the fitted probability x'β is linear in x and therefore unbounded. For this reason, models such as the logit model or the probit model are more commonly used.
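The drawback can be seen on a very small example. The sketch below fits the linear probability model by ordinary least squares to eight made-up observations (the data are hypothetical and chosen only for illustration) and checks which fitted probabilities fall outside [0, 1].

```python
import numpy as np

# Hypothetical data: the outcome switches from 0 to 1 as x increases.
x = np.arange(8, dtype=float)                    # 0, 1, ..., 7
y = np.array([0, 0, 0, 0, 1, 1, 1, 1], dtype=float)

# Design matrix with an intercept column; least squares estimate of beta.
X = np.column_stack([np.ones_like(x), x])
beta, *_ = np.linalg.lstsq(X, y, rcond=None)

# Fitted "probabilities" x'beta for each observation.
fitted = X @ beta
outside = (fitted < 0.0) | (fitted > 1.0)

print("beta:", beta)
print("fitted:", np.round(fitted, 3))
print("number outside [0, 1]:", int(outside.sum()))
# fitted[0] is about -0.167 and fitted[-1] is about 1.167
```

Here the fitted value at the smallest x is negative and the one at the largest x exceeds 1, so neither can be read as a probability.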