Independence (probability theory)

In probability theory, to say that two events are independent intuitively means that the occurrence of one event makes it neither more nor less probable that the other occurs. For example:

- The event of getting a 6 the first time a die is rolled and the event of getting a 6 the second time are independent.

- By contrast, the event of getting a 6 the first time a die is rolled and the event that the sum of the numbers seen on the first and second trials is 8 are not independent.

- If two cards are drawn with replacement from a deck of cards, the event of drawing a red card on the first trial and that of drawing a red card on the second trial are independent.

- By contrast, if two cards are drawn without replacement from a deck of cards, the event of drawing a red card on the first trial and that of drawing a red card on the second trial are not independent.
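The examples above can be checked by brute-force enumeration. The following Python sketch assumes fair dice and a 52-card deck with 26 red cards. It treats every ordered outcome as equally likely and compares Pr(A ∩ B) with Pr(A)Pr(B) using exact fractions. The helper names `prob` and `independent` are illustrative and do not come from any library.

```python
from fractions import Fraction
from itertools import permutations, product

def prob(event, outcomes):
    """Exact probability of `event` when every outcome in `outcomes` is equally likely."""
    outcomes = list(outcomes)
    return Fraction(sum(1 for o in outcomes if event(o)), len(outcomes))

def independent(a, b, outcomes):
    """True if Pr(A and B) == Pr(A) * Pr(B) holds exactly."""
    outcomes = list(outcomes)
    both = prob(lambda o: a(o) and b(o), outcomes)
    return both == prob(a, outcomes) * prob(b, outcomes)

# Two rolls of a fair die: all 36 ordered pairs are equally likely.
rolls = list(product(range(1, 7), repeat=2))
first_is_6 = lambda o: o[0] == 6
second_is_6 = lambda o: o[1] == 6
sum_is_8 = lambda o: o[0] + o[1] == 8

# A 52-card deck; cards 0..25 are taken to be the red ones.
is_red = lambda card: card < 26
with_replacement = list(product(range(52), repeat=2))   # 52 * 52 ordered draws
without_replacement = list(permutations(range(52), 2))  # 52 * 51 ordered draws
red_first = lambda o: is_red(o[0])
red_second = lambda o: is_red(o[1])

print(independent(first_is_6, second_is_6, rolls))              # True
print(independent(first_is_6, sum_is_8, rolls))                 # False
print(independent(red_first, red_second, with_replacement))     # True
print(independent(red_first, red_second, without_replacement))  # False
```

Drawing without replacement makes the second draw depend on the first: Pr(both red) = (26 · 25)/(52 · 51) = 25/102, which is slightly less than 1/4.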

Similarly, two random variables are independent if the conditional probability distribution of either given the observed value of the other is the same as if the other's value had not been observed. The concept of independence extends to dealing with collections of more than two events or random variables.

In some instances, the term "independent" is replaced by "statistically independent", "marginally independent", or "absolutely independent".[1]


Independent events

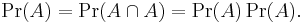

The standard definition says:

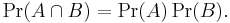

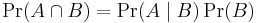

- Two events A and B are independent if and only if Pr(A ∩ B) = Pr(A)Pr(B).

Here A ∩ B is the intersection of A and B, that is, it is the event that both events A and B occur.

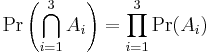

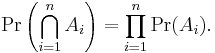

More generally, any collection of events, possibly more than just two of them, is mutually independent if and only if for every finite subset $A_1, \dots, A_n$ of the collection we have

$$\Pr\left(\bigcap_{i=1}^{n} A_i\right) = \prod_{i=1}^{n} \Pr(A_i).$$

This is called the multiplication rule for independent events. Notice that independence requires this rule to hold for every subset of the collection; see [2] for a three-event example in which $\Pr(A \cap B \cap C) = \Pr(A)\Pr(B)\Pr(C)$ and yet no two of the three events are pairwise independent.
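One concrete instance of this phenomenon uses two rolls of a fair die (it is offered as an illustration and is not necessarily the example given in [2]). Let A be "the first roll is 1, 2 or 3", B be "the first roll is 3, 4 or 5" and C be "the two rolls sum to 9". The Python sketch below checks the multiplication rule for the triple and for each pair by exact enumeration. The function names are hypothetical.

```python
from fractions import Fraction
from itertools import combinations, product

# Two rolls of a fair die; each of the 36 ordered outcomes has probability 1/36.
OUTCOMES = list(product(range(1, 7), repeat=2))

def prob(event):
    """Exact probability of an event given as a predicate on outcomes."""
    return Fraction(sum(1 for o in OUTCOMES if event(o)), len(OUTCOMES))

def intersection(events):
    """Predicate for the event that every event in `events` occurs."""
    return lambda o: all(e(o) for e in events)

def product_rule_holds(events):
    """True if Pr of the intersection equals the product of the individual probabilities."""
    rhs = Fraction(1)
    for e in events:
        rhs *= prob(e)
    return prob(intersection(events)) == rhs

def mutually_independent(events):
    """Check the multiplication rule for every subset of size two or more."""
    return all(
        product_rule_holds(subset)
        for k in range(2, len(events) + 1)
        for subset in combinations(events, k)
    )

A = lambda o: o[0] in (1, 2, 3)   # first roll is 1, 2 or 3
B = lambda o: o[0] in (3, 4, 5)   # first roll is 3, 4 or 5
C = lambda o: o[0] + o[1] == 9    # the two rolls sum to 9

print(product_rule_holds([A, B, C]))  # True: 1/36 == 1/2 * 1/2 * 1/9
print([product_rule_holds(pair) for pair in combinations([A, B, C], 2)])  # [False, False, False]
print(mutually_independent([A, B, C]))  # False
```

The triple intersection is the single outcome (3, 6), so its probability 1/36 happens to match the product. The pairwise intersections do not match: for example, Pr(A ∩ B) = 1/6, not 1/4.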

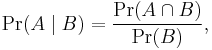

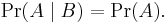

If two events A and B are independent and Pr(B) ≠ 0, then the conditional probability of A given B is the same as the unconditional (or marginal) probability of A, that is,

$$\Pr(A \mid B) = \Pr(A).$$

There are at least two reasons why this statement is not taken to be the definition of independence: (1) the two events A and B do not play symmetrical roles in this statement, and (2) problems arise with this statement when events of probability 0 are involved.

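The conditional probability of event A given B is given by

$$\Pr(A \mid B) = \frac{\Pr(A \cap B)}{\Pr(B)}$$

(so long as Pr(B) ≠ 0).

The statement above, when Pr(B) ≠ 0, is equivalent to

$$\frac{\Pr(A \cap B)}{\Pr(B)} = \Pr(A),$$

which is equivalent to

$$\Pr(A \cap B) = \Pr(A)\Pr(B),$$

which is the standard definition given above.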
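As a worked illustration, assume a fair die is rolled twice, so that each of the 36 ordered outcomes has probability 1/36. As in the introduction, let A be the event that the two rolls sum to 8 and B the event that the first roll is 6. Then

$$\Pr(A \mid B) = \frac{\Pr(A \cap B)}{\Pr(B)} = \frac{1/36}{1/6} = \frac{1}{6} \neq \frac{5}{36} = \Pr(A),$$

so learning that B occurred raises the probability of A. Equivalently, $\Pr(A \cap B) = \tfrac{1}{36} = \tfrac{6}{216} \neq \tfrac{5}{216} = \Pr(A)\Pr(B)$, and the two events are not independent.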

Note that an event A is independent of itself if and only if

$$\Pr(A) = \Pr(A \cap A) = \Pr(A)\Pr(A),$$

that is, if its probability is one or zero. Thus if an event or its complement almost surely occurs, it is independent of itself. For example, if A is the event of choosing any number but 0.5 from a uniform distribution on the unit interval, then Pr(A) = 1, so A is independent of itself even though, tautologically, A fully determines A.

Independent random variables

What is defined above is independence of events. In this section we treat independence of random variables. If X is a real-valued random variable and a is a number then the event X ≤ a is the set of outcomes whose corresponding value of X is less than or equal to a. Since these are sets of outcomes that have probabilities, it makes sense to refer to events of this sort being independent of other events of this sort.

Two random variables X and Y are independent if and only if for every a and b, the events {X ≤ a} and {Y ≤ b} are independent events as defined above. Mathematically, this can be described as follows:

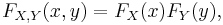

The random variables X and Y with cumulative distribution functions FX(x) and FY(y), and probability densities ƒX(x) and ƒY(y), are independent if and only if the combined random variable (X, Y) has a joint cumulative distribution function

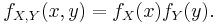

or equivalently, a joint density

.

.

Similar expressions characterise independence more generally for more than two random variables.
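For random variables that take only finitely many values, the joint CDF condition can be checked directly. The Python sketch below assumes the joint distribution is given as a dictionary mapping pairs (x, y) to exact probabilities; `marginals` and `cdf_factorizes` are hypothetical names. Because such CDFs only jump at support points, comparing them at those points is enough.

```python
from fractions import Fraction
from itertools import product

def marginals(joint):
    """Marginal pmfs of X and Y from a joint pmf given as {(x, y): probability}."""
    px, py = {}, {}
    for (x, y), p in joint.items():
        px[x] = px.get(x, 0) + p
        py[y] = py.get(y, 0) + p
    return px, py

def cdf_factorizes(joint):
    """Check F_{X,Y}(a, b) == F_X(a) * F_Y(b) for finitely supported X and Y.

    The CDFs are step functions that only jump at support points, and both sides
    are 0 below the support, so comparing them at support points is enough.
    """
    px, py = marginals(joint)
    for a, b in product(px, py):
        f_xy = sum(p for (x, y), p in joint.items() if x <= a and y <= b)
        f_x = sum(p for x, p in px.items() if x <= a)
        f_y = sum(p for y, p in py.items() if y <= b)
        if f_xy != f_x * f_y:
            return False
    return True

half, quarter = Fraction(1, 2), Fraction(1, 4)
independent_coins = {(x, y): quarter for x, y in product((0, 1), repeat=2)}
copied_coin = {(0, 0): half, (1, 1): half}  # Y is always equal to X

print(cdf_factorizes(independent_coins))  # True
print(cdf_factorizes(copied_coin))        # False
```

In the second case $F_{X,Y}(0, 0) = 1/2$ while $F_X(0)F_Y(0) = 1/4$.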

An arbitrary collection of random variables, possibly more than just two of them, is independent precisely if for any finite subcollection $X_1, \dots, X_n$ and any finite set of numbers $a_1, \dots, a_n$, the events $\{X_1 \le a_1\}, \dots, \{X_n \le a_n\}$ are mutually independent events as defined above.

The measure-theoretically inclined may prefer to substitute events {X ∈ A} for events {X ≤ a} in the above definition, where A is any Borel set. That definition is exactly equivalent to the one above when the values of the random variables are real numbers. It has the advantage of working also for complex-valued random variables or for random variables taking values in any measurable space (which includes topological spaces endowed with appropriate σ-algebras).

A collection of random variables in which every two are independent may nonetheless fail to be mutually independent; independence of every pair is called pairwise independence.
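A standard illustration of the gap between pairwise and mutual independence takes two independent fair bits X and Y and sets Z = X XOR Y. The Python sketch below checks, by exact enumeration, that every pair factorizes while the triple does not. For discrete variables it uses the equivalent condition that the joint probability mass function factorizes. The function names are hypothetical.

```python
from fractions import Fraction
from itertools import combinations, product

# Outcomes (x, y, z): x and y are independent fair bits and z = x XOR y.
# Each of the four (x, y) pairs, and hence each outcome, has probability 1/4.
OUTCOMES = [(x, y, x ^ y) for x, y in product((0, 1), repeat=2)]

def prob(pred):
    """Exact probability of a predicate on outcomes."""
    return Fraction(sum(1 for o in OUTCOMES if pred(o)), len(OUTCOMES))

def factorizes(indices, values):
    """Pr(V_i = v_i for all chosen i) == product of Pr(V_i = v_i)."""
    joint = prob(lambda o: all(o[i] == v for i, v in zip(indices, values)))
    rhs = Fraction(1)
    for i, v in zip(indices, values):
        rhs *= prob(lambda o: o[i] == v)
    return joint == rhs

def jointly_independent(indices):
    """Check the factorisation for every combination of values of the chosen variables."""
    return all(factorizes(indices, vals) for vals in product((0, 1), repeat=len(indices)))

pairs_ok = [jointly_independent(pair) for pair in combinations(range(3), 2)]
print(pairs_ok)                         # [True, True, True]
print(jointly_independent((0, 1, 2)))  # False: Pr(X=0, Y=0, Z=0) = 1/4, not 1/8
```

Any two of the bits determine the third, which is why the triple cannot be mutually independent even though no pair reveals anything on its own.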

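If X and Y are independent and have finite expectations, then the expectation operator E has the property

$$\operatorname{E}[XY] = \operatorname{E}[X]\operatorname{E}[Y],$$

and for the covariance, since we have

$$\operatorname{cov}[X, Y] = \operatorname{E}[XY] - \operatorname{E}[X]\operatorname{E}[Y],$$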

so the covariance cov(X, Y) is zero. (The converse of these, i.e. the proposition that if two random variables have a covariance of 0 they must be independent, is not true. See uncorrelated.)
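The identity and its failed converse can both be seen on small finite distributions. The Python sketch below computes expectations exactly. It assumes each distribution is given as a list of (value, probability) pairs, and the helper names are hypothetical. The second case uses the classic uncorrelated but dependent pair X uniform on {−1, 0, 1} and Y = X².

```python
from fractions import Fraction
from itertools import product

def expect(dist, f):
    """E[f(V)] for a finite distribution given as a list of (value, probability) pairs."""
    return sum(p * f(v) for v, p in dist)

def covariance(dist, fx, fy):
    """cov[X, Y] = E[XY] - E[X]E[Y], where X = fx(V) and Y = fy(V)."""
    exy = expect(dist, lambda v: fx(v) * fy(v))
    return exy - expect(dist, fx) * expect(dist, fy)

# Independent case: two rolls of a fair die, X = first roll, Y = second roll.
two_dice = [((a, b), Fraction(1, 36)) for a, b in product(range(1, 7), repeat=2)]
first = lambda v: v[0]
second = lambda v: v[1]
print(expect(two_dice, lambda v: v[0] * v[1]))             # 49/4
print(expect(two_dice, first) * expect(two_dice, second))  # 49/4
print(covariance(two_dice, first, second))                 # 0

# Uncorrelated but dependent: X uniform on {-1, 0, 1} and Y = X**2.
sym = [(x, Fraction(1, 3)) for x in (-1, 0, 1)]
print(covariance(sym, lambda x: x, lambda x: x * x))       # 0
p_joint = sum(p for x, p in sym if x == 0 and x * x == 0)  # Pr(X = 0, Y = 0)
p_prod = sum(p for x, p in sym if x == 0) * sum(p for x, p in sym if x * x == 0)
print(p_joint, p_prod)                                     # 1/3 1/9
```

The covariance of the second pair is zero, yet Pr(X = 0, Y = 0) differs from Pr(X = 0)Pr(Y = 0), so X and Y are not independent.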

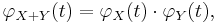

Two independent random variables X and Y have the property that the characteristic function of their sum is the product of their marginal characteristic functions:

$$\varphi_{X+Y}(t) = \varphi_X(t)\,\varphi_Y(t),$$

but the reverse implication is not true (see subindependence).
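A numerical check of the product property, as a minimal Python sketch: it assumes a fair die and a fair 0/1 coin that are independent. It builds the distribution of their sum from the product of the marginals and compares $\varphi(t) = \operatorname{E}[e^{itV}]$ on both sides at a few values of t. The function names are hypothetical, and floating-point rounding is handled with a small tolerance.

```python
import cmath
from itertools import product

def char_fn(dist, t):
    """phi(t) = E[exp(i t V)] for a finite distribution {value: probability}."""
    return sum(p * cmath.exp(1j * t * v) for v, p in dist.items())

def sum_distribution(dx, dy):
    """Distribution of X + Y when X and Y are independent (joint pmf = product of marginals)."""
    out = {}
    for (x, px), (y, py) in product(dx.items(), dy.items()):
        out[x + y] = out.get(x + y, 0.0) + px * py
    return out

die = {k: 1 / 6 for k in range(1, 7)}
coin = {0: 0.5, 1: 0.5}
total = sum_distribution(die, coin)

for t in (0.0, 0.3, 1.7):
    lhs = char_fn(total, t)
    rhs = char_fn(die, t) * char_fn(coin, t)
    print(abs(lhs - rhs) < 1e-12)  # True (up to floating-point rounding)
```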

Independent σ-algebras

The definitions above are both generalized by the following definition of independence for σ-algebras. Let (Ω, Σ, Pr) be a probability space and let 𝒜 and ℬ be two sub-σ-algebras of Σ. 𝒜 and ℬ are said to be independent if, whenever A ∈ 𝒜 and B ∈ ℬ,

$$\Pr(A \cap B) = \Pr(A)\Pr(B).$$

The new definition relates to the previous ones very directly:

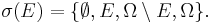

- Two events are independent (in the old sense) if and only if the σ-algebras that they generate are independent (in the new sense). The σ-algebra generated by an event E ∈ Σ is, by definition,

- Two random variables X and Y defined over Ω are independent (in the old sense) if and only if the σ-algebras that they generate are independent (in the new sense). The σ-algebra generated by a random variable X taking values in some measurable space S consists, by definition, of all subsets of Ω of the form X−1(U), where U is any measurable subset of S.

Using this definition, it is easy to show that if X and Y are random variables and Y is constant, then X and Y are independent, since the σ-algebra generated by a constant random variable is the trivial σ-algebra {∅, Ω}. Events of probability zero cannot affect independence, so independence also holds if Y is only Pr-almost surely constant.
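On a finite sample space these σ-algebras can be listed explicitly. The Python sketch below assumes two rolls of a fair die as the sample space. It builds σ({E}) = {∅, E, Ω ∖ E, Ω} for an event E and checks the σ-algebra condition Pr(A ∩ B) = Pr(A)Pr(B) over every pair of members. It also checks that the trivial σ-algebra {∅, Ω}, generated by a constant random variable, is independent of another σ-algebra. The function names are hypothetical.

```python
from fractions import Fraction
from itertools import product

OMEGA = frozenset(product(range(1, 7), repeat=2))  # two rolls of a fair die

def pr(event):
    """Uniform probability of a subset of OMEGA."""
    return Fraction(len(event), len(OMEGA))

def generated_sigma_algebra(e):
    """sigma({E}) = {empty set, E, complement of E, Omega}."""
    e = frozenset(e)
    return {frozenset(), e, OMEGA - e, OMEGA}

def sigma_algebras_independent(sa, sb):
    """Pr(A ∩ B) == Pr(A) Pr(B) for every A in sa and B in sb."""
    return all(pr(a & b) == pr(a) * pr(b) for a in sa for b in sb)

first_is_6 = {o for o in OMEGA if o[0] == 6}
second_is_6 = {o for o in OMEGA if o[1] == 6}
sum_is_8 = {o for o in OMEGA if sum(o) == 8}

print(sigma_algebras_independent(generated_sigma_algebra(first_is_6),
                                 generated_sigma_algebra(second_is_6)))  # True
print(sigma_algebras_independent(generated_sigma_algebra(first_is_6),
                                 generated_sigma_algebra(sum_is_8)))     # False

# A constant random variable generates the trivial sigma-algebra {∅, Ω}.
trivial = {frozenset(), OMEGA}
print(sigma_algebras_independent(trivial, generated_sigma_algebra(sum_is_8)))  # True
```

The first check passes because independence of two events carries over to their complements, which is what makes the two notions of independence agree.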

Conditionally independent random variables

Intuitively, two random variables X and Y are conditionally independent given Z if, once Z is known, the value of Y does not add any additional information about X. For instance, two measurements X and Y of the same underlying quantity Z are not independent, but they are conditionally independent given Z (unless the errors in the two measurements are somehow connected).

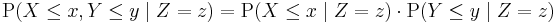

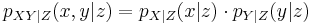

The formal definition of conditional independence is based on the idea of conditional distributions. If X, Y, and Z are discrete random variables, then we define X and Y to be conditionally independent given Z if

$$\mathrm{P}(X \le x, Y \le y \mid Z = z) = \mathrm{P}(X \le x \mid Z = z) \cdot \mathrm{P}(Y \le y \mid Z = z)$$

for all x, y and z such that P(Z = z) > 0. On the other hand, if the random variables are continuous and have a joint probability density function p, then X and Y are conditionally independent given Z if

$$p_{XY \mid Z}(x, y \mid z) = p_{X \mid Z}(x \mid z) \cdot p_{Y \mid Z}(y \mid z)$$

for all real numbers x, y and z such that $p_Z(z) > 0$.

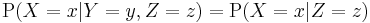

If X and Y are conditionally independent given Z, then

for any x, y and z with P(Z = z) > 0. That is, the conditional distribution for X given Y and Z is the same as that given Z alone. A similar equation holds for the conditional probability density functions in the continuous case.
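The measurement example from the start of this section can be made concrete with binary variables. The Python sketch below assumes that Z is a fair bit and that X and Y are copies of Z, each flipped independently with an illustrative error rate of 1/4 (the value of `FLIP` is an assumption, not from the source). It checks the discrete definition and the conditional-distribution identity above, and confirms that X and Y are not independent without conditioning. The function names are hypothetical.

```python
from fractions import Fraction
from itertools import product

FLIP = Fraction(1, 4)  # assumed error rate of each measurement (illustrative)

# Joint pmf of (X, Y, Z): Z is a fair bit and X, Y are copies of Z, each flipped
# independently with probability FLIP (the measurement errors are independent).
joint = {}
for z, e1, e2 in product((0, 1), repeat=3):
    p = Fraction(1, 2) * (FLIP if e1 else 1 - FLIP) * (FLIP if e2 else 1 - FLIP)
    joint[(z ^ e1, z ^ e2, z)] = p

def P(pred):
    """Probability of the set of outcomes (x, y, z) satisfying `pred`."""
    return sum(p for o, p in joint.items() if pred(o))

def conditionally_independent():
    """P(X=x, Y=y | Z=z) == P(X=x | Z=z) P(Y=y | Z=z) whenever P(Z=z) > 0."""
    for x, y, z in product((0, 1), repeat=3):
        pz = P(lambda o: o[2] == z)
        if pz == 0:
            continue
        lhs = P(lambda o: o == (x, y, z)) / pz
        px = P(lambda o: o[0] == x and o[2] == z) / pz
        py = P(lambda o: o[1] == y and o[2] == z) / pz
        if lhs != px * py:
            return False
    return True

def y_adds_nothing():
    """P(X=x | Y=y, Z=z) == P(X=x | Z=z) whenever P(Y=y, Z=z) > 0."""
    for x, y, z in product((0, 1), repeat=3):
        pyz = P(lambda o: o[1] == y and o[2] == z)
        if pyz == 0:
            continue
        lhs = P(lambda o: o == (x, y, z)) / pyz
        rhs = P(lambda o: o[0] == x and o[2] == z) / P(lambda o: o[2] == z)
        if lhs != rhs:
            return False
    return True

def marginally_independent():
    """P(X=x, Y=y) == P(X=x) P(Y=y) for all x, y."""
    return all(
        P(lambda o: o[0] == x and o[1] == y)
        == P(lambda o: o[0] == x) * P(lambda o: o[1] == y)
        for x, y in product((0, 1), repeat=2)
    )

print(conditionally_independent())  # True
print(y_adds_nothing())             # True
print(marginally_independent())     # False
```

Without conditioning, the two measurements agree more often than chance: P(X = 0, Y = 0) = 5/16, whereas P(X = 0)P(Y = 0) = 1/4.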

Independence can be seen as a special kind of conditional independence, since probability can be seen as a kind of conditional probability given no events.

See also

- Copula (statistics)

- Independent and identically distributed random variables

- Mutually exclusive events

- Subindependence

- Linear dependence between random variables

- Conditional independence
