Exponential family


In probability and statistics, an exponential family is an important class of probability distributions sharing a certain form, specified below. This special form is chosen for mathematical convenience, on account of some useful algebraic properties, as well as for generality, as exponential families are in a sense very natural distributions to consider. The concept of exponential families is credited to[1] E. J. G. Pitman,[2] G. Darmois,[3] and B. O. Koopman[4] in 1935–6. The term exponential class is sometimes used in place of "exponential family".[5]

The exponential families include many of the most common distributions, including the normal, exponential, gamma, chi-squared, beta, Dirichlet, Bernoulli, binomial, multinomial, Poisson, Wishart, inverse Wishart and many others. Considering these, and other distributions that lie within an exponential family, provides a framework for selecting an alternative parameterisation of the distribution in terms of natural parameters, and for defining useful sample statistics, called the natural statistics of the family. See below for more information.


Definition

The following is a sequence of increasingly general definitions of an exponential family. A casual reader may wish to restrict attention to the first and simplest definition, which corresponds to a single-parameter family of discrete or continuous probability distributions.

Scalar parameter

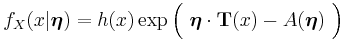

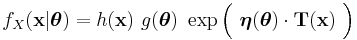

A single-parameter exponential family is a set of probability distributions whose probability density function (or probability mass function, for the case of a discrete distribution) can be expressed in the form

where  ,

,  ,

,  , and

, and  are known functions.

are known functions.

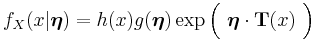

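An alternative, equivalent form often given is

$$f_X(x \mid \theta) = h(x)\, g(\theta)\, \exp[\eta(\theta) \cdot T(x)]$$

or equivalently

$$f_X(x \mid \theta) = \exp[\eta(\theta) \cdot T(x) - A(\theta) + B(x)]$$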

The value $\theta$ is called the parameter of the family.

Note that $x$ is often a vector of measurements, in which case $T(x)$ is a function from the space of possible values of $x$ to the real numbers.

If $\eta(\theta) = \theta$, then the exponential family is said to be in canonical form. By defining a transformed parameter $\eta = \eta(\theta)$, it is always possible to convert an exponential family to canonical form. The canonical form is non-unique, since $\eta(\theta)$ can be multiplied by any nonzero constant, provided that $T(x)$ is multiplied by that constant's reciprocal.

Even when $x$ is a scalar, and there is only a single parameter, the functions $\eta(\theta)$ and $T(x)$ can still be vectors, as described below.

Note also that the function $A(\theta)$, or equivalently $g(\theta)$, is automatically determined once the other functions have been chosen, and assumes a form that causes the distribution to be normalized (sum or integrate to one over the entire domain). Furthermore, both of these functions can always be written as functions of $\eta$, even when $\eta(\theta)$ is not a one-to-one function, i.e. two or more different values of $\theta$ map to the same value of $\eta(\theta)$, and hence $\eta(\theta)$ cannot be inverted. In such a case, all values of $\theta$ mapping to the same $\eta(\theta)$ will also have the same value for $A(\theta)$ and $g(\theta)$.

Further down the page is the example of a normal distribution with unknown mean and known variance.
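As a rough illustration of the single-parameter form, the following Python sketch evaluates $h(x)\exp[\eta(\theta) T(x) - A(\theta)]$ for user-supplied functions. The helper name `exp_family_density` is hypothetical, and the exponential distribution with rate $\lambda$ (taking $h(x) = 1$, $T(x) = x$, $\eta(\lambda) = -\lambda$, $A(\lambda) = -\ln\lambda$) is chosen here only as a convenient test case.

```python
import math

def exp_family_density(x, theta, h, T, eta, A):
    """Evaluate h(x) * exp(eta(theta) * T(x) - A(theta)) for scalar x and theta."""
    return h(x) * math.exp(eta(theta) * T(x) - A(theta))

def exponential_density(x, lam):
    """Exponential distribution with rate lam, written as an exponential family."""
    return exp_family_density(
        x, lam,
        h=lambda x: 1.0,                # base measure
        T=lambda x: x,                  # sufficient statistic
        eta=lambda lam: -lam,           # natural parameter
        A=lambda lam: -math.log(lam),   # log-normalizer
    )

# Compare against the textbook density lam * exp(-lam * x).
x, lam = 1.5, 2.0
direct = lam * math.exp(-lam * x)
via_family = exponential_density(x, lam)
```

Here `direct` and `via_family` should agree up to floating-point rounding, since $\exp(-\lambda x + \ln\lambda) = \lambda e^{-\lambda x}$.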

Factorization of the variables involved

What is important to note, and what characterizes all exponential family variants, is that the parameter(s) and the observation variable(s) must factorize (can be separated into products each of which involves only one type of variable), either directly or within either part (the base or exponent) of an exponentiation operation. Generally, this means that all of the factors constituting the density or mass function must be of one of the following forms: $f(x)$, $g(\theta)$, $c^{f(x)}$, $c^{g(\theta)}$, $[f(x)]^c$, $[g(\theta)]^c$, $[f(x)]^{g(\theta)}$, $[g(\theta)]^{f(x)}$, $[f(x)]^{h(x)g(\theta)}$, or $[g(\theta)]^{h(x)j(\theta)}$, where $f(x)$ and $h(x)$ are arbitrary functions of $x$; $g(\theta)$ and $j(\theta)$ are arbitrary functions of $\theta$; and $c$ is an arbitrary "constant" expression (i.e. an expression not involving $x$ or $\theta$).

There are further restrictions on how many such factors can occur. For example, an expression of the sort $[f(x) g(\theta)]^{h(x)j(\theta)}$ is the same as $[f(x)]^{h(x)j(\theta)} [g(\theta)]^{h(x)j(\theta)}$, i.e. a product of two "allowed" factors. However, when rewritten into the factorized form,

$$[f(x) g(\theta)]^{h(x)j(\theta)} = [f(x)]^{h(x)j(\theta)} [g(\theta)]^{h(x)j(\theta)} = e^{[h(x) \ln f(x)]\, j(\theta) + h(x)\, [j(\theta) \ln g(\theta)]},$$

it can be seen that it cannot be expressed in the required form. (However, a form of this sort is a member of a curved exponential family, which allows multiple factorized terms in the exponent.)

To see why an expression of the form $[f(x)]^{g(\theta)}$ qualifies, note that

$$[f(x)]^{g(\theta)} = e^{g(\theta) \ln f(x)}$$

and hence factorizes inside of the exponent. Similarly,

$$[f(x)]^{h(x)g(\theta)} = e^{h(x) g(\theta) \ln f(x)} = e^{[h(x) \ln f(x)]\, g(\theta)}$$

and again factorizes inside of the exponent.

Note also that a factor consisting of a sum where both types of variables are involved (e.g. a factor of the form $1 + f(x) g(\theta)$) cannot be factorized in this fashion (except in some cases where occurring directly in an exponent); this is why, for example, the Cauchy distribution and Student's t distribution are not exponential families.

Vector parameter

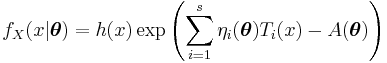

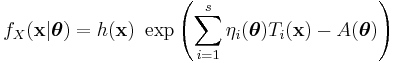

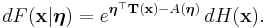

The definition in terms of one real-number parameter can be extended to one real-vector parameter  . A family of distributions is said to belong to a vector exponential family if the probability density function (or probability mass function, for discrete distributions) can be written as

. A family of distributions is said to belong to a vector exponential family if the probability density function (or probability mass function, for discrete distributions) can be written as

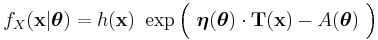

Or in a more compact form,

This form writes the sum as a dot product of vector-valued functions  and

and  .

.

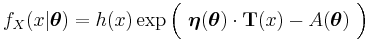

An alternative, equivalent form often seen is

As in the scalar valued case, the exponential family is said to be in canonical form if  , for all

, for all  .

.

A vector exponential family is said to be curved if the dimension of $\boldsymbol\theta$ is less than the dimension of the vector $\boldsymbol\eta(\boldsymbol\theta)$. That is, if the dimension of the parameter vector is less than the number of functions of the parameter vector in the above representation of the probability density function. Note that most common distributions in the exponential family are not curved, and many algorithms designed to work with any member of the exponential family implicitly or explicitly assume that the distribution is not curved.

Note that, as in the above case of a scalar-valued parameter, the function  or equivalently

or equivalently  is automatically determined once the other functions have been chosen, so that the entire distribution is normalized. In addition, as above, both of these functions can always be written as functions of

is automatically determined once the other functions have been chosen, so that the entire distribution is normalized. In addition, as above, both of these functions can always be written as functions of  , regardless of the form of the transformation that generates

, regardless of the form of the transformation that generates  from

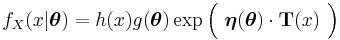

from  . Hence an exponential family in its "natural form" (parametrized by its natural parameter) looks like

. Hence an exponential family in its "natural form" (parametrized by its natural parameter) looks like

or equivalently

Note that the above forms may sometimes be seen with $\boldsymbol\eta^{\top} \mathbf{T}(x)$ in place of $\boldsymbol\eta \cdot \mathbf{T}(x)$. These are exactly equivalent formulations, merely using different notation for the dot product.

Further down the page is the example of a normal distribution with unknown mean and variance.

Vector parameter, vector variable

The vector-parameter form over a single scalar-valued random variable can be trivially expanded to cover a joint distribution over a vector of random variables. The resulting distribution is simply the same as the above distribution for a scalar-valued random variable with each occurrence of the scalar $x$ replaced by the vector $\mathbf{x} = (x_1, x_2, \ldots, x_k)$. Note that the dimension $k$ of the random variable need not match the dimension $d$ of the parameter vector, nor (in the case of a curved exponential family) the dimension $s$ of the natural parameter $\boldsymbol\eta$ and sufficient statistic $\mathbf{T}(\mathbf{x})$.

The distribution in this case is written as

$$f_X(\mathbf{x} \mid \boldsymbol\theta) = h(\mathbf{x})\, \exp\left(\sum_{i=1}^{s} \eta_i(\boldsymbol\theta)\, T_i(\mathbf{x}) - A(\boldsymbol\theta)\right)$$

Or more compactly as

$$f_X(\mathbf{x} \mid \boldsymbol\theta) = h(\mathbf{x})\, \exp\left(\boldsymbol\eta(\boldsymbol\theta) \cdot \mathbf{T}(\mathbf{x}) - A(\boldsymbol\theta)\right)$$

Or alternatively as

$$f_X(\mathbf{x} \mid \boldsymbol\theta) = g(\boldsymbol\theta)\, h(\mathbf{x})\, \exp\left(\boldsymbol\eta(\boldsymbol\theta) \cdot \mathbf{T}(\mathbf{x})\right)$$

Measure-theoretic formulation

We use cumulative distribution functions (cdf) in order to encompass both discrete and continuous distributions.

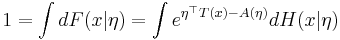

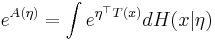

Suppose H is a non-decreasing function of a real variable. Then Lebesgue–Stieltjes integrals with respect to dH(x) are integrals with respect to the "reference measure" of the exponential family generated by H.

Any member of that exponential family has cumulative distribution function

If F is a continuous distribution with a density, one can write dF(x) = f(x) dx.

H(x) is a Lebesgue–Stieltjes integrator for the reference measure. When the reference measure is finite, it can be normalized and H is actually the cumulative distribution function of a probability distribution. If F is absolutely continuous with a density, then so is H, which can then be written dH(x) = h(x) dx. If F is discrete, then H is a step function (with steps on the support of F).

Interpretation

In the definitions above, the functions

and

and  were apparently arbitrarily defined. However, these functions play a significant role in the resulting probability distribution.

were apparently arbitrarily defined. However, these functions play a significant role in the resulting probability distribution.

is a sufficient statistic of the distribution. Thus, for exponential families, there exists a sufficient statistic whose dimension equals the number of parameters to be estimated. This important property is further discussed below.

is a sufficient statistic of the distribution. Thus, for exponential families, there exists a sufficient statistic whose dimension equals the number of parameters to be estimated. This important property is further discussed below.

is called the natural parameter. The set of values of

is called the natural parameter. The set of values of  for which the function

for which the function  is finite is called the natural parameter space. It can be shown that the natural parameter space is always convex.

is finite is called the natural parameter space. It can be shown that the natural parameter space is always convex.

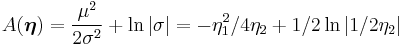

is a normalization factor, or log-partition function, without which

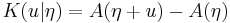

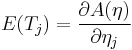

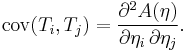

is a normalization factor, or log-partition function, without which  would not be a probability distribution. The function A is important in its own right, because K(u|η) = A(η + u) − A(η) is the cumulant generating function of the sufficient statistic T(x). This means one can fully understand the mean and covariance structure of T = (T1, T2, ... , Tp) by differentiating

would not be a probability distribution. The function A is important in its own right, because K(u|η) = A(η + u) − A(η) is the cumulant generating function of the sufficient statistic T(x). This means one can fully understand the mean and covariance structure of T = (T1, T2, ... , Tp) by differentiating  .

.

Examples

The normal, exponential, gamma, chi-squared, beta, Weibull (with known shape parameter k), Dirichlet, Bernoulli, binomial, multinomial, Poisson, negative binomial (with known stopping-time parameter r), and geometric distributions are all exponential families. The family of Pareto distributions with a fixed minimum bound forms an exponential family.

The Cauchy and uniform families of distributions are not exponential families. The Laplace family is not an exponential family unless the mean is zero.

Following are some detailed examples of the representation of some useful distribution as exponential families.

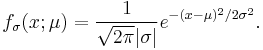

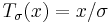

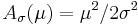

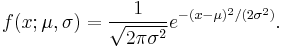

Normal distribution: Unknown mean, known variance

As a first example, consider a random variable distributed normally with unknown mean  and known variance

and known variance  . The probability density function is then

. The probability density function is then

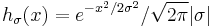

This is a single-parameter exponential family, as can be seen by setting

If σ = 1 this is in canonical form, as then η(μ) = μ.
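The decomposition for the known-variance normal can be checked numerically. The sketch below assumes the usual choice $h(x) = e^{-x^2/(2\sigma^2)}/\sqrt{2\pi\sigma^2}$, $T(x) = x/\sigma$, $\eta(\mu) = \mu/\sigma$ and $A(\mu) = \mu^2/(2\sigma^2)$; the function names are illustrative only.

```python
import math

def normal_pdf(x, mu, sigma):
    """Normal density written in the familiar closed form."""
    return math.exp(-(x - mu) ** 2 / (2 * sigma ** 2)) / math.sqrt(2 * math.pi * sigma ** 2)

def normal_known_variance_form(x, mu, sigma):
    """Same density assembled as h(x) * exp(eta(mu) * T(x) - A(mu))."""
    h = math.exp(-x ** 2 / (2 * sigma ** 2)) / math.sqrt(2 * math.pi * sigma ** 2)
    T = x / sigma                    # sufficient statistic
    eta = mu / sigma                 # natural parameter (equals mu when sigma == 1)
    A = mu ** 2 / (2 * sigma ** 2)   # log-normalizer
    return h * math.exp(eta * T - A)

difference = abs(normal_pdf(0.3, 1.2, 0.7) - normal_known_variance_form(0.3, 1.2, 0.7))
```

Expanding $-(x-\mu)^2/(2\sigma^2)$ gives $-x^2/(2\sigma^2) + \mu x/\sigma^2 - \mu^2/(2\sigma^2)$, which is why `difference` should be zero up to rounding.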

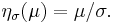

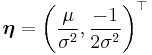

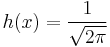

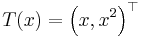

Normal distribution: Unknown mean and unknown variance

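Next, consider the case of a normal distribution with unknown mean and unknown variance. The probability density function is then

$$f(x; \mu, \sigma) = \frac{1}{\sqrt{2\pi\sigma^2}}\, e^{-(x-\mu)^2/(2\sigma^2)}$$

This is an exponential family which can be written in canonical form by defining

$$\boldsymbol\eta = \left(\frac{\mu}{\sigma^2},\; -\frac{1}{2\sigma^2}\right)^{\top}, \qquad h(x) = \frac{1}{\sqrt{2\pi}}, \qquad \mathbf{T}(x) = (x,\; x^2)^{\top},$$

$$A(\boldsymbol\eta) = \frac{\mu^2}{2\sigma^2} + \ln|\sigma| = -\frac{\eta_1^2}{4\eta_2} + \frac{1}{2}\ln\left|\frac{1}{2\eta_2}\right|$$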
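A short numerical check of the canonical form for the two-parameter normal is sketched below. It assumes the standard natural parameters $\eta_1 = \mu/\sigma^2$, $\eta_2 = -1/(2\sigma^2)$, sufficient statistic $(x, x^2)$, base measure $h(x) = 1/\sqrt{2\pi}$ and log-partition function $A(\boldsymbol\eta) = -\eta_1^2/(4\eta_2) + \tfrac{1}{2}\ln|1/(2\eta_2)|$; the helper names are hypothetical.

```python
import math

def natural_params(mu, sigma):
    """Map (mu, sigma) to the natural parameters (eta1, eta2)."""
    return mu / sigma ** 2, -1.0 / (2 * sigma ** 2)

def log_partition(eta1, eta2):
    """A(eta) = -eta1^2 / (4 eta2) + 0.5 * ln|1 / (2 eta2)|."""
    return -eta1 ** 2 / (4 * eta2) + 0.5 * math.log(abs(1.0 / (2 * eta2)))

def normal_canonical_density(x, mu, sigma):
    """h(x) * exp(eta . T(x) - A(eta)) with T(x) = (x, x^2)."""
    eta1, eta2 = natural_params(mu, sigma)
    h = 1.0 / math.sqrt(2 * math.pi)
    return h * math.exp(eta1 * x + eta2 * x ** 2 - log_partition(eta1, eta2))

def normal_pdf(x, mu, sigma):
    """Reference density in the familiar closed form."""
    return math.exp(-(x - mu) ** 2 / (2 * sigma ** 2)) / (sigma * math.sqrt(2 * math.pi))
```

Unlike the known-variance case, $\sigma$ no longer appears in $h(x)$: it has moved into the natural parameters and into $A(\boldsymbol\eta)$.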

Binomial distribution

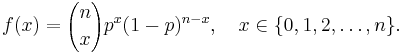

As an example of a discrete exponential family, consider the binomial distribution with known number of trials n. The probability mass function for this distribution is

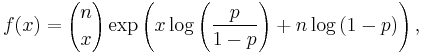

This can equivalently be written as

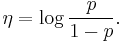

which shows that the binomial distribution is an exponential family, whose natural parameter is

This function of p is known as logit.
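The binomial rewriting can be verified directly. In this sketch the log-normalizer is expressed through the natural parameter as $A(\eta) = n \log(1 + e^{\eta})$, which equals $-n \log(1 - p)$ when $\eta$ is the logit of $p$; the function names are illustrative, and `math.comb` requires Python 3.8 or later.

```python
import math

def logit(p):
    """Natural parameter of the binomial family."""
    return math.log(p / (1 - p))

def binomial_pmf(x, n, p):
    """Binomial probability mass function in its usual form."""
    return math.comb(n, x) * p ** x * (1 - p) ** (n - x)

def binomial_exp_family(x, n, p):
    """Same pmf as h(x) * exp(eta * x - A(eta)) with h(x) = C(n, x)."""
    eta = logit(p)
    A = n * math.log1p(math.exp(eta))   # equals -n * log(1 - p)
    return math.comb(n, x) * math.exp(eta * x - A)

n, p = 10, 0.3
max_gap = max(abs(binomial_pmf(x, n, p) - binomial_exp_family(x, n, p)) for x in range(n + 1))
```

The two forms should agree for every $x$ in $\{0, \ldots, n\}$, so `max_gap` is expected to be at the level of rounding error.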

Moments and cumulants of the sufficient statistic

Normalization of the distribution

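We start with the normalization of the probability distribution. Since

$$1 = \int dF(x \mid \eta) = \int e^{\eta^{\top} T(x) - A(\eta)}\, dH(x) = e^{-A(\eta)} \int e^{\eta^{\top} T(x)}\, dH(x)$$

it follows that

$$A(\eta) = \ln\left(\int e^{\eta^{\top} T(x)}\, dH(x)\right)$$

This justifies calling $A$ the log-partition function.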
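For a discrete family on a finite support, the log-partition function can be computed by direct summation, with the integral against the reference measure becoming a sum weighted by $h(x)$. The sketch below does this for the binomial family with $n$ known, assuming $h(x) = \binom{n}{x}$ and $T(x) = x$, and compares the result with the closed form $n \log(1 + e^{\eta})$; the helper name is hypothetical.

```python
import math

def log_partition_discrete(eta, support, h, T):
    """A(eta) = log of the sum over the support of h(x) * exp(eta * T(x))."""
    return math.log(sum(h(x) * math.exp(eta * T(x)) for x in support))

n = 10
eta = 0.3
A_numeric = log_partition_discrete(
    eta,
    range(n + 1),
    h=lambda x: math.comb(n, x),   # reference measure weights
    T=lambda x: x,                 # sufficient statistic
)
A_closed = n * math.log1p(math.exp(eta))   # from the binomial theorem
```

The agreement follows from $\sum_x \binom{n}{x} e^{\eta x} = (1 + e^{\eta})^n$.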

Moment generating function of the sufficient statistic

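Now, the moment generating function of $T(x)$ is

$$M_T(u) \equiv E[e^{u^{\top} T(x)} \mid \eta] = \int e^{(\eta + u)^{\top} T(x) - A(\eta)}\, dH(x) = e^{A(\eta + u) - A(\eta)}$$

proving the earlier statement that $K(u \mid \eta) = A(\eta + u) - A(\eta)$ is the cumulant generating function for $T$.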
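The identity $M_T(u) = e^{A(\eta + u) - A(\eta)}$ can be illustrated with the Poisson family, for which $T(x) = x$, $\eta = \ln\lambda$ and $A(\eta) = e^{\eta}$. The sketch below, with hypothetical function names and a truncated series (200 terms is an arbitrary cutoff), compares a term-by-term evaluation of $E[e^{uX}]$ with the value predicted by the log-partition function.

```python
import math

def poisson_mgf_by_summation(u, lam, terms=200):
    """E[exp(u * X)] for X ~ Poisson(lam), summed over the first `terms` values."""
    total = 0.0
    pmf = math.exp(-lam)            # P(X = 0)
    for x in range(terms):
        total += math.exp(u * x) * pmf
        pmf *= lam / (x + 1)        # P(X = x + 1) from P(X = x)
    return total

def poisson_log_partition(eta):
    """A(eta) = exp(eta) for the Poisson family in natural form."""
    return math.exp(eta)

def poisson_mgf_from_A(u, lam):
    """exp(A(eta + u) - A(eta)) with eta = log(lam)."""
    eta = math.log(lam)
    return math.exp(poisson_log_partition(eta + u) - poisson_log_partition(eta))

mgf_sum = poisson_mgf_by_summation(0.5, 3.0)
mgf_A = poisson_mgf_from_A(0.5, 3.0)
```

Both values should match the familiar Poisson result $\exp(\lambda(e^{u} - 1))$.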

An important subclass of the exponential family, the natural exponential family, has a similar form for the moment generating function for the distribution of $x$.

Differential identities for cumulants

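In particular,

$$E(T_j) = \frac{\partial A(\eta)}{\partial \eta_j}$$

and

$$\mathrm{cov}(T_i, T_j) = \frac{\partial^2 A(\eta)}{\partial \eta_i\, \partial \eta_j}$$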

The first two raw moments and all mixed second moments can be recovered from these two identities. Higher order moments and cumulants are obtained by higher derivatives. This technique is often useful when T is a complicated function of the data, whose moments are difficult to calculate by integration.
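The two identities can be tried out with finite differences. The sketch below assumes the binomial family with $n$ known, where $A(\eta) = n \log(1 + e^{\eta})$, so the derivatives should reproduce the familiar mean $np$ and variance $np(1-p)$. The step size and function names are illustrative choices, and central differences are only one of several ways to differentiate $A$.

```python
import math

def log_partition(eta, n):
    """Binomial log-partition function A(eta) = n * log(1 + exp(eta))."""
    return n * math.log1p(math.exp(eta))

def derivative(f, x, step=1e-4):
    """Central-difference estimate of f'(x)."""
    return (f(x + step) - f(x - step)) / (2 * step)

def second_derivative(f, x, step=1e-4):
    """Central-difference estimate of f''(x)."""
    return (f(x + step) - 2 * f(x) + f(x - step)) / step ** 2

n, p = 12, 0.3
eta = math.log(p / (1 - p))                                   # natural parameter
mean_T = derivative(lambda e: log_partition(e, n), eta)        # approximates n * p
var_T = second_derivative(lambda e: log_partition(e, n), eta)  # approximates n * p * (1 - p)
```

In practice an analytic or automatic derivative of $A$ would usually be preferred; the finite differences here only serve to make the identities concrete.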

Example

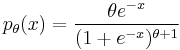

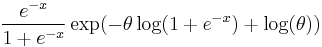

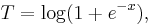

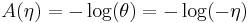

As an example consider a real valued random variable  with density

with density

indexed by shape parameter  (this is called the skew-logistic distribution). The density can be rewritten as

(this is called the skew-logistic distribution). The density can be rewritten as

Notice this is an exponential family with natural parameter

sufficient statistic

and normalizing factor

So using the first identity,

and using the second identity

This example illustrates a case where using this method is very simple, but the direct calculation would be nearly impossible.
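The results $E(T) = 1/\theta$ and $\mathrm{var}(T) = 1/\theta^2$ for the skew-logistic example can be cross-checked by numerical integration. The sketch below assumes the density $\theta e^{-x}/(1 + e^{-x})^{\theta+1}$ and uses a plain midpoint rule; the integration range of $[-40, 40]$ and the number of steps are arbitrary choices that happen to be adequate for $\theta = 2$.

```python
import math

def skew_logistic_pdf(x, theta):
    """Density theta * exp(-x) / (1 + exp(-x))**(theta + 1)."""
    return theta * math.exp(-x) / (1 + math.exp(-x)) ** (theta + 1)

def T(x):
    """Sufficient statistic log(1 + exp(-x))."""
    return math.log1p(math.exp(-x))

def midpoint_integral(f, lo, hi, steps):
    """Midpoint-rule approximation of the integral of f over [lo, hi]."""
    width = (hi - lo) / steps
    return width * sum(f(lo + (k + 0.5) * width) for k in range(steps))

theta = 2.0
lo, hi, steps = -40.0, 40.0, 40000
mean_T = midpoint_integral(lambda x: T(x) * skew_logistic_pdf(x, theta), lo, hi, steps)
second_moment = midpoint_integral(lambda x: T(x) ** 2 * skew_logistic_pdf(x, theta), lo, hi, steps)
var_T = second_moment - mean_T ** 2
```

For $\theta = 2$ the integrals should come out close to $1/2$ and $1/4$, matching the derivatives of $A(\eta) = -\log(-\eta)$.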

Maximum entropy derivation

The exponential family arises naturally as the answer to the following question: what is the maximum-entropy distribution consistent with given constraints on expected values?

The information entropy of a probability distribution dF(x) can only be computed with respect to some other probability distribution (or, more generally, a positive measure), and both measures must be mutually absolutely continuous. Accordingly, we need to pick a reference measure dH(x) with the same support as dF(x). As an aside, frequentists need to realize that this is a largely arbitrary choice, while Bayesians can just make this choice part of their prior probability distribution.

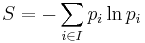

The entropy of dF(x) relative to dH(x) is

or

where dF/dH and dH/dF are Radon–Nikodym derivatives. Note that the ordinary definition of entropy for a discrete distribution supported on a set I, namely

assumes, though this is seldom pointed out, that dH is chosen to be the counting measure on I.

Consider now a collection of observable quantities (random variables) Ti. The probability distribution dF whose entropy with respect to dH is greatest, subject to the conditions that the expected value of Ti be equal to ti, is a member of the exponential family with dH as reference measure and (T1, ..., Tn) as sufficient statistic.

The derivation is a simple variational calculation using Lagrange multipliers. Normalization is imposed by letting T0 = 1 be one of the constraints. The natural parameters of the distribution are the Lagrange multipliers, and the normalization factor is the Lagrange multiplier associated to T0.
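A small worked case may make the maximum-entropy construction concrete. The sketch below takes the counting measure on the faces of a die as the reference measure and a single constraint on the expected face value, so the maximizing distribution has the form $p_i \propto e^{\lambda i}$ with the multiplier $\lambda$ playing the role of the natural parameter. The target mean of 4.5, the bisection bounds and the function names are all illustrative assumptions.

```python
import math

def maxent_pmf(values, lam):
    """Exponential-family pmf on a finite set: p_i proportional to exp(lam * v_i)."""
    weights = [math.exp(lam * v) for v in values]
    Z = sum(weights)                      # partition function
    return [w / Z for w in weights]

def mean_of(values, pmf):
    """Expected value of the constrained quantity under pmf."""
    return sum(v * p for v, p in zip(values, pmf))

def solve_multiplier(values, target_mean, lo=-50.0, hi=50.0, iters=200):
    """Bisection on lam; the mean is increasing in lam because its derivative is a variance."""
    for _ in range(iters):
        mid = 0.5 * (lo + hi)
        if mean_of(values, maxent_pmf(values, mid)) < target_mean:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)

faces = [1, 2, 3, 4, 5, 6]
lam = solve_multiplier(faces, target_mean=4.5)
pmf = maxent_pmf(faces, lam)
```

With a target mean of 3.5 the multiplier comes out at zero and the distribution is uniform, which is the unconstrained maximum-entropy distribution under the counting measure.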

For examples of such derivations, see Maximum entropy probability distribution.

Role in statistics

Classical estimation: sufficiency

According to the Pitman–Koopman–Darmois theorem, among families of probability distributions whose domain does not vary with the parameter being estimated, only in exponential families is there a sufficient statistic whose dimension remains bounded as sample size increases. Less tersely, suppose Xn, n = 1, 2, 3, ... are independent identically distributed random variables whose distribution is known to be in some family of probability distributions. Only if that family is an exponential family is there a (possibly vector-valued) sufficient statistic T(X1, ..., Xn) whose number of scalar components does not increase as the sample size n increases.
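The practical meaning of a fixed-dimension sufficient statistic can be sketched with Bernoulli observations, where $T(x) = x$ and the pair (sample size, sum of observations) summarizes any sample. The class and function names below are hypothetical; the point is only that the log-likelihood computed from the full data matches the one computed from the two-number summary.

```python
import math

def bernoulli_log_likelihood(data, p):
    """Log-likelihood computed from every individual observation."""
    return sum(math.log(p) if x == 1 else math.log(1 - p) for x in data)

def log_likelihood_from_statistic(n, total, p):
    """Same log-likelihood computed only from n and the sum of the observations."""
    return total * math.log(p) + (n - total) * math.log(1 - p)

class RunningStatistic:
    """Fixed-size summary (n, sum of T(x)) with T(x) = x, updated one observation at a time."""

    def __init__(self):
        self.n = 0
        self.total = 0

    def update(self, x):
        self.n += 1
        self.total += x

data = [1, 0, 1, 1, 0, 1, 0, 1]
stat = RunningStatistic()
for x in data:
    stat.update(x)

full = bernoulli_log_likelihood(data, 0.6)
summarized = log_likelihood_from_statistic(stat.n, stat.total, 0.6)
```

However long `data` grows, `stat` keeps only two numbers, which is the bounded-dimension property the theorem refers to.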

Bayesian estimation: conjugate distributions

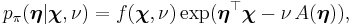

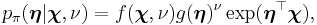

Exponential families are also important in Bayesian statistics. In Bayesian statistics a prior distribution is multiplied by a likelihood function and then normalised to produce a posterior distribution. In the case of a likelihood which belongs to the exponential family there exists a conjugate prior, which is often also in the exponential family. A conjugate prior  for the parameter

for the parameter  of an exponential family is given by

of an exponential family is given by

or equivalently

where  (where

(where  is the dimension of

is the dimension of  ) and

) and  are hyperparameters (parameters controlling parameters).

are hyperparameters (parameters controlling parameters).  corresponds to the effective number of observations that the prior distribution contributes, and

corresponds to the effective number of observations that the prior distribution contributes, and  corresponds to the total amount that these pseudo-observations contribute to the sufficient statistic over all observations and pseudo-observations.

corresponds to the total amount that these pseudo-observations contribute to the sufficient statistic over all observations and pseudo-observations.  is a normalization constant that is automatically determined by the remaining functions and serves to ensure that the given function is a probability density function (i.e. it is normalized).

is a normalization constant that is automatically determined by the remaining functions and serves to ensure that the given function is a probability density function (i.e. it is normalized).  and equivalently

and equivalently  are the same functions as in the definition of the distribution over which

are the same functions as in the definition of the distribution over which  is the conjugate prior.

is the conjugate prior.

A conjugate prior is one which, when combined with the likelihood and normalised, produces a posterior distribution which is of the same type as the prior. For example, if one is estimating the success probability of a binomial distribution, then if one chooses to use a beta distribution as one's prior, the posterior is another beta distribution. This makes the computation of the posterior particularly simple. Similarly, if one is estimating the parameter of a Poisson distribution the use of a gamma prior will lead to another gamma posterior. Conjugate priors are often very flexible and can be very convenient. However, if one's belief about the likely value of the theta parameter of a binomial is represented by (say) a bimodal (two-humped) prior distribution, then this cannot be represented by a beta distribution. It can however be represented by using a mixture density as the prior, here a combination of two beta distributions; this is a form of hyperprior.
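The beta-binomial case mentioned above can be sketched in a few lines. The update rule used here, Beta($a$, $b$) prior plus $x$ successes in $n$ trials giving a Beta($a + x$, $b + n - x$) posterior, is the standard one; the function names and the particular numbers are illustrative. The check at the end confirms that prior times likelihood is proportional to the posterior kernel, with a ratio that does not depend on $p$.

```python
import math

def beta_kernel(p, a, b):
    """Unnormalized Beta(a, b) density at p."""
    return p ** (a - 1) * (1 - p) ** (b - 1)

def binomial_likelihood(p, x, n):
    """Probability of x successes in n trials with success probability p."""
    return math.comb(n, x) * p ** x * (1 - p) ** (n - x)

def posterior_hyperparameters(a, b, x, n):
    """Conjugate update: add successes to a and failures to b."""
    return a + x, b + (n - x)

a, b = 2.0, 3.0      # prior hyperparameters
x, n = 7, 10         # observed successes and trials
a_post, b_post = posterior_hyperparameters(a, b, x, n)

# Ratio of (prior * likelihood) to the posterior kernel at several values of p.
ratios = [
    beta_kernel(p, a, b) * binomial_likelihood(p, x, n) / beta_kernel(p, a_post, b_post)
    for p in (0.1, 0.3, 0.5, 0.7, 0.9)
]
```

Every entry of `ratios` should equal the binomial coefficient $\binom{10}{7} = 120$ up to rounding, which is the constant absorbed by normalization.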

An arbitrary likelihood will not belong to the exponential family, and thus in general no conjugate prior exists. The posterior will then have to be computed by numerical methods.

Hypothesis testing: Uniformly most powerful tests

The one-parameter exponential family has a monotone non-decreasing likelihood ratio in the sufficient statistic T(x), provided that η(θ) is non-decreasing. As a consequence, there exists a uniformly most powerful test for testing the hypothesis H0: θ ≥ θ0 vs. H1: θ < θ0.

Generalized linear models

The exponential family forms the basis for the distribution function used in generalized linear models, a class of models that encompasses many of the commonly used regression models in statistics.


References

- [1] Andersen, Erling (September 1970). "Sufficiency and Exponential Families for Discrete Sample Spaces". Journal of the American Statistical Association 65 (331): 1248–1255. doi:10.2307/2284291. JSTOR 2284291. MR268992.

- [2] Pitman, E.; Wishart, J. (1936). "Sufficient statistics and intrinsic accuracy". Mathematical Proceedings of the Cambridge Philosophical Society 32 (4): 567–579. doi:10.1017/S0305004100019307.

- [3] Darmois, G. (1935). "Sur les lois de probabilites a estimation exhaustive" (in French). C.R. Acad. Sci. Paris 200: 1265–1266.

- [4] Koopman, B. (1936). "On distributions admitting a sufficient statistic". Transactions of the American Mathematical Society 39 (3): 399–409. doi:10.2307/1989758. JSTOR 1989758. MR1501854.

- [5] Kupperman, M. (1958). "Probabilities of Hypotheses and Information-Statistics in Sampling from Exponential-Class Populations". Annals of Mathematical Statistics 29 (2): 571–575. JSTOR 2237349.

Further reading

- Lehmann, E. L.; Casella, G. (1998). Theory of Point Estimation. 2nd ed., sec. 1.5.

- Keener, Robert W. (2006). Statistical Theory: Notes for a Course in Theoretical Statistics. Springer. pp. 27–28, 32–33.

- Fahrmeir, Ludwig; Tutz, G. (1994). Multivariate statistical modelling based on generalized linear models. Springer. pp. 18–22, 345–349.

External links

- A primer on the exponential family of distributions

- Exponential family of distributions on the Earliest known uses of some of the words of mathematics

- jMEF: A Java library for exponential families
