Covariance

In probability theory and statistics, covariance is a measure of how much two variables change together. Variance is a special case of the covariance when the two variables are identical.


Definition

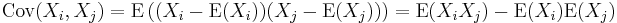

The covariance between two real-valued random variables X and Y with finite second moments is

where E(X) is the expected value of X. By using some properties of expectations, this can be simplified to

For random vectors X and Y (of dimensions m×1 and n×1 respectively) the m×n covariance matrix is equal to

where M ′ is the transpose of M.

The (i,j)-th element of this matrix is equal to the covariance Cov(Xi, Yj) between the i-th scalar component of X and the j-th scalar component of Y. In particular, Cov(Y, X) is the transpose of Cov(X, Y).
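As an illustration of the matrix case, the following is a minimal Python sketch that estimates the covariance matrix of two random vectors from samples. It assumes the population normalization 1/N, and the helper names `mean`, `cov` and `cross_covariance` are illustrative rather than taken from any library. It checks that the two forms of the definition agree and that Cov(Y, X) is the transpose of Cov(X, Y).

```python
import random

def mean(values):
    return sum(values) / len(values)

def cov(u, v):
    """Population covariance E[(U - E[U])(V - E[V])] of two equal-length samples."""
    mu, mv = mean(u), mean(v)
    return sum((a - mu) * (b - mv) for a, b in zip(u, v)) / len(u)

def cross_covariance(xs, ys):
    """Matrix whose (i, j) entry is cov(xs[i], ys[j]).

    xs and ys are lists of components; each component is a list of samples.
    """
    return [[cov(xi, yj) for yj in ys] for xi in xs]

random.seed(0)
n_samples = 1000
# X has three components; Y has two, each built from a component of X plus noise.
xs = [[random.gauss(0, 1) for _ in range(n_samples)] for _ in range(3)]
ys = [[x + random.gauss(0, 1) for x in xs[i]] for i in range(2)]

cov_xy = cross_covariance(xs, ys)   # 3 x 2 matrix
cov_yx = cross_covariance(ys, xs)   # 2 x 3 matrix

# Second form of the definition, entry by entry: E[X_i Y_j] - E[X_i] E[Y_j]
alt = [[mean([a * b for a, b in zip(xi, yj)]) - mean(xi) * mean(yj) for yj in ys]
       for xi in xs]
```

Up to rounding, `cov_xy[i][j]` matches both `alt[i][j]` and `cov_yx[j][i]`.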

Random variables whose covariance is zero are called uncorrelated.

The units of measurement of the covariance Cov(X, Y) are those of X times those of Y. By contrast, correlation, which depends on the covariance, is a dimensionless measure of linear dependence.
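The dependence on units can be seen in a small Python sketch. The data are synthetic and the variable names (`height_m`, `weight_kg`) are purely illustrative assumptions. Converting one variable from metres to centimetres multiplies the covariance by 100 but leaves the correlation unchanged.

```python
import math
import random

def mean(values):
    return sum(values) / len(values)

def cov(u, v):
    """Population covariance of two equal-length samples."""
    mu, mv = mean(u), mean(v)
    return sum((a - mu) * (b - mv) for a, b in zip(u, v)) / len(u)

def corr(u, v):
    """Correlation: covariance divided by the product of the standard deviations."""
    return cov(u, v) / math.sqrt(cov(u, u) * cov(v, v))

random.seed(1)
height_m = [random.gauss(1.7, 0.1) for _ in range(500)]
weight_kg = [60 + 40 * (h - 1.7) + random.gauss(0, 5) for h in height_m]
height_cm = [100 * h for h in height_m]   # same quantity, different unit

cov_m = cov(height_m, weight_kg)      # units: metre * kilogram
cov_cm = cov(height_cm, weight_kg)    # units: centimetre * kilogram
corr_m = corr(height_m, weight_kg)    # dimensionless
corr_cm = corr(height_cm, weight_kg)  # dimensionless, same value as corr_m
```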

Properties

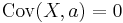

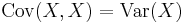

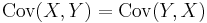

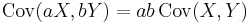

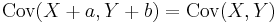

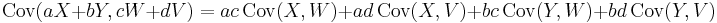

If X, Y, W, and V are real-valued random variables and a, b, c, d are constant ("constant" in this context means non-random), then the following facts are a consequence of the definition of covariance:

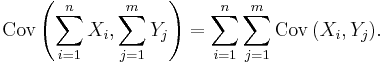

For sequences X1, ..., Xn and Y1, ..., Ym of random variables, we have

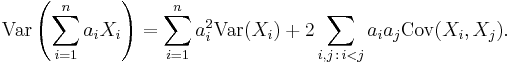

For a sequence X1, ..., Xn of random variables, and constants a1, ..., an, we have
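The variance of a linear combination can be checked numerically. The sketch below is an illustration on synthetic data, not a proof. It expands Var(a1 X1 + ... + an Xn) as the sum of ai² Var(Xi) plus twice the sum over i < j of ai aj Cov(Xi, Xj), and compares the result with the variance computed directly. The helper names and the choice of three correlated variables are assumptions made for the example.

```python
import random

def mean(values):
    return sum(values) / len(values)

def cov(u, v):
    """Population covariance of two equal-length samples; cov(u, u) is the variance."""
    mu, mv = mean(u), mean(v)
    return sum((a - mu) * (b - mv) for a, b in zip(u, v)) / len(u)

random.seed(2)
n_samples = 2000
base = [random.gauss(0, 1) for _ in range(n_samples)]
# Three correlated variables that share the common component `base`.
xs = [[b + random.gauss(0, 1) for b in base] for _ in range(3)]
a = [0.5, -1.0, 2.0]

# Left-hand side: variance of the linear combination, computed directly.
combo = [sum(a[i] * xs[i][k] for i in range(3)) for k in range(n_samples)]
lhs = cov(combo, combo)

# Right-hand side: weighted variances plus twice the weighted pairwise covariances.
rhs = sum(a[i] ** 2 * cov(xs[i], xs[i]) for i in range(3))
rhs += 2 * sum(a[i] * a[j] * cov(xs[i], xs[j])
               for i in range(3) for j in range(i + 1, 3))
```

The two sides agree up to floating-point rounding, because the sample covariance is bilinear in the same way as the population covariance.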

Incremental computation

Covariance can be computed efficiently from incrementally available values using a generalization of the computational formula for the variance:

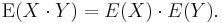
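A minimal sketch of such an incremental computation follows, assuming that the values arrive as pairs (x, y) one at a time and that the population (1/n) normalization is wanted. The class `RunningCovariance` and its method names are hypothetical, chosen for this example. It keeps only the running count and the sums of x, y and xy, and evaluates E[XY] − E[X]E[Y] on demand.

```python
class RunningCovariance:
    """Accumulates the sums needed for E[XY] - E[X]E[Y] one pair at a time."""

    def __init__(self):
        self.n = 0
        self.sum_x = 0.0
        self.sum_y = 0.0
        self.sum_xy = 0.0

    def update(self, x, y):
        """Fold one new observation (x, y) into the running sums."""
        self.n += 1
        self.sum_x += x
        self.sum_y += y
        self.sum_xy += x * y

    def covariance(self):
        """Population covariance of all pairs seen so far."""
        if self.n == 0:
            raise ValueError("no observations yet")
        mean_x = self.sum_x / self.n
        mean_y = self.sum_y / self.n
        return self.sum_xy / self.n - mean_x * mean_y

acc = RunningCovariance()
for x, y in [(1.0, 2.0), (2.0, 4.5), (3.0, 5.5), (4.0, 8.0)]:
    acc.update(x, y)

print(acc.covariance())  # 2.375
```

This running-sums form mirrors the formula directly. It can lose precision through cancellation when the means are large relative to the spread of the data, in which case an update based on deviations from the running means may be preferable.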

If X and Y are independent, then their covariance is zero. This follows because under independence,

$$\operatorname{E}[XY] = \operatorname{E}[X]\cdot\operatorname{E}[Y].$$

The converse, however, is generally not true: some pairs of random variables have covariance zero although they are not independent. Under additional assumptions, zero covariance does entail independence, for example when the variables follow a multivariate normal distribution. Distance covariance [1] [2] addresses this deficiency of covariance: it is a measure of general dependence, and distance covariance zero does imply independence. An alternative but equivalent approach is Brownian covariance.
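A standard small example of uncorrelated but dependent variables can be verified exactly. The sketch below assumes X is uniform on {−1, 0, 1} and Y = X², a choice made for this illustration. It uses exact rational arithmetic to show that the covariance is zero although Y is a deterministic function of X.

```python
from fractions import Fraction

# X is uniform on {-1, 0, 1}; Y = X**2 is completely determined by X.
outcomes = [(-1, 1), (0, 0), (1, 1)]   # (x, y) pairs, each with probability 1/3
p = Fraction(1, 3)

e_x = sum(p * x for x, _ in outcomes)        # E[X]
e_y = sum(p * y for _, y in outcomes)        # E[Y]
e_xy = sum(p * x * y for x, y in outcomes)   # E[XY]
cov = e_xy - e_x * e_y

# Independence would require P(X=0, Y=0) == P(X=0) * P(Y=0).
p_joint = p   # the outcome (0, 0) has probability 1/3
p_x0 = p      # P(X = 0)
p_y0 = p      # P(Y = 0), since Y = 0 only when X = 0

print(cov)                       # 0
print(p_joint == p_x0 * p_y0)    # False
```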

Relationship to inner products

Many of the properties of covariance can be extracted elegantly by observing that it satisfies similar properties to those of an inner product:

- bilinear: for constants a and b and random variables X, Y, and U, Cov(aX + bY, U) = a Cov(X, U) + b Cov(Y, U)

- symmetric: Cov(X, Y) = Cov(Y, X)

- positive semi-definite: Var(X) = Cov(X, X) ≥ 0, and Cov(X, X) = 0 implies that X is almost surely constant.

In fact these properties imply that the covariance defines an inner product over the quotient vector space obtained by taking the subspace of random variables with finite second moment and identifying any two that differ by a constant. (This identification turns the positive semi-definiteness above into positive definiteness.) That quotient vector space is isomorphic to the subspace of random variables with finite second moment and mean zero; on that subspace, the covariance is exactly the L² inner product of real-valued functions on the sample space.

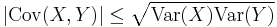

As a result for random variables with finite variance the following inequality holds via the Cauchy–Schwarz inequality:

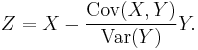

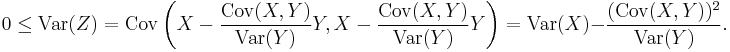

Proof: If Var("Y") = 0, then it holds trivially. Otherwise, let random variable

Then we have:

QED.

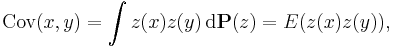
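The inequality and the key step of its proof can be illustrated numerically. The following sketch uses synthetic data and illustrative helper names. It checks that |Cov(X, Y)| does not exceed the square root of Var(X) Var(Y), and that the variable Z = X − (Cov(X, Y) / Var(Y)) Y has variance Var(X) − Cov(X, Y)² / Var(Y).

```python
import math
import random

def mean(values):
    return sum(values) / len(values)

def cov(u, v):
    """Population covariance of two equal-length samples; cov(u, u) is the variance."""
    mu, mv = mean(u), mean(v)
    return sum((a - mu) * (b - mv) for a, b in zip(u, v)) / len(u)

random.seed(3)
x = [random.gauss(0, 1) for _ in range(1000)]
y = [0.6 * a + random.gauss(0, 1) for a in x]

cov_xy, var_x, var_y = cov(x, y), cov(x, x), cov(y, y)
bound = math.sqrt(var_x * var_y)

# Z = X - (Cov(X, Y) / Var(Y)) * Y, the auxiliary variable used in the proof.
c = cov_xy / var_y
z = [a - c * b for a, b in zip(x, y)]
var_z = cov(z, z)   # non-negative, and equal to var_x - cov_xy**2 / var_y
```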

Covariance operator, bilinear form, and function

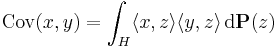

More generally, for a probability measure P on a Hilbert space H with inner product  , the covariance of P is the bilinear form Cov: H × H → R given by

, the covariance of P is the bilinear form Cov: H × H → R given by

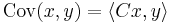

for all x and y in H. The covariance operator C is then defined by

(from the Riesz representation theorem, such operator exists if Cov is bounded). Since Cov is symmetric in its arguments, the covariance operator is self-adjoint (the infinite-dimensional analogy of the transposition symmetry in the finite-dimensional case). When P is a centred Gaussian measure, C is also a nuclear operator. In particular, it is a compact operator of trace class, that is, it has finite trace.
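In the finite-dimensional case the construction reduces to ordinary matrices. The sketch below makes several assumptions for illustration: H is taken to be R³ with the usual dot product, and P is the empirical measure of a centred cloud of sample points. It evaluates the bilinear form by averaging ⟨x, z⟩⟨y, z⟩ over the points and compares it with ⟨Cx, y⟩, where C is the matrix of averaged outer products.

```python
import random

random.seed(4)
dim, n = 3, 500
points = [[random.gauss(0, s) for s in (2.0, 1.0, 0.5)] for _ in range(n)]
# Centre the points so that the empirical measure P has mean zero.
centre = [sum(p[i] for p in points) / n for i in range(dim)]
points = [[p[i] - centre[i] for i in range(dim)] for p in points]

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def cov_form(x, y):
    """Average of <x, z><y, z> over the sample points z (integral against P)."""
    return sum(dot(x, z) * dot(y, z) for z in points) / n

# Matrix of the covariance operator: the average of the outer products z z'.
C = [[sum(z[i] * z[j] for z in points) / n for j in range(dim)] for i in range(dim)]

def apply(matrix, v):
    return [dot(row, v) for row in matrix]

x = [1.0, -2.0, 0.5]
y = [0.0, 1.0, 3.0]

lhs = cov_form(x, y)        # Cov(x, y)
rhs = dot(apply(C, x), y)   # <Cx, y>
trace = sum(C[i][i] for i in range(dim))
```

Here `C` is symmetric, the finite-dimensional counterpart of self-adjointness, and its trace is trivially finite.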

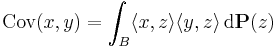

Even more generally, for a probability measure P on a Banach space B, the covariance of P is the bilinear form on the algebraic dual B#, defined by

where  is now the value of the linear functional x on the element z.

is now the value of the linear functional x on the element z.

Quite similarly, the covariance function of a function-valued random element (in special cases called random process or random field) z is

where z(x) is now the value of the function z at the point x, i.e., the value of the linear functional  evaluated at z.

evaluated at z.

Comments

The covariance is sometimes called a measure of "linear dependence" between the two random variables. That does not mean the same thing as in the context of linear algebra (see linear dependence). When the covariance is normalized, one obtains the correlation matrix, whose entries are Pearson correlation coefficients. Each coefficient indicates how well the best possible linear function describes the relation between the two variables. In this sense covariance is a linear gauge of dependence.
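The normalization mentioned above can be sketched as follows, assuming it means dividing each covariance by the product of the two standard deviations. The data are synthetic and the helper names are illustrative.

```python
import math
import random

def mean(values):
    return sum(values) / len(values)

def cov(u, v):
    """Population covariance of two equal-length samples."""
    mu, mv = mean(u), mean(v)
    return sum((a - mu) * (b - mv) for a, b in zip(u, v)) / len(u)

random.seed(5)
n = 300
base = [random.gauss(0, 1) for _ in range(n)]
xs = [
    [b + random.gauss(0, 1) for b in base],       # related to base
    [2 * b + random.gauss(0, 3) for b in base],   # related to base, larger scale
    [random.gauss(0, 5) for _ in range(n)],       # unrelated to base
]

# Covariance matrix, then normalization by the standard deviations.
sigma = [[cov(u, v) for v in xs] for u in xs]
std = [math.sqrt(sigma[i][i]) for i in range(3)]
corr = [[sigma[i][j] / (std[i] * std[j]) for j in range(3)] for i in range(3)]
```

Each diagonal entry of `corr` equals 1 up to rounding, and every off-diagonal entry lies between −1 and 1 regardless of the scales of the original variables.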

See also

- Brownian covariance

- Correlation

- Covariance and correlation

- Covariance function

- Covariance matrix

- Distance covariance

- Law of total covariance

- Autocovariance

- Analysis of covariance

- Sample mean and sample covariance

- Variance

References

External links

