Accuracy and precision

In the fields of science, engineering, industry and statistics, the accuracy of a measurement system is the degree of closeness of measurements of a quantity to its actual (true) value. The precision of a measurement system, also called reproducibility or repeatability, is the degree to which repeated measurements under unchanged conditions show the same results.[1] Although the two words can be synonymous in colloquial use, they are deliberately contrasted in the context of the scientific method.

A measurement system can be accurate but not precise, precise but not accurate, neither, or both. For example, if an experiment contains a systematic error, then increasing the sample size generally increases precision but does not improve accuracy. Eliminating the systematic error improves accuracy but does not change precision.
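The effect of sample size on a biased measurement can be illustrated with a small simulation. This is only a sketch under stated assumptions: the true value (100.0), the systematic offset (2.0), the noise level (5.0) and the function name `simulate_means` are all hypothetical, and the noise is assumed to be Gaussian.

```python
import random
import statistics

def simulate_means(true_value, offset, sigma, n, trials, seed=0):
    """Return the sample means of `trials` experiments of `n` readings each.

    Every reading is drawn from a Gaussian centred on true_value + offset,
    so `offset` plays the role of a systematic error (an assumption of this sketch).
    """
    rng = random.Random(seed)
    means = []
    for _ in range(trials):
        sample = [rng.gauss(true_value + offset, sigma) for _ in range(n)]
        means.append(statistics.fmean(sample))
    return means

small = simulate_means(100.0, 2.0, 5.0, n=10, trials=200)
large = simulate_means(100.0, 2.0, 5.0, n=1000, trials=200)

# The spread of the averages shrinks with larger samples (better precision) ...
print("spread, n=10:  ", statistics.stdev(small))
print("spread, n=1000:", statistics.stdev(large))
# ... but the averages still centre on 102, not on the true value 100 (accuracy unchanged).
print("centre, n=10:  ", statistics.fmean(small))
print("centre, n=1000:", statistics.fmean(large))
```

In this sketch, only removing the offset (setting it to 0.0) moves the centre of the averages back to the true value.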

A measurement system is called valid if it is both accurate and precise. Related terms are bias (non-random or directed effects caused by a factor or factors unrelated to the independent variable) and error (random variability), which correspond to a lack of accuracy and a lack of precision respectively.

The terminology is also applied to indirect measurements, that is, values obtained by a computational procedure from observed data.

In addition to accuracy and precision, measurements may also have a measurement resolution, which is the smallest change in the underlying physical quantity that produces a response in the measurement. A measurement with fine resolution may nevertheless have low precision.

Accuracy versus precision; the target analogy

Accuracy is the degree of veracity while precision is the degree of reproducibility. The analogy used here to explain the difference between accuracy and precision is the target comparison. In this analogy, repeated measurements are compared to arrows that are shot at a target. Accuracy describes the closeness of arrows to the bullseye at the target center. Arrows that strike closer to the bullseye are considered more accurate. The closer a system's measurements are to the accepted value, the more accurate the system is considered to be.

To continue the analogy, if a large number of arrows are shot, precision would be the size of the arrow cluster. (When only one arrow is shot, precision is the size of the cluster one would expect if this were repeated many times under the same conditions.) When all arrows are grouped tightly together, the cluster is considered precise since they all struck close to the same spot, even if not necessarily near the bullseye. The measurements are precise, though not necessarily accurate.

However, it is not possible to reliably achieve accuracy in individual measurements without precision—if the arrows are not grouped close to one another, they cannot all be close to the bullseye. (Their average position might be an accurate estimation of the bullseye, but the individual arrows are inaccurate.) See also circular error probable for application of precision to the science of ballistics.

Quantifying accuracy and precision

Ideally a measurement device is both accurate and precise, with measurements all close to and tightly clustered around the known value. The accuracy and precision of a measurement process are usually established by repeatedly measuring some traceable reference standard. Such standards are defined in the International System of Units and maintained by national standards organizations such as the National Institute of Standards and Technology.

This also applies when measurements are repeated and averaged. In that case, the term standard error is properly applied: the precision of the average is equal to the known standard deviation of the process divided by the square root of the number of measurements averaged. Further, the central limit theorem shows that the probability distribution of the averaged measurements will be closer to a normal distribution than that of individual measurements.
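The standard-error relation described above can be sketched in a few lines. The function name `standard_error` and the process standard deviation of 0.2 units are illustrative assumptions, not values from any particular instrument.

```python
import math

def standard_error(sigma: float, n: int) -> float:
    """Standard error of the average of n measurements.

    sigma is the known standard deviation of the measurement process.
    """
    if n <= 0:
        raise ValueError("n must be a positive number of measurements")
    return sigma / math.sqrt(n)

# Hypothetical process with a known standard deviation of 0.2 units.
for n in (1, 4, 16, 100):
    print(f"n={n:3d}  standard error={standard_error(0.2, n):.3f}")
```

Because the standard error falls with the square root of the number of measurements, quadrupling the number of measurements averaged only halves it.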

With regard to accuracy we can distinguish:

- the difference between the mean of the measurements and the reference value, the bias. Establishing and correcting for bias is necessary for calibration.

- the combined effect of that and precision.
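The two quantities in the list above can be estimated from repeated readings of a reference standard. The following is a minimal sketch: the readings and the reference value of 10.00 are made up, and the use of the root-mean-square error as the "combined effect" is one common choice assumed here, not the only possible one.

```python
import math
import statistics

def bias_spread_rmse(readings, reference):
    """Return (bias, spread, rmse) for repeated readings of a reference standard.

    bias   : mean of the readings minus the reference value
    spread : sample standard deviation of the readings (a measure of precision)
    rmse   : root-mean-square deviation from the reference, which combines both
    """
    bias = statistics.fmean(readings) - reference
    spread = statistics.stdev(readings)
    rmse = math.sqrt(statistics.fmean((x - reference) ** 2 for x in readings))
    return bias, spread, rmse

# Hypothetical readings of a 10.00-unit reference standard.
readings = [10.12, 10.09, 10.11, 10.10, 10.08]
bias, spread, rmse = bias_spread_rmse(readings, 10.00)
print(f"bias={bias:.3f}  spread={spread:.4f}  rmse={rmse:.4f}")
```

In this made-up data set the readings are tightly clustered but sit about 0.10 units above the reference, so nearly all of the combined error comes from bias, which calibration could remove.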

A common convention in science and engineering is to express accuracy and/or precision implicitly by means of significant figures. Here, when not explicitly stated, the margin of error is understood to be one-half the value of the last significant place. For instance, a recording of 843.6 m, or 843.0 m, or 800.0 m would imply a margin of 0.05 m (the last significant place is the tenths place), while a recording of 8,436 m would imply a margin of error of 0.5 m (the last significant place is the units place).

A reading of 8,000 m, with trailing zeroes and no decimal point, is ambiguous; the trailing zeroes may or may not be intended as significant figures. To avoid this ambiguity, the number could be represented in scientific notation: 8.0 × 10³ m indicates that the first zero is significant (hence a margin of 50 m) while 8.000 × 10³ m indicates that all three zeroes are significant, giving a margin of 0.5 m. Similarly, it is possible to use a multiple of the basic measurement unit: 8.0 km is equivalent to 8.0 × 10³ m, and likewise indicates a margin of 0.05 km (50 m). However, reliance on this convention can lead to false precision errors when accepting data from sources that do not obey it.
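The half-unit convention can be sketched with Python's `decimal` module, which preserves trailing zeroes as written. The helper name `implied_margin` is hypothetical, and the sketch assumes that every written digit is significant, so a bare "8000" is read as having four significant figures even though the text notes that this case is ambiguous.

```python
from decimal import Decimal

def implied_margin(recorded: str) -> Decimal:
    """Return half a unit of the last written place of a recorded value.

    Assumes every written digit is significant; thousands separators are ignored.
    """
    value = Decimal(recorded.replace(",", ""))
    exponent = value.as_tuple().exponent  # power of ten of the last written digit
    return Decimal(5).scaleb(exponent - 1)

for text in ("843.6", "800.0", "8,436", "8.0E3", "8.000E3"):
    print(f"{text:>8} -> margin {implied_margin(text)}")
```

Passing the value as a string matters in this sketch: a float such as `800.0` would lose the information about how many digits were actually recorded.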

Looking at this in another way, a value of 8 would mean that the measurement has been made with a precision of 1 (the measuring instrument was able to measure only to the ones place), whereas a value of 8.0 (though mathematically equal to 8) would mean that the value at the first decimal place was measured and was found to be zero. (The measuring instrument was able to measure the first decimal place.) The second value is more precise. Neither value is necessarily accurate (the actual value could be 9.5 but measured inaccurately as 8 in both instances). Thus, accuracy can be said to be the 'correctness' of a measurement, while precision could be identified as the ability to resolve smaller differences.

Precision is sometimes stratified into:

- Repeatability — the variation arising when all efforts are made to keep conditions constant by using the same instrument and operator, and repeating during a short time period; and

- Reproducibility — the variation arising using the same measurement process among different instruments and operators, and over longer time periods.

Accuracy and precision in binary classification

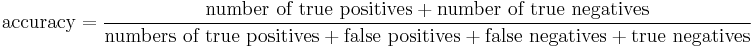

Accuracy is also used as a statistical measure of how well a binary classification test correctly identifies or excludes a condition.

| | Condition (e.g., disease) true, as determined by gold standard | Condition (e.g., disease) false, as determined by gold standard | |
|---|---|---|---|
| Test outcome positive | True positive | False positive | → Positive predictive value |
| Test outcome negative | False negative | True negative | → Negative predictive value |
| | ↓ Sensitivity | ↓ Specificity | Accuracy |

That is, the accuracy is the proportion of true results (both true positives and true negatives) in the population. It is a parameter of the test.

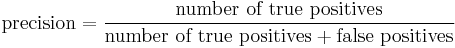

On the other hand, precision is defined as the proportion of true positives among all positive results (both true positives and false positives).

An accuracy of 100% means that the measured values are exactly the same as the given values.
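Both definitions follow directly from the four cells of the table. The sketch below uses made-up counts for a hypothetical test applied to 100 subjects; the function names are illustrative.

```python
def accuracy(tp: int, fp: int, fn: int, tn: int) -> float:
    """Proportion of true results (true positives and true negatives) among all results."""
    return (tp + tn) / (tp + fp + fn + tn)

def precision(tp: int, fp: int) -> float:
    """Proportion of true positives among all positive test results."""
    return tp / (tp + fp)

# Hypothetical counts: 40 true positives, 10 false positives,
# 5 false negatives, 45 true negatives.
print("accuracy: ", accuracy(tp=40, fp=10, fn=5, tn=45))
print("precision:", precision(tp=40, fp=10))
```

With these counts the test is right in 85 of 100 cases, while 40 of its 50 positive results are correct.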

Also see Sensitivity and specificity.

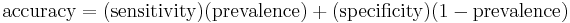

Accuracy may be determined from sensitivity and specificity, provided prevalence is known, using the equation:

accuracy = sensitivity × prevalence + specificity × (1 − prevalence)
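The relation between accuracy, sensitivity, specificity and prevalence can be checked numerically. The sketch below uses hypothetical counts (40 true positives, 5 false negatives, 10 false positives, 45 true negatives) and an illustrative function name; it compares the prevalence-weighted sum with accuracy computed directly from the counts.

```python
def accuracy_from_rates(sensitivity: float, specificity: float, prevalence: float) -> float:
    """Accuracy as the prevalence-weighted sum of sensitivity and specificity."""
    return sensitivity * prevalence + specificity * (1 - prevalence)

# Hypothetical counts for 100 subjects.
tp, fn, fp, tn = 40, 5, 10, 45
total = tp + fn + fp + tn

sensitivity = tp / (tp + fn)    # true positives among subjects with the condition
specificity = tn / (tn + fp)    # true negatives among subjects without the condition
prevalence = (tp + fn) / total  # share of subjects with the condition

direct = (tp + tn) / total
via_rates = accuracy_from_rates(sensitivity, specificity, prevalence)
print(direct, via_rates)
```

The two values agree up to floating-point rounding, since sensitivity × prevalence is the share of true positives and specificity × (1 − prevalence) is the share of true negatives.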

The accuracy paradox for predictive analytics states that predictive models with a given level of accuracy may have greater predictive power than models with higher accuracy. It may be better to avoid the accuracy metric in favor of other metrics such as precision and recall.
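A small numerical illustration of the paradox, using invented counts: in a population of 1,000 with only 10 positive cases, a model that always predicts "negative" scores higher accuracy than a model that actually finds most of the positives. The two models and the helper name `metrics` are hypothetical; recall is taken here as true positives divided by all actual positives.

```python
def metrics(tp: int, fp: int, fn: int, tn: int) -> dict:
    """Return accuracy, precision and recall; a ratio with a zero denominator is None."""
    total = tp + fp + fn + tn
    return {
        "accuracy": (tp + tn) / total,
        "precision": tp / (tp + fp) if (tp + fp) else None,
        "recall": tp / (tp + fn) if (tp + fn) else None,
    }

# Model A: predicts "negative" for everyone, so it never finds a positive case.
always_negative = metrics(tp=0, fp=0, fn=10, tn=990)
# Model B: finds 8 of the 10 positives at the cost of 30 false alarms.
screening = metrics(tp=8, fp=30, fn=2, tn=960)

print("always negative:", always_negative)
print("screening:      ", screening)
```

Model A reaches 99% accuracy with zero recall, while Model B has lower accuracy (96.8%) but 80% recall, which is why accuracy alone can be a misleading basis for comparison when the classes are unbalanced.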

Accuracy and precision in psychometrics and psychophysics

In psychometrics and psychophysics, the term accuracy is used interchangeably with validity and constant error. Precision is a synonym for reliability and variable error. The validity of a measurement instrument or psychological test is established through experiment or correlation with behavior. Reliability is established with a variety of statistical techniques, classically through an internal consistency test like Cronbach's alpha to ensure sets of related questions have related responses, and then comparison of those related questions between reference and target populations.
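As an illustration of an internal consistency test, Cronbach's alpha can be computed from a table of item scores using the standard formula alpha = k/(k − 1) × (1 − sum of item variances / variance of total scores), where k is the number of items. The sketch below assumes sample variances throughout; the function name and the questionnaire data are hypothetical.

```python
import statistics

def cronbach_alpha(scores):
    """Cronbach's alpha for a list of respondents, each a list of k item scores."""
    k = len(scores[0])
    if k < 2 or len(scores) < 2:
        raise ValueError("need at least two items and two respondents")
    # Variance of each item (column) across respondents.
    item_variances = [statistics.variance(column) for column in zip(*scores)]
    # Variance of each respondent's total score.
    total_variance = statistics.variance([sum(row) for row in scores])
    return (k / (k - 1)) * (1 - sum(item_variances) / total_variance)

# Hypothetical responses of five people to three related questions (1 to 5 scale).
responses = [
    [4, 5, 4],
    [2, 2, 3],
    [5, 5, 5],
    [3, 3, 2],
    [1, 2, 1],
]
print(f"alpha = {cronbach_alpha(responses):.3f}")
```

Values close to 1 indicate that the items vary together across respondents; if every respondent gave the same score to all items, the formula yields exactly 1.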

Accuracy and precision in logic simulation

In logic simulation, a common mistake in evaluation of accurate models is to compare a logic simulation model to a transistor circuit simulation model. This is a comparison of differences in precision, not accuracy. Precision is measured with respect to detail and accuracy is measured with respect to reality.[2][3]

Accuracy and precision in information systems

The concepts of accuracy and precision have also been studied in the context of databases, information systems and their sociotechnical context. The necessary extension of these two concepts on the basis of theory of science suggests that they (as well as data quality and information quality) should be centered on accuracy, defined as the closeness to the true value, seen as the degree of agreement of readings or of calculated values of the same conceived entity, measured or calculated by different methods, in the context of maximum possible disagreement.[4]

See also

- Accuracy class

- ANOVA Gauge R&R

- ASTM E177 Standard Practice for Use of the Terms Precision and Bias in ASTM Test Methods

- Data quality

- Experimental uncertainty analysis

- Failure assessment

- Gain (information retrieval)

- Information quality

- Precision bias

- Precision engineering

- Precision (statistics)

- Sensitivity and specificity

- Measurement resolution

References

- [1] John Robert Taylor (1999). An Introduction to Error Analysis: The Study of Uncertainties in Physical Measurements. University Science Books. pp. 128–129. ISBN 093570275X. http://books.google.com/books?id=giFQcZub80oC&pg=PA128.

- [2] John M. Acken (1997). Encyclopedia of Computer Science and Technology, Vol. 36, pp. 281–306.

- [3] Mark Glasser, Rob Mathews, and John M. Acken (June 1990). 1990 Workshop on Logic-Level Modelling for ASICS. SIGDA Newsletter, Vol. 20, No. 1.

- [4] Ivanov, K. (1972). "Quality-control of information: On the concept of accuracy of information in data banks and in management information systems". The University of Stockholm and The Royal Institute of Technology. Doctoral dissertation. Further details are found in Ivanov, K. (1995). A subsystem in the design of informatics: Recalling an archetypal engineer. In B. Dahlbom (Ed.), The infological equation: Essays in honor of Börje Langefors (pp. 287–301). Gothenburg: Gothenburg University, Dept. of Informatics (ISSN 1101-7422).

External links

- BIPM - Guides in metrology - Guide to the Expression of Uncertainty in Measurement (GUM) and International Vocabulary of Metrology (VIM)

- Precision and Accuracy with Three Psychophysical Methods

- Guidelines for Evaluating and Expressing the Uncertainty of NIST Measurement Results, Appendix D.1: Terminology

- Accuracy and Precision