Travelling salesman problem

The travelling salesman problem (TSP) in operations research is a problem in discrete or combinatorial optimization. It is a prominent illustration of a class of problems in computational complexity theory which are classified as NP-hard.

The problem is: given a number of cities and the costs of travelling from any city to any other city, what is the least-cost round-trip route that visits each city exactly once and then returns to the starting city?

Problem statement

Given a number of cities and the costs of travelling from any city to any other city, what is the least-cost round-trip route that visits each city exactly once and then returns to the starting city?

Observation

The size of the solution space is (n - 1)!/2 for n > 2, where n is the number of cities. This is the number of Hamiltonian cycles in a complete graph of n nodes, that is, closed paths that visit all nodes exactly once.
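As a small check of this count, the following Python sketch enumerates the tours on n labelled cities, treats a tour and its reversal as the same cycle, and compares the result with (n - 1)!/2. The function name count_tours is an illustrative choice and is only practical for very small n.

```python
from itertools import permutations
from math import factorial


def count_tours(n):
    """Count distinct undirected Hamiltonian cycles on n labelled cities."""
    seen = set()
    for perm in permutations(range(1, n)):
        tour = (0,) + perm            # fix city 0 as the starting city
        rev = (0,) + perm[::-1]       # the same cycle walked backwards
        seen.add(min(tour, rev))      # keep one representative per cycle
    return len(seen)


if __name__ == "__main__":
    for n in range(3, 8):
        print(n, count_tours(n), factorial(n - 1) // 2)
```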

Related problems

- An equivalent formulation in terms of graph theory is: Given a complete weighted graph (where the vertices would represent the cities, the edges would represent the roads, and the weights would be the cost or distance of that road), find a Hamiltonian cycle with the least weight.

- The requirement of returning to the starting city does not change the computational complexity of the problem, see Hamiltonian path problem.

- Another related problem is the bottleneck travelling salesman problem (bottleneck TSP): Find a Hamiltonian cycle in a weighted graph with the minimal weight of the weightiest edge. The problem is of considerable practical importance, apart from evident transportation and logistics areas. A classic example is in printed circuit manufacturing: scheduling of a route of the drill machine to drill holes in a PCB. In robotic machining or drilling applications, the "cities" are parts to machine or holes (of different sizes) to drill, and the "cost of travel" includes time for retooling the robot (single machine job sequencing problem).

- The generalized travelling salesman problem deals with "states" that have (one or more) "cities" and the salesman has to visit exactly one "city" from each "state". Also known as the "travelling politician problem". One application is encountered in ordering a solution to the cutting stock problem in order to minimise knife changes. Another is concerned with drilling in semiconductor manufacturing, see e.g. U.S. Patent 7,054,798. Surprisingly, Behzad and Modarres[1] demonstrated that the generalised travelling salesman problem can be transformed into a standard travelling salesman problem with the same number of cities, but a modified distance matrix.

History

Mathematical problems related to the travelling salesman problem were treated in the 1800s by the Irish mathematician Sir William Rowan Hamilton and by the British mathematician Thomas Kirkman. A discussion of the early work of Hamilton and Kirkman can be found in Graph Theory 1736-1936.[2] The general form of the TSP appears to have been first studied by mathematicians during the 1930s in Vienna and at Harvard, notably by Karl Menger.

The problem was later undertaken by Hassler Whitney and Merrill M. Flood at Princeton University. A detailed treatment of the connection between Menger and Whitney as well as the growth in the study of TSP can be found in Alexander Schrijver's 2005 paper "On the history of combinatorial optimization (till 1960)".[3]

NP-hardness

The problem has been shown to be NP-hard (more precisely, it is complete for the complexity class FP^NP; see function problem), and the decision problem version ("given the costs and a number x, decide whether there is a round-trip route cheaper than x") is NP-complete. The bottleneck travelling salesman problem is also NP-hard. The problem remains NP-hard even for the case when the cities are in the plane with Euclidean distances, as well as in a number of other restrictive cases. Removing the condition of visiting each city "only once" does not remove the NP-hardness, since it is easily seen that in the planar case an optimal tour visits cities only once (otherwise, by the triangle inequality, a shortcut that skips a repeated visit would decrease the tour length).

Algorithms

The traditional lines of attack for NP-hard problems are the following:

- Devising algorithms for finding exact solutions (they will work reasonably fast only for relatively small problem sizes).

- Devising "suboptimal" or heuristic algorithms, i.e., algorithms that deliver either seemingly or probably good solutions, but which could not be proved to be optimal.

- Finding special cases for the problem ("subproblems") for which either better heuristics or exact algorithms are possible.

For benchmarking of TSP algorithms, TSPLIB, a library of sample instances of the TSP and related problems, is maintained; see the TSPLIB external reference. Many of the instances are lists of actual cities and layouts of actual printed circuits.

Exact algorithms

The most direct solution would be to try all the permutations (ordered combinations) and see which one is cheapest (using brute force search), but given that the number of permutations is n! (the factorial of the number of cities, n), this solution rapidly becomes impractical. Using the techniques of dynamic programming, one can solve the problem in time O(n^2 2^n) [4]. Although this is exponential, it is still much better than O(n!).
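The two exact approaches just described can be sketched in Python as follows. This is a minimal illustration, assuming the instance is given as a square cost matrix d with at least two cities, where d[i][j] is the cost of travelling from city i to city j. The names brute_force and held_karp are illustrative; the second follows the dynamic programming idea of Held and Karp and returns only the optimal cost.

```python
from itertools import combinations, permutations


def brute_force(d):
    """Try every ordering of cities 1..n-1 after the fixed start city 0."""
    n = len(d)
    best_cost, best_tour = float("inf"), None
    for perm in permutations(range(1, n)):
        tour = (0,) + perm
        cost = sum(d[tour[k]][tour[(k + 1) % n]] for k in range(n))
        if cost < best_cost:
            best_cost, best_tour = cost, tour
    return best_cost, best_tour


def held_karp(d):
    """Dynamic programming over subsets of cities.

    best[(mask, j)] is the cheapest cost of a path that starts at city 0,
    visits exactly the cities in the bitmask `mask`, and ends at city j.
    """
    n = len(d)
    best = {(1 << j, j): d[0][j] for j in range(1, n)}
    for size in range(2, n):
        for subset in combinations(range(1, n), size):
            mask = sum(1 << j for j in subset)
            for j in subset:
                prev = mask & ~(1 << j)   # same set without the end city j
                best[(mask, j)] = min(
                    best[(prev, k)] + d[k][j] for k in subset if k != j
                )
    full = (1 << n) - 2                   # every city except city 0
    return min(best[(full, j)] + d[j][0] for j in range(1, n))


if __name__ == "__main__":
    d = [[0, 10, 15, 20],
         [10, 0, 35, 25],
         [15, 35, 0, 30],
         [20, 25, 30, 0]]
    print(brute_force(d), held_karp(d))
```

The table in held_karp has on the order of n 2^n entries, so memory rather than time is often the practical limit of this sketch.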

Other approaches include:

- Various branch-and-bound algorithms, which can be used to process TSPs containing 40-60 cities.

- Progressive improvement algorithms which use techniques reminiscent of linear programming. These work well for up to 200 cities.

- Implementations of branch-and-bound combined with problem-specific cut generation (branch-and-cut); this is the method of choice for solving large instances. This approach holds the current record, solving an instance with 85,900 cities, see below.[5]

An exact solution for 15,112 German towns from TSPLIB was found in 2001 using the cutting-plane method proposed by George Dantzig, Ray Fulkerson, and Selmer Johnson in 1954, based on linear programming. The computations were performed on a network of 110 processors located at Rice University and Princeton University (see the Princeton external link). The total computation time was equivalent to 22.6 years on a single 500 MHz Alpha processor. In May 2004, the travelling salesman problem of visiting all 24,978 towns in Sweden was solved: a tour of length approximately 72,500 kilometers was found and it was proven that no shorter tour exists.[6]

In March 2005, the travelling salesman problem of visiting all 33,810 points in a circuit board was solved using CONCORDE: a tour of length 66,048,945 units was found and it was proven that no shorter tour exists. The computation took approximately 15.7 CPU years (Cook et al. 2006). In April 2006 an instance with 85,900 points was solved using CONCORDE, taking over 136 CPU years, see the book by Applegate et al [2006] [7].

Heuristics

Various approximation algorithms, which quickly yield good solutions with high probability, have been devised. Modern methods can find solutions for extremely large problems (millions of cities) within a reasonable time which are with a high probability just 2-3% away from the optimal solution.

Several categories of heuristics are recognized.

Constructive heuristics

The nearest neighbour (NN) algorithm (the so-called greedy algorithm is similar, but slightly different) lets the salesman start from any one city and choose the nearest city not visited yet to be his next visit. This algorithm quickly yields an effectively short route.

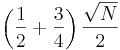

Rosenkrantz et al. [1977] showed that the NN algorithm has the approximation factor Θ(log n) for instances satisfying the triangle inequality. More precisely, the ratio of the NN tour length to the optimal tour length is always <= 0.5*(⌈log2(n)⌉+1), where n is the number of cities (Levitin, 2003).

For each n>1, there exist infinitely many examples for which the NN (greedy algorithm) gives the longest possible route (Gutin, Yeo, and Zverovich, 2002). This is true for both asymmetric and symmetric TSPs (Gutin and Yeo, 2007).
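The nearest neighbour rule described above can be sketched in a few lines of Python. This is an illustrative version, assuming a cost matrix d as input; ties are broken by the lower city index, which is a choice made here and not part of the heuristic itself.

```python
def tour_length(d, tour):
    """Length of the closed tour, including the return to the first city."""
    n = len(tour)
    return sum(d[tour[k]][tour[(k + 1) % n]] for k in range(n))


def nearest_neighbour_tour(d, start=0):
    """Repeatedly move to the closest city that has not been visited yet."""
    unvisited = set(range(len(d))) - {start}
    tour = [start]
    while unvisited:
        here = tour[-1]
        nxt = min(unvisited, key=lambda c: (d[here][c], c))
        tour.append(nxt)
        unvisited.remove(nxt)
    return tour


if __name__ == "__main__":
    d = [[0, 10, 15, 20],
         [10, 0, 35, 25],
         [15, 35, 0, 30],
         [20, 25, 30, 0]]
    tour = nearest_neighbour_tour(d)
    print(tour, tour_length(d, tour))
```

Running the heuristic once from every start city and keeping the best tour is a cheap way to reduce its sensitivity to the starting point.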

A more recent constructive heuristic, Match Twice and Stitch (MTS), was proposed by Kahng and Reda in 2004 [8]. MTS has been shown empirically to outperform all existing tour construction heuristics. MTS performs two sequential matchings, where the second matching is executed after deleting all the edges of the first matching, to yield a set of cycles. The cycles are then stitched to produce the final tour.

Iterative improvement

- Pairwise exchange, or Lin-Kernighan heuristics.

- The pairwise exchange or '2-opt' technique involves iteratively removing two edges and replacing these with two different edges that reconnect the fragments created by edge removal into a new and more optimal tour. This is a special case of the k-opt method. Note that the label 'Lin-Kernighan' is an often heard misnomer for 2-opt. Lin-Kernighan is actually a more general method.

- k-opt heuristic

- Take a given tour and delete k mutually disjoint edges. Reassemble the remaining fragments into a tour, leaving no disjoint subtours (that is, don't connect a fragment's endpoints together). This in effect simplifies the TSP under consideration into a much simpler problem. Each fragment endpoint can be connected to 2k − 2 other possibilities: of 2k total fragment endpoints available, the two endpoints of the fragment under consideration are disallowed. Such a constrained 2k-city TSP can then be solved with brute force methods to find the least-cost recombination of the original fragments. The k-opt technique is a special case of the V-opt or variable-opt technique. The most popular of the k-opt methods are 3-opt, and these were introduced by Shen Lin of Bell Labs in 1965. There is a special case of 3-opt where the edges are not disjoint (two of the edges are adjacent to one another). In practice, it is often possible to achieve substantial improvement over 2-opt without the combinatorial cost of the general 3-opt by restricting the 3-changes to this special subset where two of the removed edges are adjacent. This so called two-and-a-half-opt typically falls roughly midway between 2-opt and 3-opt both in terms of the quality of tours achieved and the time required to achieve those tours.

- V-opt heuristic

- The variable-opt method is related to, and a generalization of, the k-opt method. Whereas the k-opt methods remove a fixed number (k) of edges from the original tour, the variable-opt methods do not fix the size of the edge set to remove. Instead they grow the set as the search process continues. The best known method in this family is the Lin-Kernighan method (mentioned above as a misnomer for 2-opt). Shen Lin and Brian Kernighan first published their method in 1972, and it was the most reliable heuristic for solving travelling salesman problems for nearly two decades. More advanced variable-opt methods were developed at Bell Labs in the late 1980s by David Johnson and his research team. These methods (sometimes called Lin-Kernighan-Johnson) build on the Lin-Kernighan method, adding ideas from tabu search and evolutionary computing. The basic Lin-Kernighan technique gives results that are guaranteed to be at least 3-opt. The Lin-Kernighan-Johnson methods compute a Lin-Kernighan tour, and then perturb the tour by what has been described as a mutation that removes at least four edges and reconnects the tour in a different way, then V-opt the new tour. The mutation is often enough to move the tour out of the local minimum identified by Lin-Kernighan. V-opt methods are widely considered the most powerful heuristics for the problem, and are able to address special cases, such as the Hamiltonian cycle problem and other non-metric TSPs, that other heuristics fail on. For many years Lin-Kernighan-Johnson had identified optimal solutions for all TSPs where an optimal solution was known and had identified the best known solutions for all other TSPs on which the method had been tried.
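The pairwise exchange (2-opt) move from the list above can be sketched as follows. This is a minimal version, assuming symmetric costs (d[i][j] == d[j][i]); it applies any improving exchange it finds and stops when no pair of edges can be exchanged profitably. The function names are illustrative.

```python
from math import dist


def tour_length(d, tour):
    n = len(tour)
    return sum(d[tour[k]][tour[(k + 1) % n]] for k in range(n))


def two_opt(d, tour):
    """Remove two edges and reconnect by reversing the segment between them."""
    tour = list(tour)
    n = len(tour)
    improved = True
    while improved:
        improved = False
        for i in range(n - 1):
            for j in range(i + 2, n):
                if i == 0 and j == n - 1:
                    continue  # these two edges are adjacent (share tour[0])
                a, b = tour[i], tour[i + 1]
                c, e = tour[j], tour[(j + 1) % n]
                # Replace edges (a, b) and (c, e) by (a, c) and (b, e).
                delta = d[a][c] + d[b][e] - d[a][b] - d[c][e]
                if delta < -1e-12:
                    tour[i + 1:j + 1] = reversed(tour[i + 1:j + 1])
                    improved = True
    return tour


if __name__ == "__main__":
    # Four corners of a unit square, visited in a self-crossing order.
    pts = [(0, 0), (1, 1), (1, 0), (0, 1)]
    d = [[dist(p, q) for q in pts] for p in pts]
    better = two_opt(d, [0, 1, 2, 3])
    print(better, tour_length(d, better))
```

The result is only a local optimum with respect to 2-opt moves; the k-opt and variable-opt methods described above search larger neighbourhoods.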

Randomised improvement

- Optimised Markov chain algorithms which use local searching heuristic sub-algorithms can find a route extremely close to the optimal route for 700 to 800 cities.

- Random path change algorithms are currently the state-of-the-art search algorithms and work up to 100,000 cities. The concept is quite simple: choose a random path, choose four nearby points, and swap their order to create a new random path, while in parallel decreasing the upper bound of the path length. If this is repeated until a certain number of trial path changes fail due to the upper bound, one has found a local minimum with high probability, and further it is a global minimum with high probability (where high means that the residual probability decreases exponentially in the size of the problem; thus for 10,000 or more nodes, the chance of failure is negligible).

TSP is a touchstone for many general heuristics devised for combinatorial optimization such as genetic algorithms, simulated annealing, Tabu search, ant colony optimization, and the cross entropy method.

Example letting the inversion operator find a good solution

Suppose that the number of towns is sixty. For a random search process, this is like having a deck of cards numbered 1, 2, 3, ... 59, 60 where the number of permutations is of the same order of magnitude as the total number of atoms in the known universe. If the hometown is not counted the number of possible tours becomes 60*59*58*...*4*3 (about 10^80, i.e. a 1 followed by 80 zeros).

Suppose that the salesman does not have a map showing the location of the towns, but only a deck of numbered cards, which he may permute, put in a card reader - as in early computers - and let the computer calculate the length of the tour. The probability of finding the shortest tour by random permutation is about one in 10^80, so it will never happen. So, should he give up?

No, by no means, evolution may be of great help to him; at least if it could be simulated on his computer. The natural evolution uses an inversion operator, which - in principle - is extremely well suited for finding good solutions to the problem. A part of the card deck (DNA) - chosen at random - is taken out, turned in opposite direction and put back in the deck again like in the figure below with 6 towns. The hometown (nr 1) is not counted.

If this inversion takes place where the tour happens to have a loop, then the loop is opened and the salesman is guaranteed a shorter tour. The probability that this will happen is greater than 1/(60*60) for any loop if we have 60 towns, so, in a population with one million card decks it might happen 1000000/3600 = 277 times that a loop will disappear.

This has been simulated with a population of 180 card decks, from which 60 decks are selected in every generation. The figure below shows a random tour at start.

After about 1500 generations all loops have been removed and the length of the random tour at start has been reduced to 1/5 of the original tour. The human eye can see that some improvements can be made, but probably the random search has found a tour, which is not much longer than the shortest possible. See figure below.

In a special case when all towns are equidistantly placed along a circle, the optimal solution is found when all loops have been removed. This means that this simple random search is able to find one optimal tour out of as many as 10^80. See also Goldberg, 1989.
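A simulation of this kind can be sketched as below. The population size of 180 and the selection of 60 decks per generation come from the text; everything else is an assumption made for illustration: the selected decks are carried over unchanged, each remaining slot is filled by one inversion of a randomly chosen selected deck, and the helper names (circle_distances, invert, evolve) are hypothetical.

```python
import math
import random


def circle_distances(n):
    """Distance matrix for n towns placed equidistantly on a unit circle."""
    pts = [(math.cos(2 * math.pi * k / n), math.sin(2 * math.pi * k / n))
           for k in range(n)]
    return [[math.dist(p, q) for q in pts] for p in pts]


def tour_length(d, deck):
    """Length of the round trip: hometown 0, then the deck, then back home."""
    tour = [0] + list(deck)
    n = len(tour)
    return sum(d[tour[k]][tour[(k + 1) % n]] for k in range(n))


def invert(deck, rng):
    """Take out a random part of the deck, reverse it, and put it back."""
    i, j = sorted(rng.sample(range(len(deck)), 2))
    return deck[:i] + deck[i:j + 1][::-1] + deck[j + 1:]


def evolve(d, generations, rng, pop_size=180, keep=60):
    n = len(d)
    # The hometown (city 0) is not part of the deck.
    population = [rng.sample(range(1, n), n - 1) for _ in range(pop_size)]
    for _ in range(generations):
        population.sort(key=lambda deck: tour_length(d, deck))
        parents = population[:keep]
        children = [invert(rng.choice(parents), rng)
                    for _ in range(pop_size - keep)]
        population = parents + children
    return min(population, key=lambda deck: tour_length(d, deck))


if __name__ == "__main__":
    d = circle_distances(20)
    best = evolve(d, generations=300, rng=random.Random(1))
    print(best, tour_length(d, best))
```

Because the selected decks survive unchanged, the best tour length never gets worse from one generation to the next in this sketch.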

Ant colony optimization

Artificial intelligence researcher Marco Dorigo described in 1997 a method of heuristically generating "good solutions" to the TSP using a simulation of an ant colony called ACS.[9] It uses some of the same ideas used by real ants to find short paths between food sources and their nest, an emergent behavior resulting from each ant's preference to follow trail pheromones deposited by other ants.

ACS sends out a large number of virtual ant agents to explore many possible routes on the map. Each ant probabilistically chooses the next city to visit based on a heuristic combining the distance to the city and the amount of virtual pheromone deposited on the edge to the city. The ants explore, depositing pheromone on each edge that they cross, until they have all completed a tour. At this point the ant which completed the shortest tour deposits virtual pheromone along its complete tour route (global trail updating). The amount of pheromone deposited is inversely proportional to the tour length; the shorter the tour, the more it deposits.
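The loop described above can be illustrated with a heavily simplified sketch. It is not the ACS algorithm as published: it keeps only the probabilistic city choice (pheromone times inverse distance) and the global update by the best tour found so far, and it omits the per-edge deposit made while the ants are exploring. The parameters beta and rho, the initial pheromone value 1.0, and the symmetric deposit are assumptions made for this example, and all distances between distinct cities are assumed to be positive.

```python
import random


def ant_colony(d, n_ants, n_iters, rng, beta=2.0, rho=0.1):
    n = len(d)
    tau = [[1.0] * n for _ in range(n)]       # virtual pheromone per edge
    best_tour, best_len = None, float("inf")
    for _ in range(n_iters):
        for _ in range(n_ants):
            tour = [rng.randrange(n)]
            unvisited = set(range(n)) - {tour[0]}
            while unvisited:
                here = tour[-1]
                cand = sorted(unvisited)
                # Prefer edges that are short and carry more pheromone.
                weights = [tau[here][c] * (1.0 / d[here][c]) ** beta
                           for c in cand]
                nxt = rng.choices(cand, weights=weights)[0]
                tour.append(nxt)
                unvisited.remove(nxt)
            length = sum(d[tour[k]][tour[(k + 1) % n]] for k in range(n))
            if length < best_len:
                best_tour, best_len = tour, length
        # Evaporation on every edge, then a deposit along the best tour
        # that is inversely proportional to its length.
        for i in range(n):
            for j in range(n):
                tau[i][j] *= 1.0 - rho
        for k in range(n):
            a, b = best_tour[k], best_tour[(k + 1) % n]
            tau[a][b] += 1.0 / best_len
            tau[b][a] += 1.0 / best_len
    return best_tour, best_len


if __name__ == "__main__":
    d = [[0, 10, 15, 20],
         [10, 0, 35, 25],
         [15, 35, 0, 30],
         [20, 25, 30, 0]]
    print(ant_colony(d, n_ants=5, n_iters=20, rng=random.Random(0)))
```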

Special cases

Metric TSP

A very natural restriction of the TSP is to require that the distances between cities form a metric, i.e., they satisfy the triangle inequality. That is, for any 3 cities A, B and C, the distance between A and C must be at most the distance from A to B plus the distance from B to C. Most natural instances of TSP satisfy this constraint.

In this case, there is a constant-factor approximation algorithm due to Christofides[10] that always finds a tour of length at most 1.5 times the shortest tour. In the next paragraphs, we explain a weaker (but simpler) algorithm which finds a tour of length at most twice the shortest tour.

The length of the minimum spanning tree of the network is a natural lower bound for the length of the optimal route. In the TSP with triangle inequality case it is possible to prove upper bounds in terms of the minimum spanning tree and design an algorithm that has a provable upper bound on the length of the route. The first published (and the simplest) example follows.

- Construct the minimum spanning tree.

- Duplicate all its edges. That is, wherever there is an edge from u to v, add a second edge from u to v. This gives us an Eulerian graph.

- Find an Eulerian cycle in it. Clearly, its length is twice the length of the tree.

- Convert the Eulerian cycle into the Hamiltonian one in the following way: walk along the Eulerian cycle, and each time you are about to come into an already visited vertex, skip it and try to go to the next one (along the Eulerian cycle).

It is easy to prove that the last step works. Moreover, thanks to the triangle inequality, each skipping at Step 4 is in fact a shortcut, i.e., the length of the cycle does not increase. Hence it gives us a TSP tour no more than twice as long as the optimal one.
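The four steps above can be sketched as follows, assuming a symmetric cost matrix d that satisfies the triangle inequality. The sketch uses the fact that a depth-first preorder walk of the minimum spanning tree visits the vertices in the same order as an Eulerian cycle of the doubled tree with repeated vertices skipped, so the doubling and the Eulerian cycle are not built explicitly. The function names are illustrative, and optimal_length is a brute-force helper included only to check the factor of two on small inputs.

```python
from itertools import permutations
from math import dist


def tour_length(d, tour):
    n = len(tour)
    return sum(d[tour[k]][tour[(k + 1) % n]] for k in range(n))


def double_tree_tour(d):
    n = len(d)
    # Step 1: minimum spanning tree rooted at city 0 (Prim's algorithm).
    in_tree = [False] * n
    cost = [float("inf")] * n
    parent = [None] * n
    children = [[] for _ in range(n)]
    cost[0] = 0.0
    for _ in range(n):
        u = min((v for v in range(n) if not in_tree[v]),
                key=lambda v: cost[v])
        in_tree[u] = True
        if parent[u] is not None:
            children[parent[u]].append(u)
        for v in range(n):
            if not in_tree[v] and d[u][v] < cost[v]:
                cost[v] = d[u][v]
                parent[v] = u
    # Steps 2-4: a preorder walk equals the Eulerian cycle of the doubled
    # tree with every already visited vertex skipped.
    tour, stack = [], [0]
    while stack:
        u = stack.pop()
        tour.append(u)
        stack.extend(reversed(children[u]))
    return tour


def optimal_length(d):
    """Brute-force optimum, usable only for a handful of cities."""
    n = len(d)
    return min(tour_length(d, (0,) + p) for p in permutations(range(1, n)))


if __name__ == "__main__":
    pts = [(0, 0), (0, 1), (1, 0), (1, 1), (2, 0), (2, 1)]
    d = [[dist(p, q) for q in pts] for p in pts]
    tour = double_tree_tour(d)
    print(tour, tour_length(d, tour), optimal_length(d))
```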

The Christofides algorithm follows a similar outline but combines the minimum spanning tree with a solution of another problem, minimum-weight perfect matching. This gives a TSP tour which is at most 1.5 times the optimal. The Christofides algorithm was one of the first approximation algorithms, and was in part responsible for drawing attention to approximation algorithms as a practical approach to intractable problems. As a matter of fact, the term "algorithm" was not commonly extended to approximation algorithms until later; the Christofides algorithm was initially referred to as the Christofides heuristic.

In the special case that distances between cities are all either one or two (and thus the triangle inequality is necessarily satisfied), there is a polynomial-time approximation algorithm that finds a tour of length at most 8/7 times the optimal tour length[11]. However, it is a long-standing (since 1975) open problem to improve the Christofides approximation factor of 1.5 for general metric TSP to a smaller constant. It is known that, unless P = NP, there is no polynomial-time algorithm that finds a tour of length at most 220/219=1.00456… times the optimal tour's length[12]. In the case of bounded metrics it is known that there is no polynomial time algorithm that constructs a tour of length at most 1/388 more than optimal, unless P = NP[13].

Euclidean TSP

Euclidean TSP, or planar TSP, is the TSP with the distance being the ordinary Euclidean distance.

Euclidean TSP is a particular case of TSP with triangle inequality, since distances in the plane obey the triangle inequality. However, it seems to be easier than general TSP with triangle inequality. For example, the minimum spanning tree of the graph associated with an instance of Euclidean TSP is a Euclidean minimum spanning tree, and so can be computed in expected O(n log n) time for n points (considerably less than the number of edges). This enables the simple 2-approximation algorithm for TSP with triangle inequality above to operate more quickly.

In general, for any c > 0, there is a polynomial-time algorithm that finds a tour of length at most (1 + 1/c) times the optimal for geometric instances of TSP in O(n (log n)^O(c)) time; this is called a polynomial-time approximation scheme[14]. In practice, heuristics with weaker guarantees continue to be used.

Asymmetric TSP

In most cases, the distance between two nodes in the TSP network is the same in both directions. The case where the distance from A to B is not equal to the distance from B to A is called asymmetric TSP. A practical application of an asymmetric TSP is route optimisation using street-level routing (asymmetric due to one-way streets, slip-roads and motorways).

Solving by conversion to Symmetric TSP

Solving an asymmetric TSP graph can be somewhat complex. The following is a 3x3 matrix containing all possible path weights between the nodes A, B and C. One option is to turn an asymmetric matrix of size N into a symmetric matrix of size 2N, doubling the complexity.

Asymmetric Path Weights

|   | A | B | C |
|---|---|---|---|
| A |   | 1 | 2 |
| B | 6 |   | 3 |
| C | 5 | 4 |   |

To double the size, each of the nodes in the graph is duplicated, creating a second ghost node. Linking each node to its ghost with a very low weight, such as -∞, provides a cheap route "linking" back to the real node and allows symmetric evaluation to continue. The original 3x3 matrix shown above is visible in the bottom left and the transpose of the original in the top-right. Both copies of the matrix have had their diagonals replaced by the low-cost hop paths, represented by -∞.

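Symmetric Path Weights

|    | A  | B  | C  | A' | B' | C' |
|----|----|----|----|----|----|----|
| A  |    |    |    | -∞ | 6  | 5  |
| B  |    |    |    | 1  | -∞ | 4  |
| C  |    |    |    | 2  | 3  | -∞ |
| A' | -∞ | 1  | 2  |    |    |    |
| B' | 6  | -∞ | 3  |    |    |    |
| C' | 5  | 4  | -∞ |    |    |    |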

The original 3x3 matrix would produce two Hamiltonian cycles (a path that visits every node once), namely A-B-C-A [score 9] and A-C-B-A [score 12]. Evaluating the 6x6 symmetric version of the same problem now produces tours that alternate between real and ghost nodes, such as A-A'-B-B'-C-C'-A, which corresponds to A-B-C-A [score 9 plus three -∞ hops].

The important thing about each new sequence is that there will be an alternation between dashed (A',B',C') and un-dashed nodes (A,B,C) and that the link to "jump" between any related pair (A-A') is effectively free. A version of the algorithm could use any weight for the A-A' path, as long as that weight is lower than all other path weights present in the graph. As the path weight to "jump" must effectively be "free", the value zero (0) could be used to represent this cost — if zero is not being used for another purpose already (such as designating invalid paths). In the two examples above, non-existent paths between nodes are shown as a blank square.
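The construction can be sketched in Python as follows. This is an illustrative version under stated assumptions: missing entries of the asymmetric matrix are given as None, forbidden edges of the symmetric matrix are stored as infinity, and the hop weight is a finite, strongly negative number (here -1000) instead of -∞ so that tour costs remain comparable. The function names are hypothetical, and the brute-force solver is included only to check the small example.

```python
from itertools import permutations

INF = float("inf")


def symmetrize(w, hop):
    """Turn an n x n asymmetric matrix into a 2n x 2n symmetric one.

    Node i is a real node and node n + i is its ghost.
    """
    n = len(w)
    sym = [[INF] * (2 * n) for _ in range(2 * n)]
    for i in range(n):
        sym[i][n + i] = sym[n + i][i] = hop       # cheap real-to-ghost hop
        for j in range(n):
            if i != j and w[i][j] is not None:
                # Bottom-left block holds w, top-right block its transpose.
                sym[n + i][j] = sym[j][n + i] = w[i][j]
    return sym


def brute_force(d):
    n = len(d)
    best_cost, best_tour = INF, None
    for perm in permutations(range(1, n)):
        tour = (0,) + perm
        cost = sum(d[tour[k]][tour[(k + 1) % n]] for k in range(n))
        if cost < best_cost:
            best_cost, best_tour = cost, tour
    return best_cost, best_tour


if __name__ == "__main__":
    w = [[None, 1, 2],
         [6, None, 3],
         [5, 4, None]]
    hop = -1000
    cost, tour = brute_force(symmetrize(w, hop))
    # Removing the three hops recovers the cost of the asymmetric tour.
    print(tour, cost - 3 * hop)
```

The hop weight has to be low enough that every optimal symmetric tour uses all n hops; otherwise the tour does not correspond to a directed cycle of the original problem.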

Human performance on TSP

The TSP, in particular the Euclidean variant of the problem, has attracted the attention of researchers in cognitive psychology. It is observed that humans are able to produce good quality solutions quickly. The first issue of the Journal of Problem Solving is devoted to the topic of human performance on TSP.

TSP path length

Many fast algorithms yield approximate TSP solutions for large numbers of cities. To get an idea of the precision of an approximation, one should measure the resulting path length and compare it to the exact path length. To estimate the exact path length, there are three approaches:

- find a lower bound for it,

- find an upper bound for it with CPU time T, then extrapolate T to infinity to obtain a reasonable guess of the exact value, or

- solve for the exact value without solving for the city sequence.

Lower bound

Consider N points randomly distributed in one unit square, with N>>1. A simple lower bound of the shortest path length is 0.5*√N, obtained by considering each point connected to its nearest neighbor, which is 1/(2√N) distance away on average.

Another lower bound is (1/4 + 3/8)*√N, obtained by considering each point j connected to j's nearest neighbor, and j's second nearest neighbor connected to j. Here j's nearest neighbor is 1/(2√N) distance away and j's second nearest neighbor is 3/(4√N) distance away on average.

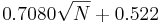

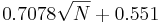

- David S. Johnson[15] obtained a lower bound by experiment:

- Christine L. Valenzuela and Antonia J. Jones [16] obtained a better lower bound by experiment:

See also

- Assignment problem

- Canadian traveller problem

- Greedy algorithm

- Route inspection problem

- Set TSP problem

- Seven Bridges of Königsberg

- Six degrees of separation

- Steiner tree problem

- Travelling repairman problem (Minimum latency problem)

- Travelling tourist problem

References

- Behzad, Arash; Modarres, Mohammad (2002), "New Efficient Transformation of the Generalized Traveling Salesman Problem into Traveling Salesman Problem", Proceedings of the 15th International Conference of Systems Engineering (Las Vegas)

- N.L. Biggs, E.K. Lloyd, and R.J. Wilson, Graph Theory 1736-1936, Clarendon Press, Oxford, 1976.

- Schrijver, Alexander. "On the history of combinatorial optimization (till 1960)," Handbook of Discrete Optimization (K. Aardal, G.L. Nemhauser, R. Weismantel, eds.), Elsevier, Amsterdam, 2005, pp. 1-68.

- Held, Michael; Karp, Richard M. (1962), "A Dynamic Programming Approach to Sequencing Problems", Journal of the Society for Industrial and Applied Mathematics 10 (1): 196–210.

- Applegate, D. L.; Bixby, R. E.; Chvátal, V.; Cook, W. J. (2006), The Traveling Salesman Problem.

- Work by David Applegate (AT&T Labs - Research), Robert Bixby (ILOG and Rice University), Vašek Chvátal (Concordia University), William Cook (Georgia Tech), and Keld Helsgaun (Roskilde University) is discussed on their project web page, hosted by Georgia Tech and last updated in June 2004.

- Applegate, D. L.; Bixby, R. E.; Chvátal, V.; Cook, W. J. (2006), The Traveling Salesman Problem.

- A. B. Kahng and S. Reda, "Match Twice and Stitch: A New TSP Tour Construction Heuristic," Operations Research Letters, 2004, 32(6). pp. 499-509. http://www.sciencedirect.com/science?_ob=GatewayURL&_method=citationSearch&_uoikey=B6V8M-4CKFN5S-4&_origin=SDEMFRASCII&_version=1&md5=04d492ab46c07b9911e230ebecd0f70d

- Marco Dorigo. Ant Colonies for the Traveling Salesman Problem. IRIDIA, Université Libre de Bruxelles. IEEE Transactions on Evolutionary Computation, 1(1):53–66. 1997. http://citeseer.ist.psu.edu/86357.html

- N. Christofides, Worst-case analysis of a new heuristic for the traveling salesman problem, Report 388, Graduate School of Industrial Administration, Carnegie Mellon University, 1976.

- P. Berman and M. Karpinski (2006). "8/7-Approximation Algorithm for (1,2)-TSP", Proc. 17th ACM-SIAM SODA (2006), pp. 641-648.

- C.H. Papadimitriou and Santosh Vempala (2000). "On the approximability of the traveling salesman problem", Proceedings of the 32nd Annual ACM Symposium on Theory of Computing, 2000.

- L. Engebretsen, M. Karpinski, Approximation hardness of TSP with bounded metrics, Proceedings of 28th ICALP (2001), LNCS 2076, Springer 2001, pp. 201-212.

- Sanjeev Arora. Polynomial Time Approximation Schemes for Euclidean Traveling Salesman and other Geometric Problems. Journal of the ACM, Vol.45, Issue 5, pp.753–782. ISSN:0004-5411. September 1998. http://citeseer.ist.psu.edu/arora96polynomial.html

- David S. Johnson

- Christine L. Valenzuela and Antonia J. Jones

Further reading

- Gilbert Babin, Stéphanie Deneault and Gilbert Laporte (2005). "Improvements to the Or-opt Heuristic for the Symmetric Traveling Salesman Problem".

- D.L. Applegate, R.E. Bixby, V. Chvátal and W.J. Cook (2006). The Traveling Salesman Problem: A Computational Study. Princeton University Press. ISBN 978-0-691-12993-8.

- S. Arora (1998). "Polynomial Time Approximation Schemes for Euclidean Traveling Salesman and other Geometric Problems". Journal of ACM, 45 (1998), pp. 753-782.

- William Cook, Daniel Espinoza, Marcos Goycoolea (2006). Computing with domino-parity inequalities for the TSP. INFORMS Journal on Computing. Accepted.

- Thomas H. Cormen, Charles E. Leiserson, Ronald L. Rivest, and Clifford Stein (2001). Introduction to Algorithms, Second Edition. MIT Press and McGraw-Hill. ISBN 0-262-03293-7. Section 35.2: The traveling-salesman problem, pp. 1027–1033.

- G.B. Dantzig, R. Fulkerson, and S. M. Johnson, Solution of a large-scale traveling salesman problem, Operations Research 2 (1954), pp. 393-410.

- Michael R. Garey and David S. Johnson (1979). Computers and Intractability: A Guide to the Theory of NP-Completeness. W.H. Freeman. ISBN 0-7167-1045-5. A2.3: ND22–24, pp.211–212.

- D.E. Goldberg (1989). Genetic Algorithms in Search, Optimization & Machine Learning. Addison-Wesley, New York, 1989.

- G. Gutin, A. Yeo and A. Zverovich, Traveling salesman should not be greedy: domination analysis of greedy-type heuristics for the TSP. Discrete Applied Mathematics 117 (2002), 81-86.

- G. Gutin and A.P. Punnen (2006). The Traveling Salesman Problem and Its Variations. Springer. ISBN 0-387-44459-9.

- D.S. Johnson & L.A. McGeoch (1997). The Traveling Salesman Problem: A Case Study in Local Optimization, Local Search in Combinatorial Optimisation, E. H. L. Aarts and J.K. Lenstra (ed), John Wiley and Sons Ltd, 1997, pp. 215-310.

- E. L. Lawler and Jan Karel Lenstra and A. H. G. Rinnooy Kan and D. B. Shmoys (1985). The Traveling Salesman Problem: A Guided Tour of Combinatorial Optimization. John Wiley & Sons. ISBN 0-471-90413-9.

- J.N. MacGregor & T. Ormerod (1996). Human performance on the traveling salesman problem. Perception & Psychophysics, 58(4), pp. 527–539.

- J. Mitchell (1999). "Guillotine subdivisions approximate polygonal subdivisions: A simple polynomial-time approximation scheme for geometric TSP, k-MST, and related problems", SIAM Journal on Computing, 28 (1999), pp. 1298–1309.

- S. Rao, W. Smith. Approximating geometrical graphs via 'spanners' and 'banyans'. Proc. 30th Annual ACM Symposium on Theory of Computing, 1998, pp. 540-550.

- Daniel J. Rosenkrantz and Richard E. Stearns and Philip M. Lewis II (1977). "An Analysis of Several Heuristics for the Traveling Salesman Problem". SIAM J. Comput. 6 (5): 563–581.

- D. Vickers, M. Butavicius, M. Lee, & A. Medvedev (2001). Human performance on visually presented traveling salesman problems. Psychological Research, 65, pp. 34–45.

External links

- Applet for TSP solving using genetic algorithm

- Approximate Solution to Travelling Salesman Problem using Kohonen Self-Organizing Maps

- Demo applet of a evolutionary algorithm for solving TSP's and VRPTW problems

- Flash Demo solving random TSP's with next neighbour, nearest Insert, farthest Insert and cheapest Insert heuristics

- Georgia Institute of Technology Home of the Concorde TSP Solver, an ANSI C library dedicated to solving the TSP problem.

- Gerhard Reinelt TSPLIB Databases

- Human TSP Solver Web application for crowdsourcing instances of the TSP.

- Kohonen Neural Network applied to the Travelling Salesman Problem (using three dimensions).

- R-package TSP Infrastructure for the travelling salesman problem for R.

- Solution of the Travelling Salesman Problem using a Kohonen Map

- Solving TSP through BOINC.

- Travelling Salesman Problem at Georgia Tech

- Travelling Salesman Problem by Jon McLoone based on a program by Stephen Wolfram, after work by Stan Wagon, Wolfram Demonstrations Project.

- TSP Flaming Solution of the Travelling Salesman Problem using Simulated Annealing.