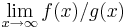

Big O notation

In mathematics, big O notation (so called because it uses the symbol O) describes the limiting behavior of a function for very small or very large arguments, usually in terms of simpler functions. It is also called Big Oh notation, Landau notation, Bachmann-Landau notation, and asymptotic notation. There are also other symbols o, Ω, ω, and Θ for related bounds.

Although developed as a part of pure mathematics, it is now frequently also used in computational complexity theory to describe how the size of the input data affects an algorithm's usage of computational resources (usually running time or memory). It is also used in many other fields to provide similar estimates.


Formal definition

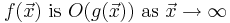

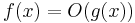

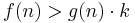

Suppose  and

and  are two functions defined on some subset of the real numbers. We say

are two functions defined on some subset of the real numbers. We say

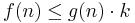

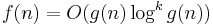

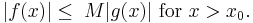

) if and only if there exists a positive real number M and a real number

) if and only if there exists a positive real number M and a real number  such that for all x,

such that for all x, , wherever

, wherever

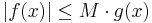

if and only if there exists a positive real number M and a real number  such that

such that  for

for

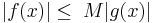

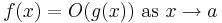

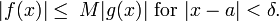

The notation can also be used to describe the behavior of f near some real number a: we say  if and only if there exist positive numbers δ and M such that

if and only if there exist positive numbers δ and M such that

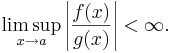

If  is non-zero for values of x sufficiently close to a, both of these definitions can be unified using the limit superior:

is non-zero for values of x sufficiently close to a, both of these definitions can be unified using the limit superior:

if and only if

History

The notation was first introduced by the number theorist Paul Bachmann in 1894, in the second volume of his book Analytische Zahlentheorie ("analytic number theory"), the first volume of which (not yet containing big O notation) was published in 1892.[1] The notation was popularized in the work of the number theorist Edmund Landau; hence it is sometimes called a Landau symbol. The big O, standing for "order of", was originally a capital omicron; today the identical-looking Latin capital letter O is also used, but never the digit zero.

Usage

Big O notation has two main areas of application. In mathematics, it is commonly used to describe how closely a finite series approximates a given function, especially in the case of a truncated Taylor series or asymptotic expansion. In computer science, it is useful in the analysis of algorithms. In both of the applications, the function g(x) appearing within the O(...) is typically chosen to be as simple as possible, omitting constant factors and lower order terms.

There are two formally close, but noticeably different, usages of this notation: infinite asymptotics and infinitesimal asymptotics. This distinction is only in application and not in principle, however—the formal definition for the "big O" is the same for both cases, only with different limits for the function argument.

Infinite asymptotics

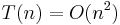

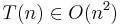

Big O notation is useful when analyzing algorithms for efficiency. For example, the time (or the number of steps) it takes to complete a problem of size n might be found to be T(n) = 4n² − 2n + 2.

As n grows large, the n² term will come to dominate, so that all other terms can be neglected — for instance when n = 500, the term 4n² is 1000 times as large as the 2n term. Ignoring the latter would have negligible effect on the expression's value for most purposes.

Further, the coefficients become irrelevant as well if we compare to any other order of expression, such as an expression containing a term n³ or n². Even if T(n) = 1,000,000n², if U(n) = n³, the latter will always exceed the former once n grows larger than 1,000,000 (T(1,000,000) = 1,000,000³ = U(1,000,000)). Additionally, the number of steps depends on the details of the machine model on which the algorithm runs, but different types of machines typically vary by only a constant factor in the number of steps needed to execute an algorithm.

So the big O notation captures what remains: we write either

or

(read as "big o of n squared") and say that the algorithm has order of n² time complexity.

Note that "=" is not meant to express "is equal to" in its normal mathematical sense, but rather a more colloquial "is", so the second expression may be more accurate (see the "Equals sign" discussion below).
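The dominance argument in this section can be checked numerically. The following is a minimal sketch using the T(n) and U(n) from the text; the function names are illustrative only.

```python
def T(n: int) -> int:
    """Step count from the text: T(n) = 4n^2 - 2n + 2."""
    return 4 * n**2 - 2 * n + 2


def dominant_to_linear_ratio(n: int) -> float:
    """How many times larger the 4n^2 term is than the 2n term."""
    return (4 * n**2) / (2 * n)


def ratio_to_n_squared(n: int) -> float:
    """T(n) / n^2; tends to the leading coefficient 4 as n grows."""
    return T(n) / n**2


def cubic_overtakes(n: int, coefficient: int = 1_000_000) -> bool:
    """True when U(n) = n^3 strictly exceeds coefficient * n^2."""
    return n**3 > coefficient * n**2


if __name__ == "__main__":
    print(dominant_to_linear_ratio(500))
    for n in (10, 1_000, 100_000):
        print(n, ratio_to_n_squared(n))
    print(cubic_overtakes(1_000_000), cubic_overtakes(1_000_001))
```

The ratio T(n)/n² settling near a constant is what the statement "T(n) is of order n²" summarizes; the constant itself is discarded by the notation.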

Infinitesimal asymptotics

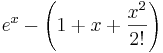

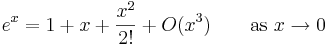

Big O can also be used to describe the error term in an approximation to a mathematical function. For example,

expresses the fact that the error, the difference  , is smaller in absolute value than some constant times

, is smaller in absolute value than some constant times  when

when  is close enough to 0.

is close enough to 0.
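As an illustration, the sketch below assumes the approximation in question is the second-order Taylor polynomial of the exponential function, 1 + x + x²/2, and checks that the error divided by |x|³ stays bounded near 0. The function names and the sample points are assumptions made for this example.

```python
import math


def taylor_error(x: float) -> float:
    """|e^x - (1 + x + x^2/2)|: error of the second-order Taylor polynomial."""
    return abs(math.exp(x) - (1.0 + x + x * x / 2.0))


def error_over_cube(x: float) -> float:
    """Error divided by |x|^3; bounded near 0 if the error is O(x^3)."""
    return taylor_error(x) / abs(x) ** 3


if __name__ == "__main__":
    for x in (0.1, 0.01, -0.01, -0.1):
        print(x, error_over_cube(x))
```

For these sample points the quotient stays close to 1/6, the coefficient of the first omitted Taylor term, so any constant slightly larger than that would serve as M in a small enough neighborhood of 0.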

Example

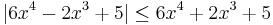

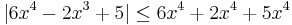

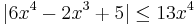

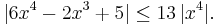

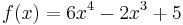

Take the polynomials:

We say f(x) has order O(g(x)) or O(x4) (as  )

)

From the definition of order

Proof:

- for all

(we take

(we take  ):

):

where M = 13 in this example

where M = 13 in this example
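The bound can be spot-checked numerically. This sketch assumes the polynomials are f(x) = 6x⁴ − 2x³ + 5 and g(x) = x⁴, which is consistent with M = 13 and the order O(x⁴) stated in the text; the helper `bound_holds` is a name invented for this example.

```python
def f(x: float) -> float:
    """Assumed example polynomial: 6x^4 - 2x^3 + 5."""
    return 6 * x**4 - 2 * x**3 + 5


def g(x: float) -> float:
    """Comparison function x^4."""
    return x**4


def bound_holds(x: float, M: float = 13.0) -> bool:
    """Check |f(x)| <= M * |g(x)| at a single point."""
    return abs(f(x)) <= M * abs(g(x))


if __name__ == "__main__":
    # Sample points beyond x0 = 1, where the bound is claimed.
    print(all(bound_holds(1 + k / 10) for k in range(1, 1000)))
    # Below x0 the inequality is allowed to fail, and here it does.
    print(bound_holds(0.5))
```

A finite sample is of course not a proof; it only illustrates why the definition needs the threshold x₀, since the inequality is false for small x.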

Matters of notation

Equals sign

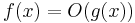

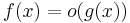

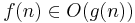

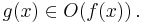

The statement " is

is  " as defined above is usually written as

" as defined above is usually written as  . This is a slight abuse of notation; equality of two functions is not asserted, and it cannot be since the property of being

. This is a slight abuse of notation; equality of two functions is not asserted, and it cannot be since the property of being  is not symmetric:

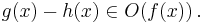

is not symmetric:

.

.

There is also a second reason why that notation is not precise. The symbol f(x) means the value of the function f for the argument x. Hence the symbol of the function is f and not f(x).

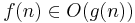

For these reasons, some authors prefer set notation and write  , thinking of

, thinking of  as the set of all functions dominated by g.

as the set of all functions dominated by g.

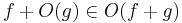

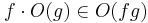

Other arithmetic operators

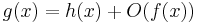

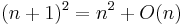

Big O notation can also be used in conjunction with other arithmetic operators in more complicated equations. For example, h(x) + O(f(x)) denotes the collection of functions having the growth of h(x) plus a part whose growth is limited to that of f(x). Thus,

expresses the same as

Example

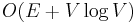

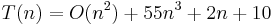

Suppose an algorithm is being developed to operate on a set of n elements. Its developers are interested in finding a function T(n) that will express how long the algorithm will take to run (in some arbitrary measurement of time) in terms of the number of elements in the input set. The algorithm works by first calling a subroutine to operate on the elements in the set (e.g. sort them) and then doing its own operation on the set. The subroutine has a known time complexity of  , and after the subroutine runs the algorithm must take an additional

, and after the subroutine runs the algorithm must take an additional  time before it terminates. Thus the overall time complexity of the algorithm can be expressed as

time before it terminates. Thus the overall time complexity of the algorithm can be expressed as

This can perhaps be most easily read by replacing  with "some function that grows asymptotically slower than

with "some function that grows asymptotically slower than  ". Again, this usage disregards some of the formal meaning of the "=" and "+" symbols, but it does allow one to use the big O notation as a kind of convenient placeholder.

". Again, this usage disregards some of the formal meaning of the "=" and "+" symbols, but it does allow one to use the big O notation as a kind of convenient placeholder.

Declaration of variables

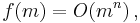

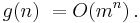

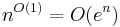

Another anomaly of the notation, although less exceptional, is that it does not make explicit which variable is the function argument, which may need to be inferred from the context if several variables are involved. The following two right-hand side big O notations have dramatically different meanings:

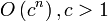

The first case states that f(m) exhibits polynomial growth, while the second, assuming m > 1, states that g(n) exhibits exponential growth. So as to avoid all possible confusion, some authors use the notation

meaning the same as what is denoted by others as

Complex usages

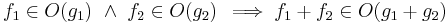

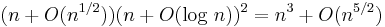

In more complex usage, O( ) can appear in different places in an equation, even several times on each side. For example, the following are true for

The meaning of such statements is as follows: for any functions which satisfy each O( ) on the left side, there are some functions satisfying each O( ) on the right side, such that substituting all these functions into the equation makes the two sides equal. For example, the third equation above means: "For any function f(n)=O(1), there is some function g(n)=O(en) such that nf(n)=g(n)." In terms of the "set notation" above, the meaning is that the class of functions represented by the left side is a subset of the class of functions represented by the right side.
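As a worked instance of this reading, take the simplest kind of statement, $(n+1)^2 = n^2 + O(n)$ (assumed here as the example). There is nothing to substitute on the left side, so one only needs to exhibit a suitable function on the right; the constants $M = 3$ and $n_0 = 1$ below are one possible choice, not the only one.

```latex
\begin{align*}
(n+1)^2 &= n^2 + (2n + 1), \\
|2n + 1| &\le 3n \quad \text{for all } n \ge 1, \\
\text{hence}\quad (n+1)^2 - n^2 &\in O(n) \quad \text{with } M = 3,\ n_0 = 1.
\end{align*}
```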

Orders of common functions

Here is a list of classes of functions that are commonly encountered when analyzing algorithms. In each case, c is a constant and n increases without bound. The slower-growing functions are listed first.

| Notation | Name | Example |

|---|---|---|

|

constant | Determining if a number is even or odd; using a constant-size lookup table or hash table |

|

inverse Ackermann | Amortized time per operation using a disjoint set |

|

iterated logarithmic | The find algorithm of Hopcroft and Ullman on a disjoint set |

|

double logarithmic | Amortized time per operation using a bounded priority queue[2] |

|

logarithmic | Finding an item in a sorted array with a binary search |

|

polylogarithmic | Deciding if n is prime with the AKS primality test |

|

fractional power | Searching in a kd-tree |

|

linear | Finding an item in an unsorted list; adding two n-digit numbers |

|

linearithmic, loglinear, or quasilinear | Performing a Fast Fourier transform; heapsort or merge sort |

|

quadratic | Multiplying two n-digit numbers by a simple algorithm; adding two n×n matrices; bubble sort or insertion sort |

|

cubic | Multiplying two n×n matrices by simple algorithm; finding the shortest path on a weighted digraph with the Floyd-Warshall algorithm |

|

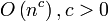

polynomial or algebraic | Tree-adjoining grammar parsing; maximum matching for bipartite graphs (grows faster than cubic iff  ) ) |

![L_n[\alpha,c], 0 < \alpha < 1=\,](/2009-wikipedia_en_wp1-0.7_2009-05/I/52acd8181ae48e5bf45c13868bce0371.png)  |

L-notation | Factoring a number using the special or general number field sieve |

|

exponential or geometric | Finding the (exact) solution to the traveling salesman problem using dynamic programming; determining if two logical statements are equivalent using brute force |

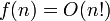

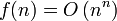

|

factorial or combinatorial | Solving the traveling salesman problem via brute-force search; finding the determinant with expansion by minors. The statement  is sometimes weakened to is sometimes weakened to  to derive simpler formulas for asymptotic complexity. to derive simpler formulas for asymptotic complexity. |

|

double exponential | Deciding the truth of a given statement in Presburger arithmetic |

Not as common, but even larger growth is possible, such as the single-valued version of the Ackermann function, A(n,n).

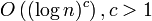

For any  and

and  ,

,  is a subset of

is a subset of  for any

for any  , so may be considered as a polynomial with some bigger order.

, so may be considered as a polynomial with some bigger order.

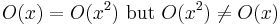
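Two of the examples listed above, finding an item in an unsorted list (linear) and binary search in a sorted array (logarithmic), can be compared by counting steps. The sketch below is one straightforward way to instrument them; the function names and the returned step counters are choices made for this illustration.

```python
from typing import Optional, Sequence, Tuple


def linear_search(items: Sequence[int], target: int) -> Tuple[Optional[int], int]:
    """Scan left to right. Returns (index or None, number of comparisons)."""
    comparisons = 0
    for i, value in enumerate(items):
        comparisons += 1
        if value == target:
            return i, comparisons
    return None, comparisons


def binary_search(items: Sequence[int], target: int) -> Tuple[Optional[int], int]:
    """Halve a sorted (ascending) range. Returns (index or None, loop iterations)."""
    lo, hi = 0, len(items) - 1
    steps = 0
    while lo <= hi:
        steps += 1
        mid = (lo + hi) // 2
        if items[mid] == target:
            return mid, steps
        if items[mid] < target:
            lo = mid + 1
        else:
            hi = mid - 1
    return None, steps


if __name__ == "__main__":
    data = list(range(1024))
    print(linear_search(data, 1023))
    print(binary_search(data, 1023))
```

For 1024 sorted elements the linear scan may need up to 1024 comparisons, while the halving loop needs at most 11 iterations, in line with the linear and logarithmic rows of the table.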

Properties

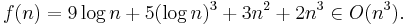

If a function f(n) can be written as a finite sum of other functions, then the fastest growing one determines the order of f(n). For example

In particular, if a function may be bounded by a polynomial in n, then as n tends to infinity, one may disregard lower-order terms of the polynomial.

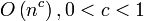

and

and  are very different. The latter grows much, much faster, no matter how big the constant c is (as long as it is greater than one). A function that grows faster than any power of n is called superpolynomial. One that grows more slowly than any exponential function of the form

are very different. The latter grows much, much faster, no matter how big the constant c is (as long as it is greater than one). A function that grows faster than any power of n is called superpolynomial. One that grows more slowly than any exponential function of the form  is called subexponential. An algorithm can require time that is both superpolynomial and subexponential; examples of this include the fastest known algorithms for integer factorization.

is called subexponential. An algorithm can require time that is both superpolynomial and subexponential; examples of this include the fastest known algorithms for integer factorization.

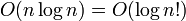

is exactly the same as

is exactly the same as  . The logarithms differ only by a constant factor, (since

. The logarithms differ only by a constant factor, (since  ) and thus the big O notation ignores that. Similarly, logs with different constant bases are equivalent. Exponentials with different bases, on the other hand, are not of the same order. For example,

) and thus the big O notation ignores that. Similarly, logs with different constant bases are equivalent. Exponentials with different bases, on the other hand, are not of the same order. For example,  and

and  are not of the same order.

are not of the same order.

Changing units may or may not affect the order of the resulting algorithm. Changing units is equivalent to multiplying the appropriate variable by a constant wherever it appears. For example, if an algorithm runs in the order of n2, replacing n by cn means the algorithm runs in the order of  , and the big O notation ignores the constant

, and the big O notation ignores the constant  . This can be written as

. This can be written as  . If, however, an algorithm runs in the order of

. If, however, an algorithm runs in the order of  , replacing n with cn gives

, replacing n with cn gives  . This is not equivalent to

. This is not equivalent to  (unless, of course, c=1).

(unless, of course, c=1).

Changing of variable may affect the order of the resulting algorithm. For example, if an algorithm runs on the order of O(n) when n is the number of digits of the input number, then it has order O(log n) when n is the input number itself.

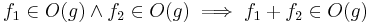

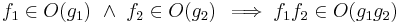
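Several of these properties can be observed numerically. The sketch below compares logarithms of different bases, exponentials of different bases, and the effect of rescaling n by a constant c; all function names and the default bases 2, 3 and 10 are assumptions made for the illustration.

```python
import math


def log_base_ratio(n: float, a: float = 2.0, b: float = 10.0) -> float:
    """log_a(n) / log_b(n); independent of n, equal to ln(b) / ln(a)."""
    return math.log(n, a) / math.log(n, b)


def exp_base_ratio(n: int, a: int = 2, b: int = 3) -> float:
    """b^n / a^n = (b/a)^n; grows without bound when b > a."""
    return b**n / a**n


def rescale_quadratic(n: int, c: int) -> float:
    """(cn)^2 / n^2; always the constant c^2."""
    return (c * n) ** 2 / n**2


def rescale_exponential(n: int, c: int) -> float:
    """2^(cn) / 2^n = 2^((c-1)n); not bounded by any constant when c > 1."""
    return 2 ** (c * n) / 2**n


if __name__ == "__main__":
    print(log_base_ratio(1_000), log_base_ratio(1_000_000))
    print(exp_base_ratio(10), exp_base_ratio(20))
    print(rescale_quadratic(10, 3), rescale_quadratic(1_000, 3))
    print(rescale_exponential(10, 3), rescale_exponential(20, 3))
```

Ratios that stay constant correspond to functions of the same order; ratios that keep growing correspond to functions of different orders.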

Product

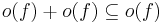

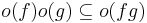

Sum

- This implies

, which means that

, which means that  is a convex cone.

is a convex cone.

- This implies

- If f and g are positive functions,

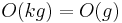

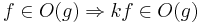

Multiplication by a constant

- Let k be a constant. Then:

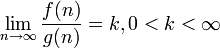

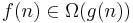
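The product rule follows directly from the formal definition. As a short derivation, assume the rule reads $f_1 \in O(g_1)$ and $f_2 \in O(g_2)$ imply $f_1 f_2 \in O(g_1 g_2)$, and let $M_1, x_1$ and $M_2, x_2$ be the witnesses for the two hypotheses (these symbols are introduced only for this sketch):

```latex
\begin{align*}
|f_1(x)| &\le M_1\,|g_1(x)| \quad (x > x_1), \qquad
|f_2(x)| \le M_2\,|g_2(x)| \quad (x > x_2), \\
|f_1(x)\,f_2(x)| &\le M_1 M_2\,|g_1(x)\,g_2(x)| \quad \text{for all } x > \max(x_1, x_2).
\end{align*}
```

So $M = M_1 M_2$ and $x_0 = \max(x_1, x_2)$ witness $f_1 f_2 \in O(g_1 g_2)$. The sum rule can be argued the same way with $M = \max(M_1, M_2)$.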

Related asymptotic notations

Big O is the most commonly used asymptotic notation for comparing functions, although in many cases Big O may be replaced with Big Theta Θ for asymptotically tighter bounds (Theta, see below). Here, we define some related notations in terms of Big O, progressing up to the family of Bachmann-Landau notations to which Big O notation belongs.

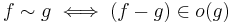

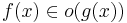

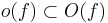

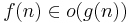

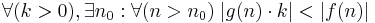

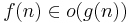

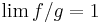

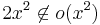

Little-o notation

The relation  is read as "

is read as " is little-o of

is little-o of  ". Intuitively, it means that

". Intuitively, it means that  grows much faster than

grows much faster than  . It assumes that f and g are both functions of one variable. Formally, it states that the limit of

. It assumes that f and g are both functions of one variable. Formally, it states that the limit of  is zero, as x approaches infinity. For algebraically defined functions

is zero, as x approaches infinity. For algebraically defined functions  and

and  ,

,  is generally found using L'Hôpital's rule.

is generally found using L'Hôpital's rule.

For example,

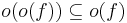

Little-o notation is common in mathematics but rarer in computer science. In computer science the variable (and function value) is most often a natural number. In math, the variable and function values are often real numbers. The following properties can be useful:

(and thus the above properties apply with most combinations of o and O).

(and thus the above properties apply with most combinations of o and O).

As with big O notation, the statement " is

is  " is usually written as

" is usually written as  , which is a slight abuse of notation.

, which is a slight abuse of notation.

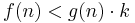

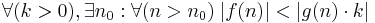

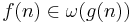
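The limit condition behind little-o can be illustrated by sampling the quotient f(x)/g(x) at growing x. The pairs used below (2x against x², and 2x² against x²) are assumed examples, and the helper names are invented for this sketch; sampling suggests the limit but does not prove it.

```python
from typing import Callable, List, Sequence

# Sample points 10, 100, ..., 1_000_000.
XS: List[float] = [10.0**k for k in range(1, 7)]


def sample_ratios(
    f: Callable[[float], float],
    g: Callable[[float], float],
    xs: Sequence[float],
) -> List[float]:
    """Evaluate f(x) / g(x) at each sample point."""
    return [f(x) / g(x) for x in xs]


def little_o_example() -> List[float]:
    """2x against x^2: the quotient 2/x shrinks toward 0."""
    return sample_ratios(lambda x: 2 * x, lambda x: x**2, XS)


def not_little_o_example() -> List[float]:
    """2x^2 against x^2: the quotient stays at 2, so the limit is not 0."""
    return sample_ratios(lambda x: 2 * x**2, lambda x: x**2, XS)


if __name__ == "__main__":
    print(little_o_example())
    print(not_little_o_example())
```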

The family of Bachmann-Landau notations

| Notation | Name | Intuition | As  , eventually... , eventually... |

Definition |

|---|---|---|---|---|

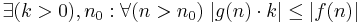

|

Big Omicron; Big O; Big Oh |  is bounded above by is bounded above by  (up to constant factor) asymptotically (up to constant factor) asymptotically |

|

or or  |

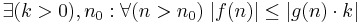

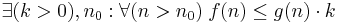

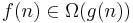

|

Big Omega |  is bounded below by is bounded below by  (up to constant factor) asymptotically (up to constant factor) asymptotically |

|

|

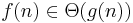

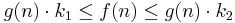

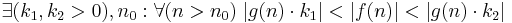

|

Big Theta |  is bounded both above and below by is bounded both above and below by  asymptotically asymptotically |

|

|

|

Small Omicron; Small O; Small Oh |  is dominated by is dominated by  asymptotically asymptotically |

|

|

|

Small Omega |  dominates dominates  asymptotically asymptotically |

|

|

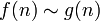

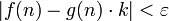

|

on the order of |  is equal to is equal to  asymptotically asymptotically |

|

|

Bachmann-Landau notation was designed around several mnemonics, as shown in the "As $n \to \infty$, eventually..." column above and in the bullets below. To conceptually access these mnemonics, "omicron" can be read "o-micron" and "omega" can be read "o-mega". Also, the lower-case versus capitalization of the Greek letters in Bachmann-Landau notation is mnemonic.

- the o-micron mnemonic: The o-micron reading of $f(n) \in O(g(n))$ and of $f(n) \in o(g(n))$ can be thought of as "O-smaller than" and "o-smaller than", respectively. This micro/smaller mnemonic refers to: for sufficiently large input parameter(s), $f$ grows at a rate that may henceforth be less than $c \cdot g$ regarding $f \in O(g)$ or $f \in o(g)$.

- the o-mega mnemonic: The o-mega reading of $f(n) \in \Omega(g(n))$ and of $f(n) \in \omega(g(n))$ can be thought of as "O-larger than". This mega/larger mnemonic refers to: for sufficiently large input parameter(s), $f$ grows at a rate that may henceforth be greater than $c \cdot g$ regarding $f \in \Omega(g)$ or $f \in \omega(g)$.

- the upper-case mnemonic: This mnemonic reminds us when to use the upper-case Greek letters in $f(n) \in O(g(n))$ and $f(n) \in \Omega(g(n))$: for sufficiently large input parameter(s), $f$ grows at a rate that may henceforth be equal to $c \cdot g$ regarding $f \in O(g)$.

- the lower-case mnemonic: This mnemonic reminds us when to use the lower-case Greek letters in $f(n) \in o(g(n))$ and $f(n) \in \omega(g(n))$: for sufficiently large input parameter(s), $f$ grows at a rate that is henceforth unequal to $c \cdot g$ regarding $f \in o(g)$.

Aside from Big O notation, the Big Theta Θ and Big Omega Ω notations are the two most often used in computer science; the Small Omega ω notation is rarely used in computer science.

Informally, especially in computer science, the Big O notation often is permitted to be somewhat abused to describe an asymptotic tight bound where using Big Theta Θ notation might be more factually appropriate in a given context. For example, when considering a function $T(n) = 73n^3 + 22n^2 + 58$, all of the following are generally acceptable, but tightnesses of bound (i.e., bullets 2 and 3 below) are usually strongly preferred over laxness of bound (i.e., bullet 1 below).

- $T(n) = O(n^{100})$, which is identical to $T(n) \in O(n^{100})$

- $T(n) = O(n^3)$, which is identical to $T(n) \in O(n^3)$

- $T(n) = \Theta(n^3)$, which is identical to $T(n) \in \Theta(n^3)$

The equivalent English statements are respectively:

- $T(n)$ grows asymptotically no faster than $n^{100}$

- $T(n)$ grows asymptotically no faster than $n^3$

- $T(n)$ grows asymptotically as fast as $n^3$

So while all three statements are true, progressively more information is contained in each. In some fields, however, the Big O notation (bullets number 2 in the lists above) would be used more commonly than the Big Theta notation (bullets number 3 in the lists above) because functions that grow more slowly are more desirable. For example, if $T(n)$ represents the running time of a newly developed algorithm for input size $n$, the inventors and users of the algorithm might be more inclined to put an upper asymptotic bound on how long it will take to run without making an explicit statement about the lower asymptotic bound.
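The difference between a lax upper bound and a tight bound shows up in the quotient of T(n) by the bounding function. The sketch below assumes the example function T(n) = 73n³ + 22n² + 58; the helper `ratio` is a name chosen for this illustration.

```python
def T(n: int) -> int:
    """Assumed example function: 73n^3 + 22n^2 + 58."""
    return 73 * n**3 + 22 * n**2 + 58


def ratio(n: int, exponent: int) -> float:
    """T(n) / n^exponent, computed from exact integers."""
    return T(n) / n**exponent


if __name__ == "__main__":
    for n in (10, 1_000, 1_000_000):
        # Tight bound: the quotient settles near the leading coefficient 73.
        print(n, ratio(n, 3))
    # Lax bound: the quotient collapses toward 0, so n^100 is far from tight.
    print(ratio(10, 100))
```

A quotient that stays between two positive constants corresponds to Θ; a quotient that merely stays bounded above, possibly tending to 0, corresponds to O alone.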

Extensions to the Bachmann-Landau notations

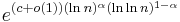

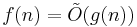

Another notation sometimes used in computer science is Õ (read soft-O).  is shorthand for

is shorthand for  for some k. Essentially, it is Big O notation, ignoring logarithmic factors because the growth-rate effects of some other super-logarithmic function indicate a growth-rate explosion for large-sized input parameters that is more important to predicting bad run-time performance than the finer-point effects contributed by the logarithmic-growth factor(s). This notation is often used to obviate the "nitpicking" within growth-rates that are stated as too tightly bounded for the matters at hand (since

for some k. Essentially, it is Big O notation, ignoring logarithmic factors because the growth-rate effects of some other super-logarithmic function indicate a growth-rate explosion for large-sized input parameters that is more important to predicting bad run-time performance than the finer-point effects contributed by the logarithmic-growth factor(s). This notation is often used to obviate the "nitpicking" within growth-rates that are stated as too tightly bounded for the matters at hand (since  is always

is always  for any constant

for any constant  and any

and any  ).

).

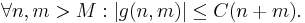

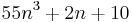

The L notation, defined as

is convenient for functions that are between polynomial and exponential.

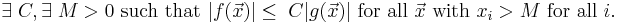

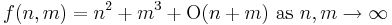
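The justification given for soft-O, that a power of a logarithm is eventually negligible against any positive power of n, can be observed numerically. The sketch below uses the natural logarithm and the sample values k = 3 and ε = 0.5, both of which are arbitrary choices for the illustration.

```python
import math


def polylog_over_power(n: float, k: float, eps: float) -> float:
    """(ln n)^k / n^eps, which tends to 0 for any fixed k and any eps > 0."""
    return math.log(n) ** k / n**eps


if __name__ == "__main__":
    for n in (1e6, 1e12, 1e24):
        print(n, polylog_over_power(n, k=3, eps=0.5))
```

Note that the quotient can still exceed 1 for moderately large n (it does at n = 10⁶ with these parameters), which is a reminder that asymptotic statements say nothing about where the eventual behavior sets in.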

Multiple variables

Big O (and little o, and Ω...) can also be used with multiple variables.

To define Big O formally for multiple variables, suppose  and

and  are two functions defined on some subset of

are two functions defined on some subset of  . We say

. We say

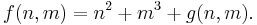

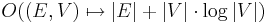

For example, the statement

asserts that there exist constants C and M such that

where g(n,m) is defined by

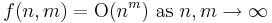

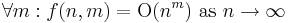

Note that this definition allows all of the coordinates of  to increase to infinity. In particular, the statement

to increase to infinity. In particular, the statement

(i.e.  ) is quite different from

) is quite different from

(i.e.  ).

).

Graph theory

It is often useful to bound the running time of graph algorithms. Unlike most other computational problems, for a graph G = (V, E) there are two relevant parameters describing the size of the input: the number |V| of vertices in the graph and the number |E| of edges in the graph. Inside asymptotic notation (and only there), it is common to use the symbols V and E when |V| and |E| are really meant. We adopt this convention here to simplify asymptotic functions and make them easily readable. The symbols V and E are never used inside asymptotic notation with their literal meaning, so this abuse of notation does not risk ambiguity. For example $O(E + V \log V)$ means $O((E, V) \mapsto |E| + |V| \cdot \log |V|)$ for a suitable metric of graphs. Another common convention, referring to the values |V| and |E| by the names n and m, respectively, sidesteps this ambiguity.

The generalization to functions taking values in any normed vector space is straightforward (replacing absolute values by norms), where f and g need not take their values in the same space. A generalization to functions g taking values in any topological group is also possible.

The "limiting process" $x \to x_0$ can also be generalized by introducing an arbitrary filter base, i.e. to directed nets f and g.

The o notation can be used to define derivatives and differentiability in quite general spaces, and also (asymptotical) equivalence of functions,

$$f \sim g \iff (f - g) \in o(g),$$

which is an equivalence relation and a more restrictive notion than the relationship "f is Θ(g)" from above. (It reduces to $\lim f/g = 1$ if f and g are positive real valued functions.) For example, 2x is Θ(x), but 2x − x is not o(x).

See also

- Asymptotic expansion: Approximation of functions generalizing Taylor's formula

- Asymptotically optimal: A phrase frequently used to describe an algorithm that has an upper bound asymptotically within a constant of a lower bound for the problem

- Hardy notation: A different asymptotic notation

- Limit superior and limit inferior: An explanation of some of the limit notation used in this article

- Nachbin's theorem: A precise method of bounding complex analytic functions so that the domain of convergence of integral transforms can be stated

References

Further reading

- Paul Bachmann. Die Analytische Zahlentheorie. Zahlentheorie. pt. 2 Leipzig: B. G. Teubner, 1894.

- Edmund Landau. Handbuch der Lehre von der Verteilung der Primzahlen. 2 vols. Leipzig: B. G. Teubner, 1909.

- G. H. Hardy. Orders of Infinity: The 'Infinitärcalcül' of Paul du Bois-Reymond, 1910.

- Marian Slodicka (Slodde vo de maten) & Sandy Van Wontergem. Mathematical Analysis I. University of Ghent, 2004.

- Donald Knuth. Big Omicron and big Omega and big Theta, ACM SIGACT News, Volume 8, Issue 2, 1976.

- Donald Knuth. The Art of Computer Programming, Volume 1: Fundamental Algorithms, Third Edition. Addison-Wesley, 1997. ISBN 0-201-89683-4. Section 1.2.11: Asymptotic Representations, pp.107–123.

- Thomas H. Cormen, Charles E. Leiserson, Ronald L. Rivest, and Clifford Stein. Introduction to Algorithms, Second Edition. MIT Press and McGraw-Hill, 2001. ISBN 0-262-03293-7. Section 3.1: Asymptotic notation, pp.41–50.

- Michael Sipser (1997). Introduction to the Theory of Computation. PWS Publishing. ISBN 0-534-94728-X. Pages 226–228 of section 7.1: Measuring complexity.

- Jeremy Avigad, Kevin Donnelly. Formalizing O notation in Isabelle/HOL

- Paul E. Black, "big-O notation", in Dictionary of Algorithms and Data Structures [online], Paul E. Black, ed., U.S. National Institute of Standards and Technology. 11 March 2005. Retrieved December 16, 2006.

- Paul E. Black, "little-o notation", in Dictionary of Algorithms and Data Structures [online], Paul E. Black, ed., U.S. National Institute of Standards and Technology. 17 December 2004. Retrieved December 16, 2006.

- Paul E. Black, "Ω", in Dictionary of Algorithms and Data Structures [online], Paul E. Black, ed., U.S. National Institute of Standards and Technology. 17 December 2004. Retrieved December 16, 2006.

- Paul E. Black, "ω", in Dictionary of Algorithms and Data Structures [online], Paul E. Black, ed., U.S. National Institute of Standards and Technology. 29 November 2004. Retrieved December 16, 2006.

- Paul E. Black, "Θ", in Dictionary of Algorithms and Data Structures [online], Paul E. Black, ed., U.S. National Institute of Standards and Technology. 17 December 2004. Retrieved December 16, 2006.
